Auggie scores 51.80% on SWE-bench Pro using the Augment Context Engine scaffold, the highest raw number in the category. Augment's blog acknowledges that the same Opus 4.5 model scores 45.89% on SEAL's standardized scaffold, which indicates that the 5.91-point gap comes from scaffold architecture, not model capability.

Auggie CLI (Augment Code)

watch

Augment Code's coding CLI, powered by the Augment Context Engine (a semantic codebase index). SWE-bench Pro: 51.80% on Augment's own scaffold (highest raw number in the category). 153 GitHub stars. No public release as of 2026-03-18.

Trust: 43/100

Stars: 153

Evidence: 2

Videos

Reviews, tutorials, and comparisons from the community.

Playwright Can't Do This... But This MCP Can.

Auggie CLI: Smartest + Most Powerful AI Agentic Coder! RIP Claude Code & Gemini CLI!

Meet Auggie CLI The Smartest Coding Agent Yet by Augment Code. CLAUDE CODE KILLER?

Editorial verdict

Highest SWE-bench Pro number in the category (51.80% on Augment's scaffold), but the scaffold is not standardized: Augment used the same Opus 4.5 model that scores 45.89% on SEAL's standardized setup. The architecture and scaffolding advantage is credible and meaningful. Still, a single blog-post benchmark cannot rank this tool above tools with millions of verified installs. Watch for a public GA release and an independent SWE-bench reproduction.

Source

Docs: www.augmentcode.com

Public evidence

SEAL's standardized SWE-bench Pro leaderboard confirms the Claude Code scaffold at 45.89% (#1). Augment's 51.80% is not on this leaderboard because it uses a non-standardized scaffold. This entry provides context for interpreting Augment's claim.

Where it wins

51.80% SWE-bench Pro on Augment scaffold — highest raw number in the category

Augment Context Engine: semantic codebase index with deep code understanding

Architecture advantage is credible: same model, better scaffold → +5.91pp over SEAL standardized
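
The +5.91pp figure can be checked with a short calculation. This is a minimal sketch using only the two scores quoted on this page; the function and constant names are illustrative and do not come from Augment or SEAL.

```python
def percentage_point_gap(score_a: float, score_b: float) -> float:
    """Absolute difference between two percentage scores, in percentage points."""
    return round(score_a - score_b, 2)


def relative_gain(score_a: float, score_b: float) -> float:
    """Improvement of score_a over score_b, as a percentage of score_b."""
    return round((score_a - score_b) / score_b * 100, 2)


# Figures quoted in this listing (SWE-bench Pro, same Opus 4.5 model).
AUGMENT_SCAFFOLD = 51.80   # Augment's own scaffold, self-reported
SEAL_STANDARDIZED = 45.89  # SEAL's standardized scaffold

gap_pp = percentage_point_gap(AUGMENT_SCAFFOLD, SEAL_STANDARDIZED)
gain_pct = relative_gain(AUGMENT_SCAFFOLD, SEAL_STANDARDIZED)
print(f"gap: +{gap_pp} pp, relative gain: {gain_pct}%")
```

Percentage points measure the absolute gap between the two scores, while the relative gain expresses that gap as a share of the standardized baseline. Neither number says anything about whether the two scaffolds were evaluated under comparable conditions.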

Where to be skeptical

No public release — 153 GitHub stars, not generally available

Benchmark uses Augment's own non-standardized scaffold — not an apples-to-apples comparison

No independent SWE-bench reproduction published

Cannot verify claims without public artifact

Similar skills

Aider

91/100

Open-source AI pair programming CLI. The only tool in the coding CLI category with a fully verifiable, independent download number: 191,828/week PyPI installs, 5.7M lifetime, 15B tokens/week (homepage stat). Multi-model, git-native, no vendor lock-in. v0.86.2 released 2026-02-12.

Claude Code

90/100

Anthropic's official agentic coding CLI. Terminal-native, tool-use-driven, with deep file system and shell access. #1 SWE-bench Pro standardized (45.89%), ~4% of GitHub public commits (SemiAnalysis), $2.5B annualized revenue (fastest enterprise SaaS to $1B ARR). 8M+ npm weekly downloads. Opus 4.6 with 1M context.

Gemini CLI

86/100

Google's open-source terminal agent with Gemini 3 models, 1M token context, built-in Google Search grounding, and the best free tier in the category (1K req/day). 97.9K stars, 444 contributors. SWE-bench Pro standardized 43.30% (#2 behind Claude Code).

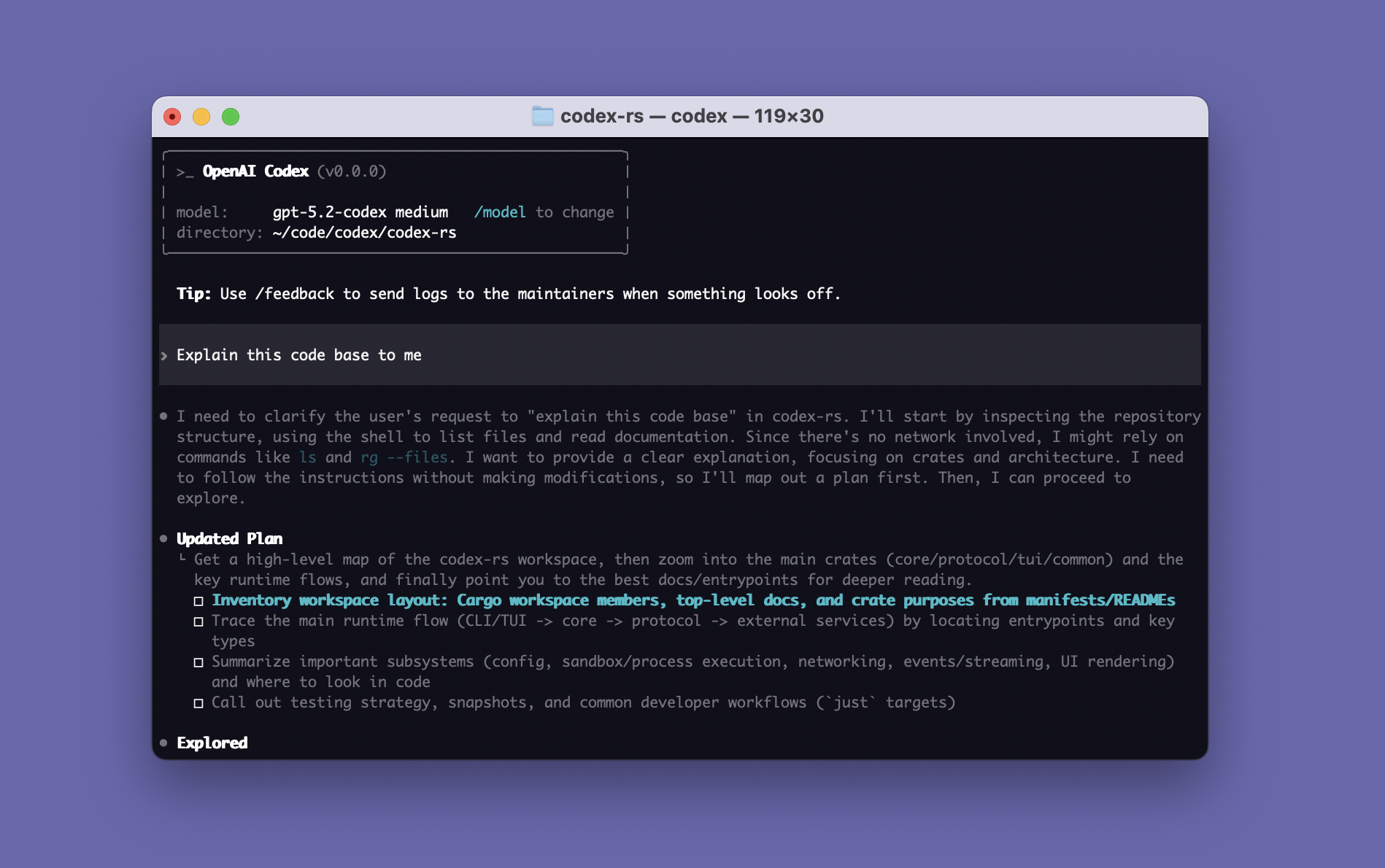

Codex CLI

85/100

OpenAI's open-source coding agent built in Rust. Terminal-Bench 77.3% (GPT-5.3-Codex), SWE-bench Pro standardized 41.04%. 3-4x more token-efficient than Claude Code, 60-75% cheaper per task. Free with ChatGPT subscription, sandbox-first execution, 619 releases in 10 months.
