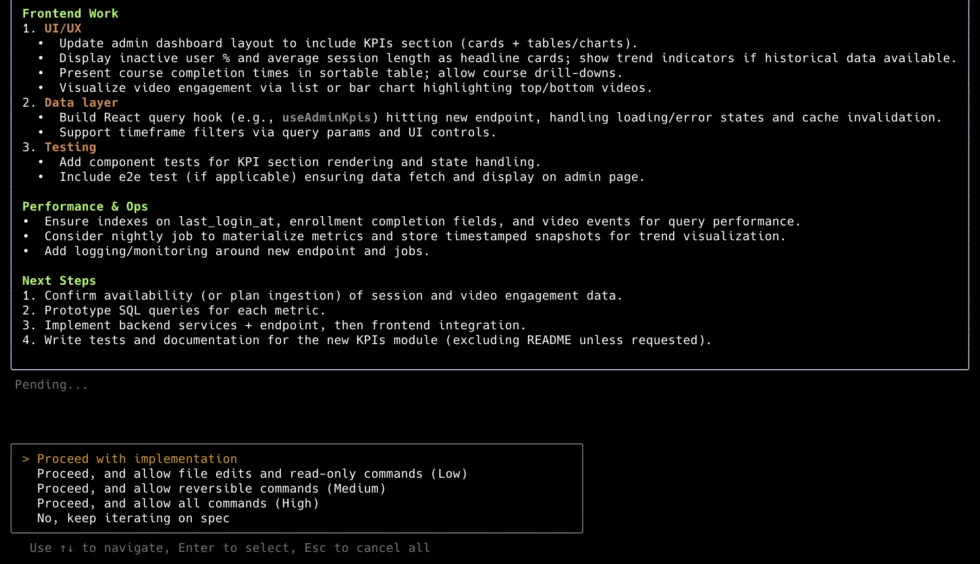

All Skills

91 skills tracked across 15 categories. Each has an editorial verdict, source links, and evidence.

91

Skills

15

Categories

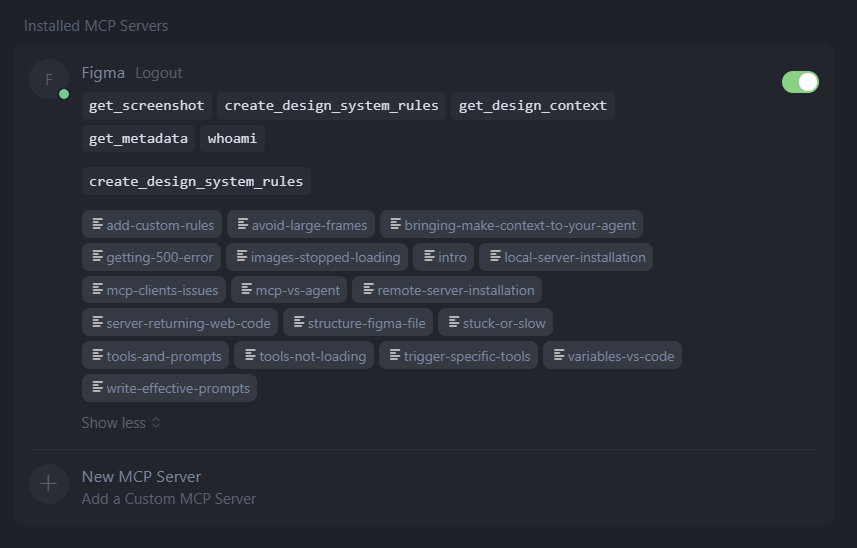

Figma MCP Server Guide

active · Official · 62

The trust leader with unmatched long-term durability: triple partnership with OpenAI Codex, GitHub Copilot, and Claude Code. Bidirectional since Mar 6, 2026. Wins specifically when your team has Code Connect configured. Without it, Framelink produces cleaner context. Pricing is the primary barrier: the free tier (6 calls/month) is non-functional.

Framelink / Figma-Context-MCP

active · 70

Best read-only design-to-code MCP. Use this unless you have Code Connect configured: its output is 25% smaller than Official's, and it avoids prescriptive code that poisons LLM context. Works on free Figma plans and has the broadest editor support.

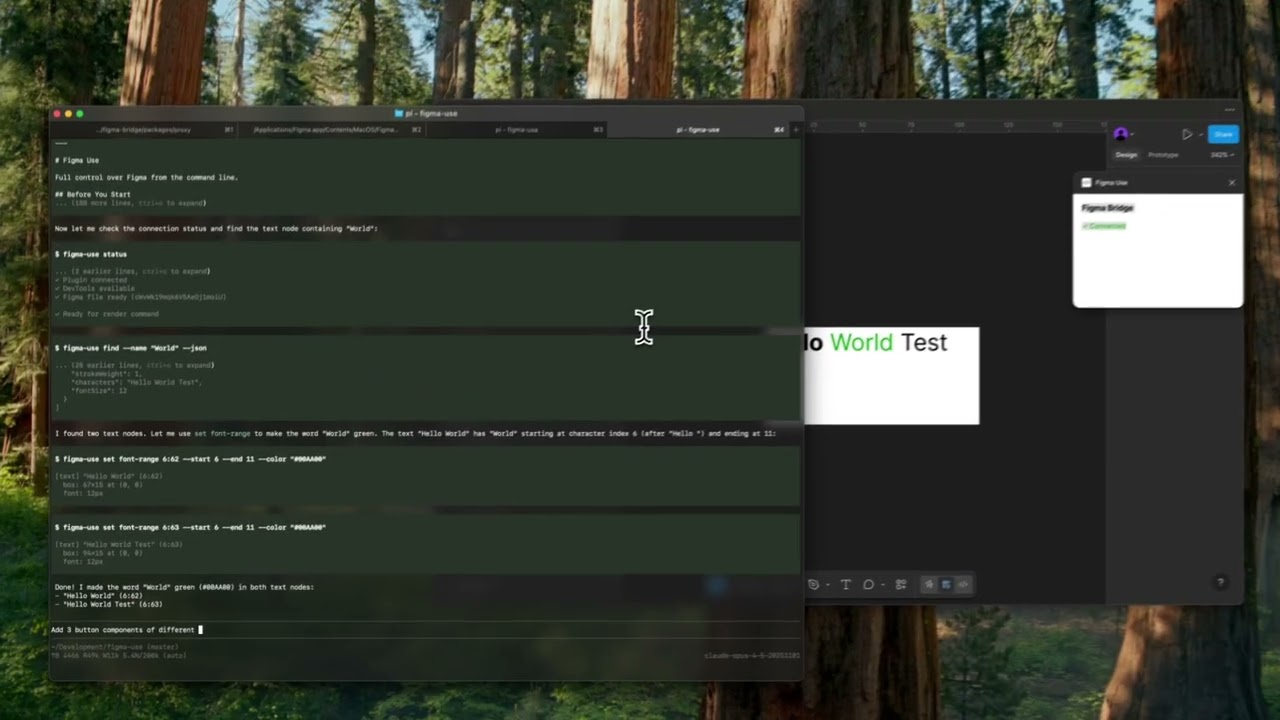

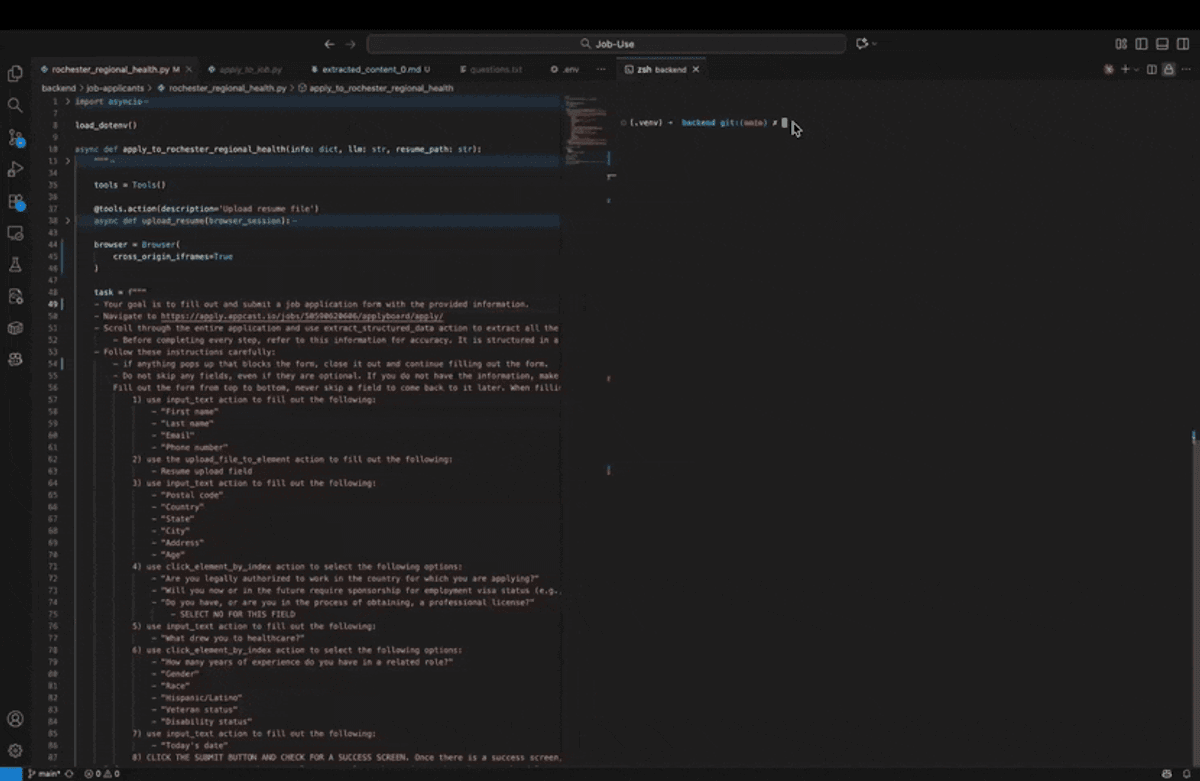

Figma-use

active · 48

Conditional pick. Strongest HN validation in the write-access lane, but a solo maintainer and a potential OpenPencil pivot raise durability concerns. Use if you want a CLI-first workflow without a Figma plugin.

Vibma

watch · 48

Emerging. The only tool publishing model-specific design quality benchmarks. The harness engineering angle is genuinely differentiated, but its Show HN post got only 2 points and no independent reviews were found.

Cursor Talk to Figma

active · 57

Best general-purpose write-access Figma MCP for individual developers and small teams. More accessible than Console MCP, works on free Figma plans, lower setup complexity. Dropped to #4 because Console MCP's Uber validation is stronger enterprise evidence.

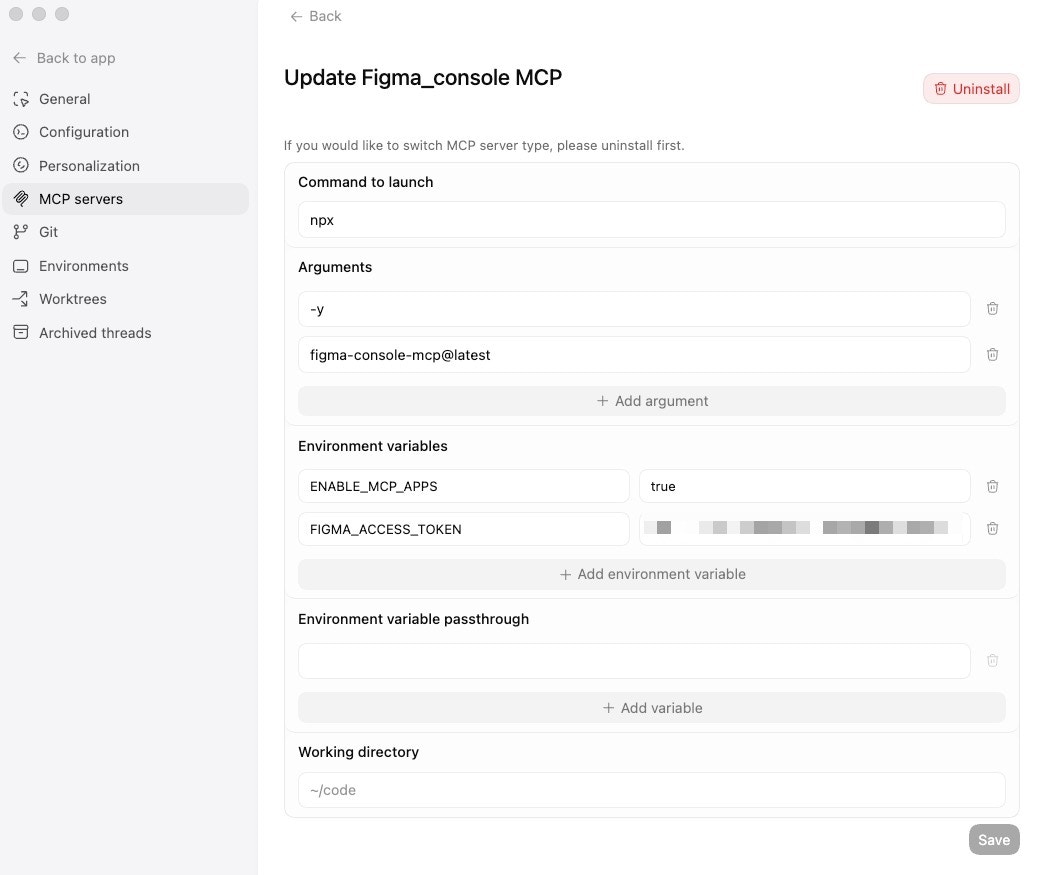

Figma Console MCP

active · 59

The enterprise write-access leader. Uber's production uSpec validates this at scale: automated component specs across 7 stacks, accessibility in under 2 minutes. Its hockey-stick npm trajectory is the fastest-growing in the category. Local WebSocket means no rate limits and no data leaving your network.

Penpot MCP

watch · Official · 43

The open-source design MCP lane exists but isn't production-ready yet. Watch item: if development resumes and the beta launches, it moves up.

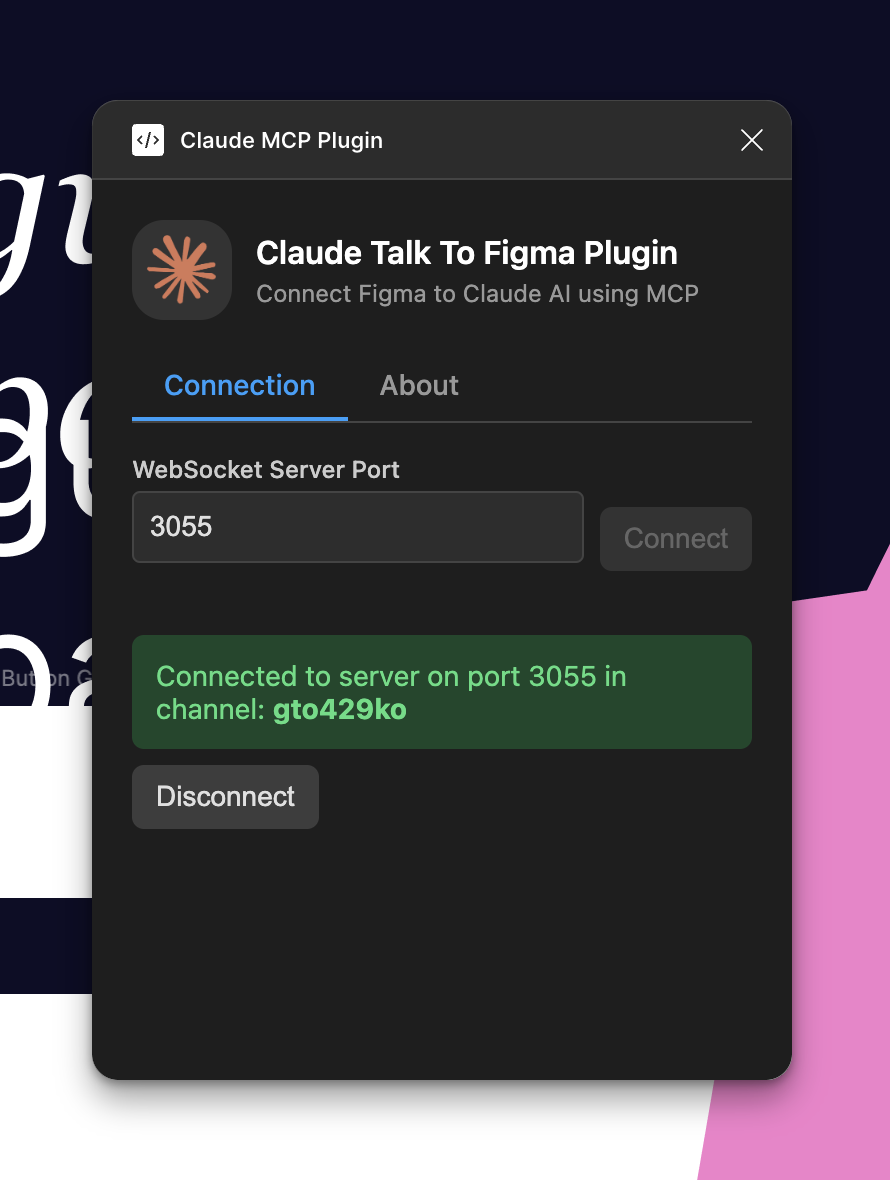

Claude Talk to Figma

active · 42

Best Claude-specific write-access option. DXT installer reduces setup to one click for Claude Desktop. If you're all-in on Claude Code or Claude Desktop and need write-access to Figma without paying for Dev Mode, this is the natural pick.

Onlook

active · 72

Different lane from Figma MCP tools: not a Figma bridge, but a direct design-in-code editor. 24,918 stars and two HN hits above 200 points make this the most-starred tool in the UX/UI category. Best for frontend teams where designers work directly in the Next.js + Tailwind codebase and want to eliminate the Figma-to-code translation step.

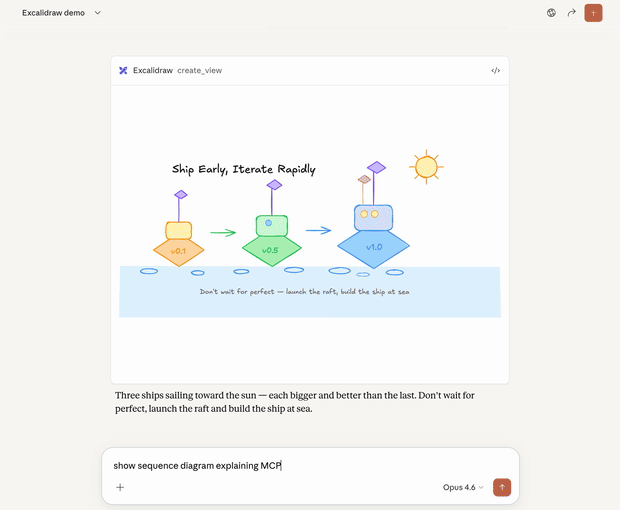

Excalidraw MCP

active · Official · 47

Clear diagramming lane leader. 3,371 stars and official Excalidraw org backing make it the default for AI-assisted diagramming. Different workflow from design-to-code; listed in UX/UI for visibility but doesn't compete with Figma MCPs.

Firecrawl MCP Server

active · Official · 70

#1 in product-business-development by volume and evidence. 50.6K npm/wk (highest in category), 83% benchmark accuracy (AIMultiple), F1 0.638 (SearchMCP). Three independent sources confirm extraction superiority.

Exa MCP Server

active · Official · 62

#6 in product-business-development, #2 research tool. Best for semantic discovery ('find companies like X'). npm declining (9.3K/wk, down from 11.2K). The AIMultiple benchmark tested extraction, not discovery, so its 23% score undersells Exa's actual strength.

MCP Atlassian

active · 61

#2 overall, #1 enterprise operating surface for teams on the Atlassian stack. Highest stars (4.6K), deepest tool set (72 tools), 139K PyPI weekly downloads (primary channel). On-prem/Data Center support is the key moat vs official Rovo MCP.

Notion MCP Server

active · Official · 70

#1 operating surface for product teams: 47K+ npm weekly downloads (17x Atlassian) is the strongest objective adoption signal. The Notion 3.3 Custom Agents ecosystem positions MCP as a multi-tool hub.

Slack MCP Server

active · 66

Communication lane, watch: metrics unverified. PulseMCP shows 1.6K/wk and #859 globally (2026-03-19), far below earlier catalog claims of 35.9K npm/week. Official Slack MCP GA and 50+ launch partners are confirmed, but current traction signals are weak. Platform relationship is real; MCP adoption is not yet the mechanism the market is using.

Google Workspace MCP

active · 58

#6 overall. Best broad operating skill for teams living in Google Workspace. Breadth is real (100+ tools, 12+ services), but low npm adoption (604/week), community-only status, and existential threat from Google's own CLI weigh against it.

OpenHands

active · 88

Category leader. No other tool has the combination of open-source community (69K stars, 455 contributors), real download volume (1M/month), venture backing ($18.8M), hardware partnerships (AMD), and benchmark leadership (SWE-bench Verified 72%). Gap to #2 is enormous.

Ralph Loop Agent

active · Official · 44

Best reference when the team wants a crisp loop pattern instead of a huge agent platform. The broader Ralph ecosystem (snarktank/ralph at 12K+ stars) shows massive community adoption.

SWE-agent

stale · 68

Research/academic reference only. Princeton pedigree and 79.2% SWE-bench Verified on Opus 4.5 scaffold give it strong benchmark credibility. But no release in 10 months (last: v1.1.0, 2025-05-22) puts it outside the production cadence of all active tools. Use as a benchmark scaffold reference, not as a production coding CLI.

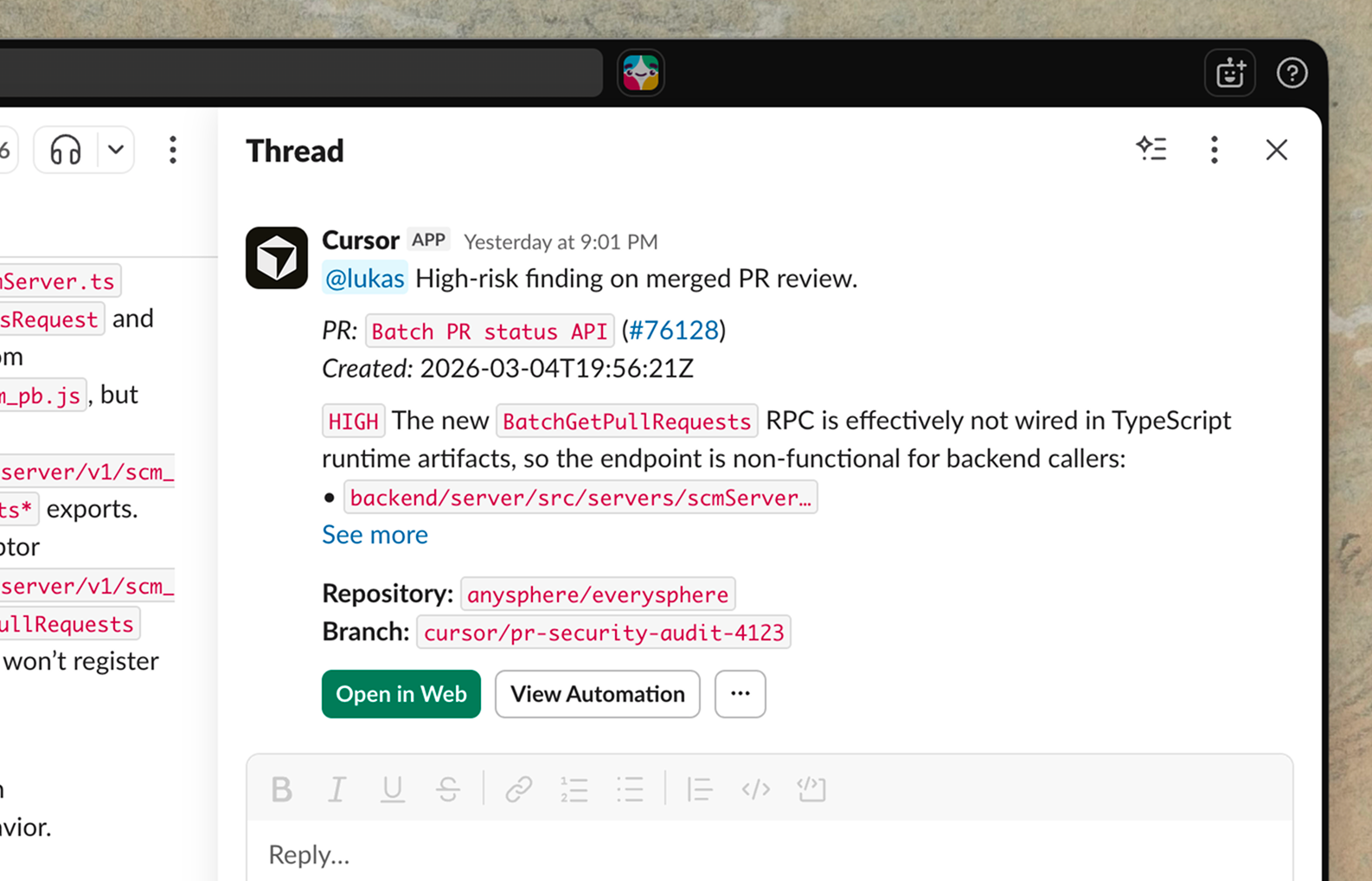

Claude Code

active · Official · 90

The #1 coding CLI agent. Leads SWE-bench Pro standardized (45.89%), wins independent head-to-heads on reasoning depth, and accounts for ~4% of GitHub commits. Rate limits are the #1 complaint. Costs 2-3x more per task than Codex CLI due to higher token consumption.

Aider

active · 91

#7 coding CLI: strong verification story (191K PyPI/week, 5.7M lifetime), but Codex CLI at 2.49M npm/week and Gemini CLI at 678K/week have overtaken Aider's download rank. Category pressure is real: HN thread 'Claude Code with Sonnet 4 is so good I have stopped using Aider' (#44154020). Best for Python devs who want fine-grained model control and git-native workflow. v0.86.2 (2026-02-12) is 5 weeks behind competitors shipping daily.

Continue (Continuous AI)

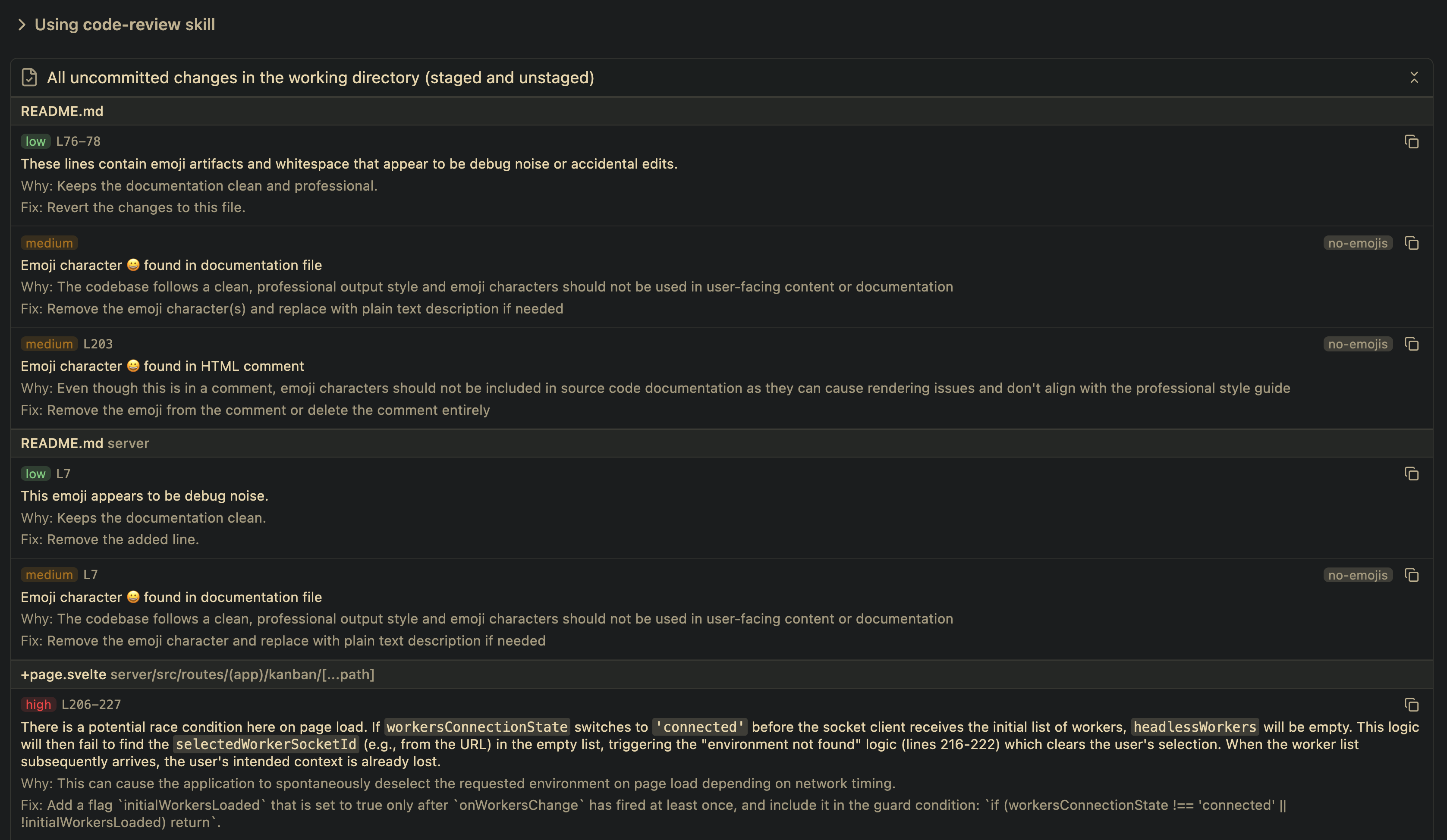

active · 68

Best pick for teams that want background AI agents enforcing code quality on PRs, not real-time autocomplete. The pivot repositioned it away from individual devs toward team CI workflows.

OpenCode

active · 83

#5 coding CLI: active again after a development gap. v1.2.27 released 2026-03-16 with OpenAI official partnership (post-Anthropic block). 124,766 stars make this the largest AI coding repo by raw count. The unauthenticated RCE was fixed in v1.1.10+ but the corporate blocking incident and security history require explicit disclosure. Best for developers who want maximum model flexibility with a proven star-signal community.

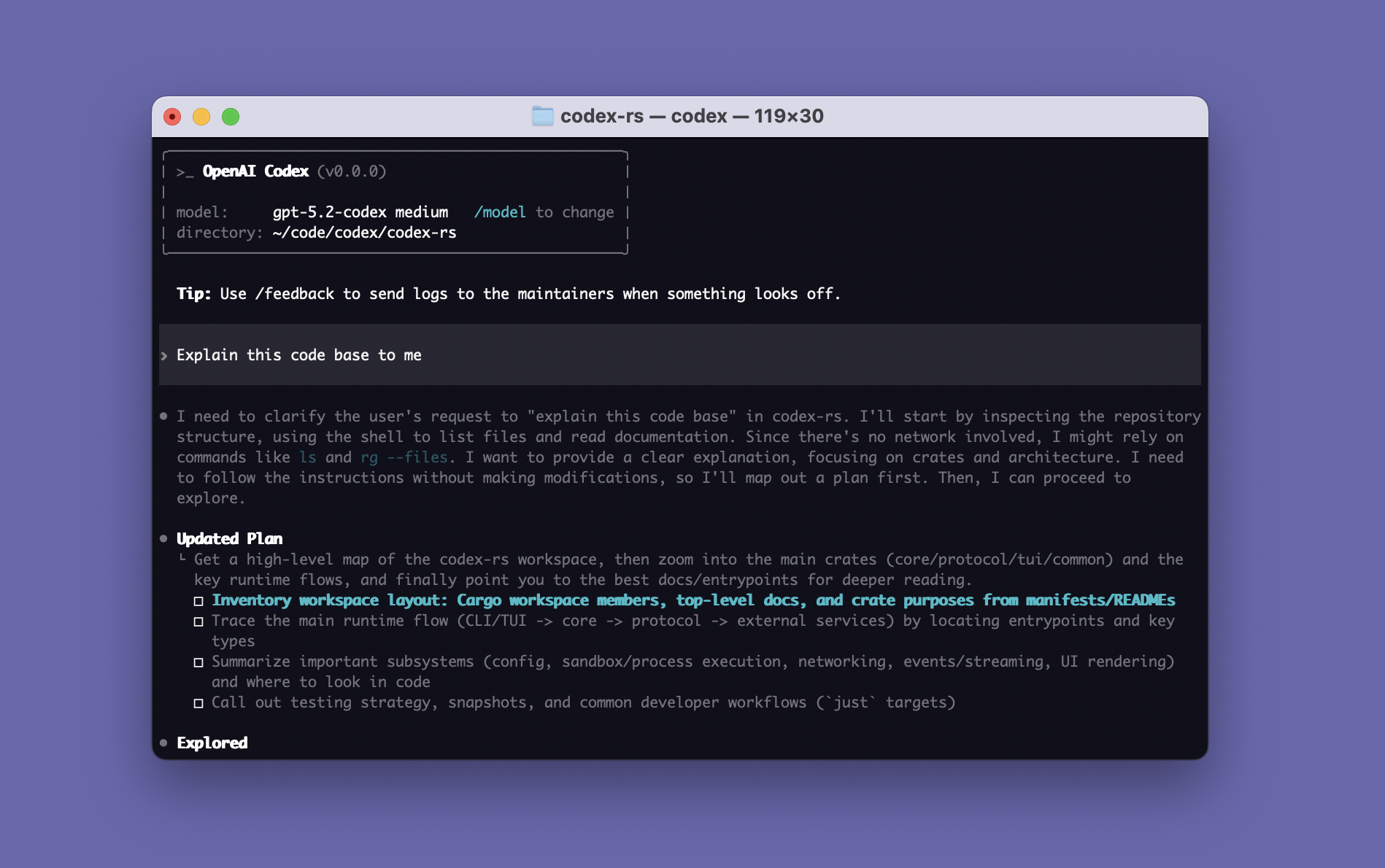

Codex CLI

active · Official · 85

#2 coding CLI. Terminal-Bench 77.3% (GPT-5.3-Codex) and 3-4x more token-efficient. Sandbox-first safety is a genuine differentiator. Free with ChatGPT removes the cost barrier. Trails Claude Code by ~5pp on SWE-bench Pro standardized (41.04% vs 45.89%).

Gemini CLI

active · Official · 86

#3 coding CLI: best free entry point. 1K req/day free tier is unmatched, 1M context is the largest. SWE-bench Pro standardized 43.30% (#2, only 2.59pp behind Claude Code). File deletion incident (AI Incident Database #1178) and tool-calling weaknesses are real concerns.
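
The percentage-point gaps quoted in the Codex CLI and Gemini CLI verdicts follow directly from the three SWE-bench Pro standardized scores listed in this catalog. A minimal sketch of that arithmetic, assuming only those quoted figures (the variable names are illustrative, not part of any tool's API):

```python
# SWE-bench Pro standardized scores as quoted in this catalog (percent).
scores = {"Claude Code": 45.89, "Gemini CLI": 43.30, "Codex CLI": 41.04}

# The leader is whichever entry has the highest quoted score.
leader = max(scores, key=scores.get)

# Gap to the leader in percentage points, rounded to two decimals.
gaps = {name: round(scores[leader] - score, 2) for name, score in scores.items()}

# Print entries from smallest to largest gap.
for name, gap in sorted(gaps.items(), key=lambda item: item[1]):
    print(f"{name}: {scores[name]:.2f}% ({gap:.2f}pp behind {leader})")
```

Under these inputs the Gemini CLI gap matches the quoted 2.59pp, and the Codex CLI gap of 4.85pp is what the catalog rounds to "~5pp".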

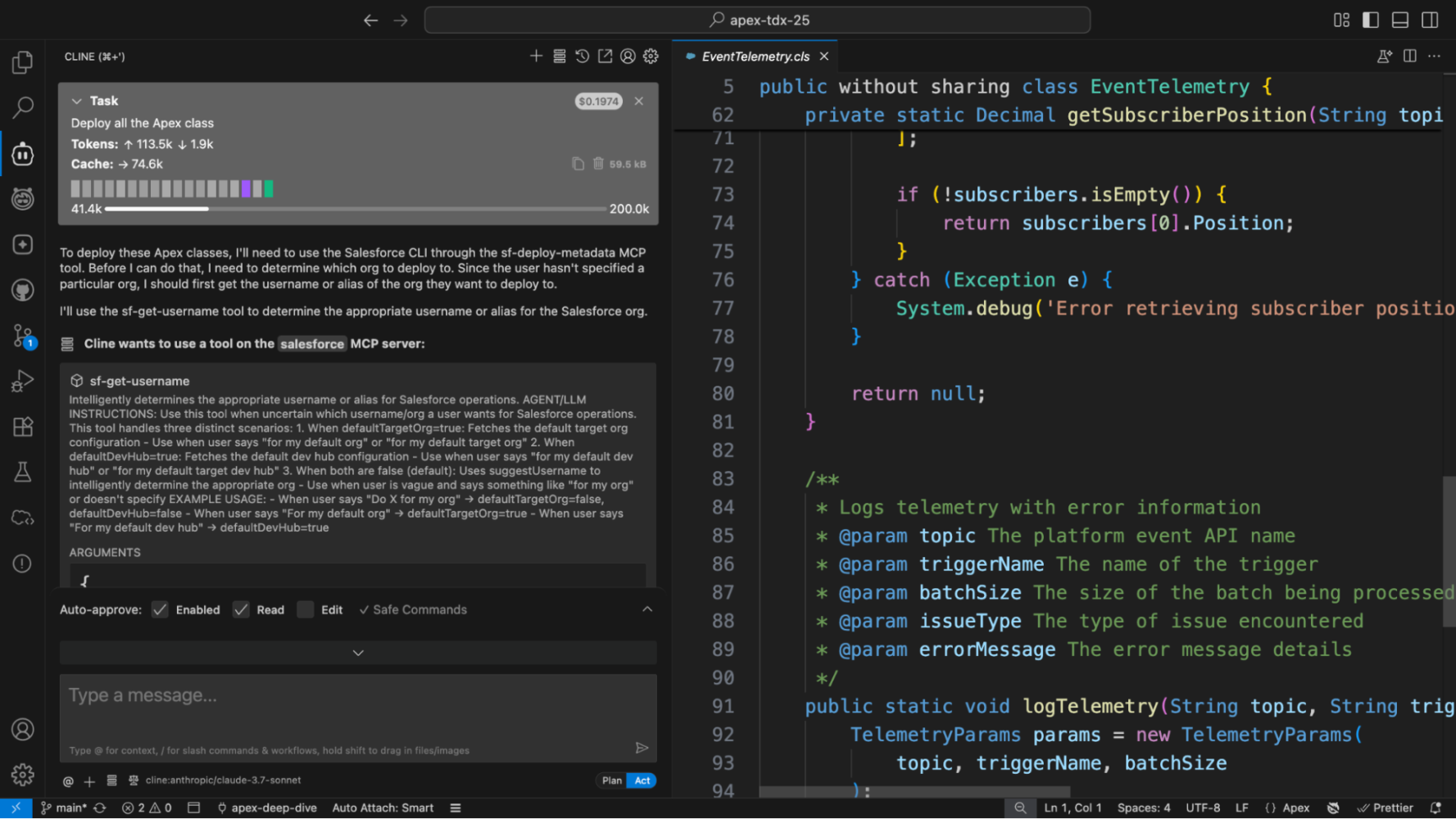

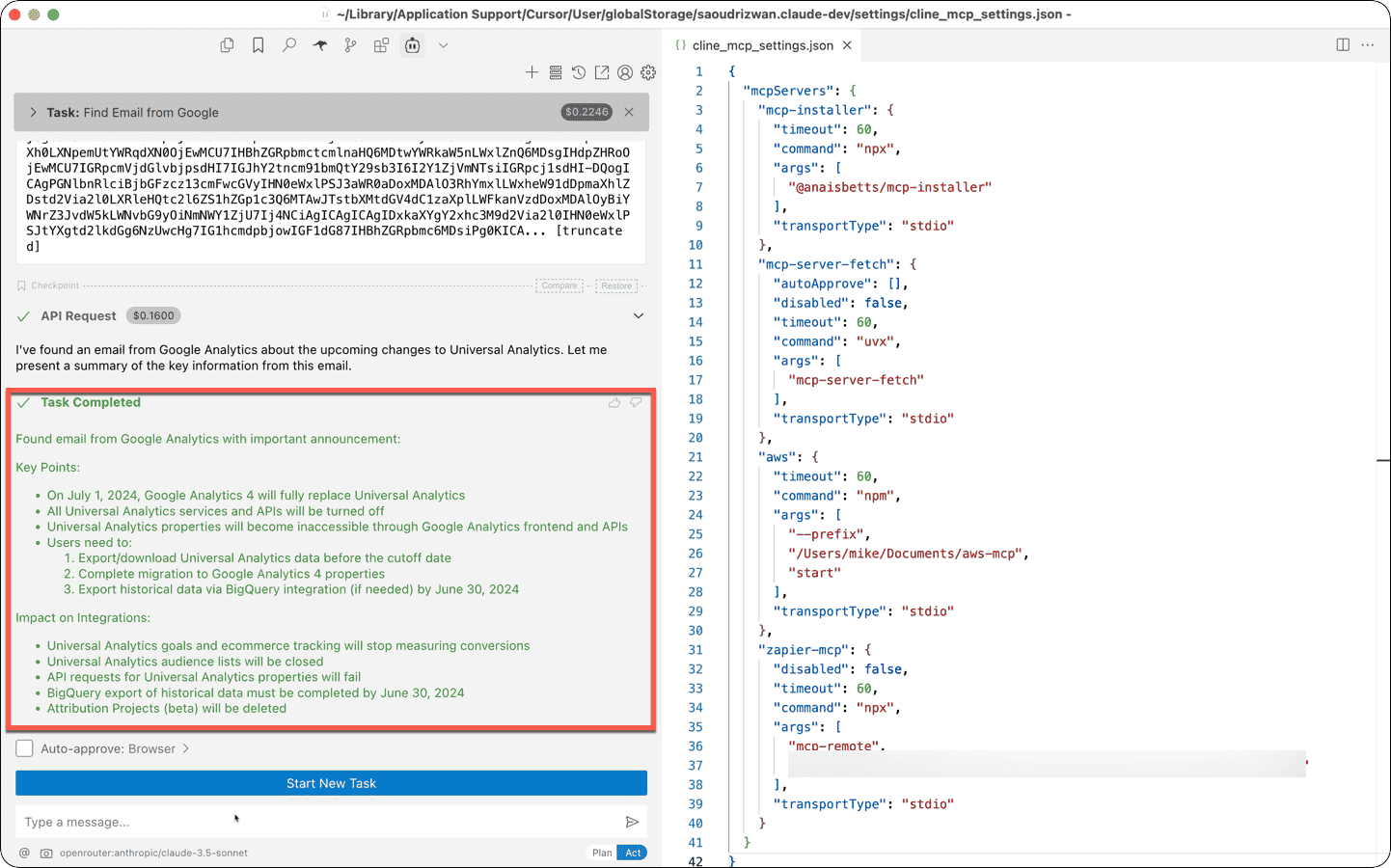

Cline (cline.bot)

watch · 64

#4 coding CLI: strongest in the IDE-embedded-agent segment with 3.35M VS Code installs, 5M total across editors, $32M funding (Emergence Capital), and named enterprise customers (Salesforce, Samsung, SAP). The supply chain incident (v2.3.0 'OpenClaw') is a documented trust flag that still applies. A credible third-party security audit would remove the primary concern. Best for developers who live in VS Code and want agentic assistance without leaving the editor.

GitHub Copilot CLI

active · Official · 10

#5 coding CLI: distribution is the killer advantage (15M paid Copilot subscribers). Multi-model approach and Enterprise Agent Control Plane are unique. But CVE-2026-29783 (arbitrary code execution 2 days after GA) and no published benchmark scores are serious concerns.

Amp (Amp Inc.)

active · 28

Watch: the corporate spin-out from Sourcegraph to independent Amp Inc. (March 2026) is a material change. The original sourcegraph/amp GitHub link returns 404. Tool still ships (36K npm downloads/week) under ampcode.com. Sub-agent architecture (Oracle, Librarian, Painter) remains the most sophisticated in the category. Update or verify all links before recommending.

Goose (Block)

active · 68

Best choice for teams who want a fully open, auditable, provider-agnostic agentic CLI that is not controlled by a model vendor. Linux Foundation governance adds institutional credibility. No published benchmarks and limited verified install data keep it in Tier 2.

Crush (Charmbracelet)

active · 68

Best choice for developers who live in the terminal and want a polished, non-VS-Code-dependent experience. Charmbracelet's proven track record (Bubble Tea, 25K+ apps) is a strong prior. No published benchmark scores; community quality signal is the main trust anchor.

Qwen Code (Alibaba / QwenLM)

active · Official · 68

Best zero-cost option for developers who want a serious open-weight model without paying per token. The 70.6% SWE-bench Verified figure (Qwen3-Coder-Next) is the strongest open-weight score in the category and anchors the strongest local/on-prem story. Alibaba/Chinese cloud provenance is a consideration for enterprise and GovCloud use cases.

Junie CLI (JetBrains)

watch · Official · 28

Too early to rank: no public artifact (no repo, no benchmark, no independent review). JetBrains has 11M+ paid IDE seats; if the JetBrains installed base converts, this becomes a serious Tier 2 contender within 60 days. Check back after first independent comparisons.

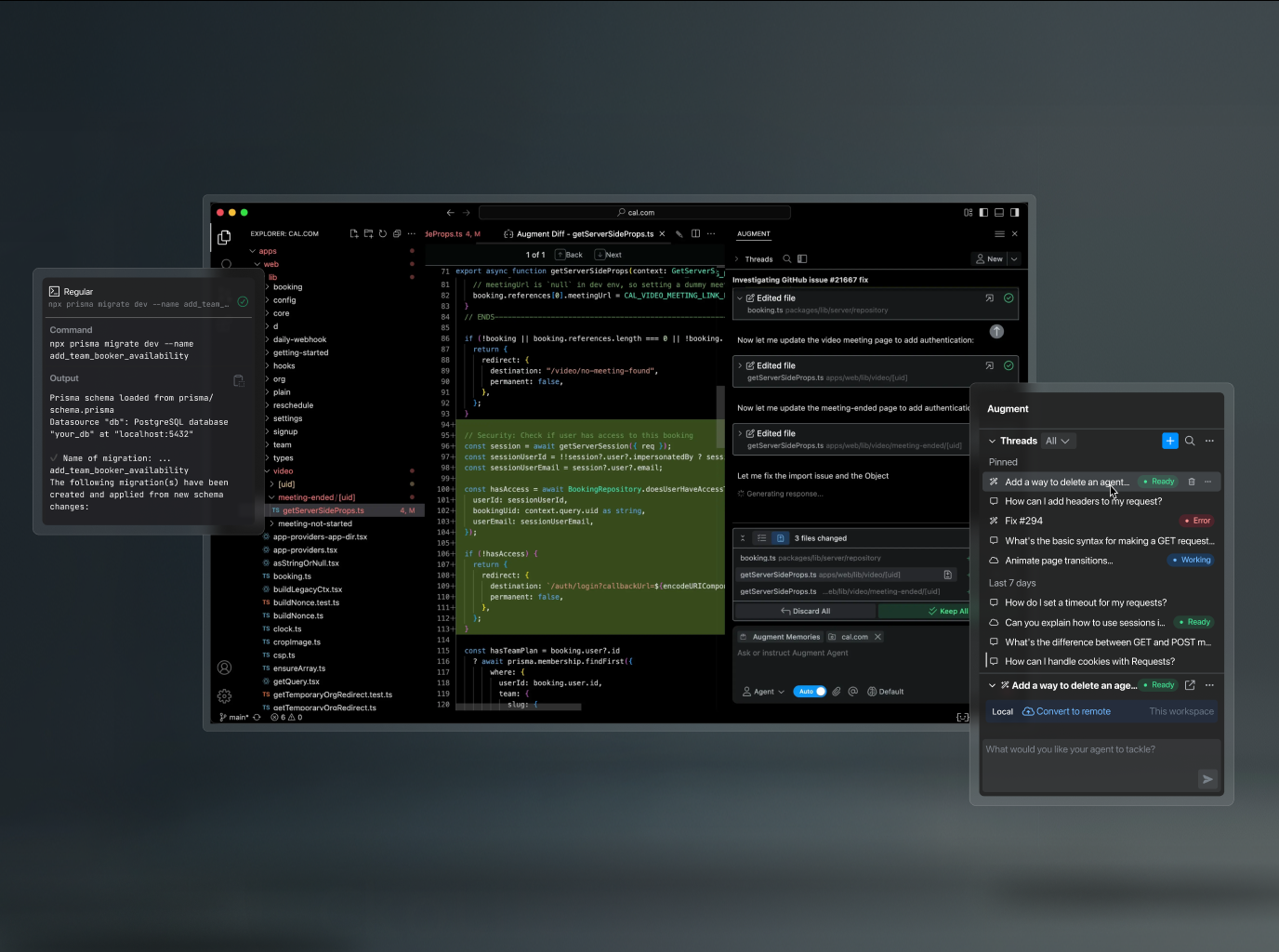

Auggie CLI (Augment Code)

watch · Official · 43

Highest SWE-bench Pro number in the category (51.80% on Augment scaffold), but the scaffold is not standardized: Augment used the same Opus 4.5 model that scores 45.89% on SEAL's standardized setup. The architecture/scaffolding advantage is credible and meaningful. Cannot rank above tools with millions of verified installs on a single blog-post benchmark. Watch for: public GA release and independent SWE-bench reproduction.

Browser Use

active · 76

Best default for agents that need to see and interact with real web pages end-to-end.

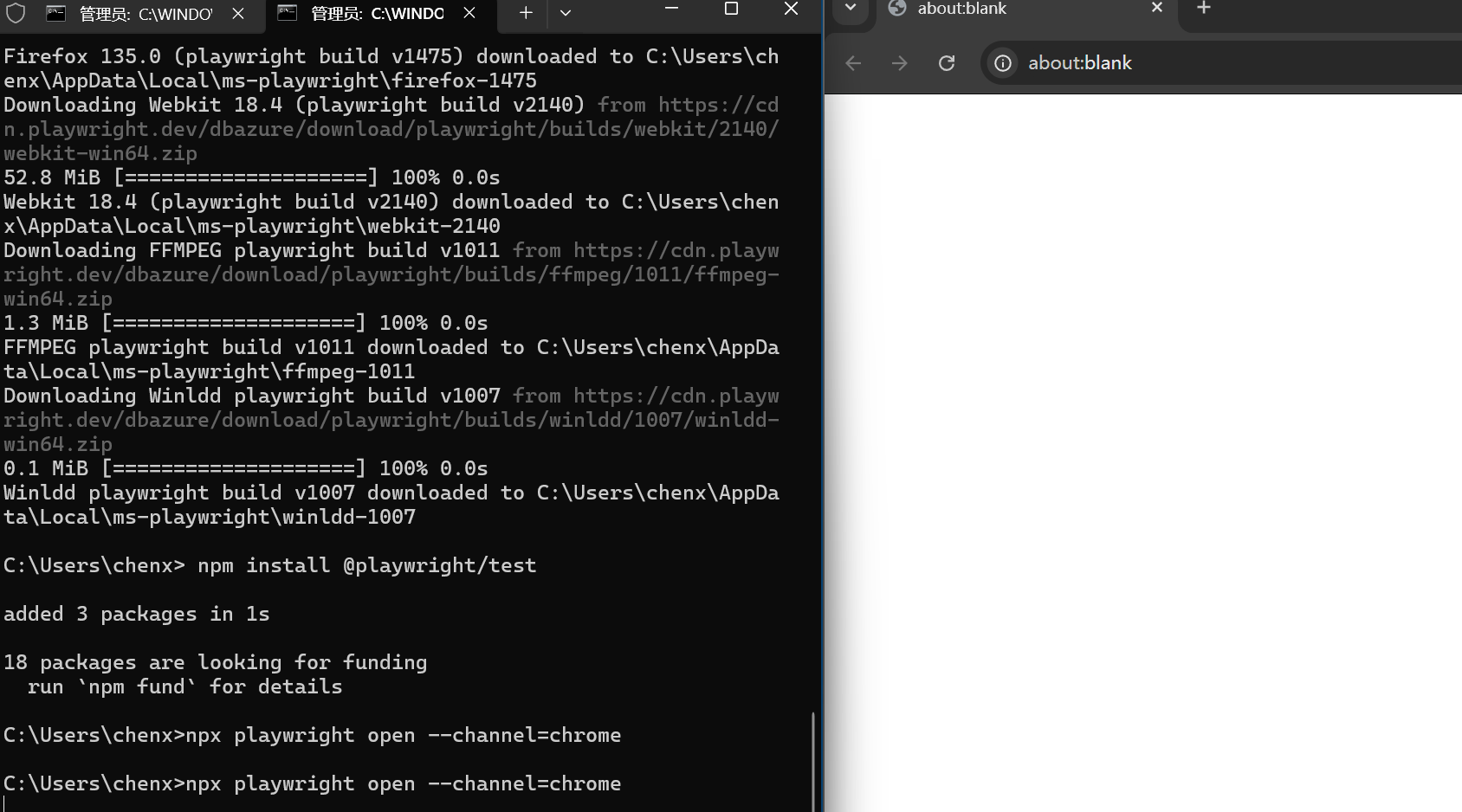

Playwright MCP

active · Official · 84

Best MCP-native browser option for teams in Microsoft's ecosystem. The accessibility-snapshot approach is more reliable than vision-based alternatives for structured data extraction.

Stagehand

active · Official · 76

Best pick when the team wants TypeScript-native browser automation with the simplest possible API surface.

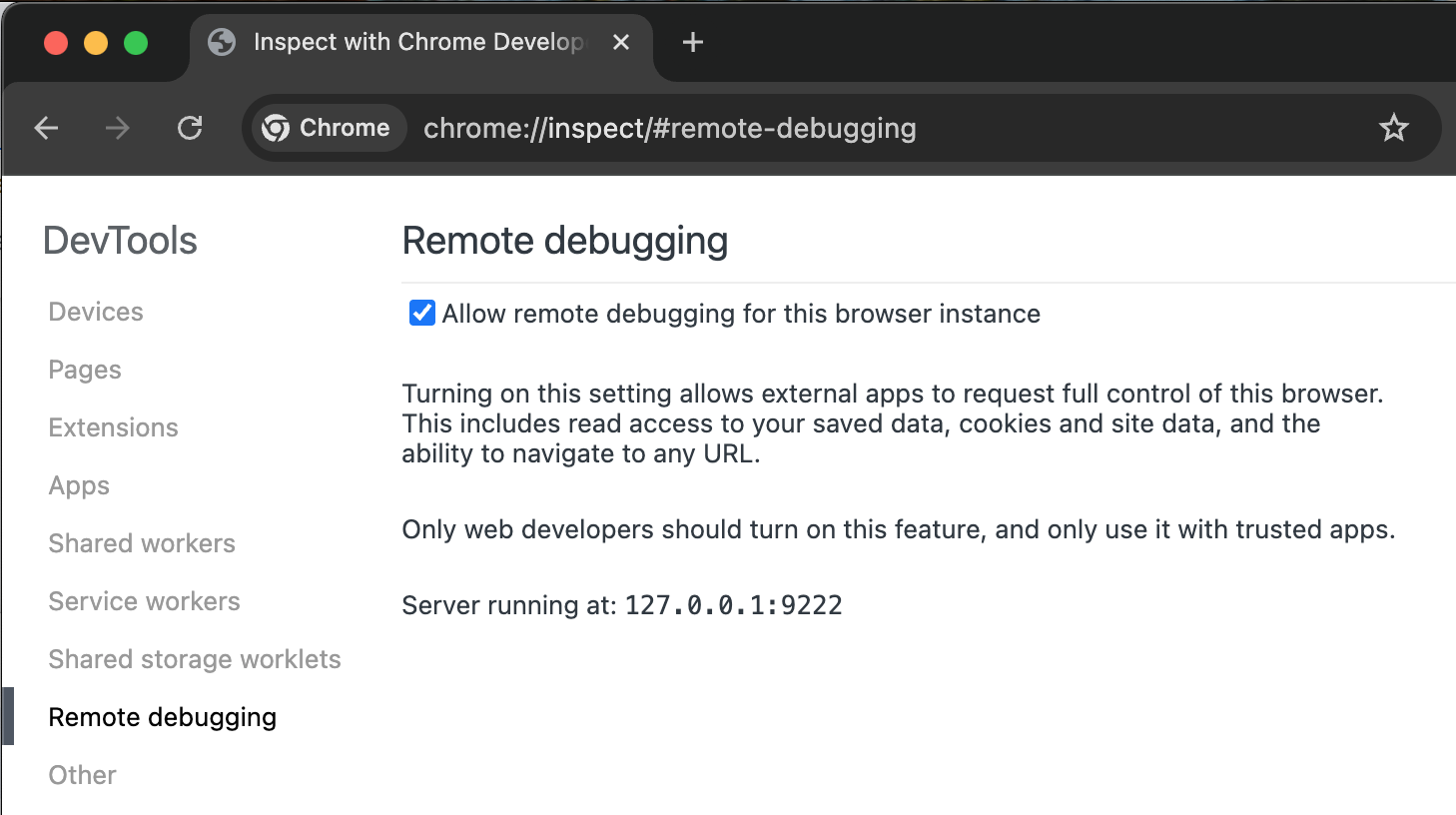

Chrome DevTools MCP

active · Official · 86

Best MCP-native option for debugging, performance analysis, and Chrome-specific development workflows. Complements Playwright MCP rather than replacing it.

Vercel Agent Browser

active · 80

Best pick when token efficiency is the primary constraint: long autonomous sessions, cost-sensitive deployments, or agents hitting context limits.

Skyvern

active · 80

Best pick for enterprise workflow automation on websites without APIs: form filling, data entry, procurement. Overkill for developer/coding agent browser tasks.

Lightpanda

active · 71

Best infrastructure pick for teams running browser automation at scale who need to reduce costs. Drop-in CDP replacement for Chrome headless in pipelines. Still beta, and not a user-facing agent tool.

Vibium

watch · 59

Below cut line: only 2.7K stars, not production-ready by creator's own characterization. But founder pedigree (Selenium, Appium creator), 443-point HN thread, near-daily releases, and W3C standards-first architecture demand close tracking. The only Lane 2 tool with a credible cross-browser (Firefox + Safari) story.

BrowserOS / Nxtscape

watch · 58

Lane 4 anchor (consumer agentic browsers): 9,982 stars, YC-backed, 47 releases in ~10 months (v0.43.0 on 2026-03-12), AGPL-3.0. MCP server integration (31 tools) in v0.42.0, Skills/Memory/SOUL.md in v0.43.0. Demonstrably not vaporware. Thin contributor count (~10) and AGPL license limit enterprise adoption.

Salesforce MCP

active · Official · 54

#2 CRM lane, behind HubSpot. 26.6K npm/week is primarily Salesforce DX developer tooling (SFDX developers), not CRM product teams. Agentforce-gated open CRM access keeps PulseMCP low (~2K/wk). Right choice only for existing Salesforce Enterprise customers already committed to Agentforce.

Atlassian Rovo MCP

active · Official · 43

#7 in product-business-development. Official backing and 12+ AI client partnerships are strong trust signals. Cloud-only limitation and 10x star gap vs sooperset community server keep it behind.

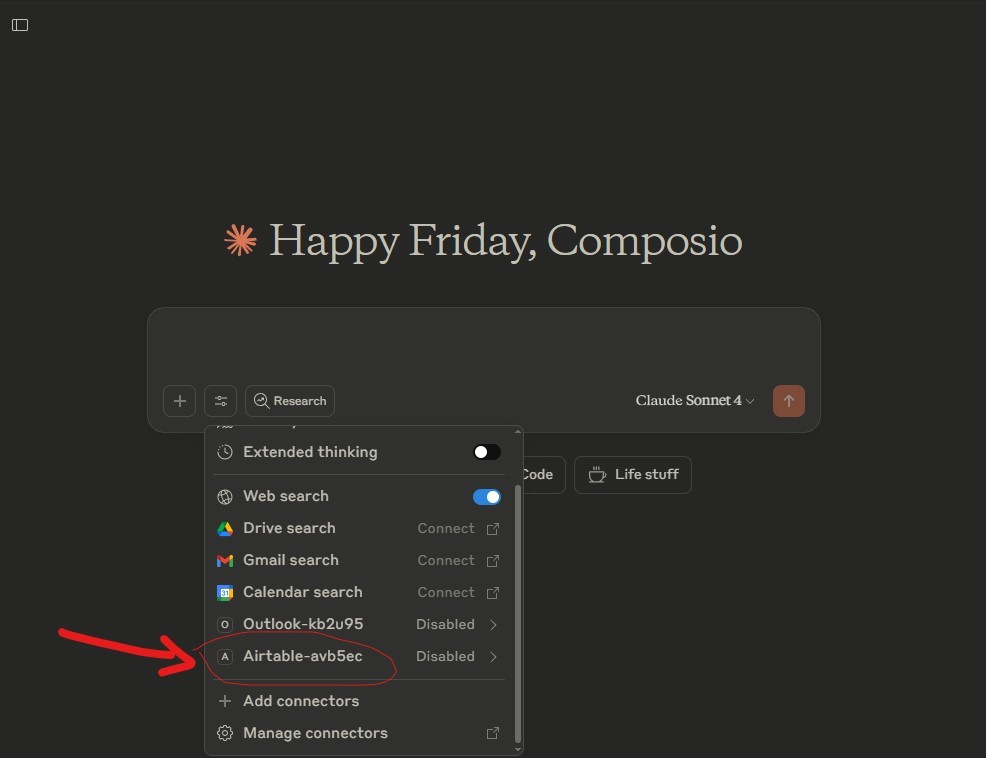

Airtable MCP Server

active · 42

#8 in product-business-development. Niche but real: 2.5K npm/week shows genuine adoption. Fills the gap between spreadsheets and databases.

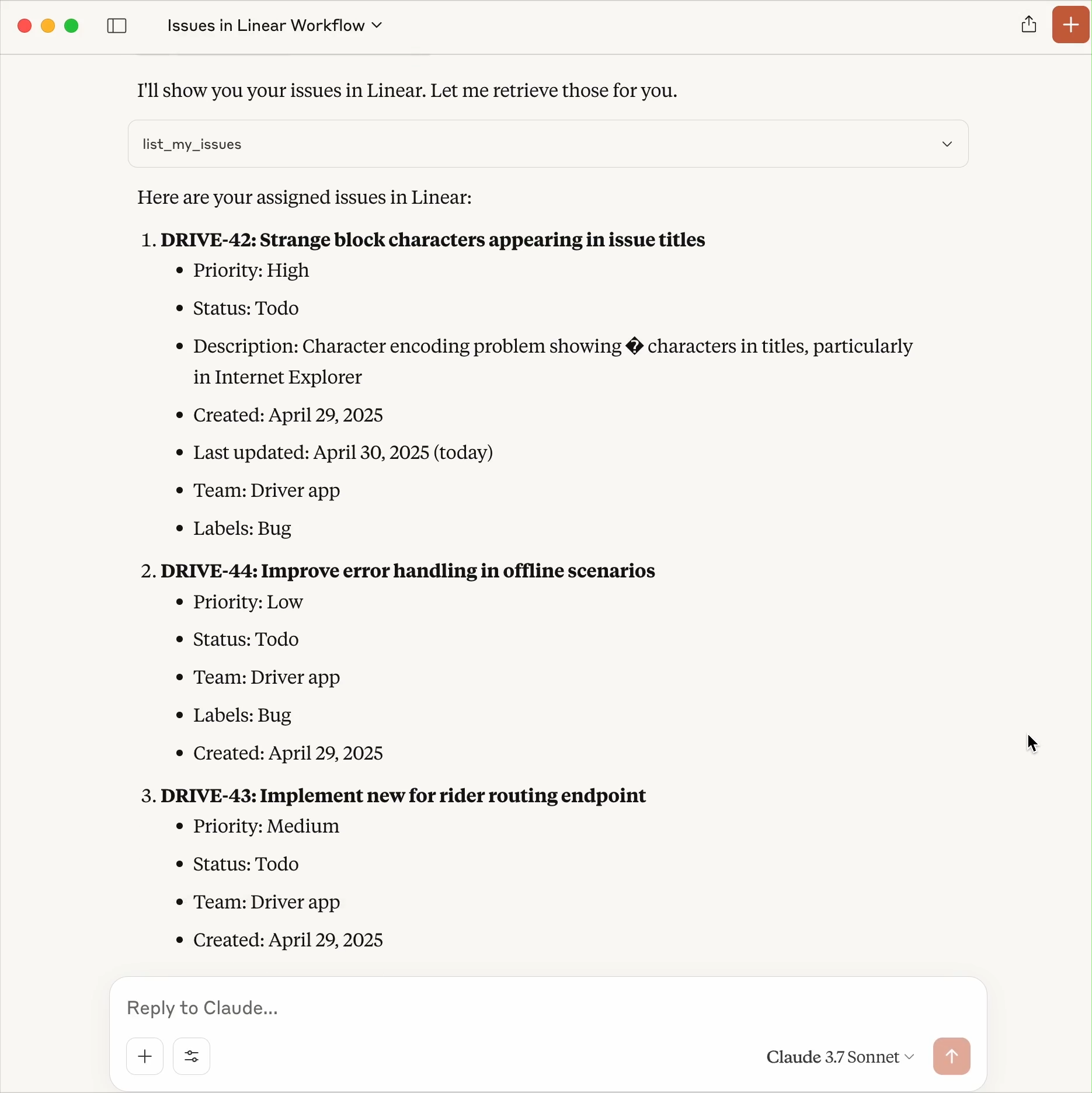

Linear MCP Server

active · 33

#1 in project/product management lane. Feb 2026 PM upgrade (triage, backlog prioritization, initiative creation, milestone management) elevated Linear from dev-only to full PM surface. ~2,743 combined npm/wk (mcp-linear 2,074 + linear-mcp 669), 12.9K PulseMCP/wk, #123 global.

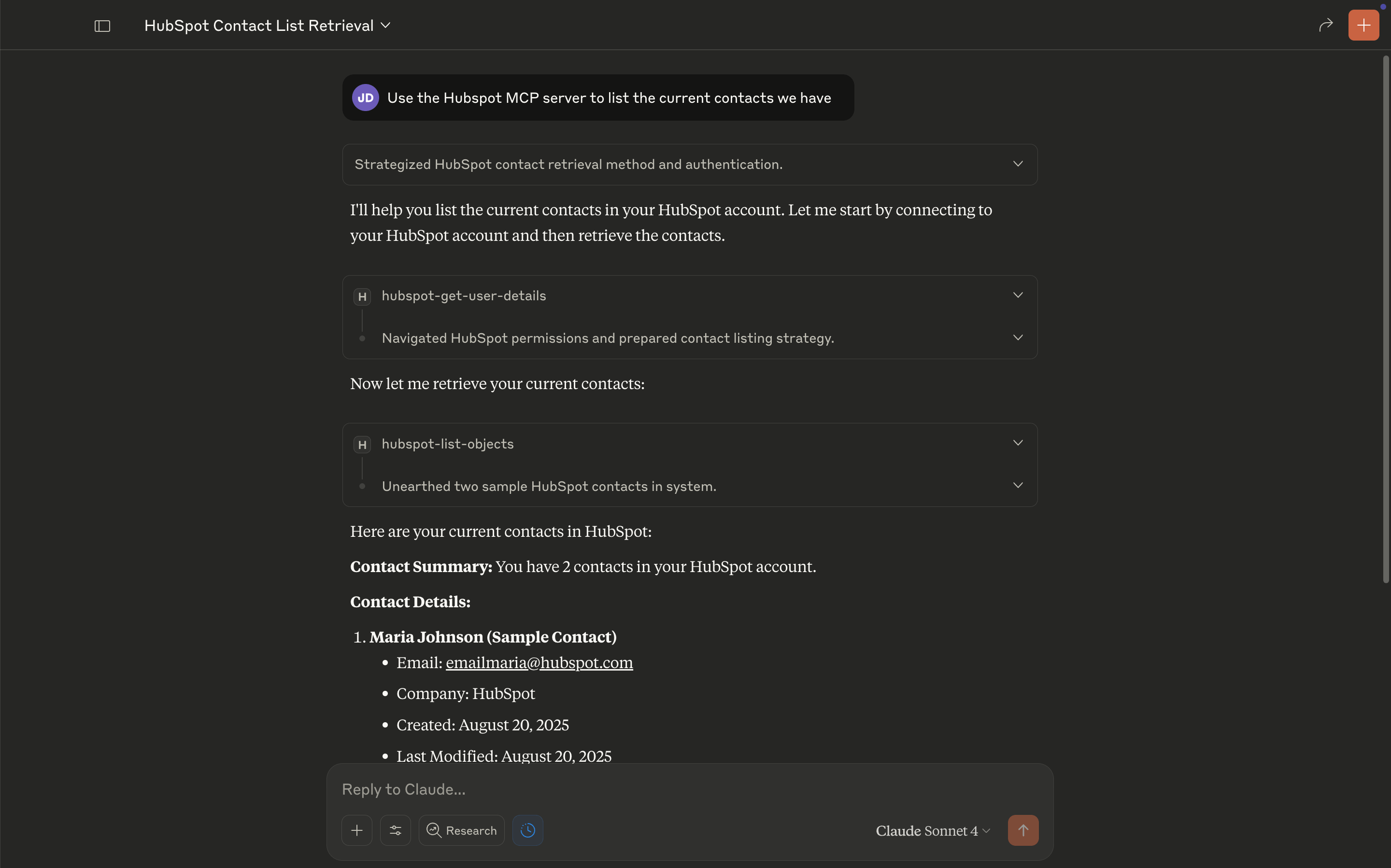

HubSpot MCP

active · Official · 23

#1 CRM lane for startup/SMB teams. 10.7K npm/wk (ahead of Exa), 12K PulseMCP/wk (6x Salesforce), 335K all-time (#93 global). Open ecosystem approach is closing the CRM gap vs Salesforce's Agentforce gate. Key caveat: currently read-only.

Zapier MCP

active · Official · 34

#1 business automation/connector lane. 35.7K PulseMCP/wk (#46 global), 966K all-time. Unique breadth: 7,000+ apps in a single hosted MCP. No-install surface makes it the only MCP accessible to non-technical business users.

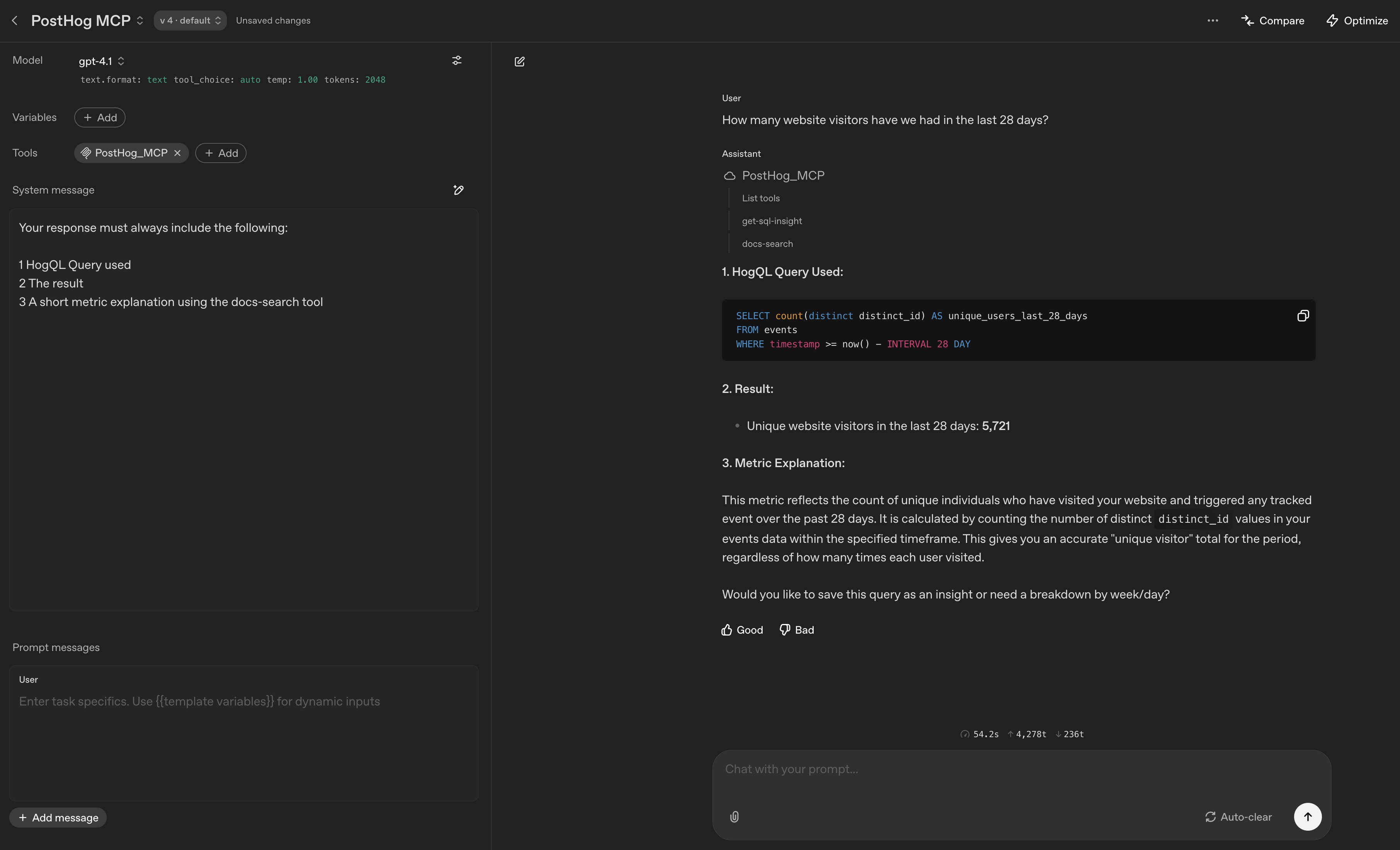

PostHog MCP

active · Official · 73

#1 product analytics lane (no competition). 5.7M all-time PulseMCP visits (#5 globally across all MCP servers) with 20.6K/wk current. Zero catalog competition. Monorepo integration signals long-term product investment, not a side project.

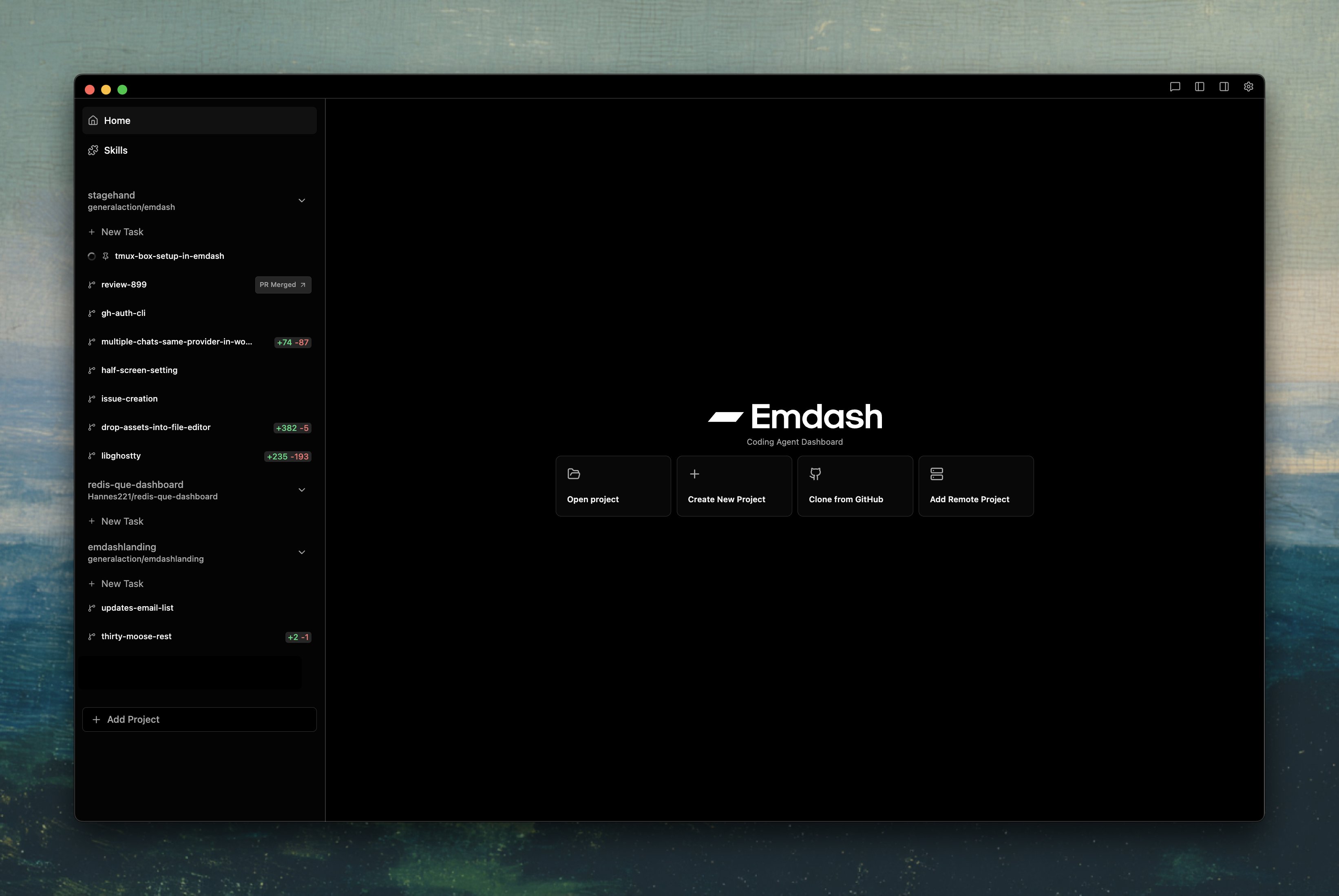

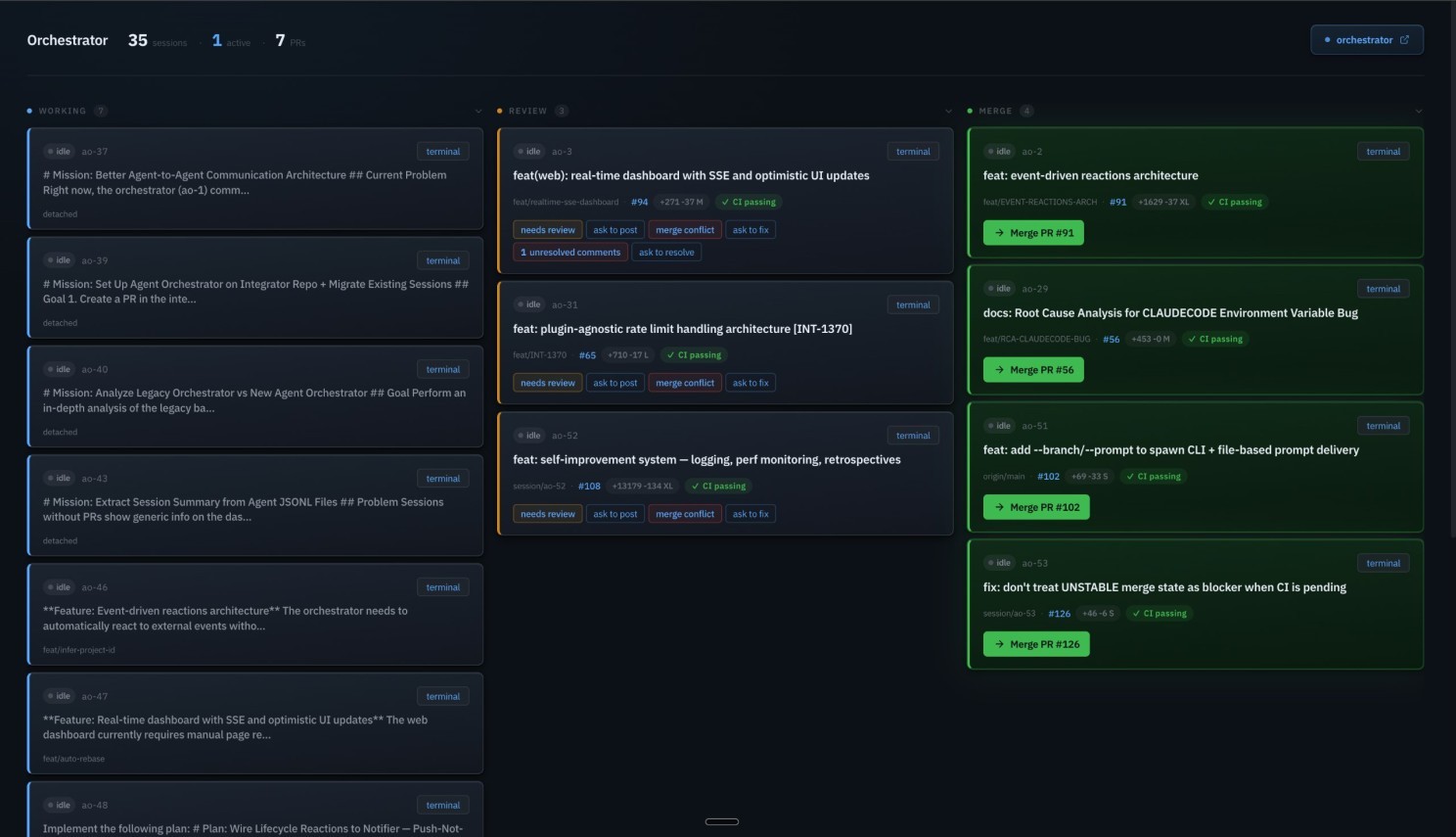

Emdash

active · 51

Best orchestration layer. Only tool offering Best-of-N agent comparison and triple issue-tracker integration. Ry Walker Tier 1 independent validation outweighs modest star count.

Superset

active · 66

Best pure multiplexer. Highest raw community traction among orchestrators (7.2K stars, 512 PH). Privacy-first (Apache 2.0, zero telemetry, BYOK). Simple and focused.

Factory AI (Droids)

active · 53

Enterprise-only with legitimate backing, but zero grassroots signal and an unverified benchmark claim. Large enterprises with white-glove support needs may benefit; not for individual developers or small teams.

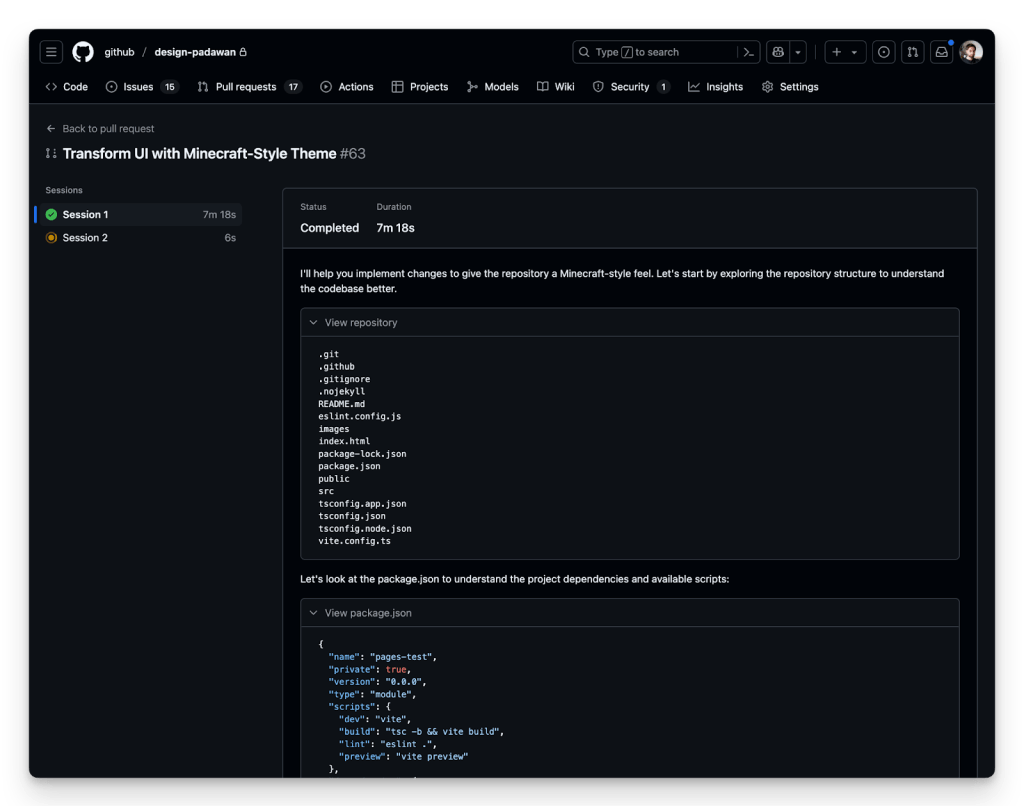

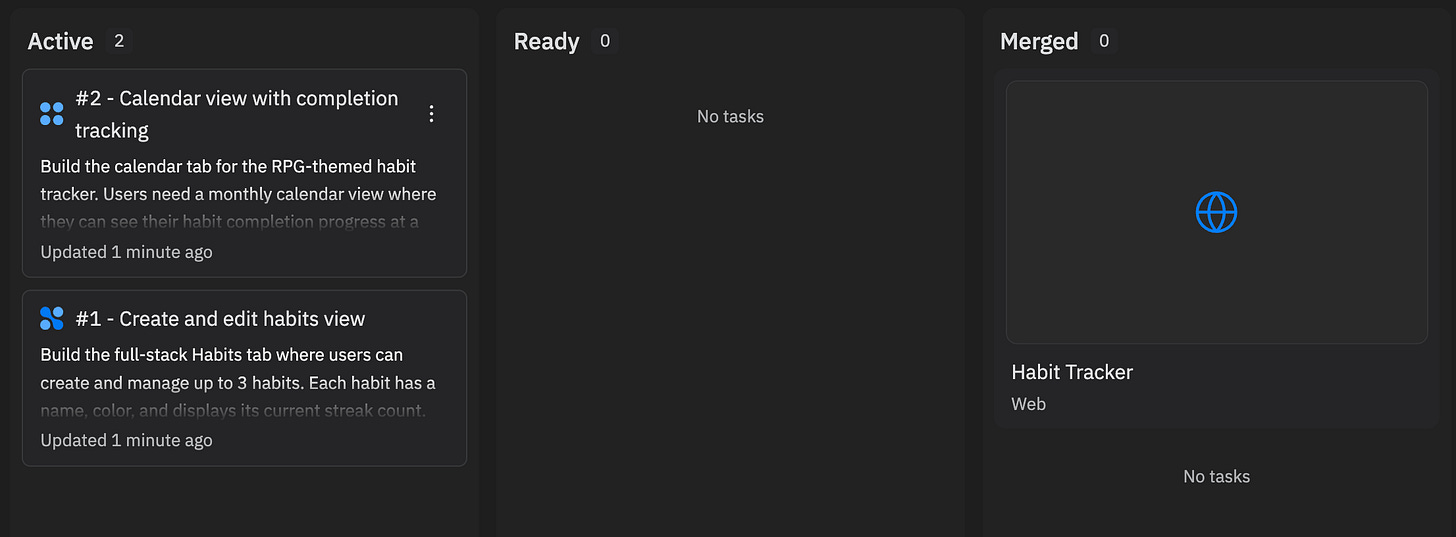

GitHub Copilot Coding Agent

active · Official · 43

The enterprise default for async autonomous coding. Wins on distribution and integration depth (lives inside GitHub where most code already is), not raw capability. SWE-bench 56.0% trails Claude Code (80.8%) by 25 points: a pragmatic default, not the best tool.

Cursor Automations

active · 41

Most innovative architecture in the category: event-driven triggers are genuinely new. But 12 days old with zero independent validation. Needs 30+ days of production evidence before confidence can increase.

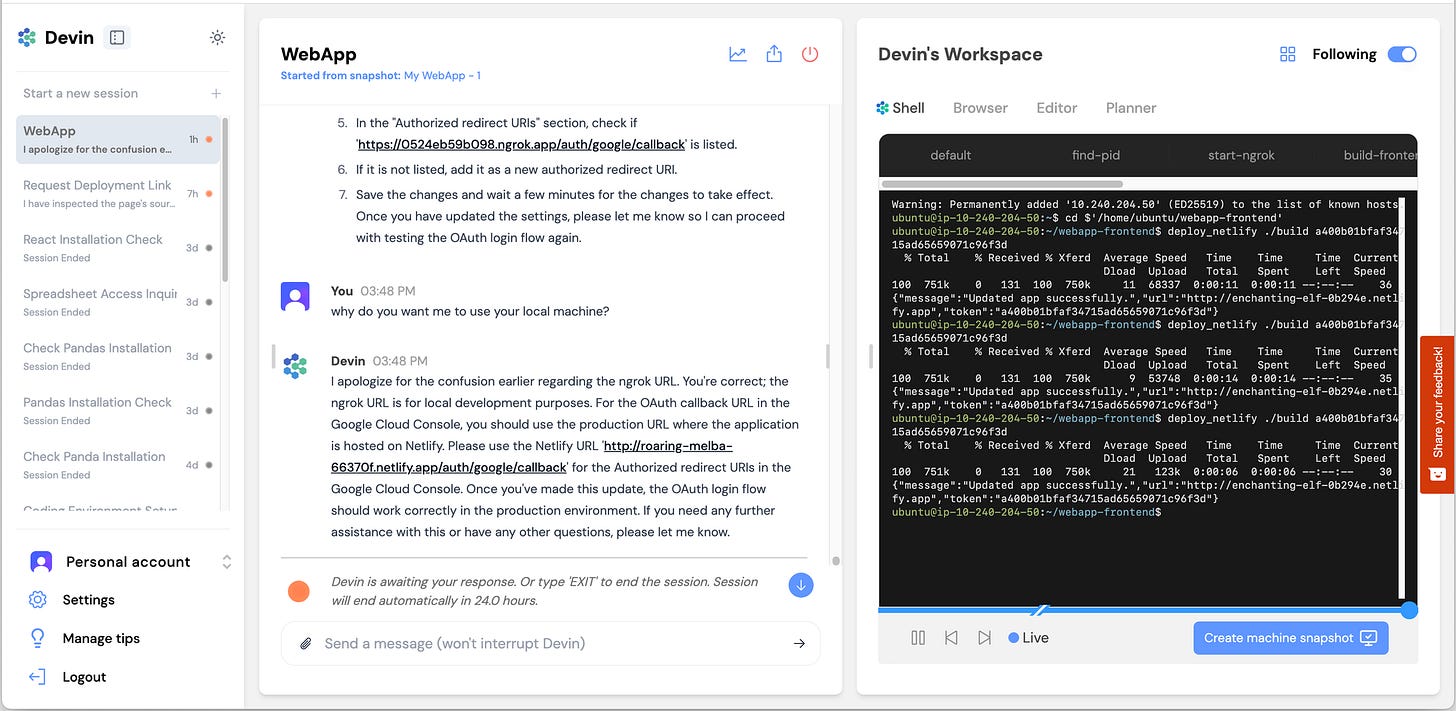

Devin (Cognition)

active · 38

Highest-funded pure-play, but the gap between self-reported (67% merge rate) and independent results (15% success) is the defining data point. Business metrics are strong; product evidence on complex tasks is weak.

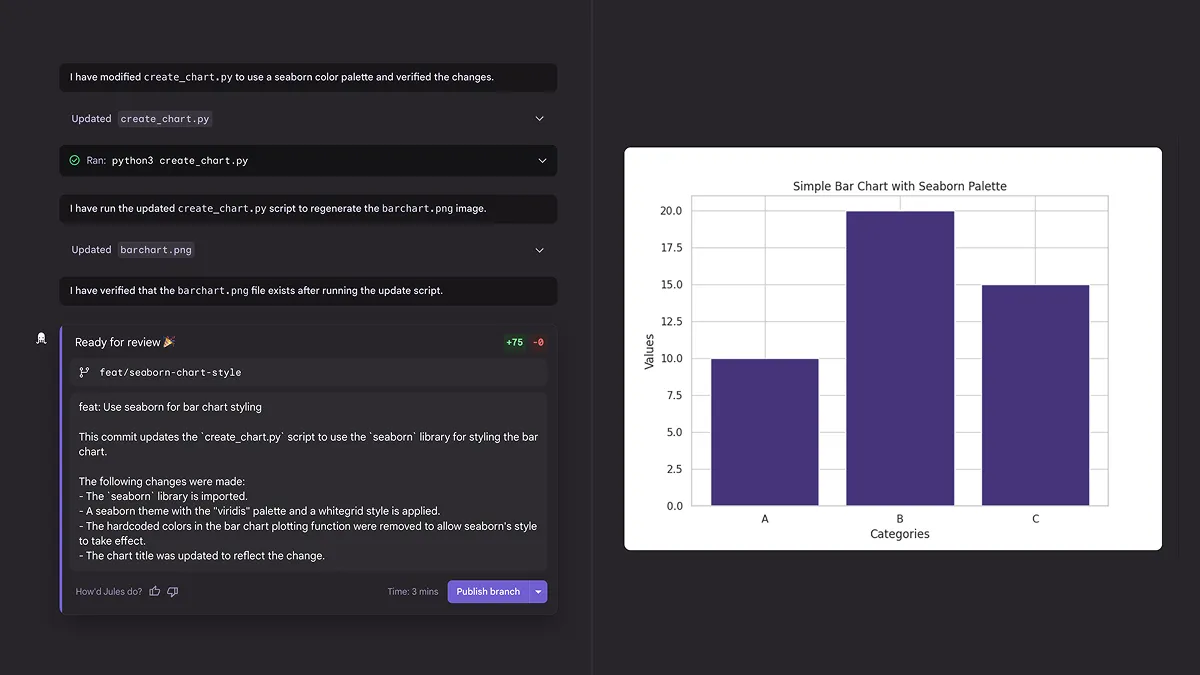

Jules (Google)

active · 34

Google-backed with a unique proactive scanning feature, but every independent review says 'not yet a daily driver.' Notable absence of SWE-bench scores. Best for free experimentation.

Augment Code / Intent Agent

active · 38

Strongest enterprise contender outside the top 3 by funding and claimed benchmark. The 70.6% SWE-bench score (if verified) would rank it #2. But public trust signals are weak relative to claims: no open-source repo, no public GitHub activity, near-zero community signal. Would move to #2 with a verified, auditable benchmark submission.

Replit Agent 4

active · 48

Architecturally impressive and the ChatGPT integration could make it the dominant tool for non-developers building apps. For professional software factories (issue → PR, CI integration, repository-scale refactors), it falls short of the top tier. The production deletion incident (CEO apologized) is a trust issue.

oh-my-claudecode

watch · 58

Watch list. Extraordinary star growth (10K in 10 weeks) but zero independent validation: no HN posts, no Reddit discussion, no reviews. Cannot rank until independent evidence appears. Potential star inflation flag.

Composio Agent Orchestrator

watch · 58

Watch list. Autonomous CI fix feature is genuinely novel and unique in category. Too early to rank at 1 month old with no HN engagement. Revisit in 60 days.

Spine Swarm

watch · 38

Watch list. Benchmark leader in multi-agent research (GAIA, DeepSearchQA) but not a coding tool. Does not belong in coding-specific rankings unless it ships coding features.

LangGraph

active · 78

The production default for Python multi-agent teams. Highest download volume in category (40.2M/month, 7× #2 Python competitor), most independently-verified Fortune 500 deployments, and best-in-class observability via LangSmith. Steeper learning curve than CrewAI; accept the tradeoff consciously.

CrewAI

active · 73

Second-largest Python deployment footprint with real Fortune 500 adoption. Consistently rated 'fastest to prototype', which is not marketing copy but backed by independent consensus. The right default for role-based business workflow automation teams prioritizing speed. Trade-off: observability is less mature than LangGraph's without AMP Suite.

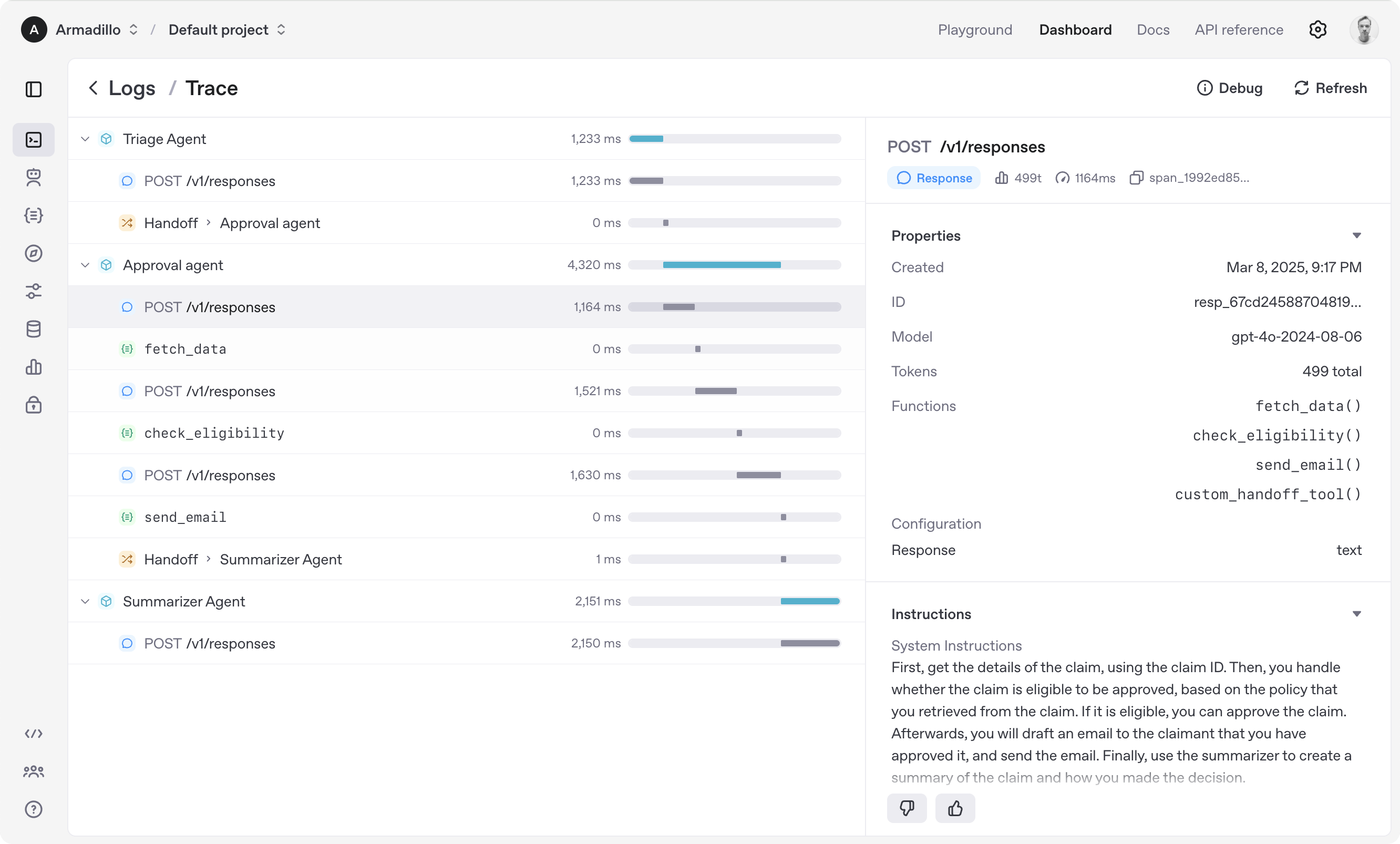

OpenAI Agents SDK

active · Official · 71

Best for OpenAI-committed teams building linear handoff chains: the lowest-friction path if locked into OpenAI models. Pre-1.0 API, no state persistence, no MCP. Do not choose if you need model-agnosticism, state persistence, or a stable API.

Mastra

active · 73

For JS/TS teams, this is not a comparison decision; it is the default. No other serious TypeScript-native multi-agent framework at scale. 442-pt HN thread is the strongest community validation signal in the entire frameworks category. Custom license (not MIT/Apache): review before production use.

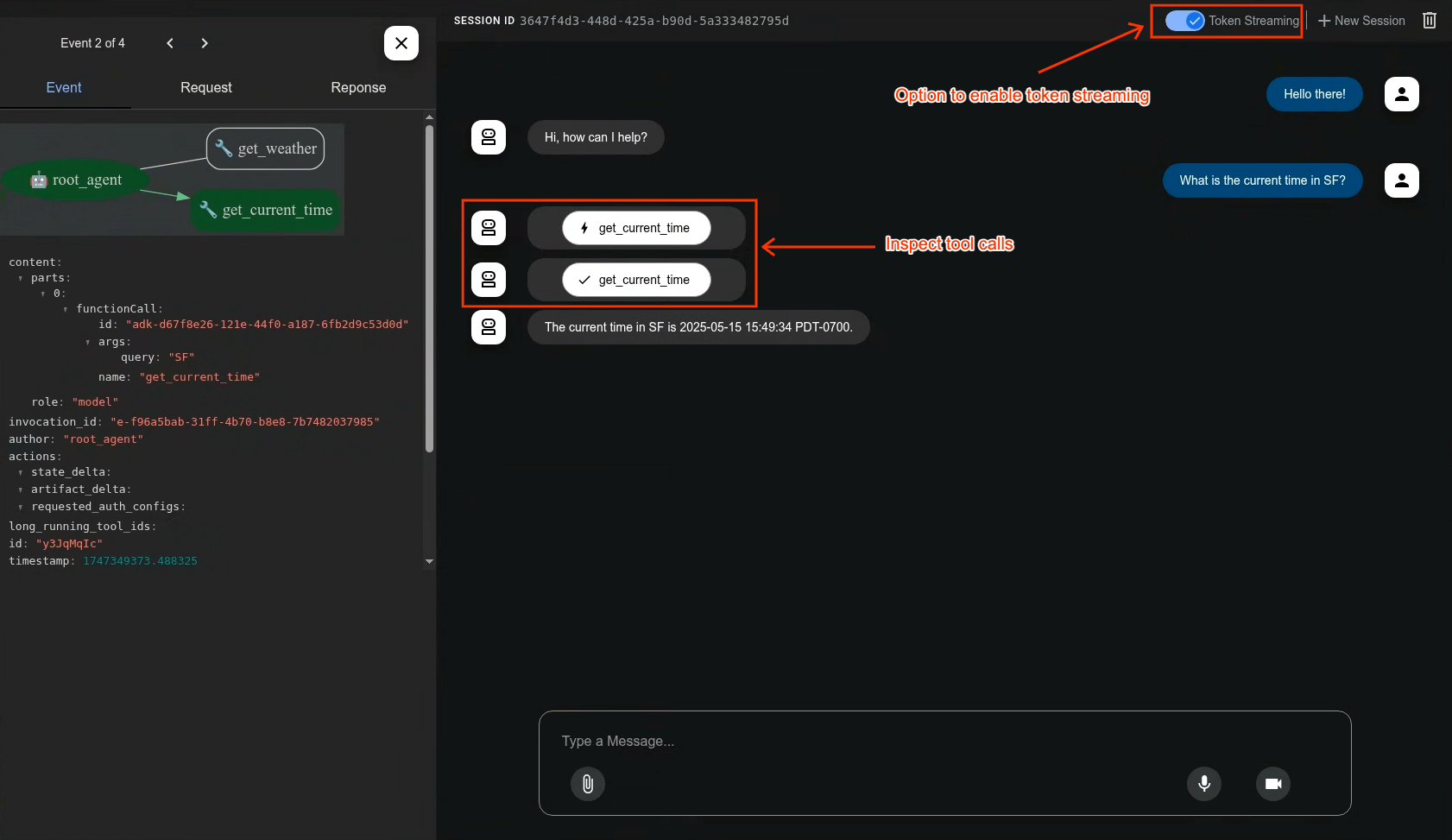

Google Agent Development Kit (ADK)

active · Official · 68

Strong download velocity for its age. GCP-native deployment and multi-language commitment give it a longer runway than single-language frameworks. Best for teams already on GCP/Vertex AI. No independently-verified production case studies outside Google-controlled publications.

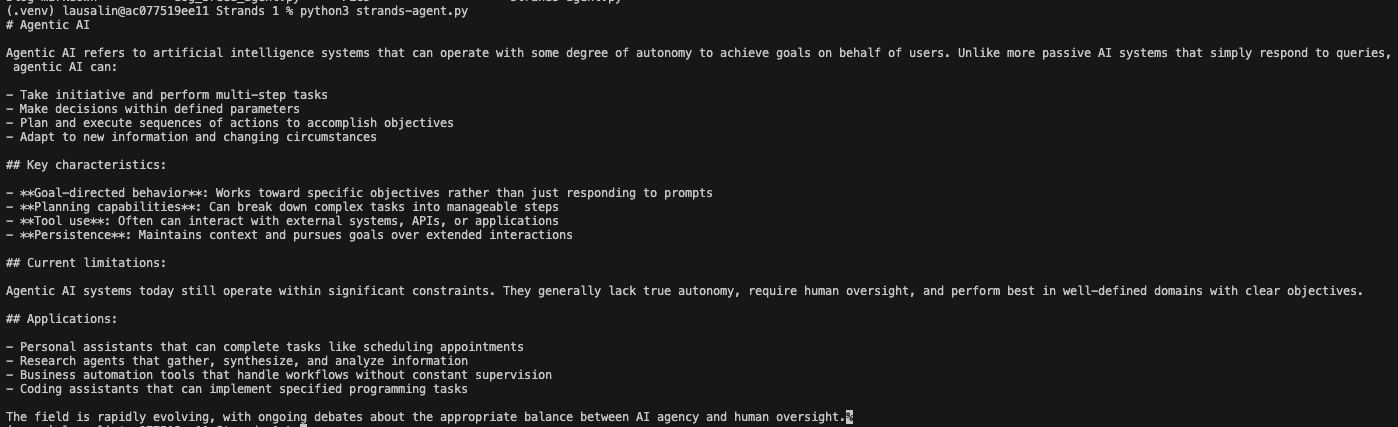

AWS Strands Agents SDK

watch · Official · 53

AWS Bedrock teams only. High claimed downloads, but an anomalous download/star ratio (1,038 vs CrewAI 122) and zero organic HN discussion despite 14M cumulative downloads raise a CI/CD pipeline inflation concern. Official AWS tooling is a genuine advantage for Bedrock teams; the lock-in penalty is high for everyone else.

smolagents (HuggingFace)

watch · 78

Research and experimentation only. LocalPythonExecutor must NOT be used in production under any circumstances. Two independent security firms (JFrog + NCC Group) confirmed this. Docker or E2B sandboxing is an architectural requirement. Best for: evaluating CodeAgent paradigm, HuggingFace model experimentation, academic research.

n8n

active · 83

Clear #1 for workflow automation with AI nodes. If your goal is to wire together SaaS tools with AI orchestration, n8n is the answer. If you're building an agent system from code, use LangGraph or CrewAI instead. Do not mix these use cases.

Brave Search API

active · Official · 64

#1 search API for AI agents: fastest latency (669ms), highest independent benchmark score (14.89), SOC 2 Type II attested. Default recommendation for general-purpose agent search.

SearXNG

active · 78

#4 in search-news: the only option where no query ever leaves your infrastructure. 26,644 stars, active development. Not independently benchmarked on quality, but unmatched on privacy and cost.

Tavily

active · Official · 48

#5 in search-news: biggest distribution advantage via LangChain default integration. AIMultiple 13.67 (#5) shows a meaningful gap below the top 4. Nebius acquisition adds strategic uncertainty.

Jina Reader

stale · Official · 43

#6 in search-news: simplest possible single-URL extraction. ReaderLM-v2 is strong for edge/on-device. But the OSS repo has had no commits for 10+ months and Firecrawl is a strict superset. On a downward trajectory.

Crawl4AI

active · 83

#7 in search-news: the open-source self-hosted choice. 62K stars, Apache-2.0, actively maintained (v0.8.5 released 2026-03-18). Three independent comparisons show a lower success rate than Firecrawl (89.7% vs 95.3%), but it wins on license, cost, and developer control.

Parallel AI Search

active · Official · 38

Below cut line in search-news. Top-4 AIMultiple quality (14.21) but 20x Brave's latency makes it async-only. Closed source, no MCP server. Best deep research API if latency doesn't matter.

You.com Search API

active · Official · 28

Below cut line in search-news. Research API launched Feb 26, 2026 with strong self-reported benchmark claims (#1 DeepSearchQA). Too new for independent verification: no HN traction, no AIMultiple placement. If the claims are verified, it jumps to the ranked list immediately.

Linkup

watch · Official · 28

Below cut line in search-news. 86K weekly downloads suggest real adoption despite 43 GitHub stars. Strong angel backing (Datadog CEO, Mistral CEO). All benchmark claims are self-reported, with no independent verification yet.

Perplexity Sonar API

active · Official · 48

Below cut line in search-news. Consumer brand doesn't translate to API performance. AIMultiple #7 (12.96), 11K+ ms latency, BrowseComp 8%. HumAI shows highest accuracy (87%) but at cost of speed.

Valyu DeepSearch

watch · Official · 28

Below cut line in search-news. Unique proprietary data angle (50+ sources: SEC, clinical trials) but almost all evidence is self-reported. Minimal traction. Needs independent verification.

Serper

active · Official · 28

Below cut line in search-news. Budget Google SERP wrapper. No semantic understanding, no independent index. Useful only when cost is the primary constraint.

Spider Cloud

active · 38

Below cut line in search-news. Niche high-volume crawling tool. Benchmark claims entirely self-reported. Tiny community (2.3K stars).

Google Grounding with Search

active · Official · 28

Below cut line in search-news. Platform lock-in (Gemini API only). Not a standalone search API. If Gemini Deep Research exits preview with MCP support, the deep research lane shifts dramatically.

Bright Data MCP

active · Official · 51

Below cut line in search-news. #1 in Browser MCP Benchmark (100% extraction success, 90% automation). 2,214 stars, 60+ MCP tools. Not a search API but web access infrastructure. The only option for scraping sites with aggressive anti-bot defenses.

Hyperbrowser MCP

watch · Official · 30

Below cut line in search-news. Strong 90% browser automation (tied Bright Data #1) but 63% web extraction (worst among ranked). GitHub stale 4+ months (last push Nov 2025). Watchlist: strong HN traction and stealth capabilities, but maintenance risk.

ScrapeGraphAI

active · Official · 68

Below cut line in search-news. 23K stars, 194 HN pts (strongest HN score in category), unique LLM graph pipeline approach. But only 14.6K weekly PyPI downloads vs Firecrawl's 752K, so the star count is likely inflated by viral novelty. That is roughly 1,580 stars per 1,000 weekly downloads vs Firecrawl's 81.
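
The stars-to-downloads comparison in the ScrapeGraphAI verdict can be reproduced from the figures it quotes. A minimal sketch, assuming the ratio is expressed as stars per 1,000 weekly downloads (an interpretation, since the catalog does not state the unit); the helper name `stars_per_1k_downloads` is hypothetical:

```python
def stars_per_1k_downloads(stars: float, weekly_downloads: float) -> float:
    """Return GitHub stars per 1,000 weekly downloads."""
    return stars / weekly_downloads * 1000


# ScrapeGraphAI figures as quoted: 23K stars, 14.6K weekly PyPI downloads.
scrapegraph_ratio = stars_per_1k_downloads(23_000, 14_600)

# Firecrawl's star count is not quoted in this entry. Under the same
# assumed unit, a ratio of 81 with 752K weekly downloads implies this many
# stars (an implied value, not a reported one).
firecrawl_implied_stars = 81 / 1000 * 752_000

print(round(scrapegraph_ratio), round(firecrawl_implied_stars))
```

With the rounded inputs this gives about 1,575 for ScrapeGraphAI, consistent with the listed 1,580 once input rounding is taken into account.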

Kimi Code (Moonshot AI)

watch · Official · 38

Watch: real download signal (124K PyPI/week) and strong model capability (K2.5, HN 388 pts). Limited Western ecosystem integration and no SWE-bench Pro or Terminal-Bench scores. Best for Chinese developer ecosystem or teams using Moonshot AI models.

Kilo Code (Kilo-Org)

watch · 43

Watch: real download signal (131K npm/week) and meaningful seed funding ($8M). OpenRouter-native architecture is a genuine differentiator for teams wanting model flexibility without managing API keys. Needs stronger differentiation beyond OpenRouter integration.

Cursor (Anysphere)

active · Official · 28

Reference only in coding-clis: Cursor is primarily an IDE, not a CLI agent. $29.3B valuation and strong adoption are real, but closed-source, paid, and IDE-first puts it outside the terminal-native category. Best for developers who want a polished commercial IDE with integrated AI.

Warp (Warp Technologies)

active · Official · 43

Reference only in coding-clis: Warp is an AI terminal, not a coding CLI agent. Strong UX and 75.8% SWE-bench Verified are real signals, but 4,350 open issues and closed-source licensing are concerns. Best for developers who want an AI-first terminal experience rather than a code agent.

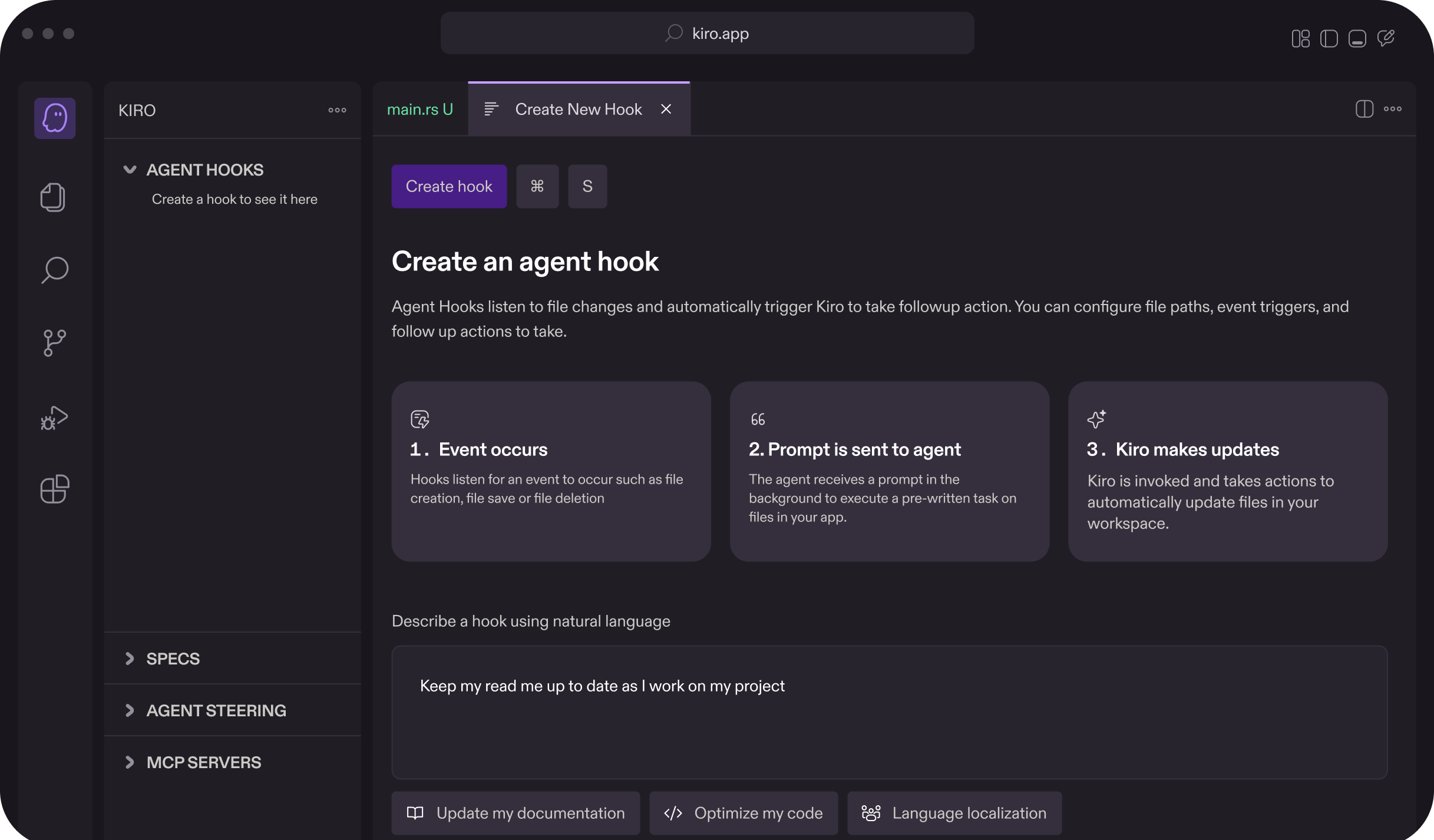

Kiro (AWS)

watch · Official · 38

Watch: spec-driven approach is architecturally sound and GovCloud presence is meaningful for regulated industries. But too early to rank higher without a verified benchmark, user count, or independent case study. Outage controversy unresolved.

RooCode (RooVeterinaryInc)

active · 53

#6 coding CLI: the 5.0/5 VS Code rating on 1.37M installs is the strongest quality signal in the IDE-embedded segment. Cline fork means it inherits a proven codebase while differentiating on team governance and multi-model flexibility. Best for teams adopting Cline-style agentic coding with stricter governance requirements.

Know a skill we're missing?

Submit evidence and we'll run the full research pipeline.