Danny Aziz (GM of Spiral) canceled Max plans for both Claude and ChatGPT in favor of Droid. One of the strongest individual endorsements in the category.

Factory AI (Droids)

active

Terminal-Bench #1 (58.75%), $50M Series B at $300M valuation (Sequoia, NEA, NVIDIA). The Wipro partnership (tens of thousands of engineers) is the largest enterprise deployment commitment. The previously claimed 84.8% SWE-bench score is UNVERIFIED. Zero grassroots developer adoption.

Trust: 53/100

Stars: 610

Evidence: 5

Videos

Reviews, tutorials, and comparisons from the community.

These Factory AI Droids Built My App in 10 Minutes! (Rust + TypeScript!) 👉 Code, Debug & Ship Apps

AI Droids, Dev Velocity, and Bulletproof Security | Inside Factory

Editorial verdict

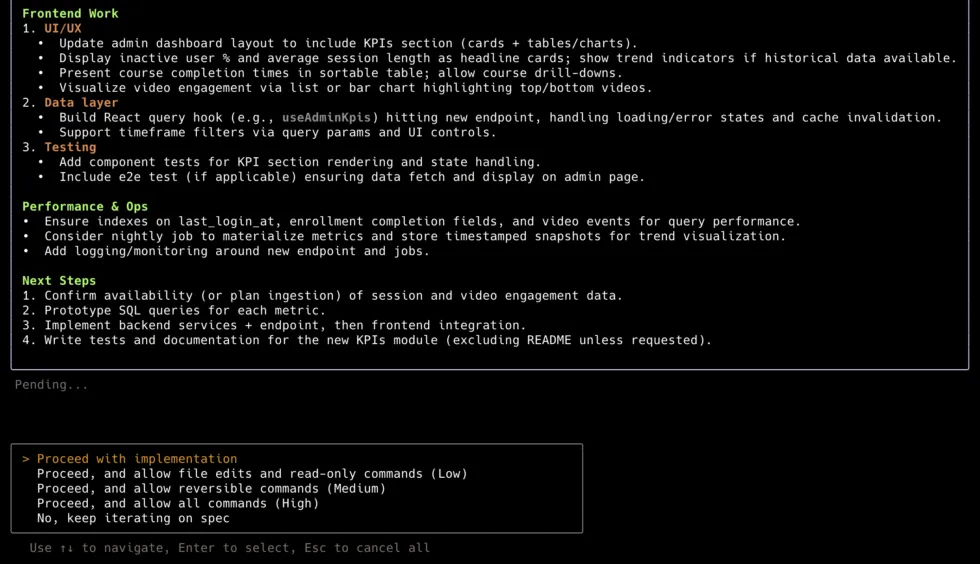

Enterprise-only with legitimate backing, but zero grassroots signal and an unverified benchmark claim. Large enterprises with white-glove support needs may benefit; not for individual developers or small teams.

Source

Public evidence

Specific technical criticisms: slow response times, inferior code quality vs Claude, unusable history UI. Directly contradicts Every.to positive review.

Droid with Opus scored 58.75% on Terminal-Bench, beating Claude Code (43.2%) and Codex CLI (42.8%). Strongest benchmark result in the autonomous platform subcategory.

Highest funding in the multi-agent category. Investor quality (Sequoia, NEA, NVIDIA) signals strong enterprise thesis.

Largest enterprise deployment commitment in the software factory category. Claims 31x faster feature delivery and 96.1% shorter migration times.

Where it wins

Terminal-Bench #1 at 58.75% (beat Claude Code 43.2%, Codex CLI 42.8%)

$50M Series B at $300M valuation — Sequoia, NEA, NVIDIA, J.P. Morgan

Wipro partnership: tens of thousands of engineers — largest enterprise deployment commitment in category

Enterprise customers: MongoDB, EY, Bayer, Zapier, Clari

Danny Aziz (GM of Spiral): 'canceled Claude + ChatGPT Max plans for Droid'

Where to be skeptical

Zero grassroots developer adoption — no significant HN threads, Reddit, or independent reviews

Previously claimed 84.8% SWE-bench score UNVERIFIED by any independent source

Robert Matsuoka: 'great vision, flawed execution, not ready for serious work'

Closed-source, no self-hosting, enterprise pricing only

Only 610 stars — no open-source community path

Similar skills

Aider

91

Open-source AI pair programming CLI. The only tool in the coding CLI category with a fully verifiable, independent download number: 191,828/week PyPI installs, 5.7M lifetime, 15B tokens/week (homepage stat). Multi-model, git-native, no vendor lock-in. v0.86.2 released 2026-02-12.

Claude Code

90

Anthropic's official agentic coding CLI. Terminal-native, tool-use-driven, with deep file system and shell access. #1 SWE-bench Pro standardized (45.89%), ~4% of GitHub public commits (SemiAnalysis), $2.5B annualized revenue (fastest enterprise SaaS to $1B ARR). 8M+ npm weekly downloads. Opus 4.6 with 1M context.

OpenHands

88

Category leader in multi-agent orchestration: 69,352 stars (verified), $18.8M Series A, AMD hardware partnership, 455 contributors, 1M downloads/month PyPI (3.4M all-time). SWE-Bench Verified 72% with Claude 4.5 Extended Thinking (updated 2026-03-19), Multi-SWE-Bench #1 across 8 languages. Gap to #2 is enormous on every axis.

n8n

83

179,860 GitHub stars, the largest OSS repo in the adjacent workflow-automation space by 2×. 3,000+ enterprise customers, ~200,000 active users, $60M Series B. 1,100+ ready-to-use integrations, native AI Agent node, MCP client/server support. Best for orchestrating SaaS integrations and processes with AI nodes, not for building agent systems in code.
