Brave vs Exa: Brave wins on speed (669ms vs ~1,200ms), benchmark score (14.89 vs 14.39), MCP breadth (6 tools vs 1), and free tier (2K queries/month). Exa wins on semantic depth (neural embeddings; people, company, and code verticals), SDK adoption (940K weekly downloads, where Brave is REST-only), HN traction (412 vs 95 pts), and now deep research (Exa Deep, March 2026). Use Brave as the default; switch to Exa when you need meaning-based search, vertical lookups, or deep research.
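The rule of thumb above fits in a few lines of routing logic. This is a minimal sketch, assuming your agent can tag each query with what it needs. `QueryNeeds` and `pick_provider` are hypothetical names, and the returned strings stand in for whatever Brave and Exa client wrappers you use.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class QueryNeeds:
    """Hypothetical description of a query; not part of either vendor's API."""
    semantic: bool = False          # meaning/similarity search rather than keywords
    vertical: Optional[str] = None  # e.g. "people", "company", "code"
    deep_research: bool = False     # multi-step research instead of one lookup


def pick_provider(needs: QueryNeeds) -> str:
    """Brave by default; Exa when the query needs semantics, a vertical, or deep research."""
    if needs.semantic or needs.vertical is not None or needs.deep_research:
        return "exa"
    return "brave"


print(pick_provider(QueryNeeds()))                    # brave
print(pick_provider(QueryNeeds(vertical="company")))  # exa
```

Keeping the decision in one pure function makes the default easy to change if the benchmark picture shifts.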

Search & News

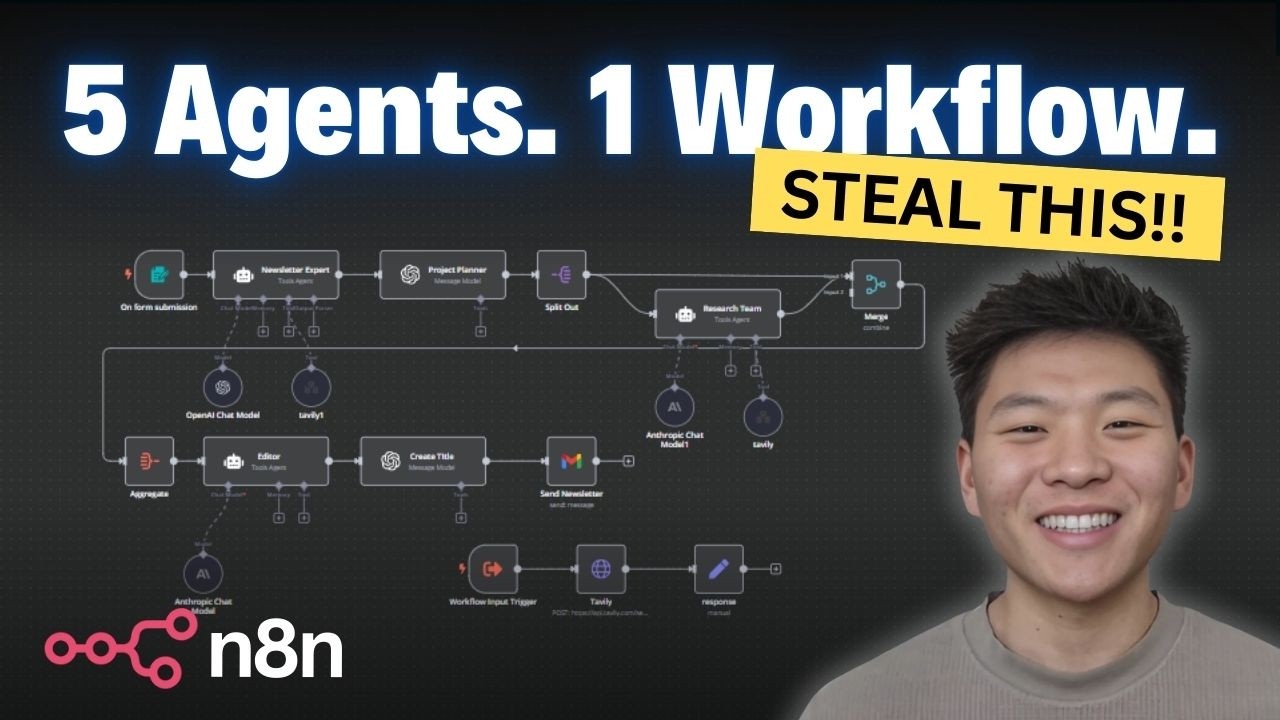

Web search, scraping, and deep research tools for AI agents. The category has split into three lanes: search APIs (Brave, Exa, Tavily), scrape/crawl tools (Firecrawl, Crawl4AI), and deep research APIs (Parallel, Perplexity Sonar). Most serious agent workflows need tools from the first two lanes. MCP support is table stakes; the real differentiators are benchmark quality, latency, index independence, and license. The deep research lane is still immature. On the WideSearch academic benchmark, the best of 10+ agentic systems succeeds on only 5% of broad tasks.
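A typical two-lane workflow searches first and then scrapes each hit into clean text. This is a minimal sketch under stated assumptions. `SearchAPI`, `Scraper` and `gather_context` are hypothetical interfaces, not any vendor's SDK. The fakes let it run offline. Real providers (e.g. Brave for search, Firecrawl or Crawl4AI for scraping) would sit behind the two protocols.

```python
from typing import List, Protocol


class SearchAPI(Protocol):
    """Lane 1 (hypothetical interface): returns candidate URLs for a query."""
    def search(self, query: str, limit: int) -> List[str]: ...


class Scraper(Protocol):
    """Lane 2 (hypothetical interface): turns one URL into LLM-ready text."""
    def scrape(self, url: str) -> str: ...


def gather_context(query: str, searcher: SearchAPI, scraper: Scraper, limit: int = 3) -> List[str]:
    """Search first, then scrape each hit; pages that fail to scrape are skipped."""
    docs: List[str] = []
    for url in searcher.search(query, limit):
        try:
            docs.append(scraper.scrape(url))
        except Exception:
            continue  # one blocked or broken page should not sink the whole query
    return docs


# Stand-in implementations so the sketch runs without network access.
class FakeSearch:
    def search(self, query: str, limit: int) -> List[str]:
        return [f"https://example.com/{i}" for i in range(limit)]


class FakeScraper:
    def scrape(self, url: str) -> str:
        if url.endswith("/1"):
            raise RuntimeError("blocked by anti-bot")
        return f"# Content of {url}"


print(gather_context("agent search APIs", FakeSearch(), FakeScraper()))
```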

18 ranked · 15 signals

Current ranking

Brave Search API

Best for: Default search API for AI agents (fastest, 6-tool MCP server, independent benchmark winner)

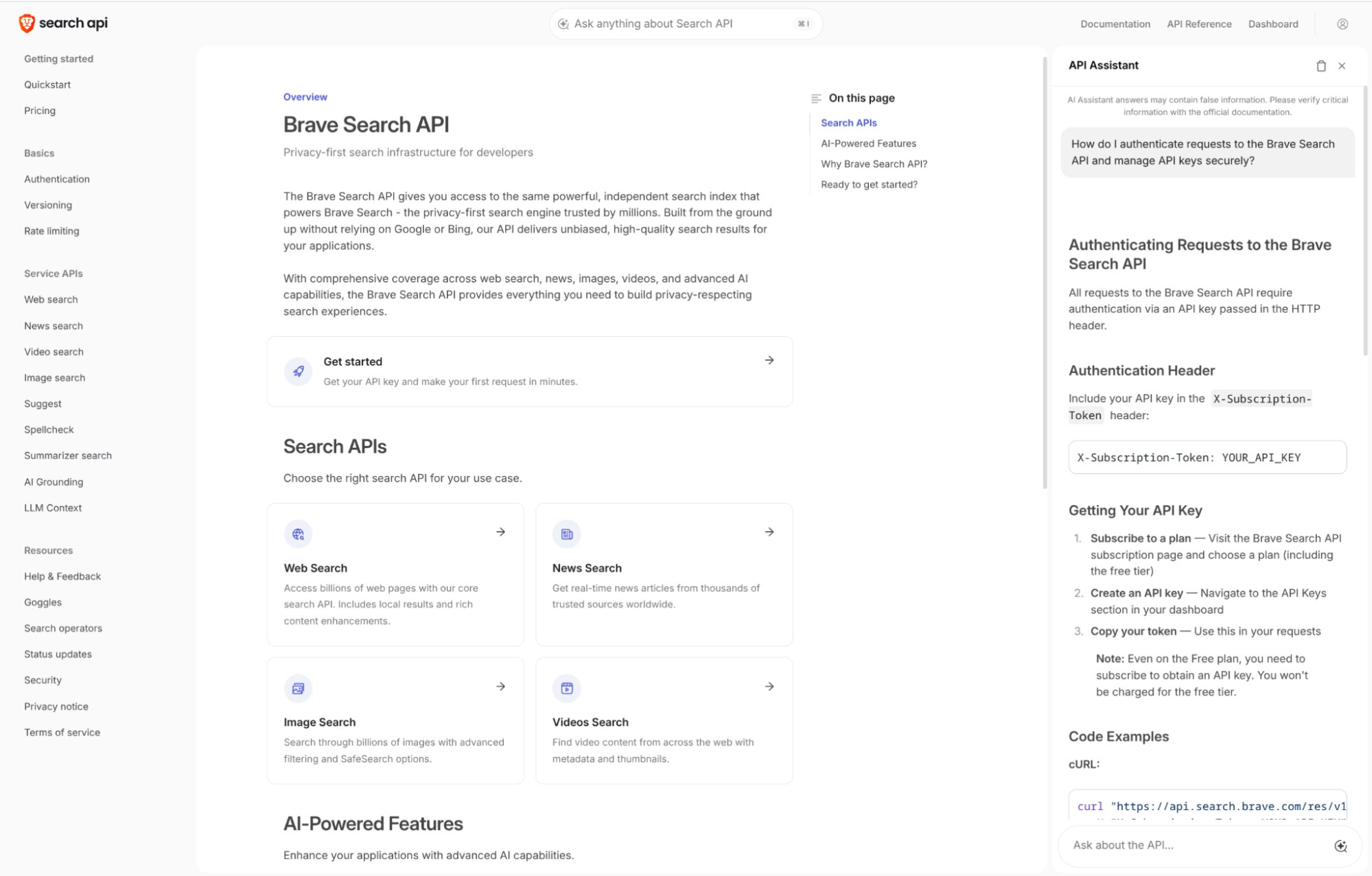

#1 Agent Score (14.89) in AIMultiple 2026, confirmed by Data4AI independently. Fastest latency (669ms). Only independent commercial search API after Bing API shutdown (Aug 2025). Independent index (40B pages). 6-tool MCP server. SOC 2 Type II attested (Oct 2025). 35K+ API customers, 2,700+ paid. Free tier (2K queries/month).
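Brave is a plain REST API, so a standard-library client is enough. This is a minimal sketch. The endpoint, the `X-Subscription-Token` header, the `count` parameter and the `web.results[].title/url` response shape reflect Brave's public docs as we understand them, so verify them against the current API reference. `BRAVE_API_KEY` is an assumed environment variable name.

```python
import json
import os
import urllib.parse
import urllib.request
from typing import List, Tuple

# Assumed from Brave's public docs at the time of writing; verify before use.
BRAVE_WEB_SEARCH = "https://api.search.brave.com/res/v1/web/search"


def build_brave_request(query: str, api_key: str, count: int = 5) -> urllib.request.Request:
    """GET request for Brave web search; the key travels in X-Subscription-Token."""
    params = urllib.parse.urlencode({"q": query, "count": count})
    return urllib.request.Request(
        f"{BRAVE_WEB_SEARCH}?{params}",
        headers={"Accept": "application/json", "X-Subscription-Token": api_key},
    )


def parse_brave_results(payload: dict) -> List[Tuple[str, str]]:
    """Pull (title, url) pairs from web.results, tolerating missing sections."""
    results = payload.get("web", {}).get("results", [])
    return [(r.get("title", ""), r.get("url", "")) for r in results]


def brave_search(query: str, count: int = 5) -> List[Tuple[str, str]]:
    """Network call; expects the (assumed) BRAVE_API_KEY environment variable."""
    req = build_brave_request(query, os.environ["BRAVE_API_KEY"], count)
    with urllib.request.urlopen(req, timeout=10) as resp:
        return parse_brave_results(json.load(resp))
```

Splitting request building and parsing from the network call keeps both testable offline.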

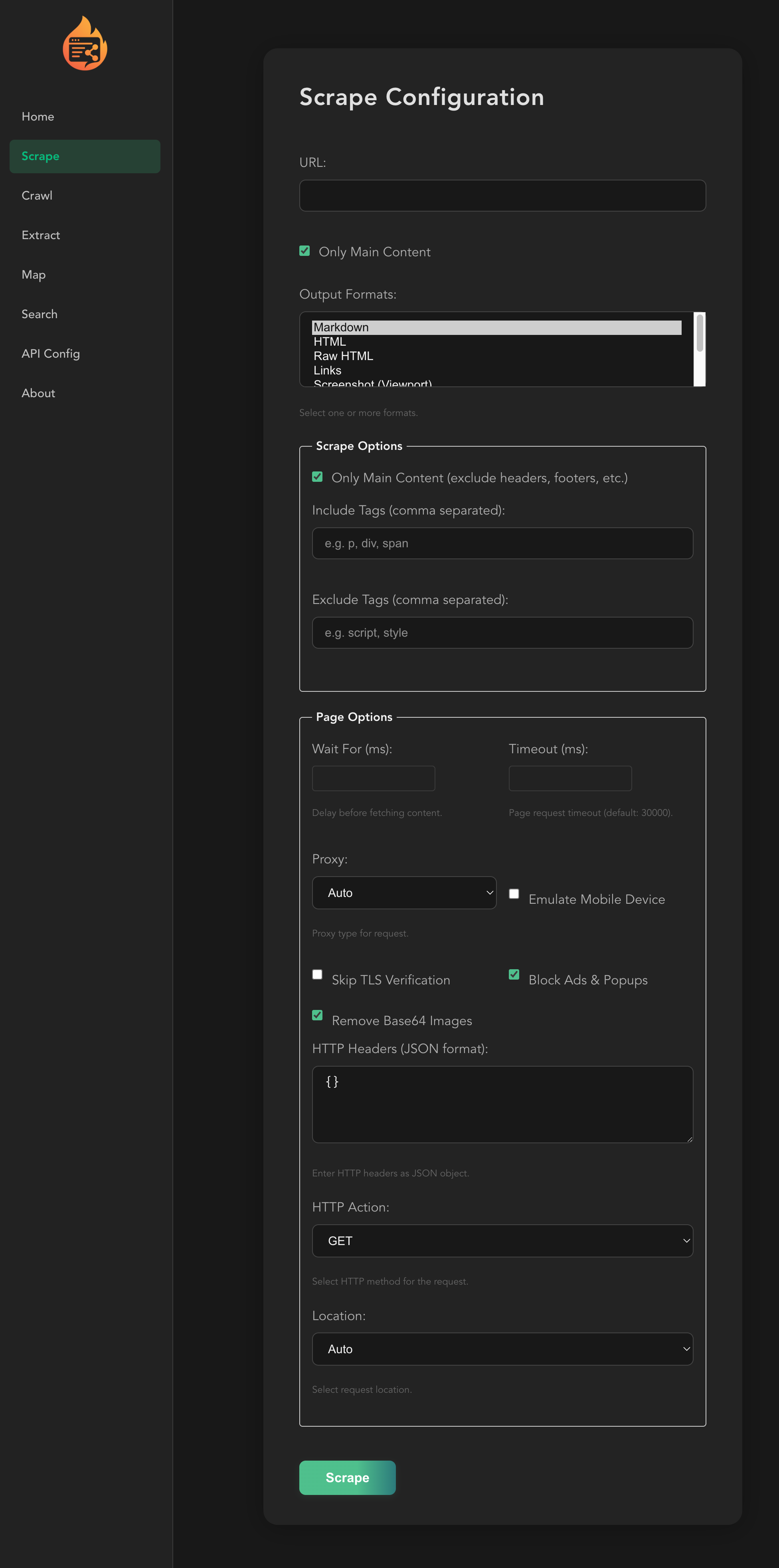

Firecrawl

Best for: Web scraping, structured extraction, and turning messy pages into LLM-ready content; the research/extraction workhorse

95,324 GitHub stars — highest traction in category. Agent Score 14.58 (#2, highest relevance 4.30). 1.23M combined weekly downloads. ScrapeOps 10/10 ('best tool you can get'). MCP server: 5,809 stars (highest in category). Search + scrape + autonomous /agent endpoint — only tool covering all three lanes. FIRE-1 agent, Spark model family, parallel agents. Multi-language SDKs (Python, JS, Go, Rust, Java). 95.3% success rate. Browser MCP: fastest extraction (7s, 83% success).

Exa

Best for: Semantic search, similarity search, and market mapping; strongest where traditional keyword search fails

Agent Score 14.39 (#3), statistically tied with the top tier. Best HN traction in category (412 pts). 940K weekly downloads (670K PyPI + 270K npm). SOC 2 Type II. Enterprise customers: Notion, Cursor, AWS, Databricks. Neural index with people (1B+ LinkedIn profiles), company, and code verticals. Exa Deep (launched March 4, 2026) takes it into the deep research lane at $12-15 per 1K queries. HumAI: 94.9% SimpleQA (highest), 81% complex retrieval. $85M Series B at a $700M valuation, Nvidia-backed.

SearXNG

Best for: Privacy-first, self-hosted metasearch with no API keys, no vendor lock-in, and no cost

26,644 stars, active development (last commit 2026-03-15). Zero cost, zero API keys; aggregates 70+ search engines. Privacy model: queries are forwarded to upstream engines from your own server, without user identifiers or tracking, so no search vendor can profile who asked. Rolling Docker releases. HN: 302 pts + 134 pts. AGPL-3.0.
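A self-hosted SearXNG instance can return JSON to an agent with no API key. This is a minimal sketch with several assumptions. It assumes an instance at `http://localhost:8080` and the `json` format listed under `search.formats` in `settings.yml`, which may not be enabled by default. It also assumes results arrive under `results[]` with `title`, `url` and `content` fields. If the instance's rate limiter is on, plain scripted requests may also be rejected until you adjust it.

```python
import json
import urllib.parse
import urllib.request
from typing import Dict, List

# Assumption: your own instance, with "json" enabled under search.formats in settings.yml.
SEARXNG_BASE = "http://localhost:8080"


def searxng_url(query: str, base: str = SEARXNG_BASE) -> str:
    """Build the /search URL that asks SearXNG for JSON instead of HTML."""
    return f"{base.rstrip('/')}/search?" + urllib.parse.urlencode({"q": query, "format": "json"})


def parse_searxng(payload: dict) -> List[Dict[str, str]]:
    """Keep title, URL, and snippet (SearXNG calls the snippet 'content')."""
    return [
        {"title": r.get("title", ""), "url": r.get("url", ""), "snippet": r.get("content", "")}
        for r in payload.get("results", [])
    ]


def searxng_search(query: str, base: str = SEARXNG_BASE) -> List[Dict[str, str]]:
    """No API key: the only requirement is network access to your own instance."""
    with urllib.request.urlopen(searxng_url(query, base), timeout=10) as resp:
        return parse_searxng(json.load(resp))
```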

Tavily

Best for: LangChain-native workflows where Tavily is the path of least resistance; fastest response time and highest uptime

1.18M weekly downloads (#2, 1.03M PyPI + 155K npm). Default search tool in LangChain. Full platform now: search + extract + crawl + /research endpoint (GA). HumAI: fastest response (187ms), highest uptime (99.94%). Acquired by Nebius for $275-400M — strongest financial runway. Enterprise pricing $0.0002/query at volume.

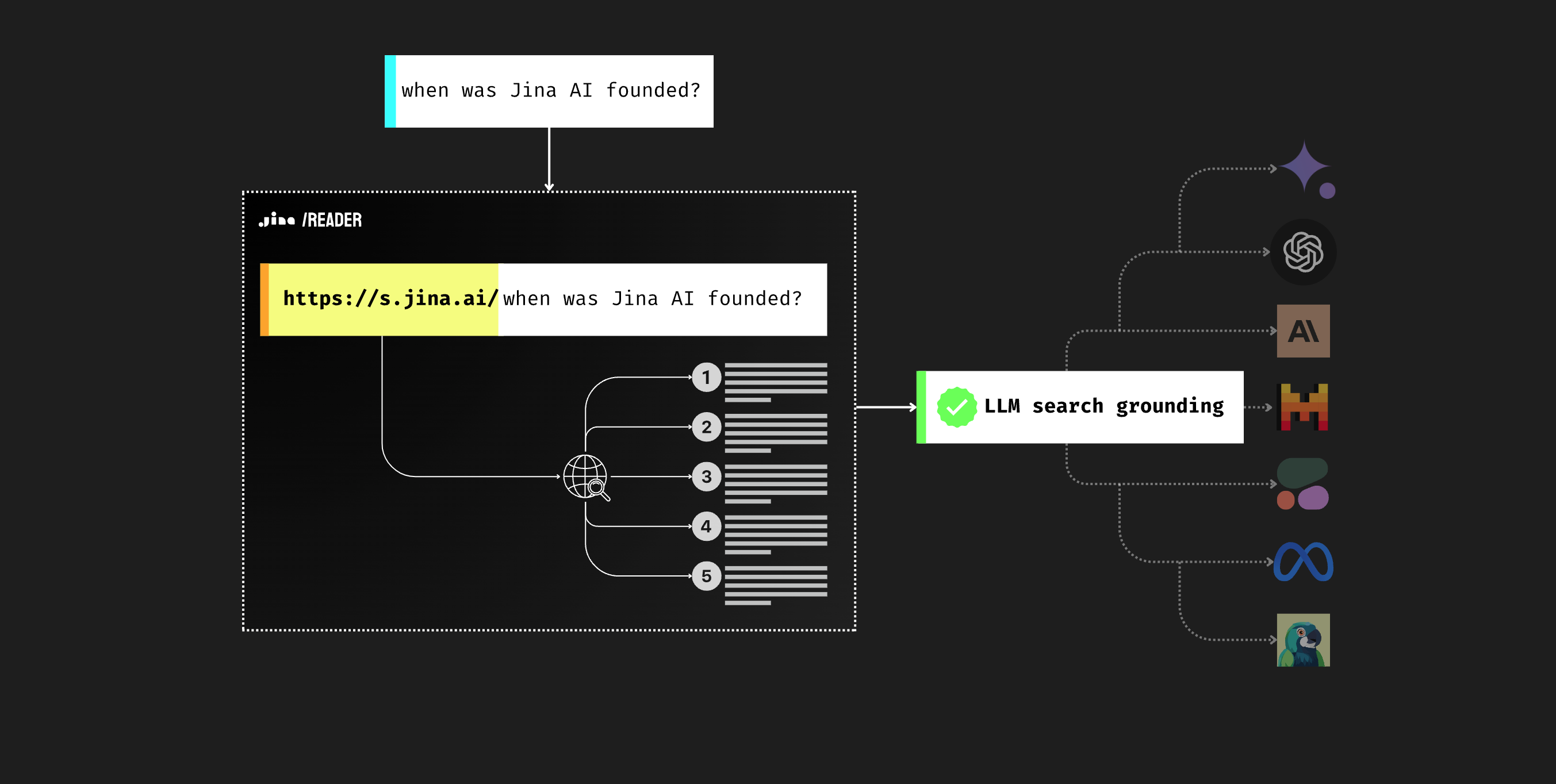

Jina Reader

Best for: Simplest URL-to-markdown conversion (one-line API) with ReaderLM-v2 for local extraction

10,292 stars. ReaderLM-v2 (1.5B SLM, 512K context, 29 languages) presented at ICLR 2025. Hosted API remains active. The r.jina.ai URL-prefix pattern is the simplest possible interface for single-page reads.
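The URL-prefix pattern needs no SDK: prepend `https://r.jina.ai/` to the target URL and fetch it. This is a minimal standard-library sketch. Authentication, rate limits and any optional request headers are deliberately left out, so check Jina's docs before relying on it in production.

```python
import urllib.request

READER_PREFIX = "https://r.jina.ai/"


def reader_url(page_url: str) -> str:
    """The whole interface: put the reader prefix in front of the page URL."""
    return READER_PREFIX + page_url


def read_page(page_url: str, timeout: float = 30.0) -> str:
    """Fetch the reader's text rendering of one page (network call, no auth handling)."""
    with urllib.request.urlopen(reader_url(page_url), timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")
```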

Crawl4AI

Best for: Free, open-source, self-hosted crawling (Apache-2.0, no vendor dependency, full developer control)

62,249 GitHub stars (#2 in category). 6,353 forks, nearly matching Firecrawl's 6,516 despite about a third fewer stars (a sign of heavy developer usage). Apache-2.0 license. Completely free. 384K weekly PyPI downloads. Actively maintained: v0.8.5 released 2026-03-18. ScrapeOps: 'Best open source' (7/10). v0.8.x adds deep-crawl crash recovery, prefetch mode (5-10x faster), and adaptive intelligence.

Parallel

Best for: Deep research where quality matters more than speed

Agent Score 14.21 (#4) — top tier on quality. Self-reported BrowseComp: 48%/58% accuracy vs GPT-4 browsing 1%. $740M valuation (Kleiner Perkins, Index Ventures). Founded by Parag Agrawal (ex-Twitter CEO).

You.com

Best for: OpenAI-native search; the provider OpenAI already uses

OpenAI integrated You.com as core search provider — strongest distribution signal in category. Self-reported: 93% SimpleQA, #1 DeepSearchQA. MCP server launched. 1,000 free API queries/month.

Perplexity Sonar

Best for: Highest raw answer accuracy (87% in HumAI) with citation synthesis

87% accuracy (highest in HumAI). 94% citation quality. Sonar Deep Research for multi-step retrieval. Official MCP server. Citation tokens no longer billed (Feb 2026).

Linkup

Best for: AI-native web search API with sub-second speed and strong angel backing

$10M seed (Feb 2026, Gradient). Angels: Olivier Pomel (Datadog CEO), Arthur Mensch (Mistral CEO). Customers include KPMG, Artisan. /fast endpoint for sub-second search. MCP integrated with Claude Desktop.

Bright Data

Best for: Enterprise scraping behind aggressive anti-bot defenses, with 100% extraction success where others fail

#1 Browser MCP Benchmark (AIMultiple 2026): 100% extraction success, 90% automation, 77% scalability. 2,214 stars. 60+ MCP tools — broadest MCP tooling. Industrial-grade anti-bot (CAPTCHA solving, proxy rotation, geo-unblocking). Free MCP tier. The only option for sites that actively block bots.

Hyperbrowser

Best for: AI-native browser automation with stealth capabilities for Claude Computer Use and OpenAI Computer Use agents

90% browser automation (tied #1 with Bright Data, AIMultiple 2026). Stealth-first: CAPTCHA solving, IP rotation, fingerprint management. Supports Claude + OpenAI Computer Use agents. 63 HN pts on launch. 10,000 concurrent browsers.

ScrapeGraphAI

Best for: LLM-graph-based extraction; describe what you want and the AI builds the extraction pipeline

23,033 GitHub stars. 194 HN pts (strongest in category). Active development v1.74.0 (Mar 15, 2026). arXiv paper Feb 2026. Open-source + hosted API dual model. 'You Only Scrape Once' graph reuse. Apache-2.0 OSS.

Best for: High-stakes knowledge work (finance, economics, medical) — if claims hold

Claims 94% SimpleQA and 79% FreshQA (vs Google 39%). 50+ proprietary data sources (SEC, clinical trials). a16z backed. LangChain integration. DeepSearch v2.0 with tool calling.

Best for: Cheapest Google SERP access ($0.30/1K queries)

3-10x cheaper than alternatives. LangChain integration. 2,500 free searches on signup.

Best for: High-volume scraping performance (Rust-based)

Claims 100K pages/sec, 7x Firecrawl throughput. MIT license. 2,332 stars. Rust-based zero-copy parsing. Cost advantage at scale (~$48/100K pages vs Firecrawl ~$240).
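At the quoted rates, the cost gap is a straight ratio: ~$240 / ~$48 per 100K pages is 5x. This is a minimal sketch of that arithmetic. It assumes linear per-page pricing, which real plans (tiers, credits, minimums) may not follow. The dictionary holds only the approximate figures quoted above.

```python
# Approximate figures quoted above; real plans add tiers, credits, and minimums.
COST_PER_100K_PAGES_USD = {"spider": 48.0, "firecrawl": 240.0}


def crawl_cost(provider: str, pages: int) -> float:
    """Linear estimate: scale the quoted per-100K-page price to `pages`."""
    return COST_PER_100K_PAGES_USD[provider] * pages / 100_000


def cost_multiple(expensive: str, cheap: str) -> float:
    """How many times more `expensive` costs than `cheap` per page."""
    return COST_PER_100K_PAGES_USD[expensive] / COST_PER_100K_PAGES_USD[cheap]


for name in ("spider", "firecrawl"):
    print(name, crawl_cost(name, 1_000_000))  # spider 480.0, then firecrawl 2400.0
print(cost_multiple("firecrawl", "spider"))    # 5.0
```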

Best for: Gemini-native workflows only

Native to Gemini API. 5,000 free prompts/month. Gemini Deep Research preview: 59.2% BrowseComp, 46.4% HLE (highest reported).

Head to head

Brave vs Tavily: the AIMultiple benchmark's ~1pt gap (14.89 vs 13.67) is described as 'meaningful, not random' and was confirmed independently by Data4AI. Brave: independent index (the only one left after the Bing API shutdown), SOC 2. Tavily: full platform (search + extract + /research GA), enterprise pricing ($0.0002/query at volume), 1.18M weekly downloads. Meta Llama Stack now defaults to Brave over Tavily. Brave is stronger on benchmarked search quality; Tavily is evolving into a platform play.

Firecrawl vs Crawl4AI: Firecrawl wins on features (search + scrape + agent), enterprise compliance, multi-language SDKs, benchmark score (14.58), success rate (95.3% vs 89.7%), and ScrapeOps rating (10/10 vs 7/10). Crawl4AI wins on license (Apache-2.0 vs AGPL-3.0), cost (free at any scale), and local LLM support. It also nearly matches Firecrawl on forks (6,353 vs 6,516), a sign of comparable developer usage. Crawl4AI is actively maintained (v0.8.5 released March 18).

Exa vs Tavily: Exa wins on quality (14.39 vs 13.67 Agent Score, 81% vs 71% complex retrieval, 96% vs 85% citations), neural index, and now deep research (Exa Deep). Tavily wins on distribution (1.18M weekly downloads, LangChain default), response time (187ms), and full platform breadth (search + extract + research GA). Exa is the better tool; Tavily is the more convenient one. Both carry strategic risk: Tavily from its Nebius acquisition, Exa from its still-unverified Deep claims.

Firecrawl vs Jina Reader: Firecrawl does everything Jina Reader does, plus search, structured extraction, batch processing, and an agent endpoint. Jina Reader's OSS repo is stale (no commits in 10+ months). Firecrawl is the superset choice for new projects and is 4-5x cheaper at volume.

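SearXNG vs Brave: SearXNG is free, self-hosted, private, and aggregates 70+ engines. Brave offers higher quality (14.89 benchmark), lower latency (669ms), a managed service, and SOC 2. The tradeoff is privacy versus quality. SearXNG is the only option for teams that can't send queries to a third-party API vendor; it still forwards them to upstream engines, but from infrastructure the team controls.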

Parallel vs Perplexity: both serve deep research. Parallel scores 48% on BrowseComp vs Perplexity's 8%. Both are slow (13,600ms vs 11,000ms+). Parallel delivers on depth; Perplexity has higher raw accuracy (87% in HumAI) but truncation issues. Parallel wins on deep research quality if you can tolerate its latency and cost.

Public signals

AIMultiple Agent Score benchmark (2026): 100 queries, GPT-5.2 judge, bootstrap resampling with 10K resamples for 95% CIs. The top 4 (Brave, Firecrawl, Exa, Parallel Pro) are statistically indistinguishable. The Brave-Tavily gap (~1pt) is 'meaningful, not random.'
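Both the top-4 tie and the Brave-Tavily gap come down to whether a bootstrap confidence interval on the score difference includes zero. This is a minimal sketch of one common variant: a paired percentile bootstrap over per-query scores. It assumes both systems were scored on the same queries. AIMultiple's exact procedure may differ (for example, resampling each system separately), and `paired_bootstrap_ci` is a hypothetical helper name.

```python
import random
from typing import Sequence, Tuple


def paired_bootstrap_ci(
    scores_a: Sequence[float],
    scores_b: Sequence[float],
    n_resamples: int = 10_000,
    alpha: float = 0.05,
    seed: int = 0,
) -> Tuple[float, float, float]:
    """Percentile bootstrap CI for mean(a) - mean(b) over the same queries.

    Returns (observed_gap, lower, upper). An interval that excludes 0 suggests
    the gap is not resampling noise; one that straddles 0 means the systems are
    statistically indistinguishable at this confidence level.
    """
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("need two non-empty, paired score lists")
    rng = random.Random(seed)
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    # Resample queries with replacement, keeping each query's (a, b) pair together.
    boot = sorted(
        sum(diffs[rng.randrange(n)] for _ in range(n)) / n for _ in range(n_resamples)
    )
    lower = boot[round(alpha / 2 * n_resamples)]
    upper = boot[min(n_resamples - 1, round((1 - alpha / 2) * n_resamples) - 1)]
    return sum(diffs) / n, lower, upper
```

With 100 queries and the default 10K resamples, this mirrors the setup described above. Pairing matters because some queries are hard for every system, and resampling pairs cancels that shared difficulty out of the gap.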

Tavily acquired by Nebius: Bloomberg-confirmed. API unchanged post-acquisition. No pricing changes yet; watch Q2 for Nebius integration shifts.

Brave Search MCP server: 799 stars, 6 tools, SOC 2 Type II. 35K+ free customers, 2,700+ paid. Microsoft shut down the Bing API (Aug 2025), so Brave is now the only independent commercial search API at scale. Snowflake + AWS integrations.

Firecrawl: 95,324 main repo stars, 5,809 MCP stars (highest in category). 1.23M combined weekly downloads (764K PyPI + 470K npm). ScrapeOps: 'best tool you can get' (10/10). 143 contributors. The AGPL-3.0 license is the main adoption blocker.

AIMultiple Browser MCP benchmark (2026): 8 MCP servers, 4 tasks × 5 runs each, using LangGraph + Claude 3.5 Sonnet. Bright Data: 100% extraction, 90% automation, 77% scalability. Firecrawl: 83% extraction, fastest speed (7s). Hyperbrowser: 90% automation. Exa: 23% extraction (best for search, not scrape).

Exa: 940K weekly downloads (670K PyPI + 270K npm). Exa Deep comes in two tiers: deep ($12/1K, 4-12s) and deep-reasoning ($15/1K, 12-50s). HumAI: 94.9% SimpleQA (highest). MCP server: 4,031 stars, but only 23% extraction success in AIMultiple's browser MCP benchmark; use the SDK directly.

Infrastructure-grade credibility. Exa serves web search to thousands of companies including Cursor. Feb 2026: Exa Fast (sub-500ms) and Exa Deep (agentic) launched.

HumAI independent comparison: Perplexity has the highest accuracy (87%), Tavily is fastest (187ms, 99.94% uptime), and Exa has the best citations (96%). Exa vs Tavily: 81% vs 71% on complex retrieval.

Data4AI: a second independent source confirming the AIMultiple findings. The Brave 14.89 vs Tavily 13.67 gap 'held up across repeated statistical tests,' which strengthens Brave's #1 position.

WideSearch (arXiv preprint from academic researchers): over 10 agentic search systems evaluated, and the best performer succeeds on only 5% of tasks. This tempers ALL 'deep research' claims; the entire deep research lane is immature.

ScrapeOps independent hands-on testing: Firecrawl is 'the best tool you can get' and Crawl4AI is 'best open source.' ScrapeGraphAI tied with Firecrawl. 'Almost all open source tools are a complete waste of time'; only Crawl4AI was rated viable.

Firecrawl vs Crawl4AI comparisons: Capsolver, Bright Data, and Apify all agree on Firecrawl's 95.3% success rate vs Crawl4AI's 89.7%. Crawl4AI wins on license and cost, and is actively maintained (v0.8.5, Mar 18).
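A 5.6-point success gap looks small, but inverted into failures it means Crawl4AI loses roughly 2.2 times as many pages to retry or drop. This is a minimal sketch of that arithmetic. It assumes a single pass with failures independent of the page mix, which real crawls (site-specific blocking, retries) will not match exactly.

```python
def expected_failures(success_rate: float, pages: int) -> float:
    """Pages expected to fail on a single pass, assuming independent failures."""
    return (1.0 - success_rate) * pages


def failure_multiple(rate_better: float, rate_worse: float) -> float:
    """How many times as many pages the lower-success tool loses."""
    return (1.0 - rate_worse) / (1.0 - rate_better)


pages = 10_000
print(round(expected_failures(0.953, pages)))    # Firecrawl: 470
print(round(expected_failures(0.897, pages)))    # Crawl4AI: 1030
print(round(failure_multiple(0.953, 0.897), 2))  # 2.19
```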

Linkup: new contender. Angels include the Datadog and Mistral CEOs. Customers include KPMG. Sub-second /fast endpoint. Below the cut line; too early.

Industrial-grade anti-bot (CAPTCHA, proxies, geo-unblocking). Not a search API — web access infrastructure. Only option for sites with aggressive bot defenses.

Gemini Deep Research: best BrowseComp and HLE scores reported, but it is in preview, locked to the Gemini API, has no MCP server, and lacks independent verification. If it exits preview with MCP support, the deep research lane shifts.

What changes this

If Brave ships a deep research endpoint, it becomes the clear #1 across all lanes.

If Exa Deep gets independent benchmark validation, Exa could leapfrog to #2 overall. Its self-reported claims (March 2026) still need verification.

If Tavily closes the AIMultiple gap, it moves up; Nebius's resources make this plausible, and the next benchmark update will be telling. If pricing changes post-acquisition, it drops.

If Crawl4AI ships a search API or MCP server, it would threaten Firecrawl's all-in-one position with a permissive license.

If WideSearch-style broad research tasks become solvable (>20% success), the deep research lane reshuffles entirely.

Gemini Deep Research API is now in preview (59.2% BrowseComp, 46.4% HLE). If it exits preview with MCP support, deep research lane shifts dramatically.

If You.com's benchmark claims (93% SimpleQA, #1 DeepSearchQA) are independently verified, it moves up the ranking on its OpenAI distribution moat alone.

If Linkup gets independent benchmark validation of its claimed parity, it enters the top 5, with a $10M seed already behind it.

Jina Reader: on track for delisting by mid-2026 if the repo stays inactive. The OSS project is effectively dead (no commits in 10+ months).

A second independent benchmark beyond AIMultiple would add confidence or reveal blind spots in current rankings.