OpenHands vs Factory AI: OpenHands leads with 69K vs 610 stars, is open-source rather than proprietary, has an AMD hardware partnership, and has a community roughly 10x broader. Factory wins on funding ($50M Series B vs $18.8M Series A), holds #1 on Terminal-Bench, and offers a managed service. But Factory’s two contradictory reviews (Every.to vs hyperdev) signal a high-variance UX. The two serve different buyers: self-hosted vs managed.

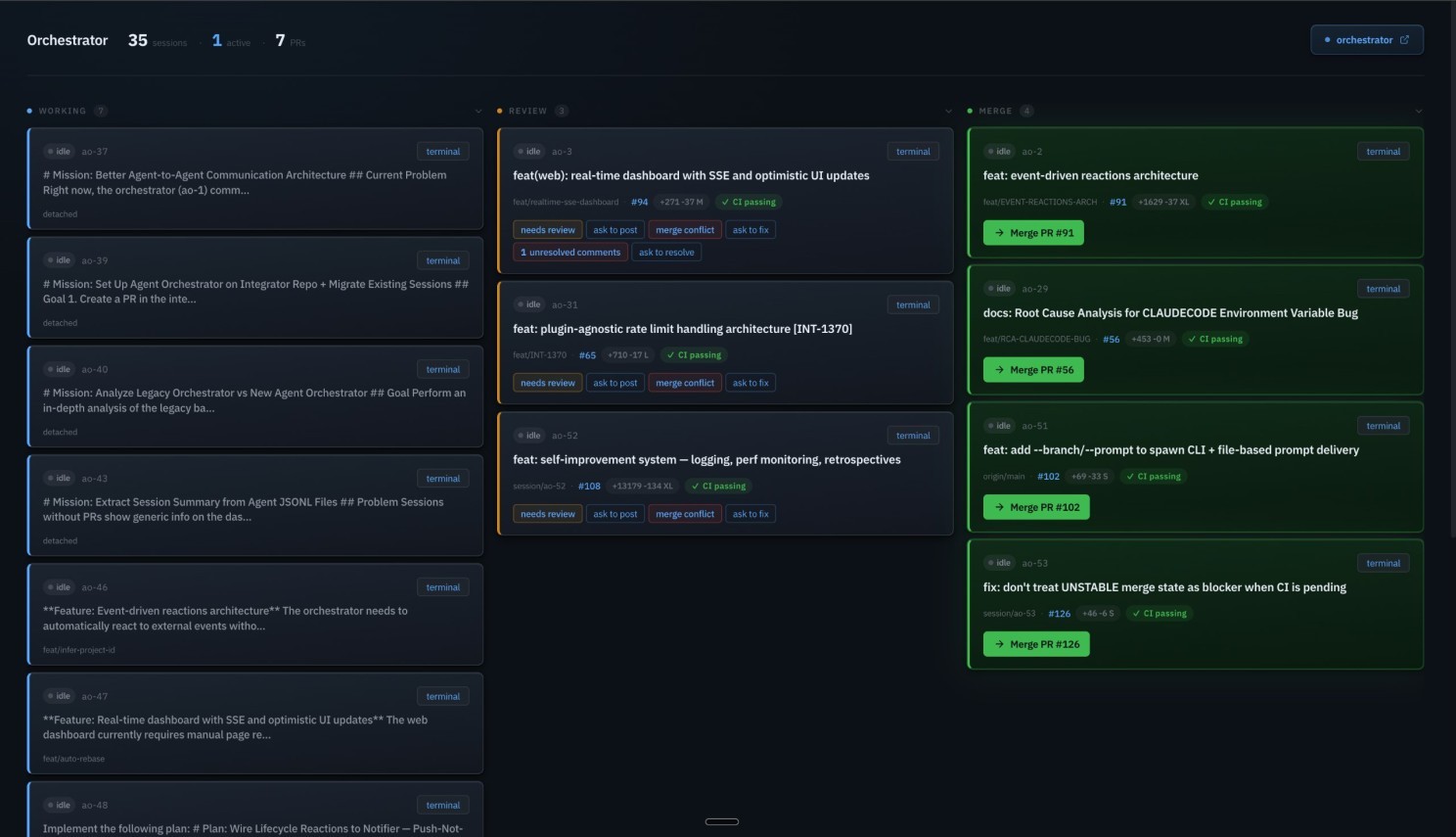

Teams of Agents / Multi-Agent Orchestration

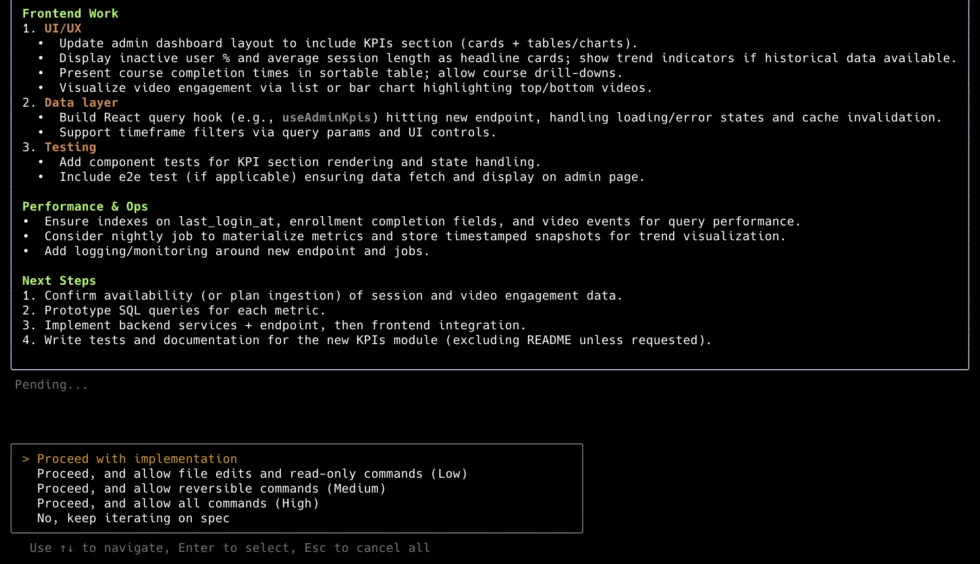

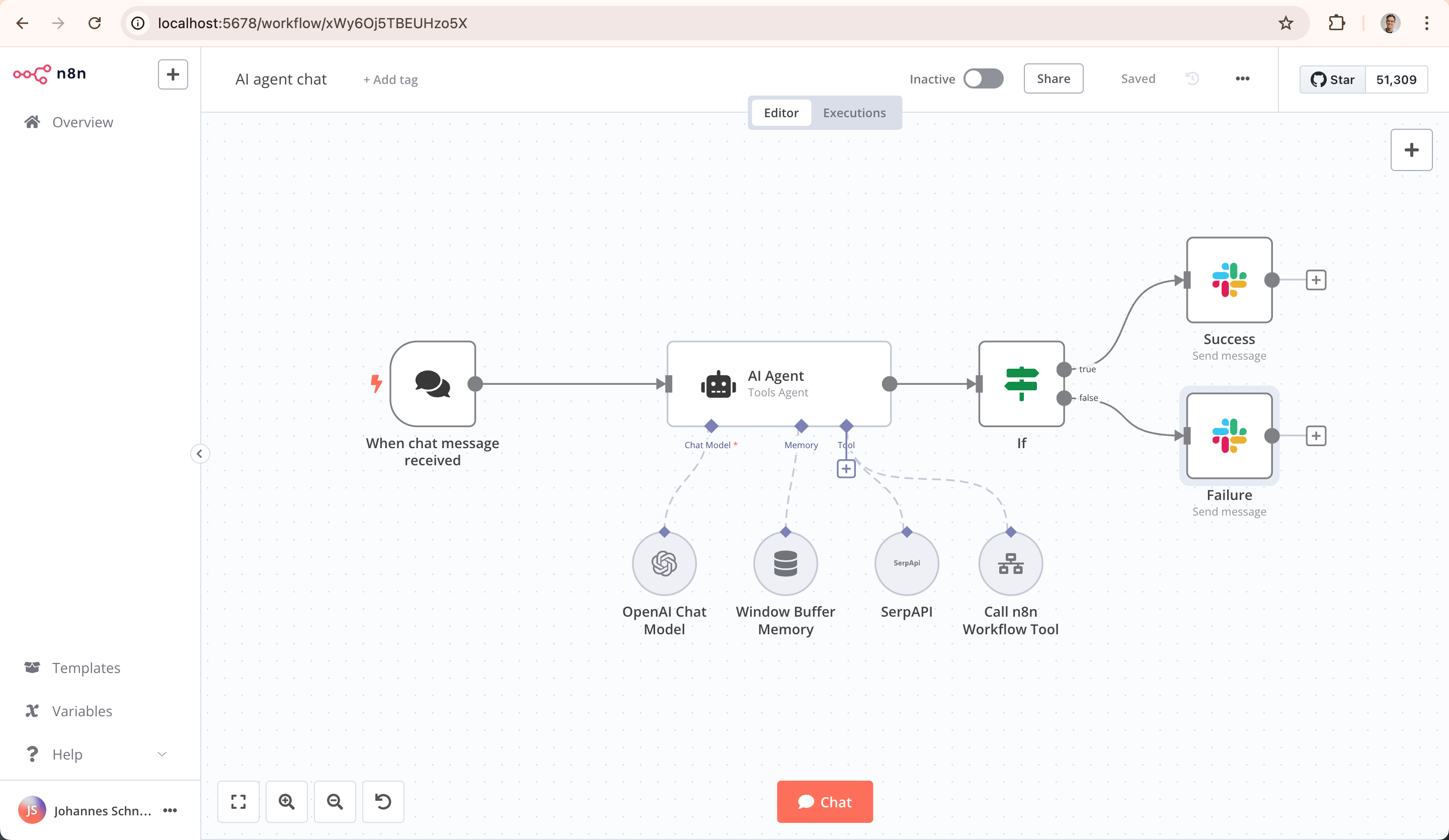

Five distinct segments with almost no cross-over: (1) Python agent frameworks — build multi-agent systems in code (LangGraph #1, OpenAI Agents SDK #2, Pydantic AI #3, CrewAI #4, plus cloud-native: Strands/AWS, ADK/GCP, Semantic Kernel/Azure); (2) TypeScript framework — Mastra (no competitor); (3) Autonomous coding agents — delegate software development to an agent (OpenHands, Factory AI); (4) Parallel agent IDEs — run multiple coding agents simultaneously (Emdash, ccpm, Superset); (5) Workflow automation — orchestrate integrations visually (n8n, Sim Studio). Ranking all on a single list is misleading — each serves a different buyer.

23 ranked, 14 signals

Current ranking

Current ranking

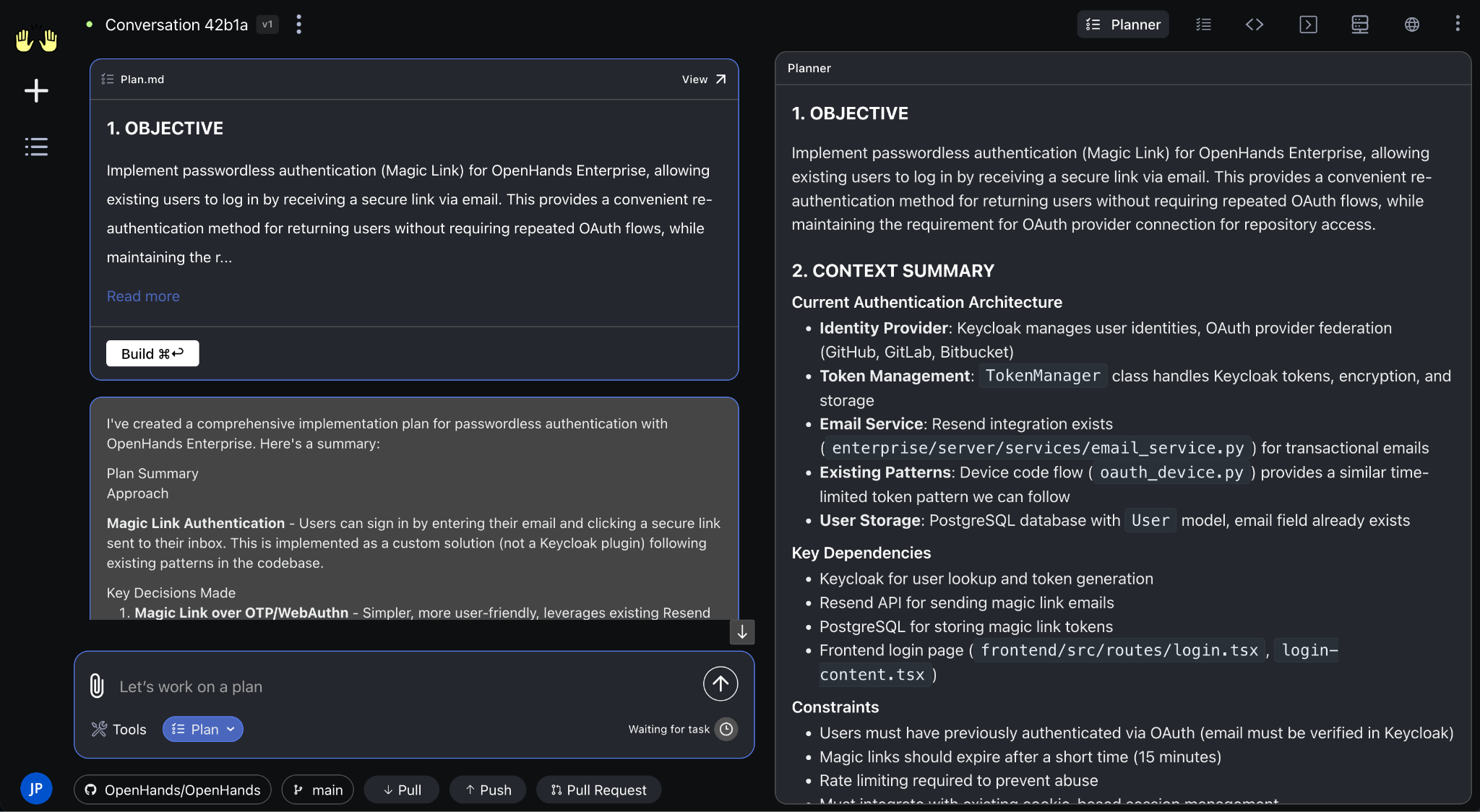

OpenHands

Best for: End-to-end autonomous coding platform: self-hostable, model-agnostic, enterprise-validated

69,425 stars (verified), $18.8M Series A (Madrona, Menlo, Fujitsu), AMD strategic partnership, 455 contributors, SWE-bench Verified 72% with Claude 4.5 Extended Thinking. Gap to #2 is enormous on every axis.

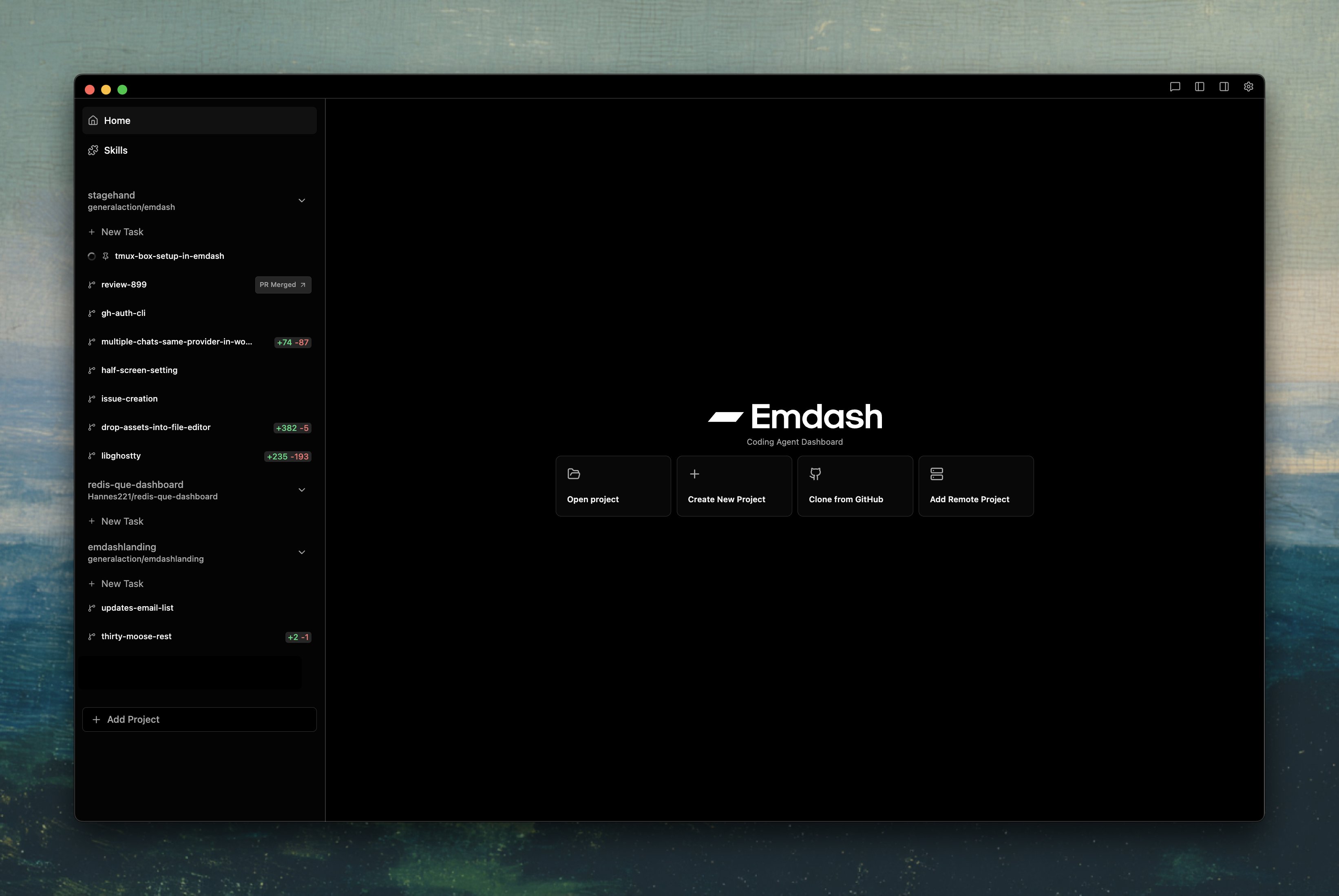

Emdash

Best for: Multi-agent orchestration with Best-of-N comparison and issue-tracker integration (Linear, Jira, GitHub Issues)

Lowest star count in segment but highest evidence quality. YC W26 backing. Best-of-N is a genuinely novel approach. 18+ agent CLI support. 206pts HN / 71 comments. SSH remote, PR creation + CI monitoring.

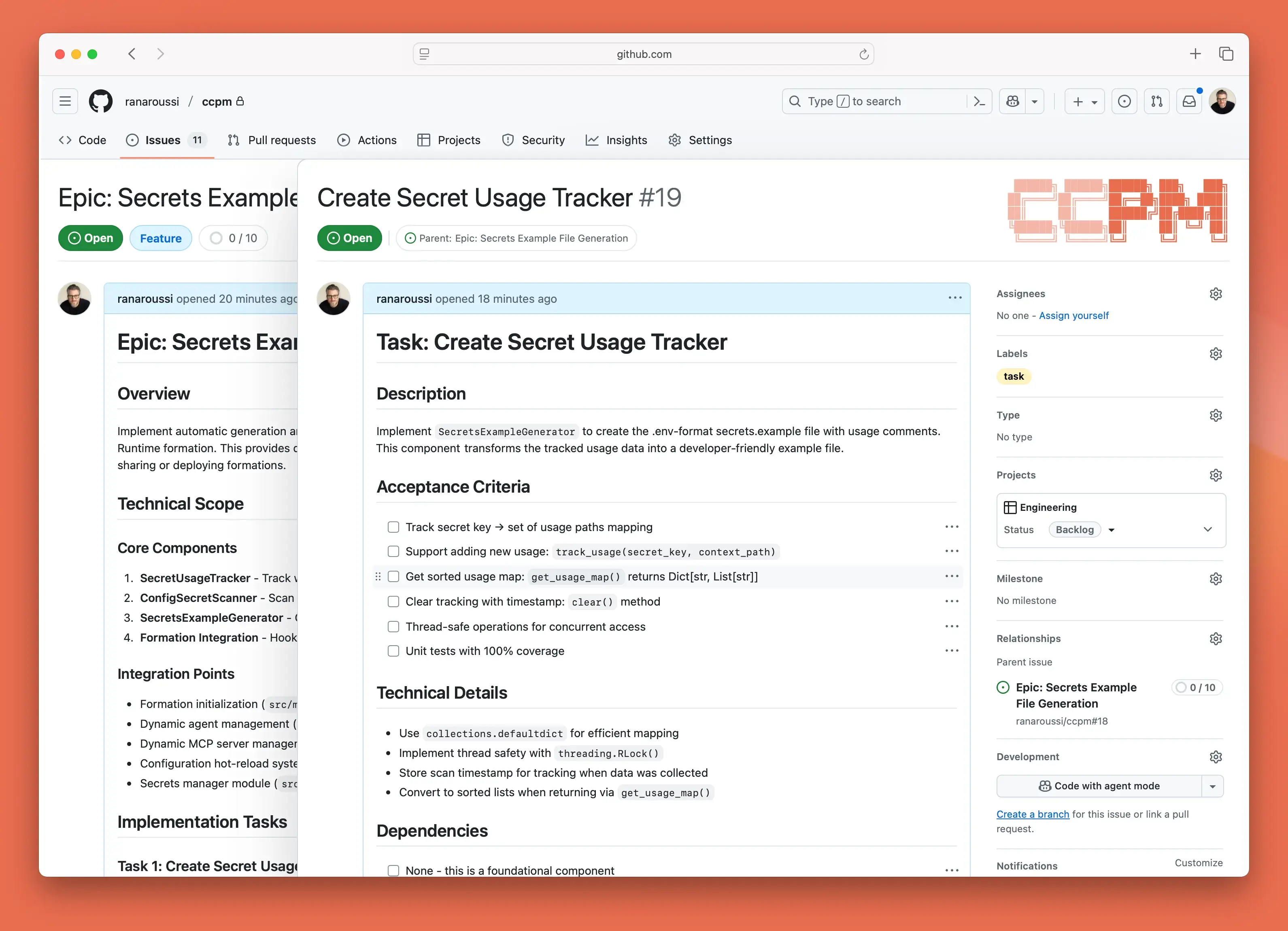

ccpm

Best for: Shell-based parallel agent execution using GitHub Issues + git worktrees; pragmatic, with no unnecessary complexity

7,707 stars. 175 HN pts with 112 comments — deepest community discussion in the parallel IDE segment. Uses existing primitives (GitHub Issues, git worktrees) rather than inventing new orchestration. Only 1 open issue — remarkably lean.
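The worktree-per-issue pattern described above can be sketched in a few lines. This is a minimal sketch, not ccpm’s actual script: the `issue-<n>` branch names, the `../worktrees` directory, and the `worktree_commands`/`create_worktrees` helpers are hypothetical. It relies only on the standard `git worktree add -b <branch> <path> <base>` form, which gives each agent its own checkout while all worktrees share one object store.

```python
from __future__ import annotations

import subprocess
from pathlib import Path


def worktree_commands(issue_numbers: list[int], base: str = "main",
                      root: Path = Path("..") / "worktrees") -> list[list[str]]:
    """Build one `git worktree add` command per issue (hypothetical naming scheme)."""
    commands = []
    for n in issue_numbers:
        branch = f"issue-{n}"     # hypothetical: one branch per GitHub issue
        path = root / branch      # hypothetical: one checkout directory per agent
        commands.append(["git", "worktree", "add", "-b", branch, str(path), base])
    return commands


def create_worktrees(issue_numbers: list[int], dry_run: bool = True) -> list[list[str]]:
    """Print the commands (dry run) or execute them inside the current git repo."""
    commands = worktree_commands(issue_numbers)
    for cmd in commands:
        if dry_run:
            print(" ".join(cmd))
        else:
            subprocess.run(cmd, check=True)  # raises CalledProcessError on failure
    return commands


create_worktrees([12, 15])
```

In practice the issue numbers would come from GitHub Issues (for example via `gh issue list`), each agent would be launched with its working directory set to one worktree, and `git worktree remove <path>` cleans up after the branch merges. Defaulting to `dry_run=True` keeps the sketch safe to run outside a repository.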

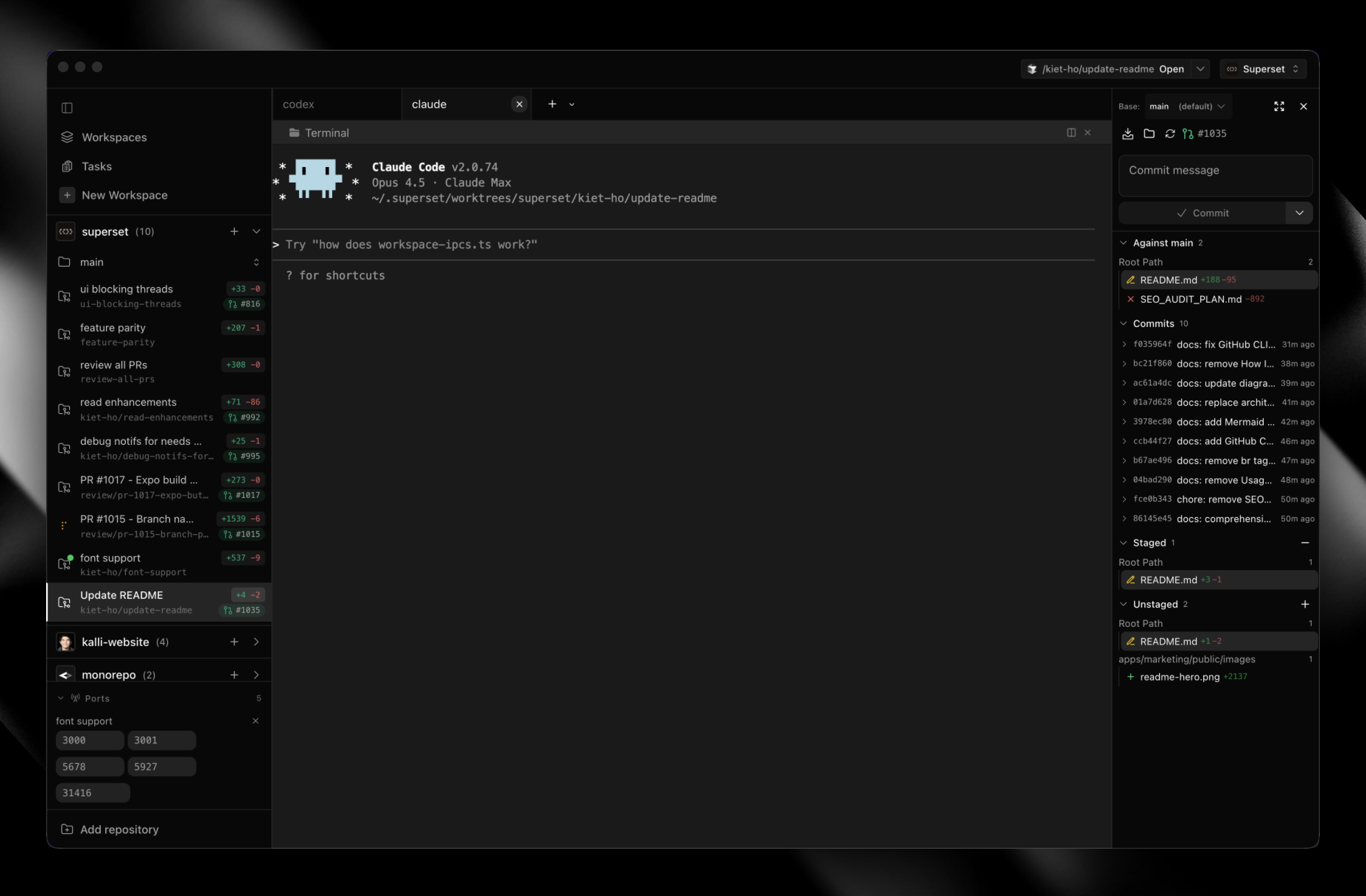

Superset

Best for: Simple, privacy-respecting parallel agent execution for the ‘tmux for agents’ buyer on macOS

7,463 stars, 96pts HN with 90 comments, 512 Product Hunt upvotes, desktop-v1.2.1 (2026-03-18). Apache 2.0, zero telemetry, BYOK. Dogfooded: ‘We use Superset to build Superset.’ Built-in diff viewer, 10+ agent CLIs.

Factory AI

Best for: Enterprise teams needing managed coding agent service with support contracts

$65M total funding ($50M Series B at $300M valuation — NEA, Sequoia, J.P. Morgan, NVIDIA). Enterprise customers: MongoDB, EY, Bayer, Zapier, Clari (Stanford Today, press). Strongest enterprise traction in the coding agent space.

oh-my-claudecode (OMC)

Best for: Claude Code power users wanting swarm/parallel agent features as an extension

10,110 stars in ~10 weeks — extraordinary growth rate. 32 agents, 5 execution modes (including Ultrapilot 3-5x parallel, Swarm), smart model routing. Addy Osmani cited it.

Best for: TypeScript-heavy teams wanting autonomous CI fix and programmatic agent orchestration

4,510 stars, MIT, 30 parallel agents, 40K LoC TypeScript. Autonomous CI fix and merge conflict resolution — unique in category. Dogfooded: 86/102 PRs built by agents.

Best for: Teams prioritizing benchmark performance in multi-agent research tasks (not coding-specific)

GAIA Level 3 #1 (61.5%), DeepSearchQA #1 (87.6%, beat Perplexity by 8.1%). 109pts HN / 69 comments. YC S23. Visual canvas model is differentiated.

Best for: Issue-level repair with strong academic benchmark credibility

18.7K stars, MIT, Princeton NLP. 79.2% SWE-bench Verified with Opus 4.5. Best single-agent issue fixer.

Best for: Loop-pattern reference implementation

Clean loop pattern with Vercel/Anthropic adoption. VentureBeat and The Register coverage.

LangGraph

Best for: Python teams building production multi-agent systems: complex stateful workflows, model-agnostic, enterprise observability

40.8M PyPI/month: the #1 Python framework, 7× CrewAI. DL/star ratio 1,516, the highest production adoption signal. Independently verified Fortune 500 customers: Klarna, Replit, Uber, LinkedIn (Particula.tech). NVIDIA enterprise partnership. ~400 companies on LangGraph Platform. LangSmith best-in-class observability. Checkpointing + state persistence.
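Why checkpointing matters is easiest to see with a toy example. The sketch below is plain Python, not LangGraph’s API (LangGraph attaches a checkpointer to a compiled graph and keys saved state by a thread ID); `InMemoryCheckpointer` and `run` are hypothetical names. It saves state after every completed step, so a run that crashes midway resumes from the last checkpoint instead of repeating finished work.

```python
from __future__ import annotations

import json
from typing import Callable, Dict, List, Tuple

State = Dict[str, object]
Step = Callable[[State], State]


class InMemoryCheckpointer:
    """Hypothetical store mapping thread_id -> (next step index, JSON-serialized state)."""

    def __init__(self) -> None:
        self._saved: Dict[str, Tuple[int, str]] = {}

    def save(self, thread_id: str, next_step: int, state: State) -> None:
        # Serializing copies the state, so later mutation cannot corrupt the checkpoint.
        self._saved[thread_id] = (next_step, json.dumps(state))

    def load(self, thread_id: str) -> Tuple[int, State]:
        if thread_id not in self._saved:
            return 0, {}
        next_step, blob = self._saved[thread_id]
        return next_step, json.loads(blob)


def run(steps: List[Step], thread_id: str, store: InMemoryCheckpointer) -> State:
    """Run steps in order, resuming from the last checkpoint for this thread."""
    start, state = store.load(thread_id)
    for i in range(start, len(steps)):
        state = steps[i](state)
        store.save(thread_id, i + 1, state)  # checkpoint after every completed step
    return state
```

If the second step of a two-step run raises, calling `run` again with the same `thread_id` skips the first step and starts from its saved output. A production store would persist to a database rather than a dict, but the resume logic is the same idea.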

OpenAI Agents SDK

Best for: Teams wanting fast iteration with minimal boilerplate, for simpler agent chains where state persistence isn’t critical

17.9M PyPI/month — 3× CrewAI, closing on LangGraph. Minimalist API (4 primitives: Agents, Handoffs, Guardrails, Tracing) learnable in an afternoon. Now supports 100+ LLMs via Chat Completions API — not locked to OpenAI. Fastest star accumulation (20K in 12 months). TypeScript SDK also available.

Pydantic AI

Best for: Python teams that already use Pydantic and want type-safe agent logic; pairs with LangGraph for orchestration

15.6M PyPI/month: #3 by volume, ahead of CrewAI. DL/star ratio 1,003, indicating massive silent adoption. The Pydantic team’s reputation is an unmatched trust signal. Runtime type enforcement is a genuine differentiator. V1 shipped with Temporal integration + Logfire observability. ZenML: ‘PydanticAI for agent logic, LangGraph for orchestration’ is the emerging production pattern.

CrewAI

Best for: Rapid prototyping of role-based multi-agent systems, and teams that need A2A/MCP protocol support today

5.7M PyPI/month (3× growth in 6 months). Fortune 500: PwC, IBM, DocuSign (Particula.tech). YAML-driven — consistently rated ‘fastest to prototype’ (~40% faster idea-to-demo than LangGraph). Only major framework with native A2A + MCP support (v1.10).

Strands

Best for: AWS-native teams; don’t fight the platform if already on Bedrock

5.5M PyPI/month. DL/star ratio 1,027, second-highest in the category behind only LangGraph’s 1,516 (driven by enterprise CI pipelines). AWS dogfoods it in Kiro, Amazon Q, AWS Glue, and VPC Reachability Analyzer. InfoQ (independent): ‘30min → 45sec, 94% quality improvement, $5M savings.’ 20+ pre-built tools, with MCP support for thousands more. Simplest API: ‘dead simple’ per independent review.

ADK (Google Agent Development Kit)

Best for: GCP-native teams wanting fastest path from prototype to deployed agent

4.4M PyPI/month. Fastest absolute star growth (18.5K in <12 months). Pre-built Workflow agents (Sequential, Parallel, Loop). Most complete DevOps story: built-in evaluation, testing, containerization, deployment. Named customers: Renault Group, Box, Revionics. ZenML: ‘ADK emphasizes velocity, LangGraph emphasizes control.’
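To make the three workflow shapes concrete, here is a plain-asyncio sketch of what Sequential, Parallel, and Loop composition mean. It does not use ADK, which ships its own workflow-agent classes; the `sequential`, `parallel`, and `loop` combinators and the dict-based state are hypothetical, and each "agent" is just an async function from state to state.

```python
from __future__ import annotations

import asyncio
from typing import Awaitable, Callable, Dict

State = Dict[str, object]
Agent = Callable[[State], Awaitable[State]]


def sequential(*agents: Agent) -> Agent:
    """Run agents one after another, threading state through each."""
    async def run(state: State) -> State:
        for agent in agents:
            state = await agent(state)
        return state
    return run


def parallel(*agents: Agent) -> Agent:
    """Run agents concurrently on copies of the same state, then merge their outputs."""
    async def run(state: State) -> State:
        results = await asyncio.gather(*(agent(dict(state)) for agent in agents))
        merged = dict(state)
        for result in results:
            merged.update(result)  # later-listed agents win on key conflicts
        return merged
    return run


def loop(agent: Agent, until: Callable[[State], bool], max_iterations: int = 5) -> Agent:
    """Repeat one agent until a stop condition holds or the iteration cap is hit."""
    async def run(state: State) -> State:
        for _ in range(max_iterations):
            state = await agent(state)
            if until(state):
                break
        return state
    return run
```

For example, `sequential(loop(draft, until=...), parallel(lint, docs))` loops a drafting step until a stop condition holds, then runs two review steps concurrently on the result. Merging parallel outputs with `dict.update` silently resolves key conflicts in argument order, which a real system would need to handle deliberately.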

Semantic Kernel

Best for: .NET/C# enterprise shops on Azure, and teams needing multi-language agent SDKs

27.5K stars. Named customers: KPMG, BMW, Fujitsu (independently corroborated at European AI Summit). 10,000+ orgs on Azure AI Foundry Agent Service. Multi-language (Python, C#, Java) — unique. MCP support shipped v1.28.1. 2.8M PyPI/month (Python only).

Agno

Best for: Full-stack agent platform (agents + teams + workflows + AgentOS control plane)

38.8K stars (top 3 in category). $5.4M funding. Full-stack offering with AgentOS control plane. Active development.

AutoGen

Best for: Legacy; teams on AutoGen should plan migration to Microsoft Agent Framework

55.9K stars (2nd highest in category, legacy). Microsoft has officially placed AutoGen in maintenance mode, with bug fixes and security patches only (VentureBeat 2026-02-19). AutoGen and Semantic Kernel are merging into Microsoft Agent Framework (GA ~Q2 2026).

smolagents

Best for: Research/experimentation with the code-as-actions paradigm; NOT for production

26,160 stars (HuggingFace brand + ‘Open Deep Research’ virality, 395 HN pts). CodeAgent paradigm is genuinely differentiated. 456K PyPI/month.

Mastra

Best for: TypeScript/JavaScript teams; the default choice, with no comparable competitor

2.0M npm/month — only JS-native framework at this scale. 22,144 stars; 442-pt HN Show HN + 213-pt v1.0 launch. $13M YC W25 (Paul Graham, Guillermo Rauch, Amjad Masad). Named customers: Replit, PayPal, Adobe. Vercel AI SDK integration.

n8n

Best for: Orchestrating SaaS integrations and processes visually with AI nodes; NOT for building agent systems in code

180K stars. $40M ARR, 3,000+ enterprise customers (Vodafone, Delivery Hero, Microsoft). $180M total funding. 1,100+ integrations. Native AI Agent node + MCP. Unassailable.

Sim Studio

Best for: Teams needing true Apache-2.0 open-source workflow automation (the n8n alternative)

27K stars in ~4 months. 240 HN pts, 61 comments (‘Apache-2.0 n8n alternative’). $7M Series A. Apache-2.0 license differentiator vs n8n’s ‘fair-code’ Sustainable Use License.

Head to head

Emdash vs Superset: Emdash has a Tier 1 rating (vs Superset’s Tier 2) in an independent classification, the Best-of-N feature, 22+ agents, and Linear/Jira/GitHub Issues integration. Superset has 2.7x more stars, more HN discussion (90 comments), an Apache 2.0 license, and zero telemetry. Emdash suits teams with issue trackers; Superset suits individuals wanting tmux-for-agents.

OpenHands vs Emdash: different lanes entirely. OpenHands is a full autonomous platform: you delegate whole tasks. Emdash is an orchestration layer: you supervise parallel agents yourself. Platform vs multiplexer.

oh-my-claudecode (OMC) vs Emdash: OMC has 3.7x more stars and the fastest growth, but is Claude Code-only. Emdash supports 22+ agents and holds a Tier 1 rating in Ry Walker’s independent classification. If Claude Code remains dominant, OMC’s lock-in is a strength; if the market fragments, Emdash’s agent-agnostic approach wins.

Public signals

LangGraph: 40.8M downloads/month, 7× CrewAI. DL/star ratio 1,516, the highest production adoption signal. Klarna, Replit, Uber, LinkedIn independently verified (Particula.tech). NVIDIA enterprise partnership confirmed. ~400 companies on LangGraph Platform. LangSmith best-in-class observability.

OpenAI Agents SDK: downloads exploded to 17.9M/mo, 3× CrewAI and closing on LangGraph. No longer OpenAI-locked: supports 100+ LLMs via Chat Completions API. Minimalist API (Agents, Handoffs, Guardrails, Tracing). Fastest star accumulation: 20K in 12 months. TypeScript SDK also available.

Pydantic AI: 15.6M downloads/month, #3 by volume and ahead of CrewAI. DL/star ratio 1,003, indicating massive silent adoption. The Pydantic team’s reputation is a strong trust signal. Runtime type enforcement is unique. ZenML: ‘PydanticAI for agent logic, LangGraph for orchestration’ is the 2026 production pattern.

CrewAI: 5.7M PyPI downloads/month. 46.6K stars (highest of the pure agent frameworks), but a DL/star ratio of 123 vs LangGraph’s 1,516. Only major framework with native A2A + MCP support (v1.10). ~40% faster to prototype than LangGraph. Named customers: DocuSign, IBM, PwC.
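The DL/star ratio used throughout is simple arithmetic: monthly package downloads divided by GitHub stars. A minimal sketch using the rounded figures quoted in this section (the helper names are illustrative only):

```python
def dl_per_star(monthly_downloads: float, stars: float) -> float:
    """Monthly package downloads divided by GitHub stars."""
    return monthly_downloads / stars


def implied_stars(monthly_downloads: float, ratio: float) -> float:
    """Star count implied by a reported DL/star ratio."""
    return monthly_downloads / ratio


crewai_ratio = dl_per_star(5.7e6, 46_600)        # about 122.3 with these rounded inputs
langgraph_stars = implied_stars(40.8e6, 1_516)   # about 26.9K stars
print(f"CrewAI DL/star: {crewai_ratio:.0f}")
print(f"LangGraph implied stars: {langgraph_stars:,.0f}")
```

With the rounded inputs CrewAI comes out at about 122; the 123 above likely reflects unrounded source figures. Inverting the ratio shows that LangGraph’s 1,516 at 40.8M downloads/month implies roughly 26.9K stars. As the Strands entry notes, CI pipelines inflate downloads, so a high ratio partly reflects automated installs rather than distinct users.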

Strands: DL/star ratio 1,027, second-highest in the category behind only LangGraph’s 1,516 (driven by enterprise CI pipelines). AWS dogfoods it in Kiro, Amazon Q, Glue, and VPC Reachability Analyzer. InfoQ (independent): ‘30min→45sec, 94% quality improvement, $5M savings.’ Near-zero HN traction despite massive downloads.

Semantic Kernel: strongest named customer list in the entire category (KPMG, BMW, Fujitsu, independently corroborated at European AI Summit). 10,000+ orgs on Azure AI Foundry Agent Service. Multi-language (Python, C#, Java). .NET-first; Python is secondary.

Mastra: the only serious JS/TS framework at scale, with 2.0M npm/month and no comparable competitor. Two strong HN launches (442pts + 213pts). $13M YC W25. Named customers: Replit, PayPal, Adobe.

OpenHands: 69,425 stars (verified). $18.8M Series A (Madrona, Menlo, Fujitsu). AMD strategic partnership. SWE-bench Verified 72% with Claude 4.5 Extended Thinking. Multi-SWE-bench #1 (8 languages). Gap to #2 is enormous.

Emdash: Best-of-N (run multiple agents on the same task, ship the best diff). Issue tracker integration (Linear, Jira, GitHub Issues; unique). 18+ agents. SSH remote, PR creation + CI monitoring. YC W26. Lowest stars but highest evidence quality.
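Best-of-N reduces to three steps: fan the same task out to N agents, score each resulting diff, keep the winner. The sketch below assumes a hypothetical scoring heuristic (test pass rate first, smaller diff as tiebreaker) and a caller-supplied `run_agent` function; Emdash may present the diffs for a human to compare rather than scoring them automatically, and none of these names come from it.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Sequence, Tuple


@dataclass(frozen=True)
class Candidate:
    agent: str          # hypothetical: name of the agent CLI that produced the diff
    diff: str           # unified diff proposed by that agent
    tests_passed: int
    tests_total: int


def changed_lines(diff: str) -> int:
    """Count added/removed lines (approximate: '+++'/'---' lines are treated as headers)."""
    return sum(
        1
        for line in diff.splitlines()
        if line.startswith(("+", "-")) and not line.startswith(("+++", "---"))
    )


def score(c: Candidate) -> Tuple[float, int]:
    # Hypothetical heuristic: highest test pass rate wins, smaller diff breaks ties.
    pass_rate = c.tests_passed / c.tests_total if c.tests_total else 0.0
    return (pass_rate, -changed_lines(c.diff))


def best_of_n(run_agent: Callable[[str, str], Candidate],
              agents: Sequence[str], task: str) -> Candidate:
    """Run every agent on the same task and keep the highest-scoring diff."""
    candidates = [run_agent(agent, task) for agent in agents]
    return max(candidates, key=score)
```

A real tool would run the candidates concurrently and isolate each one (for example in its own git worktree); the selection step itself is just a `max` over a score.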

ccpm: 112 HN comments, the deepest community discussion in the parallel IDE segment. Shell-based, using GitHub Issues + git worktrees. Only 1 open issue: remarkably lean. Pragmatic approach over complexity.

Sim Studio: the only credible open-source alternative to n8n. Apache-2.0 license differentiator vs n8n’s ‘fair-code’ license. 27K stars in ~4 months. Revenue/enterprise evidence is thin; too early to challenge n8n.

Agno: top 3 by stars but 3rd most inflated DL/star ratio. All HN submissions appear to come from the same user (likely the founder). Performance claims (10,000x faster) are single-source and unverified. No independently confirmed enterprise customers.

oh-my-openagent: 41.5K stars in 3.5 months but zero HN stories >10 pts, the most suspicious growth pattern in the analysis. Custom SUL-1.0 license. A plugin ecosystem exists, but the star-to-discourse gap is too wide to rank it. It would rank above Emdash only once HN or equivalent discourse materializes.

AutoGen: per VentureBeat (2026-02-19), Microsoft officially confirmed bug fixes and security patches only, with no new features. 399K PyPI/month (7% of CrewAI). Last release python-v0.7.5 (2025-09-30). Replaced by Microsoft Agent Framework (RC 2026-02-19, GA ~Q2 2026), which combines AutoGen + Semantic Kernel.

What changes this

OpenAI Agents SDK ships checkpointing → jumps to #1 Python framework. LangGraph’s primary moat is state persistence.

LangGraph adds native A2A + MCP → eliminates CrewAI’s last differentiator. CrewAI drops further.

Microsoft Agent Framework hits GA → AutoGen drops off entirely. Semantic Kernel may fold into the same entry. The new combined entry enters the top 5.

Pydantic AI + LangGraph becomes official pattern → Pydantic AI rises to #2 as the standard agent-logic layer.

Independent Agno enterprise case study surfaces → Agno jumps from #8 to #5-6. Currently held back by evidence quality only.

A2A protocol reaches broad adoption → frameworks without A2A support (LangGraph, OpenAI SDK) face pressure to add it or lose ground.

Strands breaks out of AWS ecosystem → multi-cloud Strands would immediately challenge for #3 based on DL/star ratio.

CVE in another code-execution framework → validates smolagents security concerns as category-wide.

oh-my-openagent gets HN validation → displaces Emdash as #1 parallel IDE. Star count would finally match discourse.

If Claude Code Agent Teams exits experimental, it directly compresses oh-my-claudecode and partially compresses Emdash/Superset/ccpm.

If Emdash crosses 10K stars or ships v1.0, it solidifies its position as the #1 parallel IDE. Its star gap to ccpm/Superset is narrowing.

If Superset ships Linux/Windows support, it eliminates its biggest limitation and could challenge for #2 parallel IDE.