Copilot: 15M devs, 12K orgs, 564 HN pts, proven track record. Cursor: $2B ARR, event-driven triggers, 7M MAU but 7 HN pts on Automations. Copilot wins on distribution and trust; Cursor wins on autonomy model. Copilot for now; Cursor could challenge within 6 months.

Software Factories

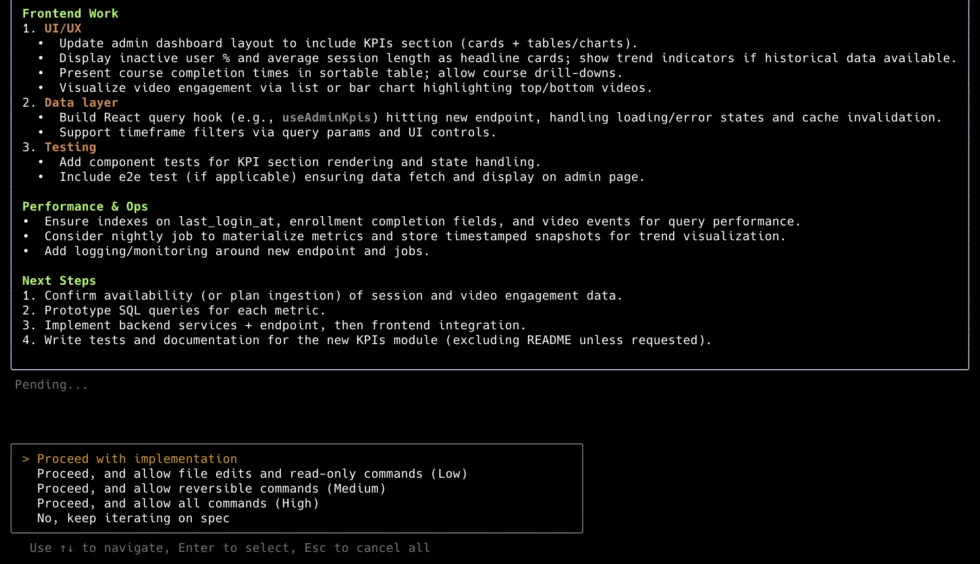

Autonomous coding agents that plan, write, test, and ship code with minimal human oversight. Claude Code leads on benchmarks, community signal, and platform distribution (Apple Xcode). Cursor leads on revenue and event-driven automation. Gemini CLI is the free-tier disruptor. The category has split into four groups: CLI-first (Claude Code, Codex CLI, Gemini CLI), IDE-integrated (Cursor, Copilot, Cline), open-source (OpenHands), and enterprise-managed (Augment, Factory). SWE-bench Verified is no longer a credible yardstick now that frontier models have trained on its solutions; SWE-bench Pro is the new standard.

18 ranked, 12 signals

Current ranking

Best for: Developers who want the most capable coding agent — complex multi-file refactors, greenfield projects, long-running autonomous execution

80,078 stars, 51 commits/month, v2.1.80 (2026-03-19). SWE-bench Pro 49.8% (custom scaffolding) — top tier. SWE-bench Verified 80.9% (Opus 4.5) — #1 overall. HN: 2,127 pts top story; 16,000+ pts across top-20 stories — more than all competitors combined. 46% 'most loved' (morphllm survey) vs Cursor 19%, Copilot 9%. Apple Xcode 26.3 native integration (2026-02-26). ~$2.5B run-rate (unconfirmed).

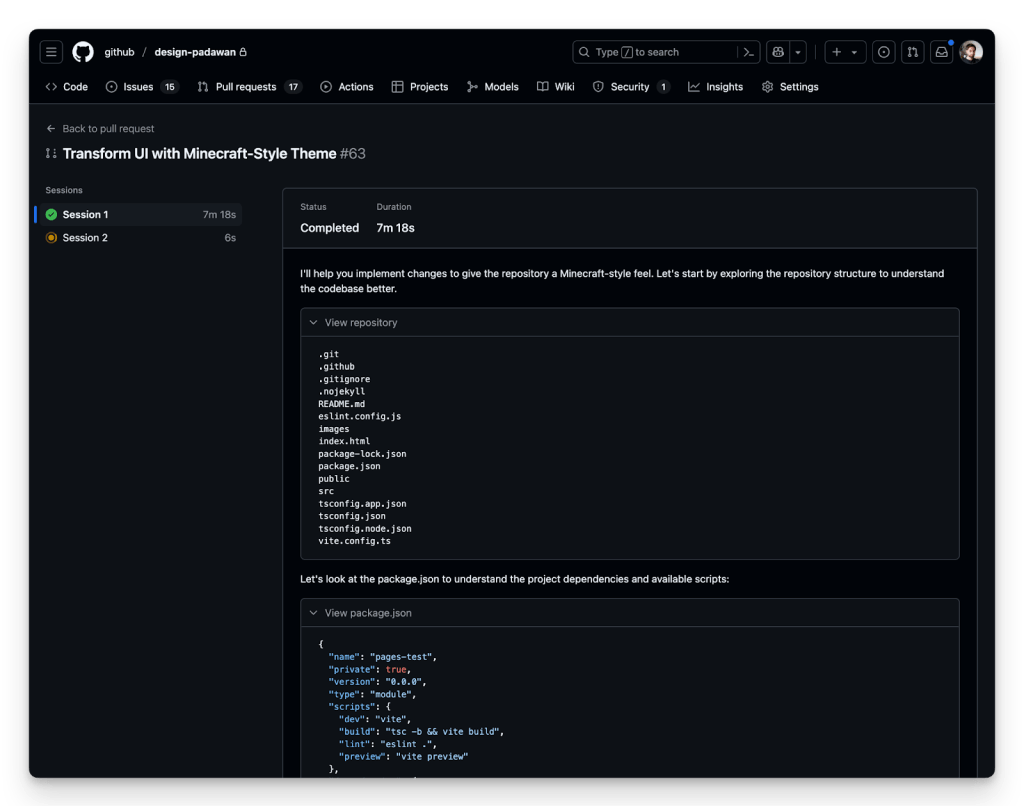

Best for: Teams that want an all-in-one IDE with autonomous background agents AND event-driven automation (Slack → agent writes PR → human reviews)

$2B ARR, doubled in 90 days (Bloomberg, 2026-03-02); 1M+ DAU; $29.3B valuation. SWE-bench Pro 50.2% (custom), marginally ahead of Claude Code's 49.8%. Automations: event-driven triggers (Slack, PagerDuty, Linear, webhooks, cron) launched 2026-03-05. 35% of Cursor's own PRs merged by agents. Enterprise is 60% of revenue. Named customers: OpenAI, Midjourney, Perplexity, Shopify.
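The event-driven flow described above (an external event arrives, an agent drafts a PR, a human reviews it) can be sketched as a small router. This is a minimal illustration only: `Event`, `DraftPR`, `TriggerRouter`, and `slack_handler` are hypothetical names invented for this sketch and do not reflect Cursor's actual Automations API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Event:
    source: str   # hypothetical source label, e.g. "slack", "pagerduty", "cron"
    payload: str  # free-text description of what happened


@dataclass
class DraftPR:
    title: str
    # Every agent-written PR starts in a state that requires human review.
    status: str = "awaiting_human_review"


class TriggerRouter:
    """Maps event sources to handlers that produce draft PRs (hypothetical)."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[[Event], DraftPR]] = {}

    def on(self, source: str, handler: Callable[[Event], DraftPR]) -> None:
        self._handlers[source] = handler

    def dispatch(self, event: Event) -> Optional[DraftPR]:
        # Events from sources with no registered handler are ignored.
        handler = self._handlers.get(event.source)
        return handler(event) if handler else None


def slack_handler(event: Event) -> DraftPR:
    # Stand-in for the step where an agent would actually write code.
    return DraftPR(title=f"Fix requested in Slack: {event.payload}")


router = TriggerRouter()
router.on("slack", slack_handler)
pr = router.dispatch(Event(source="slack", payload="login page returns 500"))
```

The design point is that the agent never merges on its own: the handler only returns a draft, and the review state is the default.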

Best for: Budget-conscious developers, Google Cloud/Android shops, and anyone wanting a capable free coding CLI

98,380 stars (#1 in category). SWE-bench Verified 80.6% (Gemini 3.1 Pro) — near Claude Code. SWE-bench Pro 43.3% (Gemini 3 Pro SEAL). Free tier: 1,000 req/day. HN: 1,428 pts top story. 709 commits/month — very active.

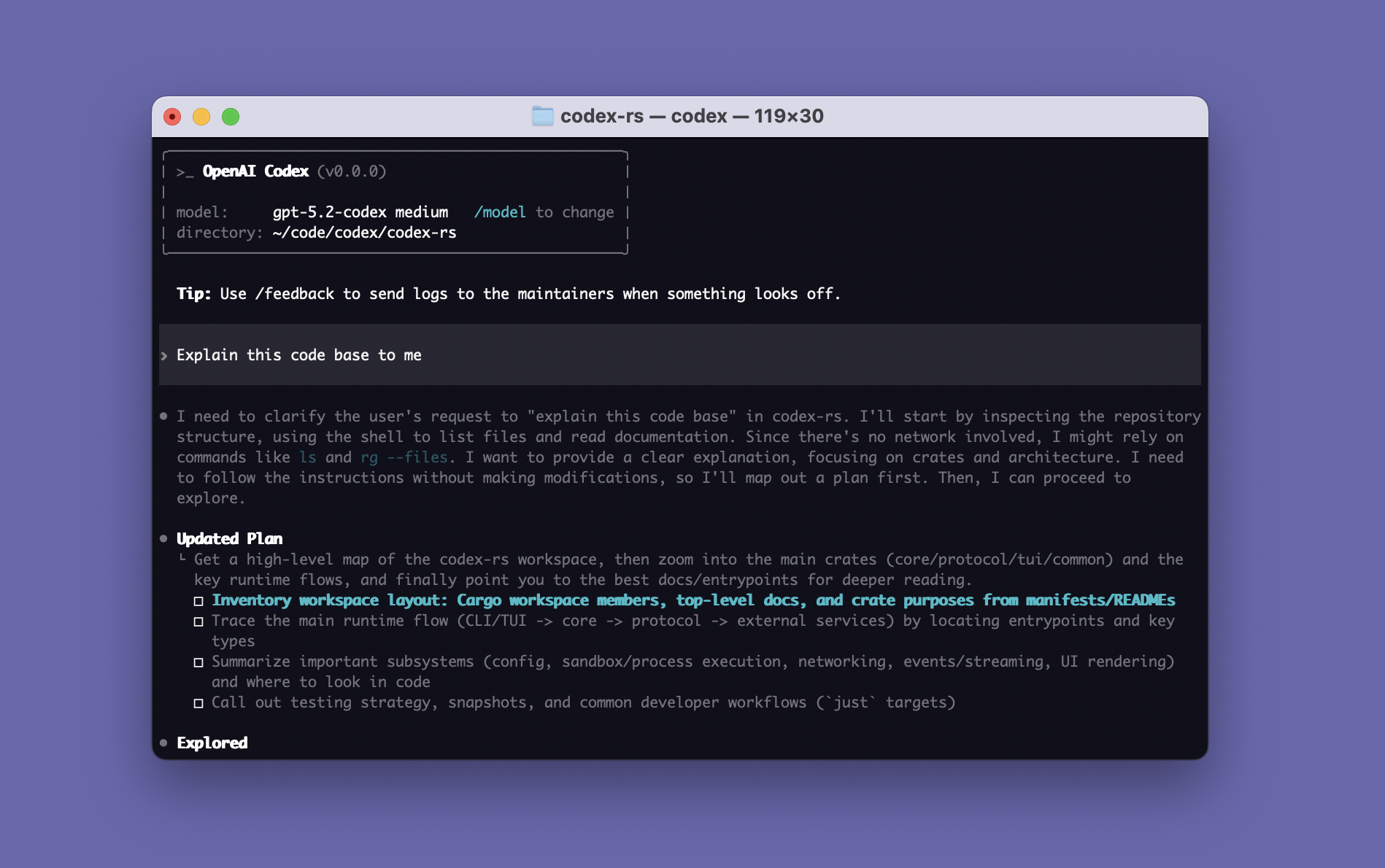

Best for: Developers in the OpenAI ecosystem wanting a fast, token-efficient coding CLI with strong benchmark scores

66,359 stars. SWE-bench Pro 57.0% (custom) — #1 on Pro by this metric. Terminal-Bench 77.3% (#2). 896 commits/month — highest commit velocity. Rust-based, sandbox-first execution. HN: 587 pts top story. 1M+ first-month users. Free with ChatGPT subscription.

Best for: Enterprise teams already on GitHub needing zero-friction async coding with compliance baked in

20M+ users, 4.7M paid (75% YoY growth), ~90% Fortune 100. Multi-model GA (Claude + Codex, Feb 2026). Agentic Code Review GA (Mar 2026). CLI GA (Feb 2026). SWE-bench Verified 56.0%. Jira integration public preview.

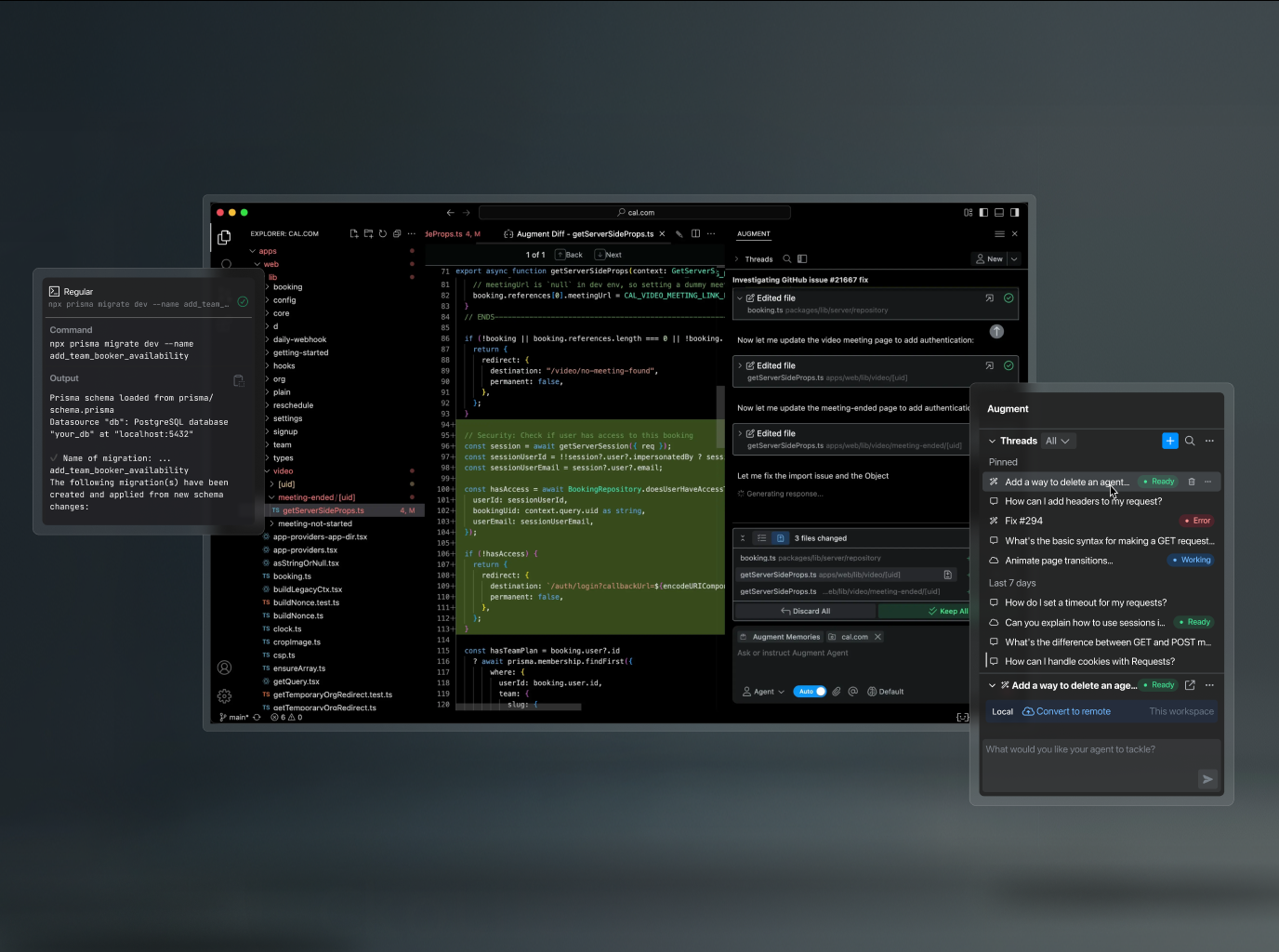

Best for: Large enterprises with massive monorepos needing deep codebase understanding via enterprise sales

$252M total funding (confirmed by TechCrunch). SWE-bench Pro 51.8% (custom scaffold). SWE-bench Verified 70.6% (third-party, unaudited). Context Engine indexes entire codebases. Tekion case study: time-to-merge dropped 60%.

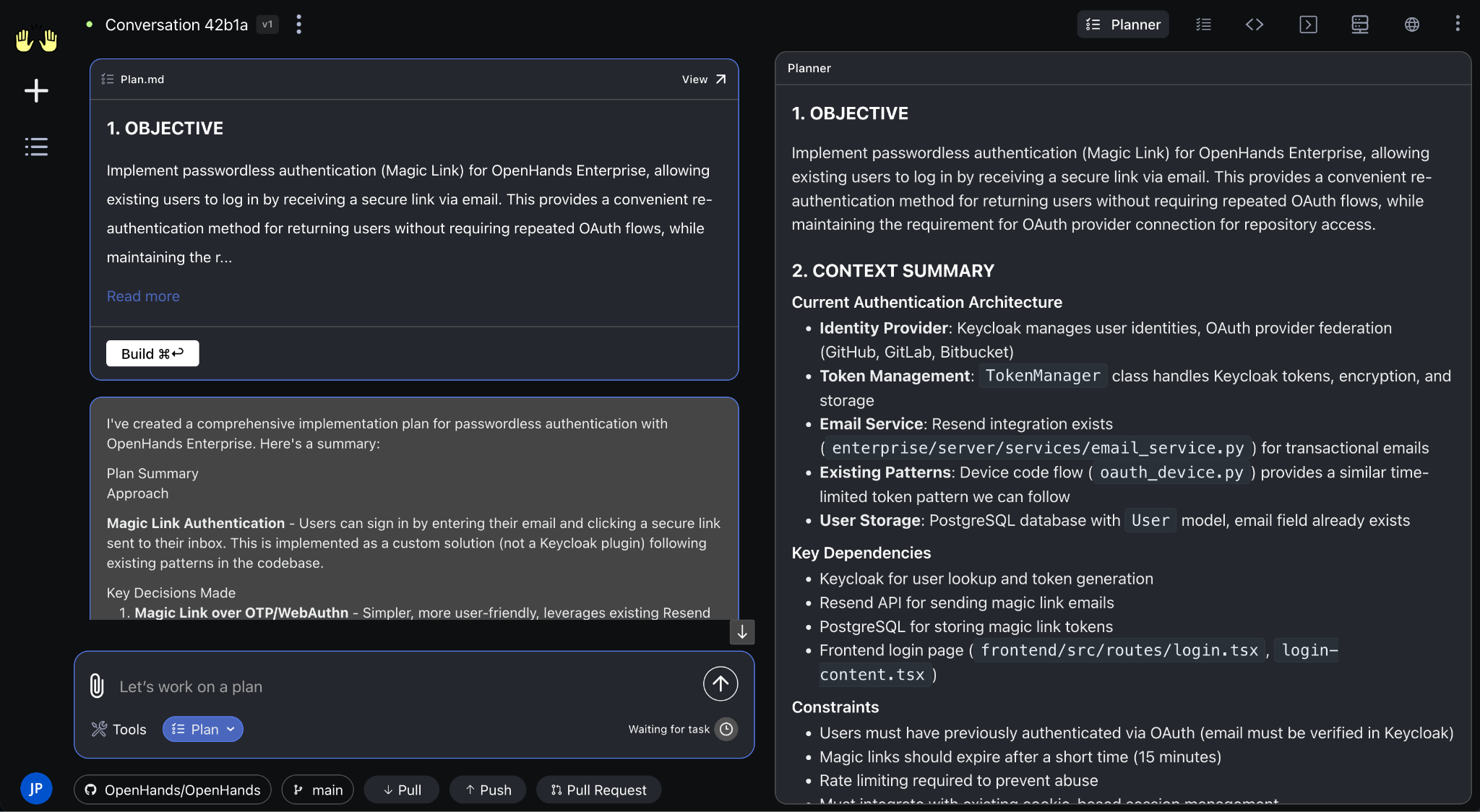

Best for: Regulated industries needing on-prem/air-gapped deployment with MIT-licensed, model-agnostic agents

69,425 stars, 455 contributors, MIT license. $23.8M raised. ICLR paper. #1 Multi-SWE-Bench (8 languages). SWE-bench Verified 43.2%. Planning Agent v1.5.0 (Mar 2026). 272 commits/month.

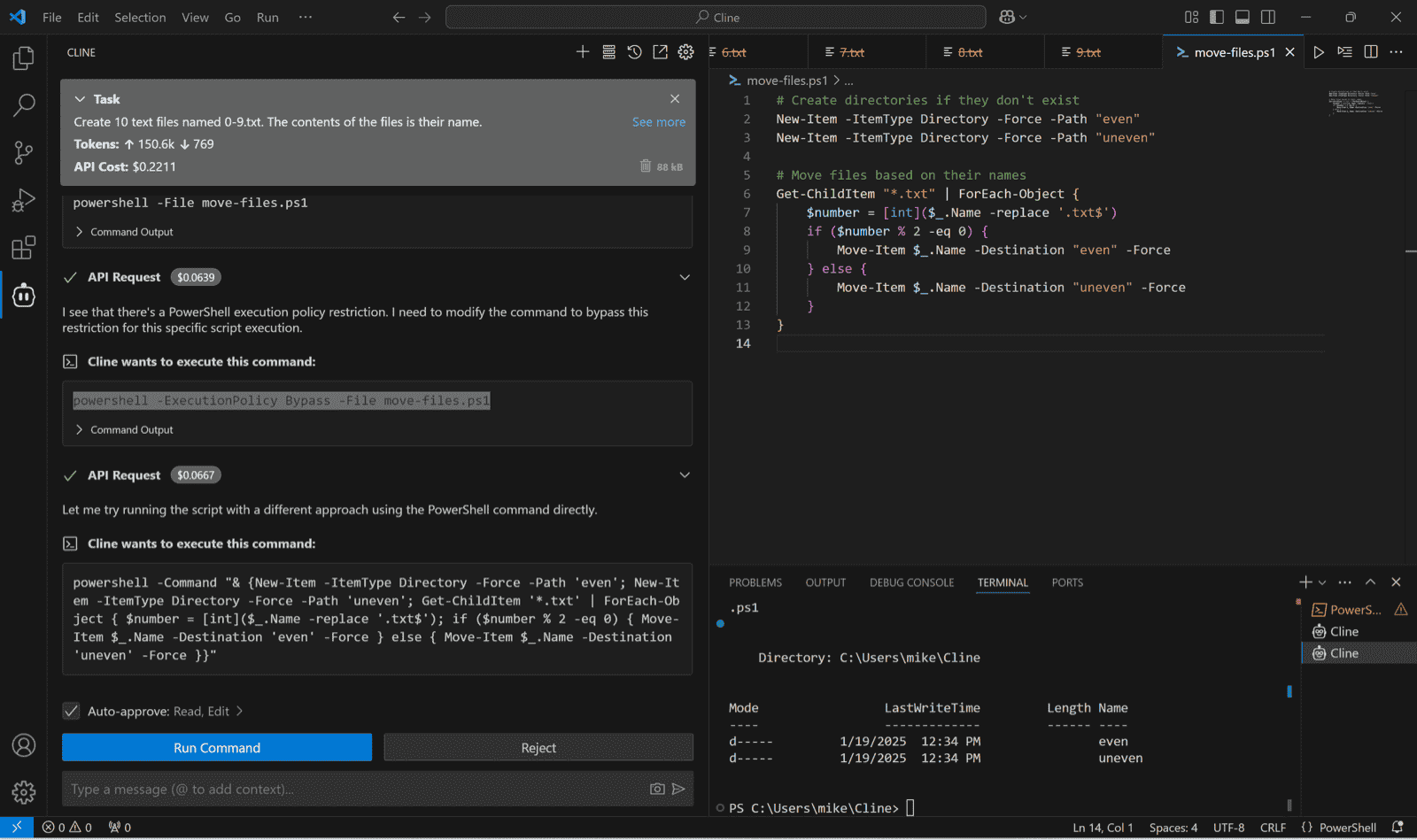

Best for: VS Code users wanting BYOM flexibility with the largest IDE-agent install base

59,157 stars, 5M+ installs across platforms. Apache 2.0, BYOM. $32M funding (Emergence Capital). Named enterprise customers: Salesforce, Samsung, SAP. 155 commits/month, v3.74.0 (2026-03-19).

Best for: CLI power users wanting open-source, multi-model, git-native pair programming with zero lock-in

42,157 stars. 49.2% SWE-bench Verified — independently reproducible. Apache 2.0, BYOK. Good multi-model and git integration. Pioneer in CLI-based AI coding.

Best for: Teams wanting a fully sandboxed autonomous agent — Windsurf acquisition could change the picture

$10.2B valuation, ~$900M total funding. Enterprise customers: Goldman Sachs, Santander, Nubank. Full browser + terminal + editor sandbox. Acquired Windsurf — may gain IDE distribution. 502 HN pts.

Best for: Non-developer / vibe-coding audience building full-stack apps with minimal code knowledge

Parallel sub-agents (auth, DB, backend, frontend simultaneously), mobile app generation, infinite canvas design variants. ChatGPT distribution partnership. 2.28M monthly visits.

Best for: Large enterprises (5,000+ engineers) wanting vendor-managed, compliance-friendly coding agent with white-glove support

$70M total funding. Terminal-Bench contribution (ICLR 2026 paper). Wipro partnership (tens of thousands of engineers). Customers: MongoDB, EY, Bayer, Zapier, Clari. Sequoia/NVIDIA backed.

Best for: Suspended pending a 90-day safety reset after a safety incident (6.3M orders lost)

AWS GovCloud launch (Feb 2026). Spec-driven workflow is a genuine differentiator. AWS backing provides a distribution floor. Pricing: free to $39/mo.

Best for: Free experimentation — proactive task scanning is unique but not yet a daily driver

Google backing. 2.28M beta visits. Proactive task scanning (finds TODOs unprompted) — unique. Free 15 tasks/day. 534 + 339 HN pts.
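As a rough illustration of what proactive task scanning could involve at its simplest, the sketch below finds TODO and FIXME comments in Python-style source text. It is an assumption-laden toy, not a description of how the product works: `scan_todos` and `TODO_PATTERN` are hypothetical names, and a plain regex will also match `#` characters inside string literals.

```python
import re
from typing import List, Tuple

# Matches "# TODO ..." or "# FIXME ..." comments, with an optional colon.
TODO_PATTERN = re.compile(r"#\s*(TODO|FIXME)\b[:\s]*(.*)")


def scan_todos(source: str) -> List[Tuple[int, str, str]]:
    """Return (line number, tag, description) for each TODO/FIXME comment."""
    found = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        match = TODO_PATTERN.search(line)
        if match:
            found.append((lineno, match.group(1), match.group(2).strip()))
    return found


sample = "x = 1  # TODO: handle None\ny = 2\n# FIXME broken on Windows\n"
tasks = scan_todos(sample)
```

A real agent would presumably rank these candidates and decide which ones are worth proposing; the scan itself is the cheap part.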

Best for: Free, open-source, provider-agnostic alternative — AAIF founding project

33,269 stars, 395 contributors, Apache 2.0. Linux Foundation AAIF founding member. 338 commits/month. 60% Block employee adoption. MCP reference implementation.

Best for: CLI-first power users who value Sourcegraph code intelligence lineage and BYOK flexibility

Co-founded by Quinn Slack and Beyang Liu (Sourcegraph). Self-reported profitable; Sequoia/a16z backing. CLI-first with Smart/Rush/Deep modes; Agent Skills system.

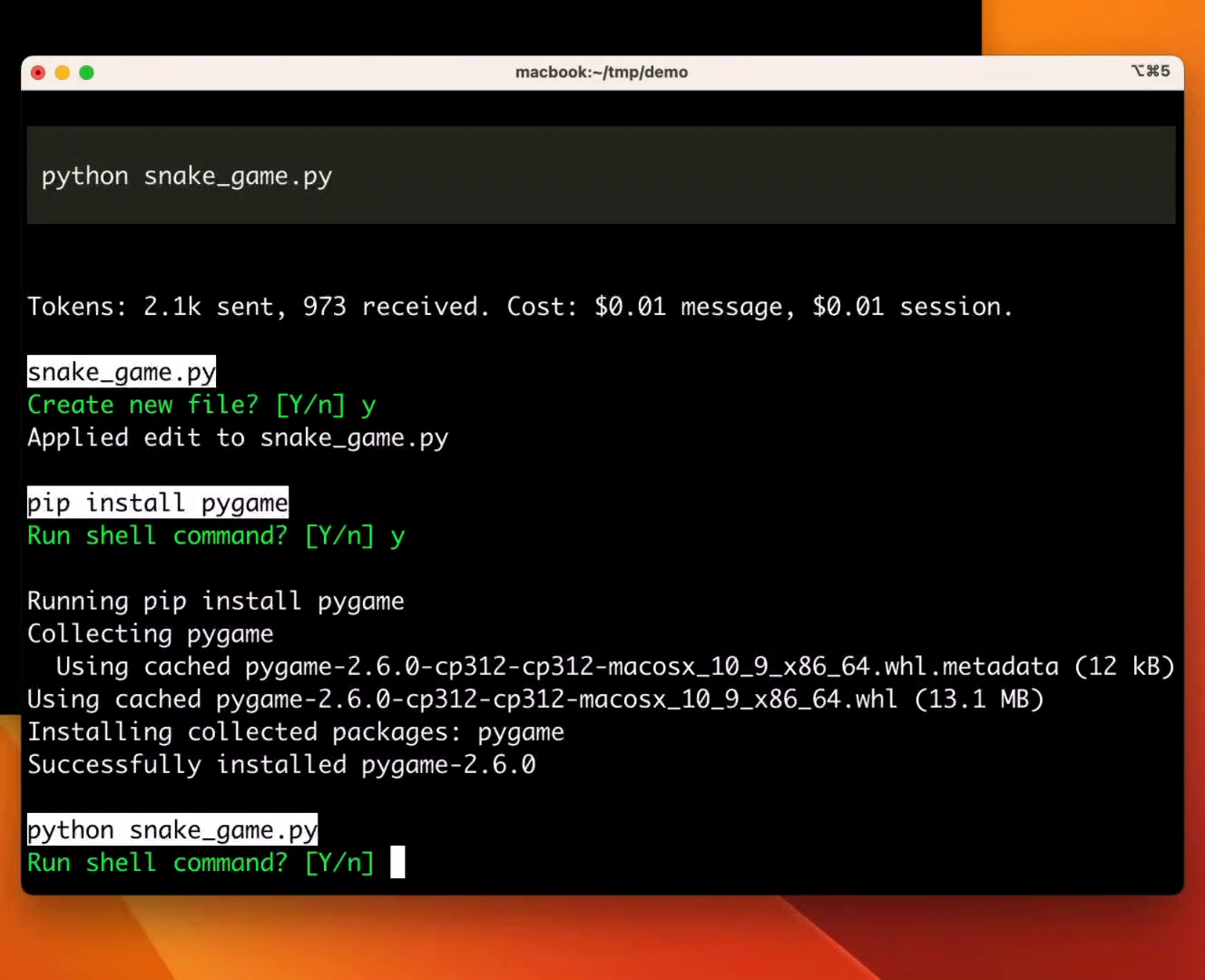

Best for: Research-grade autonomous bug fixing and benchmark reproducibility

Princeton research project. 18.7K stars. MIT licensed. mini-swe-agent (100 lines) scores >74% SWE-bench.

Best for: Simple, controllable autonomous loops with human-readable state

Simplest pattern: while-true prompt loop with file persistence. Adopted by Anthropic, Vercel, Block's Goose.
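A minimal sketch of the loop-with-file-persistence pattern follows. It makes several assumptions: the state is a JSON file, the model call is replaced by a stand-in function (`fake_agent_step`), and the loop is capped by `max_iters` rather than running literally forever. All names here are hypothetical.

```python
import json
from pathlib import Path
from typing import Callable


def run_loop(state_path: Path, step: Callable[[dict], dict], max_iters: int = 100) -> dict:
    """Call `step` repeatedly, saving human-readable state after each pass."""
    # Resume from disk if an earlier run left state behind; otherwise start fresh.
    if state_path.exists():
        state = json.loads(state_path.read_text())
    else:
        state = {"iteration": 0, "done": False, "notes": []}

    while not state["done"] and state["iteration"] < max_iters:
        state = step(state)
        state["iteration"] += 1
        # Persist after every pass so a crash or restart loses at most one step.
        state_path.write_text(json.dumps(state, indent=2))
    return state


def fake_agent_step(state: dict) -> dict:
    """Stand-in for a model call: records a note and stops after three passes."""
    state["notes"].append(f"pass {state['iteration'] + 1}")
    state["done"] = len(state["notes"]) >= 3
    return state
```

Calling `run_loop(Path("state.json"), fake_agent_step)` writes the state file after each pass; calling it again with the same path picks up where the previous run stopped, which is what makes the state inspectable and the loop easy to control.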

Head to head

Different lanes. Copilot: zero-friction, GitHub-native, no setup. OpenHands: self-hostable, model-agnostic, MIT-licensed, scales to thousands of parallel tasks. Copilot for GitHub-native teams; OpenHands for control, self-hosting, and data sovereignty.

Cursor: $2B ARR (13x Devin's pre-acquisition revenue) and novel event-driven triggers. Devin: more autonomy history, 67% merge rate on defined tasks, $10.2B valuation. Cursor wins on revenue and trigger model; Devin has more autonomy history but weaker product evidence.

OpenHands: 69K stars, MIT, free, model-agnostic, broader enterprise logos (AMD, Apple, Google, NVIDIA). Devin: $10.2B valuation, $150M+ ARR, but only 15% success on complex tasks. OpenHands for control and cost; Devin for turnkey defined-task automation.

Factory: Terminal-Bench #1, Sequoia+NVIDIA backing, enterprise customers. But 7 HN pts with 0 comments = near-zero community validation. Investor excitement ≠ developer adoption. Needs independent verification to move up.

Public signals

Strongest on every axis: benchmark performance (SWE-bench Pro 49.8%, Verified 80.9%), community signal (2,127 HN pts top, 16K+ across top-20 — more than all competitors combined), developer love (46% 'most loved'), Apple Xcode 26.3 native integration. v2.1.80 released 2026-03-19.

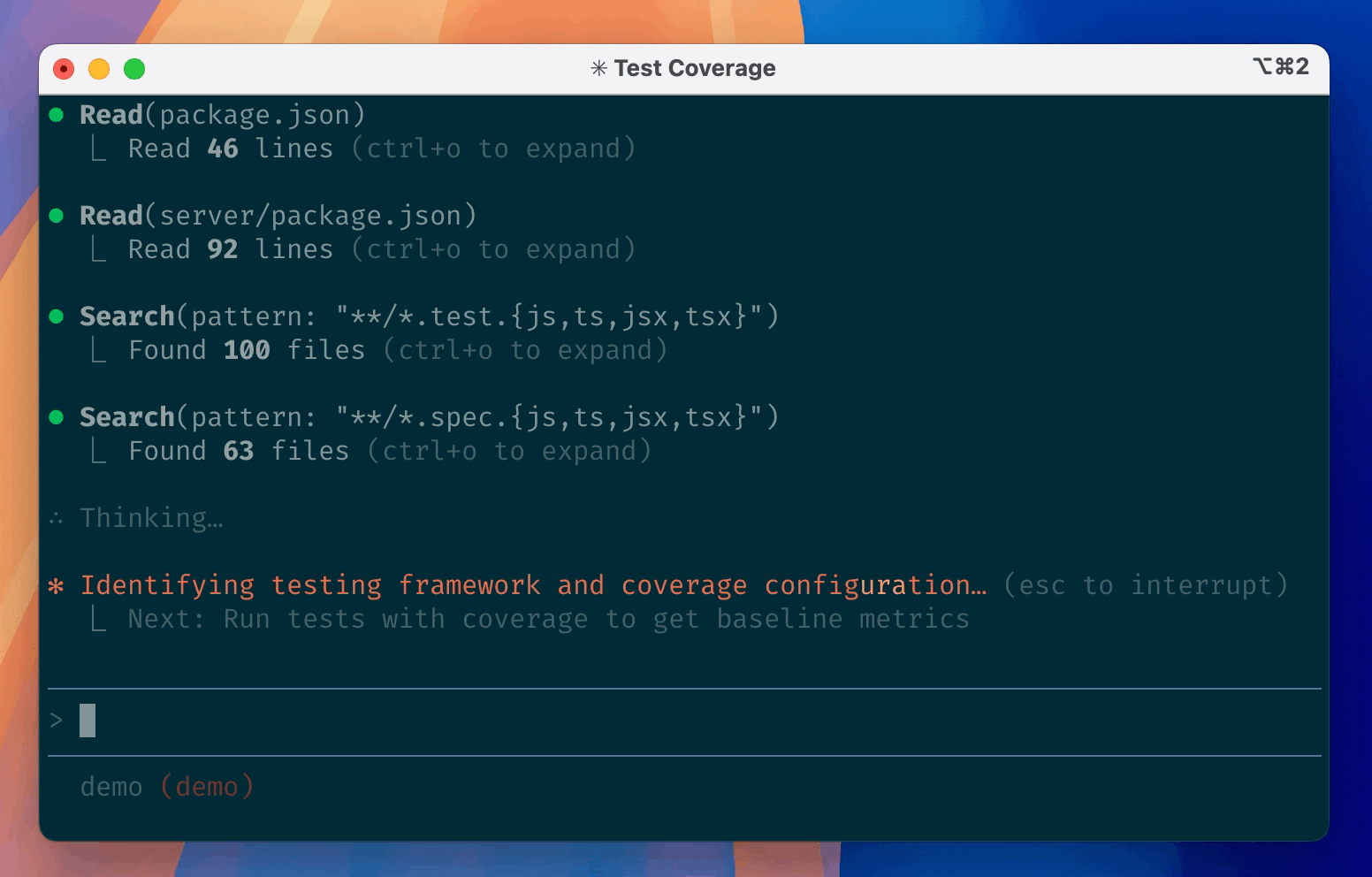

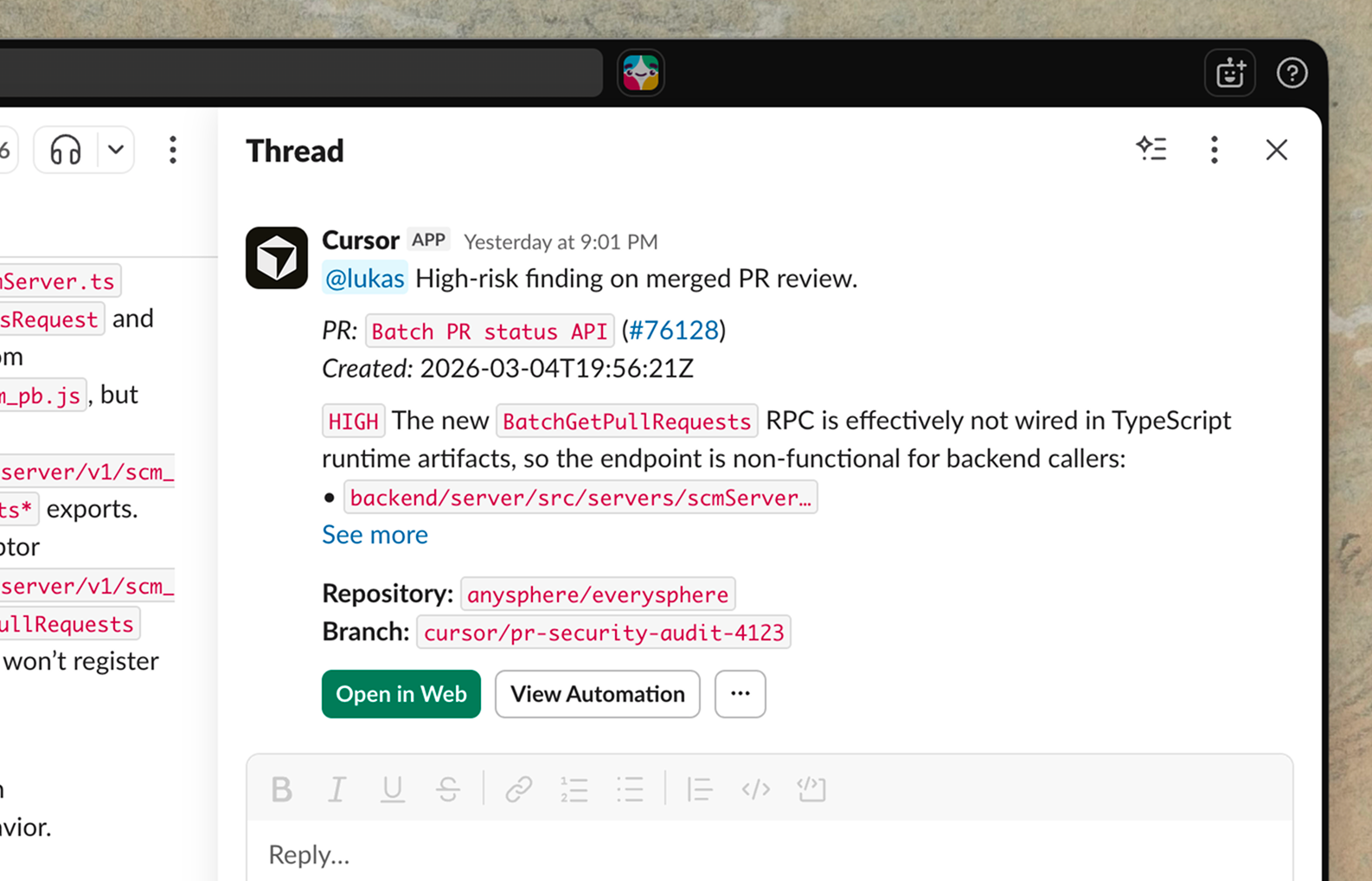

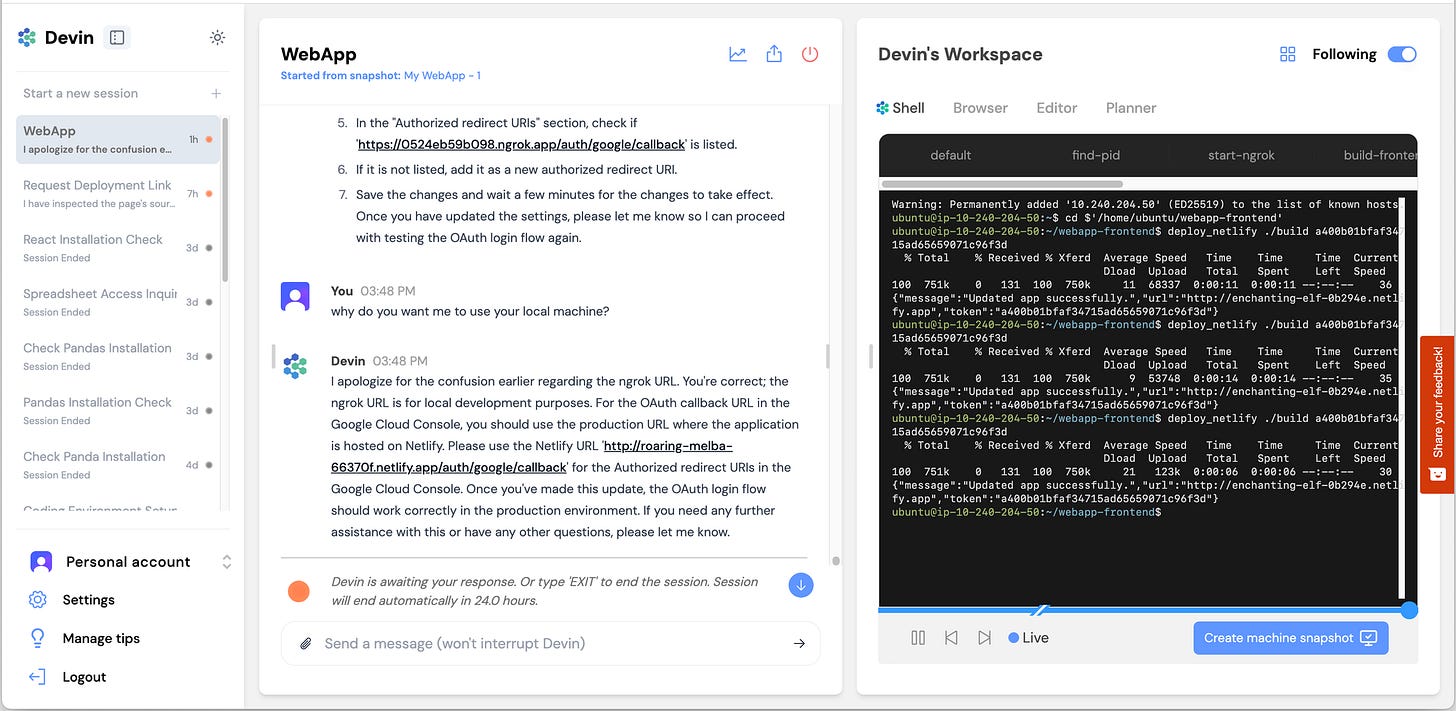

$2B ARR (Bloomberg, 2026-03-02). Automations: event-driven triggers (Slack, PagerDuty, Linear, webhooks, cron). 35% of own PRs merged by agents. 60% enterprise revenue. SWE-bench Pro 50.2% (custom).

Highest star count in the category. Free tier with 1K req/day is unmatched. SWE-bench Verified 80.6% near-parity with Claude Code. Pro score 43.3% shows real gap. 709 commits/month — very active.

Highest SWE-bench Pro score (custom scaffold). 896 commits/month — highest velocity in category. Free with ChatGPT subscription. Terminal-Bench 77.3% (#2). 1M+ first-month users.

Largest distribution moat (20M users, 4.7M paid, ~90% Fortune 100). Multi-model GA (Claude + Codex, Feb 2026). CLI GA. But SWE-bench Verified 56.0% is the lowest among tier-1 agents. Only 9% 'most loved.'

Open-source standard — 69.4K stars, 455 contributors, MIT, model-agnostic. #1 Multi-SWE-Bench. ICLR paper. Best for on-prem/air-gapped. SWE-bench Verified 43.2% shows gap vs closed-source.

The defining safety incident of the category. An AI agent autonomously deleted a live production environment. Amazon responded with a 90-day 'code safety reset' across ~335 critical systems. A forced 80% weekly usage mandate was a contributing factor.

All frontier models have been trained on SWE-bench Verified solutions. The Verified-to-Live gap is massive: 70%+ Verified → ~23% Pro → ~19% Live. SWE-bench Pro (1,865 tasks) is the new standard, but few vendors have published Pro scores.
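To make the size of that gap concrete, the arithmetic can be worked through directly. The sketch below uses the three approximate figures quoted above (70, 23, and 19 percent) as inputs; the function name `gap_vs_baseline` is a hypothetical helper, and the results are only as good as those rounded inputs.

```python
from typing import Dict


def gap_vs_baseline(scores: Dict[str, float], baseline: str) -> Dict[str, Dict[str, float]]:
    """For each non-baseline benchmark, compute the point drop and share retained."""
    base = scores[baseline]
    return {
        name: {"point_drop": base - score, "retained": score / base}
        for name, score in scores.items()
        if name != baseline
    }


# Approximate figures quoted in the text, in percent.
reported = {"Verified": 70.0, "Pro": 23.0, "Live": 19.0}
gaps = gap_vs_baseline(reported, "Verified")

for name, g in gaps.items():
    print(f"{name}: {g['point_drop']:.0f} points lower, "
          f"{g['retained']:.0%} of the Verified score retained")
```

On these inputs a model keeps roughly a third of its Verified score on Pro and just over a quarter on Live, which is why a high Verified number alone says little about real-world performance.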

Strongest benchmark story (Pro 51.8%, Verified 70.6%). $252M funding (TechCrunch confirmed). Context Engine for monorepos. But no public repo, no community, enterprise-only. Revenue reportedly only $20M.

Highest valuation in category. But: no public benchmarks, HN sentiment skeptical, Windsurf acquisition caused customer exodus, 96% price cut ($500→$20). Answer.AI: 15% success on complex tasks.

Stack Overflow 2025. Tools with built-in review mechanisms have structural advantage. Gartner: >40% of agentic AI projects canceled by 2027 due to inadequate risk controls.

First major SI partnership in category. $70M total funding (Sequoia, NEA, NVIDIA). Terminal-Bench published as ICLR 2026 paper. But near-zero community signal (20 HN pts).

What changes this

If Augment Code / Intent publishes a verified SWE-bench submission → moves to #2 or #1 if score holds at 70%+.

If Amp publishes an open benchmark or third-party review → enters top 5.

If Kiro publishes a SWE-bench result and clarifies the outage → moves from #9 to #6-7.

If Devin submits a current, verified SWE-bench run → re-enters the top tier from archived status.

If Jules publishes any quantitative capability evidence → exits watch status.

If community reception of Cursor Automations grows (HN currently 7 pts) → confirms that the event-driven paradigm has real developer demand; if it stays flat, that demand remains unproven.

If SWE-bench Pro adoption becomes standard → all scores above 50% should be discounted ~20%; recalibrate entire ranking.

If OpenHands closes a large named enterprise deal → strengthens #3 claim on enterprise trust.

If another major safety incident occurs at any ranked tool → that tool drops 2+ ranks and gets a safety warning.