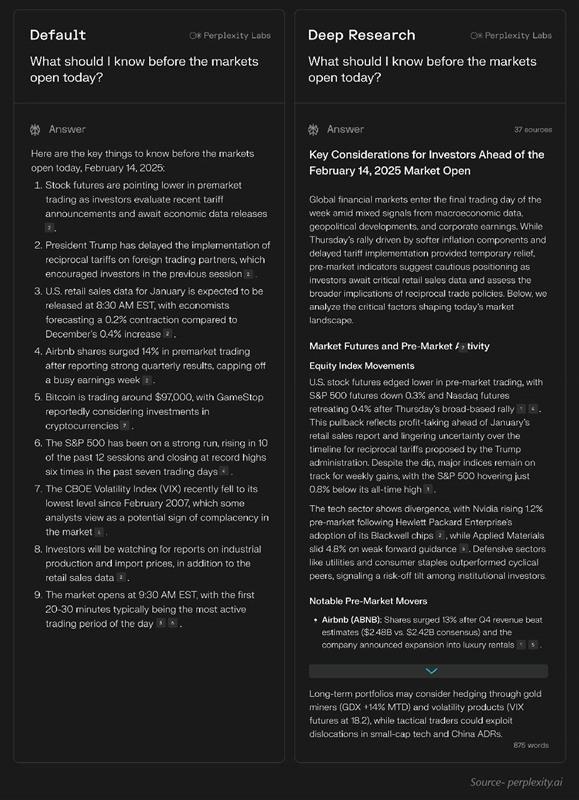

Perplexity wins on speed (15-30s vs 3-15 min), citation quality, SimpleQA accuracy (93.9%), and price ($20 vs $200/mo). OpenAI wins on expert reasoning (HLE 26.6% vs 21.1%) and GAIA (72.57%). The choice depends on the use case: speed vs depth.

Research

Deep research agents, academic tools, and research infrastructure. The category has split into four groups: platform deep research (Perplexity, OpenAI, Google), open-source agents (GPT Researcher, Tongyi, STORM), academic specialists (Elicit, Consensus), and infrastructure (Tavily, Firecrawl). Speed, citation quality, and self-hosting are the real differentiators.

12 Ranked · 9 Signals

Current ranking

Best for: Speed-sensitive research, daily use, citation-critical work

Fastest (15-30s vs minutes). 93.9% SimpleQA accuracy (Helicone). 50+ sources/report. Highest citation reliability in independent tests. 368 HN pts. Reddit r/PhD endorsement. $20/mo.

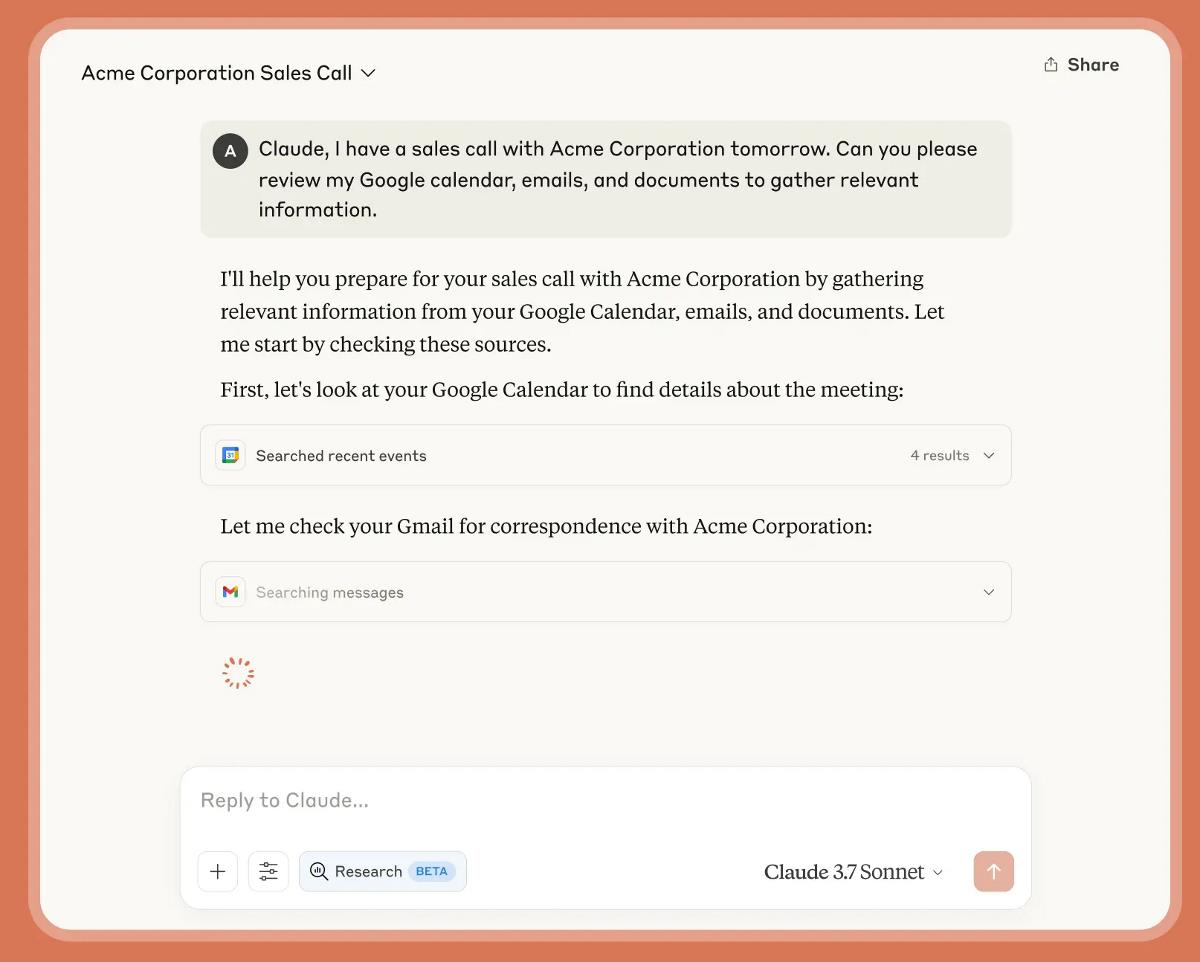

Best for: Expert-level reasoning, complex multi-step research, enterprise MCP workflows

26.6% HLE (highest of any system). 72.57% GAIA (best reported). MCP support (Feb 2026). API available. Domain-restricted searches.

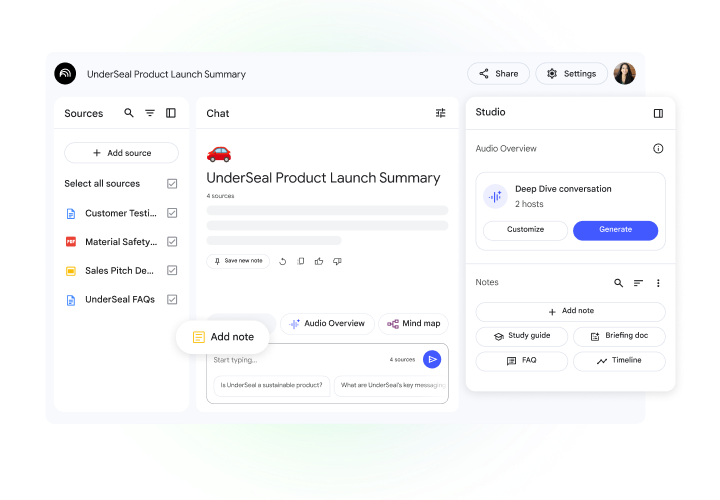

Best for: Multimodal research, long-document analysis, research-to-presentation pipelines

100+ sources/query (highest coverage). 1M token context. Gemini 3 engine. Multimodal (video/audio/PDF). 907 HN pts (highest in category). $20/mo.

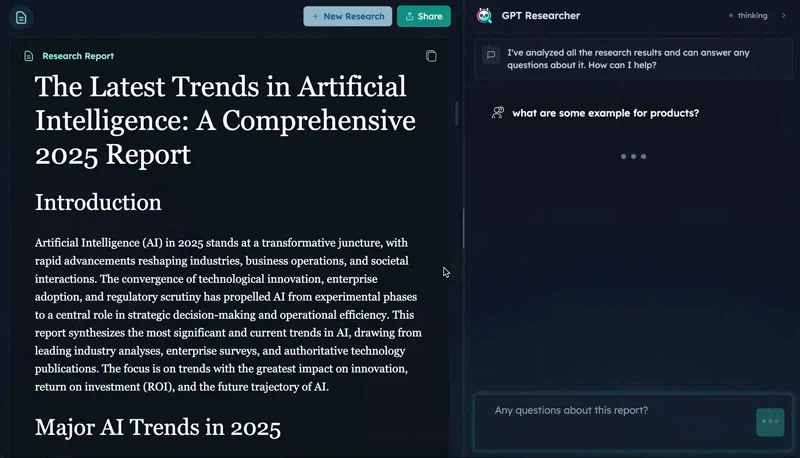

Best for: Self-hosted research pipelines, agent integration, customizable workflows

CMU DeepResearchGym #1 (citation quality, report quality, info coverage — beat Perplexity and OpenAI). 25.8K stars. 15.9K weekly PyPI downloads. MCP server. Apache 2.0.

Best for: Privacy-first deep research, local deployment, cost-sensitive teams

HLE 32.9 (exceeds OpenAI's 26.6). GAIA 70.9. Runs locally on consumer hardware (3.3B active params via MoE). 18.5K stars. 365 HN pts. Apache 2.0.

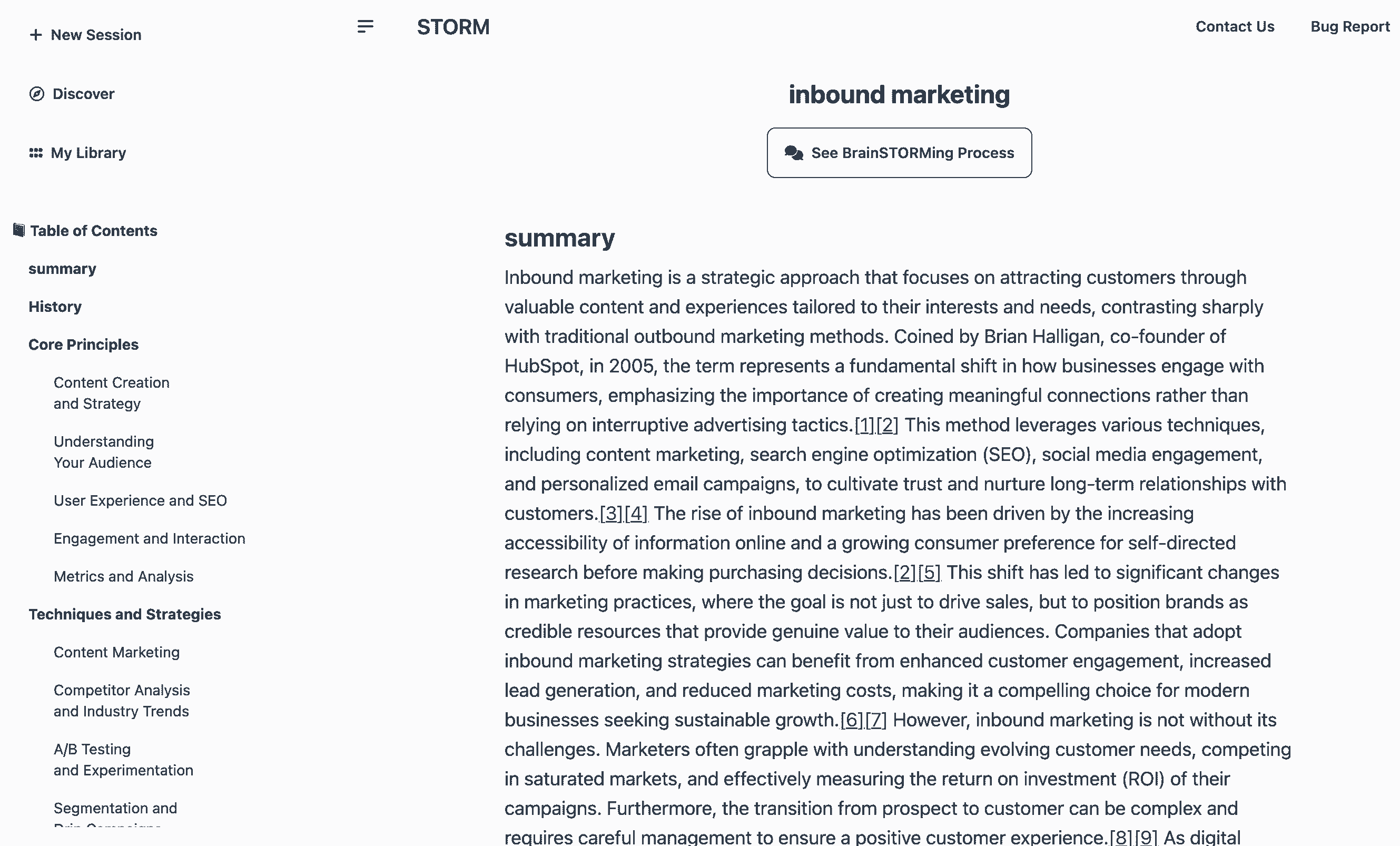

Best for: Structured knowledge curation, article-length synthesis, academic workflows

28K stars. 84.8% citation recall / 85.2% precision (peer-reviewed). Wikipedia-style article generation. Co-STORM collaborative mode (EMNLP 2024). MIT license.
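To make the recall and precision figures concrete, here is a minimal sketch of how such citation metrics can be computed. The definitions are an assumption, not taken from this page: recall is the share of citation-worthy sentences backed by at least one supporting citation, and precision is the share of attached citations that actually support their sentence. The `Sentence` class and the sample report are hypothetical, and the evaluation behind the numbers above may differ in detail.

```python
from dataclasses import dataclass


@dataclass
class Sentence:
    """One sentence of a generated report with hand-labelled citation judgments."""
    text: str
    needs_citation: bool       # does the sentence make a verifiable claim?
    citations_total: int       # citations attached to the sentence
    citations_supporting: int  # attached citations judged to support it


def citation_recall(sentences: list[Sentence]) -> float:
    """Share of citation-worthy sentences with at least one supporting citation."""
    claims = [s for s in sentences if s.needs_citation]
    if not claims:
        return 0.0
    supported = sum(1 for s in claims if s.citations_supporting > 0)
    return supported / len(claims)


def citation_precision(sentences: list[Sentence]) -> float:
    """Share of all attached citations that support their sentence."""
    total = sum(s.citations_total for s in sentences)
    if total == 0:
        return 0.0
    return sum(s.citations_supporting for s in sentences) / total


# Hypothetical labelled report: three claims and one transition sentence.
report = [
    Sentence("Claim A", True, citations_total=1, citations_supporting=1),
    Sentence("Claim B", True, citations_total=2, citations_supporting=1),
    Sentence("Claim C", True, citations_total=1, citations_supporting=0),
    Sentence("Transition", False, citations_total=0, citations_supporting=0),
]

if __name__ == "__main__":
    print(f"recall={citation_recall(report):.3f}")
    print(f"precision={citation_precision(report):.3f}")
```

In this toy report two of three claims are supported and two of four citations hold up, which shows why the two numbers can diverge: a tool can cite heavily (high recall) while attaching citations that do not support the claim (low precision).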

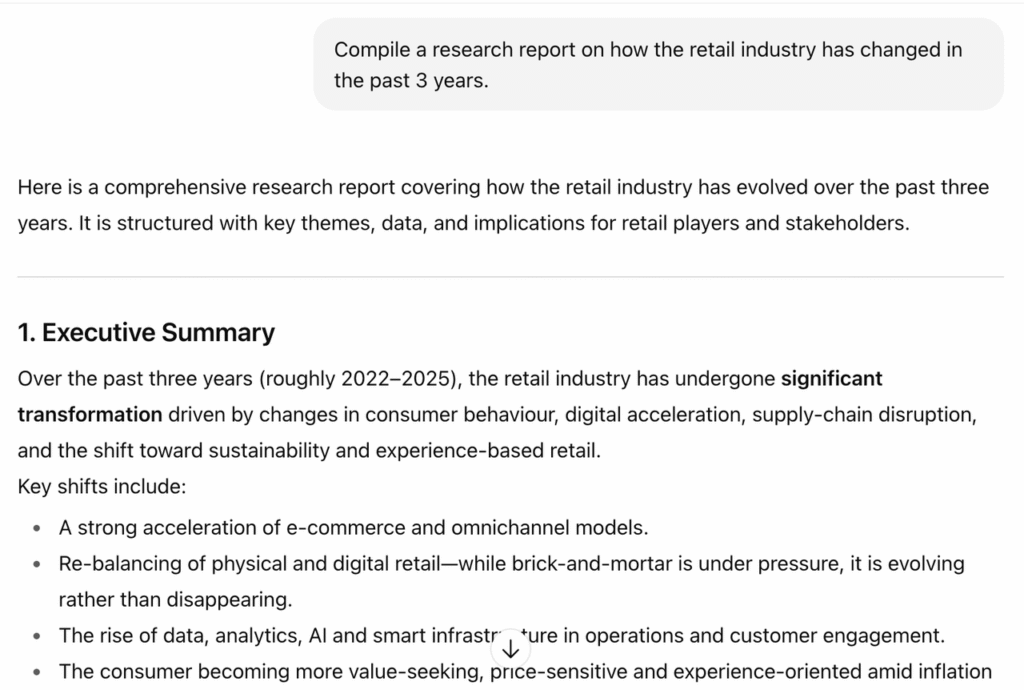

Best for: Long-form synthesis, custom research agent development

Multi-agent architecture (Opus 4 lead + Sonnet 4 subagents). 90.2% improvement over single-agent (methodology published). 1M context. Agent SDK for custom pipelines.
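The lead-plus-subagents shape described above can be illustrated with a generic orchestrator-worker sketch. This is not the vendor's implementation: `plan`, `subagent`, and `lead_agent` are hypothetical names, the subagent is a stub rather than a model call, and the fixed three-way split is an assumption made only to keep the example runnable.

```python
import asyncio


def plan(question: str) -> list[str]:
    """Hypothetical planner: split one question into independent sub-questions."""
    return [
        f"{question} (background)",
        f"{question} (recent evidence)",
        f"{question} (counterarguments)",
    ]


async def subagent(subquestion: str) -> str:
    """Stub worker; a real system would call a model and search tools here."""
    await asyncio.sleep(0)  # yield control, standing in for network latency
    return f"findings for: {subquestion}"


async def lead_agent(question: str) -> str:
    """Fan sub-questions out to workers in parallel, then merge their findings."""
    subquestions = plan(question)
    # gather() runs the workers concurrently and returns results in input order.
    findings = await asyncio.gather(*(subagent(q) for q in subquestions))
    return "\n".join(f"- {finding}" for finding in findings)


def run(question: str) -> str:
    """Synchronous entry point for the sketch."""
    return asyncio.run(lead_agent(question))


if __name__ == "__main__":
    print(run("solid-state battery durability"))
```

The design point is that the lead agent only plans and synthesizes, while the subagents work in parallel on narrower questions, so wall-clock time tracks the slowest worker rather than the sum of all of them.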

Best for: Breaking news orientation, social sentiment research, trend analysis

Only deep research tool with native real-time X/Twitter integration. 4-agent parallel system. Journalists use it to get oriented on breaking stories.

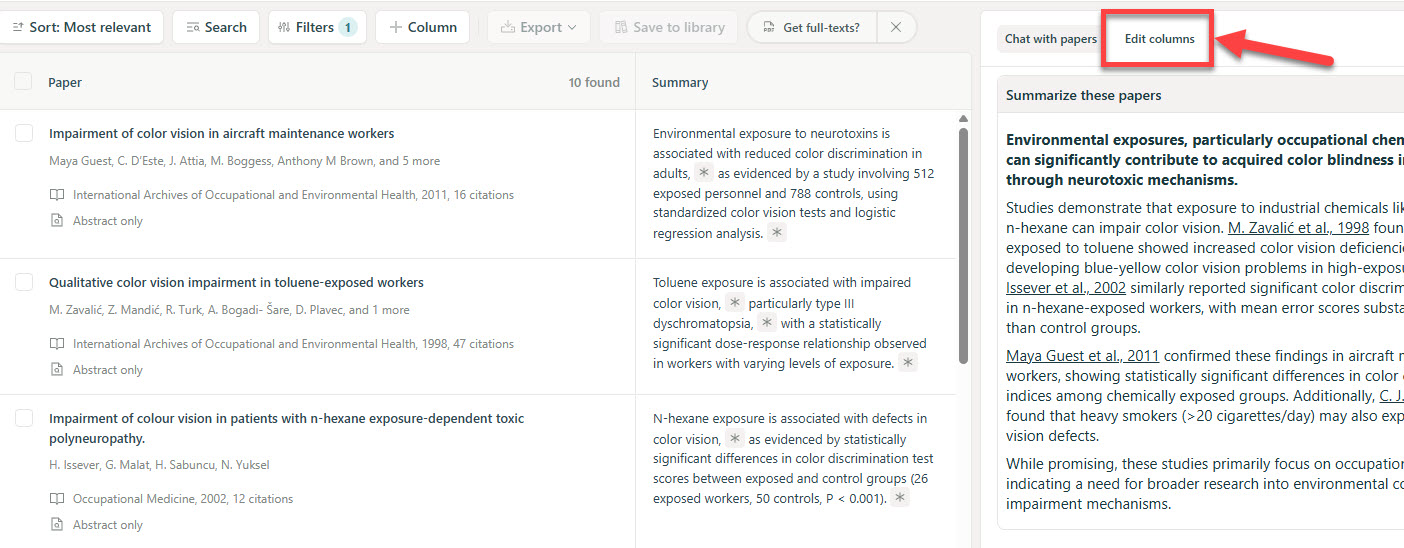

Best for: Systematic reviews, meta-analyses, clinical research, data extraction

138M papers + 545K clinical trials. API (Mar 2026). Claude Opus 4.5 extraction. Reddit-endorsed as 'essential for meta-analyses.' Sentence-level citations. $10/mo.

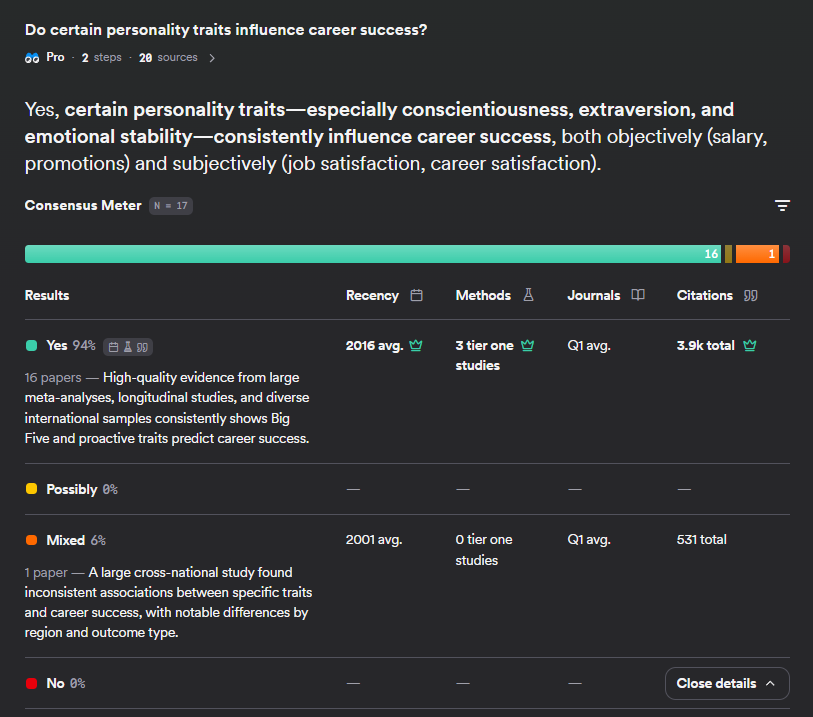

Best for: Quick claim-level evidence checking — 'does the literature support X?'

200M+ papers (via Semantic Scholar). Unique consensus meter showing scientific agreement. 1,000+ papers/query with Deep Search. 126 HN pts.

Best for: Building research agents — de facto standard search backend

Default search backend for GPT Researcher, CrewAI, LangChain. Acquired by Nebius (Feb 2026). /research endpoint GA. MCP server. Domain governance.

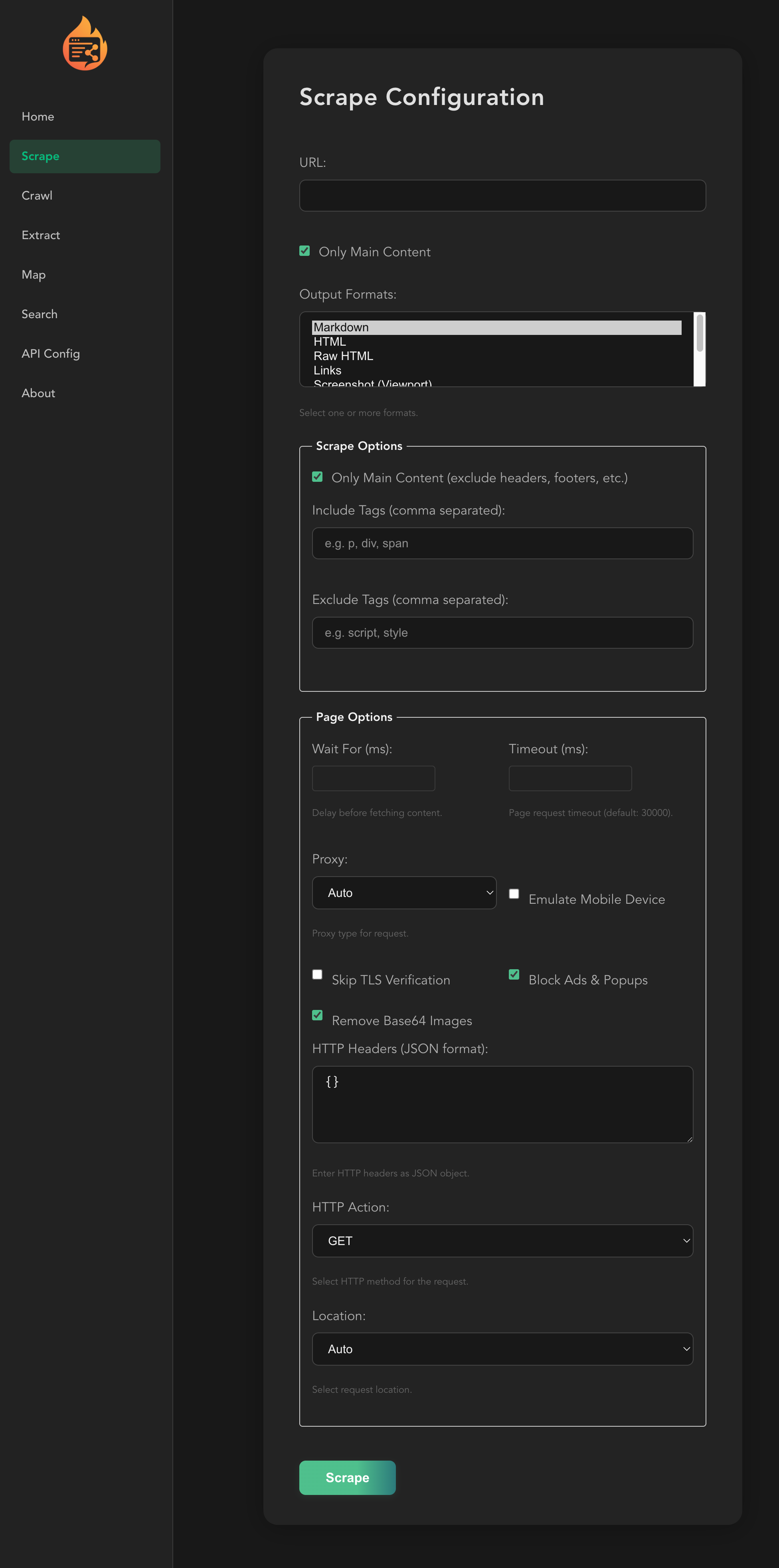

Best for: Web data extraction for research pipelines

~40K stars. Web scraping → clean markdown for LLMs. MCP server. Research agent mode. AGPL-3.0.

Head to head

Google leads on source coverage (100+ vs 10-30), multimodal (video/audio/PDF), and HN buzz (907 vs 368 pts). Perplexity leads on speed and citation quality. NotebookLM is a research workbench; Perplexity is a fast answer engine.

GPT Researcher has CMU benchmark validation and real PyPI adoption (15.9K downloads/wk). Tongyi has superior benchmark scores (HLE 32.9 vs N/A) and runs locally. GPT Researcher = established leader; Tongyi = disruptive newcomer.

STORM produces better-structured Wikipedia-style articles with peer-reviewed citation quality (85.2% precision). GPT Researcher is more practical for agentic research workflows with MCP integration. STORM = knowledge artifacts; GPT Researcher = research pipelines.

Elicit wins for deep academic workflows (systematic reviews, data extraction, API). Consensus wins for quick claim-level evidence checking with its unique consensus meter. The two are complementary rather than competitive.

Public signals

Independent academic benchmark. GPT Researcher beat Perplexity, OpenAI, OpenDeepSearch, HuggingFace.

Independent comparison of deep research benchmarks. OpenAI leads on reasoning; Perplexity leads on speed.

Open-source model matching/exceeding closed-source leaders. 'DeepSeek moment for AI agents.'

Real adoption signal for open-source research agent.

Audio overview feature drove massive attention. 5 threads >200 pts.

Organic academic endorsement for systematic review workflows.

Acquisition validates Tavily as critical research infrastructure. De facto standard for agent search.

First major platform to add MCP to deep research. Enterprise integration signal.

Enables systematic reviews via API. Claude Opus 4.5 extraction.

What changes this

Independent benchmark for Claude Research — if it matches OpenAI on HLE/GAIA with third-party validation, moves to #2-3

Tongyi DeepResearch adoption data — if real PyPI/usage stats emerge, could leapfrog GPT Researcher to #4

OpenAI drops Deep Research price — if available at $20 unlimited, threatens Perplexity's #1

Perplexity ships MCP — would close the enterprise gap with OpenAI and solidify #1 across all use cases

NotebookLM ships API — would make Google a serious infrastructure play competing with Tavily

Fresh CMU benchmarks against Tongyi and updated OpenAI — would clarify open-source leader question

Consensus Deep Search gains traction — 11 HN pts is concerning; if adoption stays flat, risks becoming a footnote

Karpathy AutoResearch gets 'knowledge research' mode — would immediately enter main ranking, likely top 5 given 43K stars