Claude Code leads SWE-bench Pro standardized (45.89% vs 41.04%), Morph Tier 1 'deepest reasoning', Educative 1h17m single-shot. Codex CLI leads Terminal-Bench (77.3% GPT-5.3-Codex), 3-4x more token-efficient, 240+ tok/s. Emerging consensus: use both — Claude for planning, Codex for implementation.

Coding CLIs / Code Agents

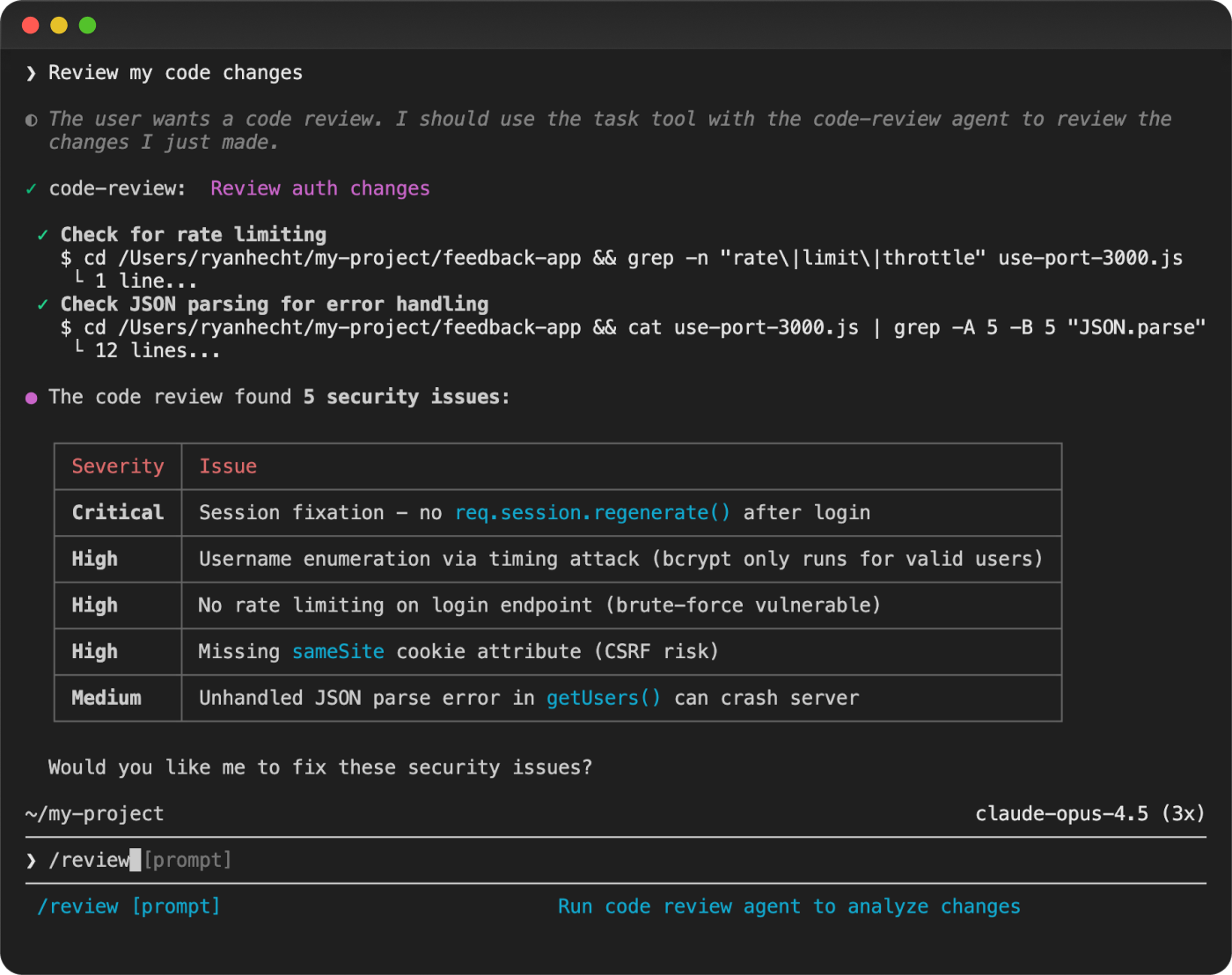

The hottest category right now. Ten+ serious CLI agents competing across three tiers. SWE-bench Pro (standardized) is necessary but no longer sufficient — METR found ~50% of SWE-bench-passing PRs would NOT be merged by real maintainers. Rankings weight benchmarks alongside practical tests, adoption, safety, and independent evaluations.

22

Ranked

18

Signals

Current ranking

Best for: Complex multi-file refactors, framework migrations, architecture — any task where first-pass quality matters most

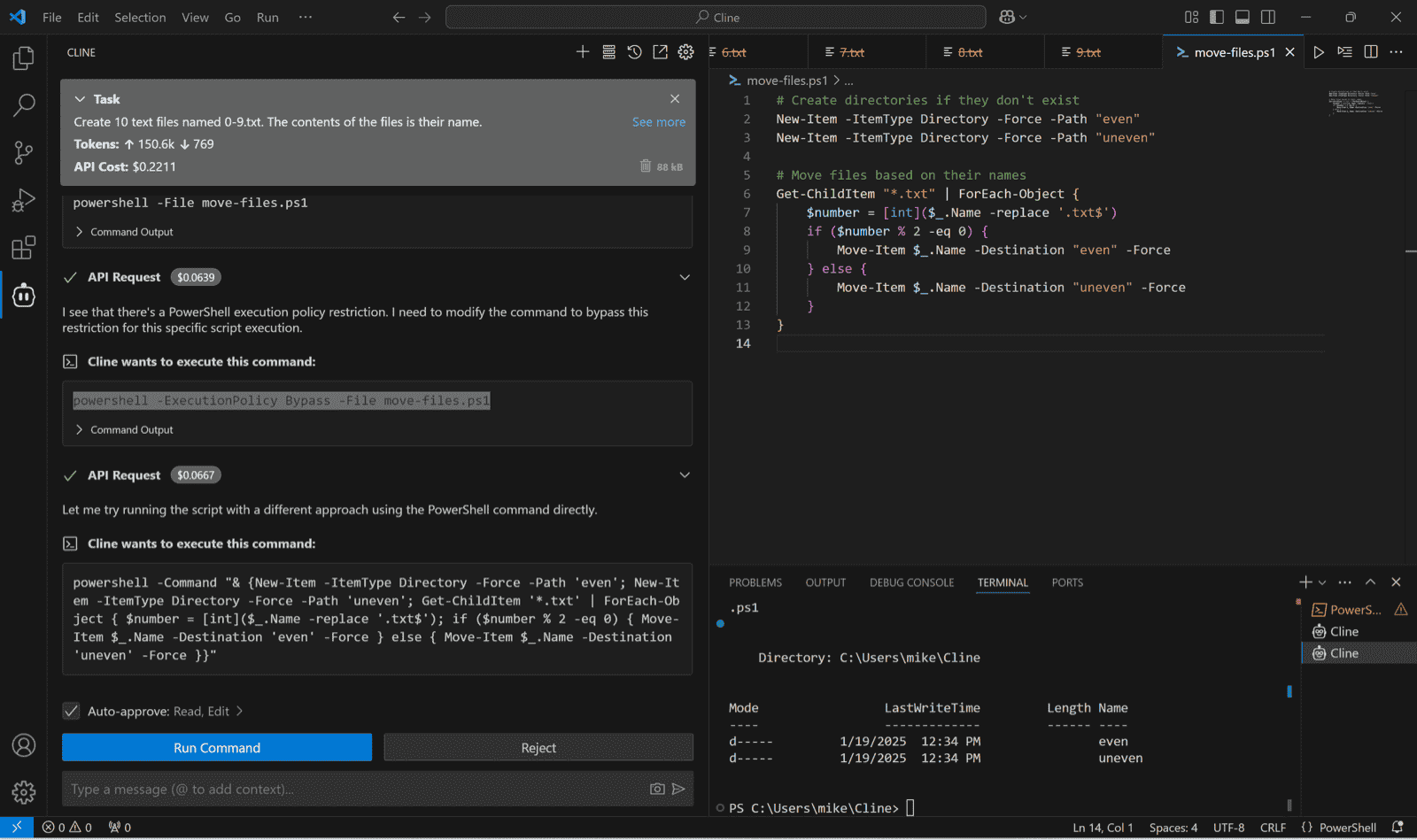

7.88M npm downloads/week — 3x nearest rival. ~4% of GitHub public commits (SemiAnalysis). #1 SWE-bench Pro standardized (45.89%, SEAL). ~95% first-pass correctness (Educative.io). $2.5B annualized revenue. Morphllm: 'best AI coding agent for most developers.'

Best for: Budget-conscious developers, students, exploratory/prototyping work, massive context window tasks

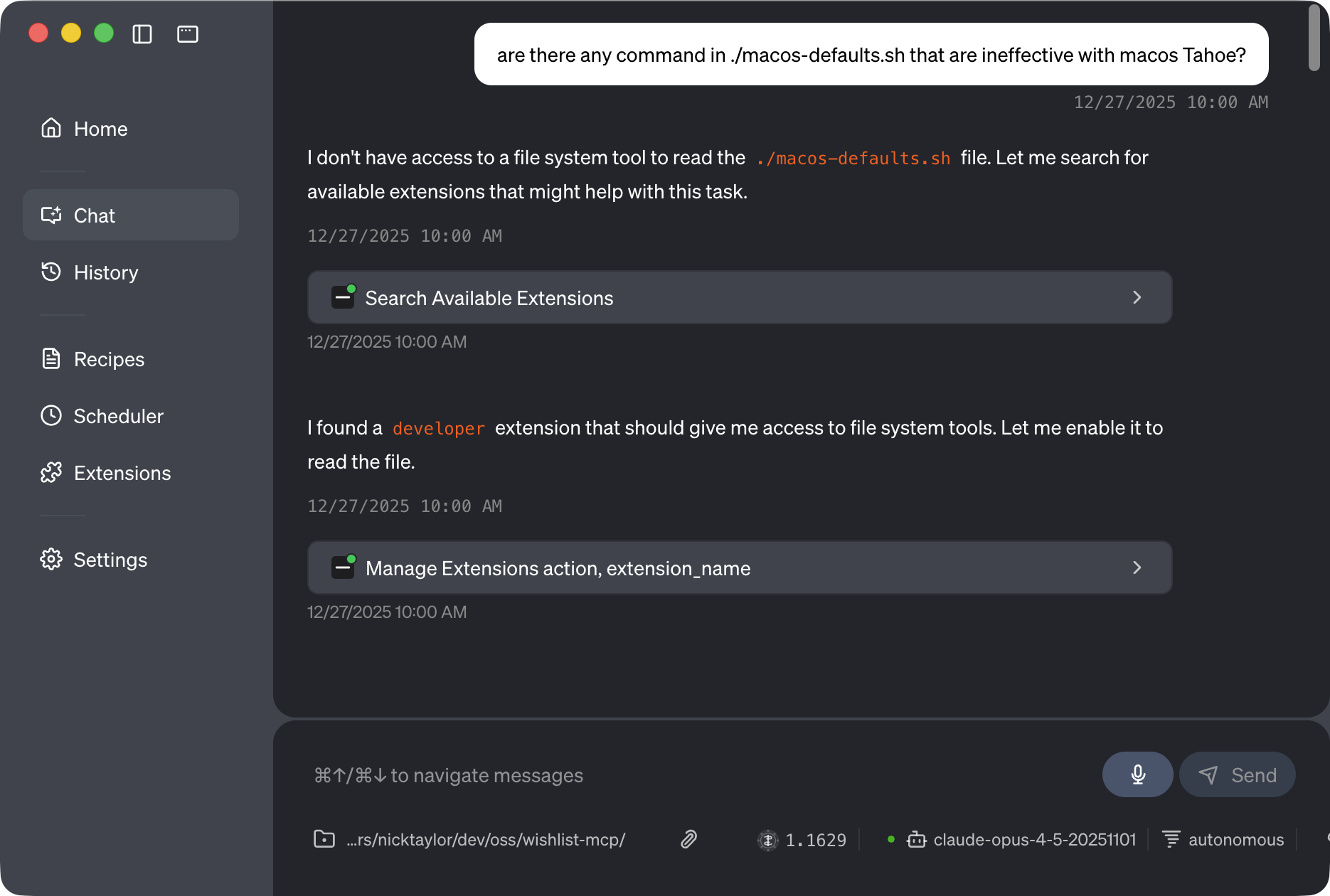

Terminal-Bench 2.0: 78.4% (#1). v0.35.0 (Mar 24) shipped keybinding/policy/telemetry fixes while the repo hit 98,957 stars and 12,593 forks. Docs confirm 1M native context and 1,000 requests/day free, and independent write-ups (FreeAcademy, GuruSup) recommend starting large personal projects here before escalating to Claude.

Best for: Teams already on GitHub Copilot, enterprise environments requiring governance and audit trails

15M Copilot subscriber distribution — largest channel in category. v1.0.11 (Mar 23) shipped `/clear`, multi-extension hook merges, and cross-platform assets with MCP configs now respected. Reddit’s GA announcement (185 upvotes) plus a GitHub demo tweet (61 likes) show real teams recreating the launch workflow, and the Enterprise Agent Control Plane is still the most mature admin layer.

Best for: High-volume daily coding, speed-sensitive workflows, air-gapped/locked-down environments (Rust binary)

2.49M npm downloads/week — still massive even after Gemini's surge. Rust rewrite — no Node.js dependency. Terminal-Bench 77.3% (#2). GPT-5.4 shipped March 2026. 240+ tok/s with 3-4x better token efficiency than Claude. Cleanest security record in Tier 1. 1M+ first-month users.

Best for: Sandboxed agent execution, security-conscious environments, model flexibility without vendor lock-in

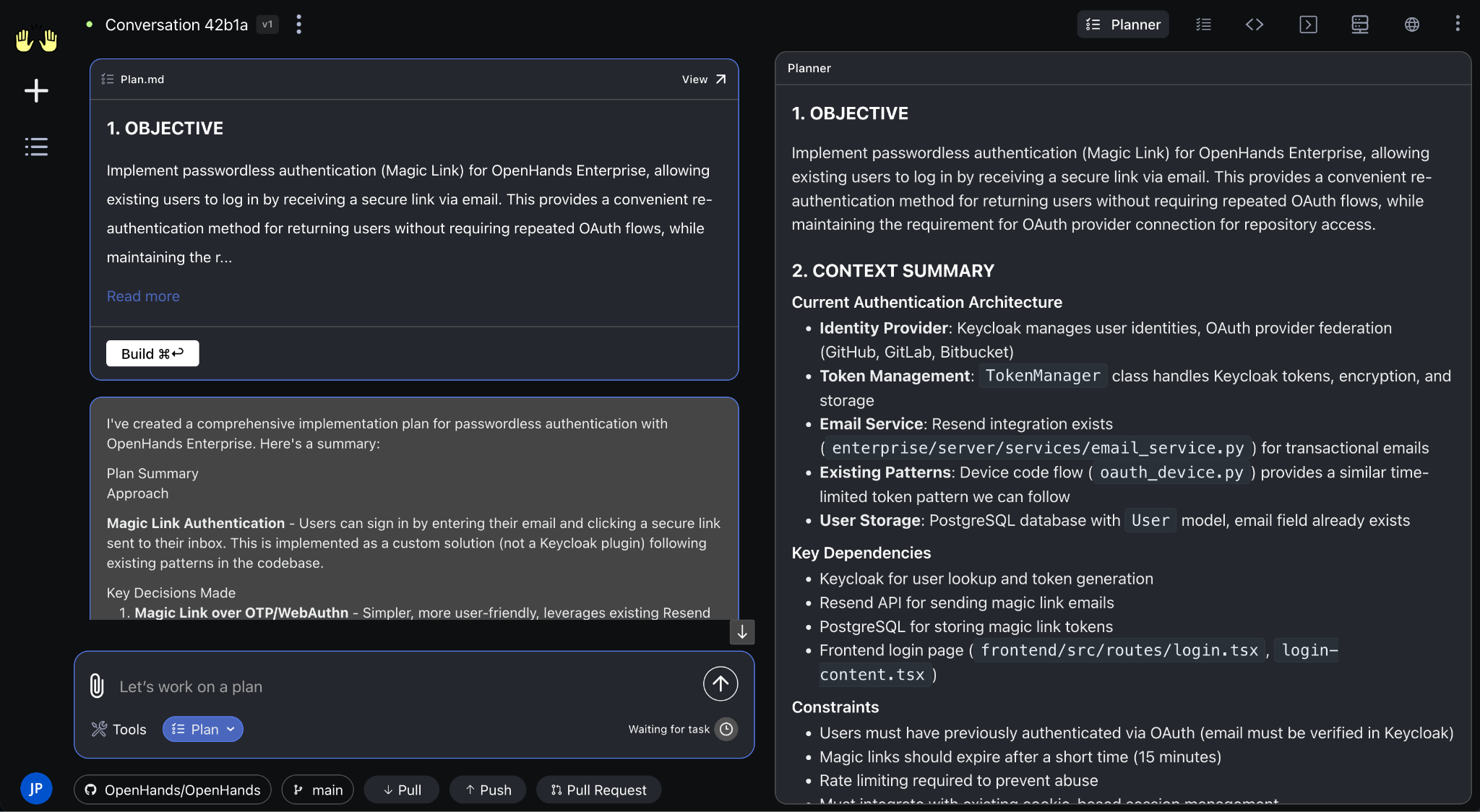

69K stars (#2 among agents). $18.8M Series A. Docker-sandboxed execution — isolated Kubernetes environments. Model-agnostic, MCP support, Planning Agent with Plan/Code mode. CLI release Feb 2026. 72% SWE-bench Verified.

Best for: VS Code-centric developers, enterprise teams with existing VS Code infrastructure

5M installs across VS Code, JetBrains, Cursor, Windsurf — largest VS Code agent user base. $32M funding (Emergence Capital). Named enterprise customers: Salesforce, Samsung, SAP. $1M open-source grant program.

Best for: Free, open-source terminal agent; BYOM flexibility; MCP-heavy workflows; Block ecosystem

33K stars, v1.28.0 released 2026-03-18. Free, Apache 2.0. MCP-first architecture. ACP integration (March 19, 2026) lets devs use existing subscriptions. 60% of Block (12K employees) use it weekly (self-reported). Linux Foundation AAIF governance.

Best for: Local-first/offline coding agents, privacy-sensitive environments, budget-limited teams

Only contender with a competitive local model (Qwen3-Coder-Next: 3B active / 80B MoE, runs on consumer hardware). SEAL standardized 38.70%. Gemini CLI fork — inherits proven architecture. 1K free req/day. 256K context (extendable to 1M). 20K stars.

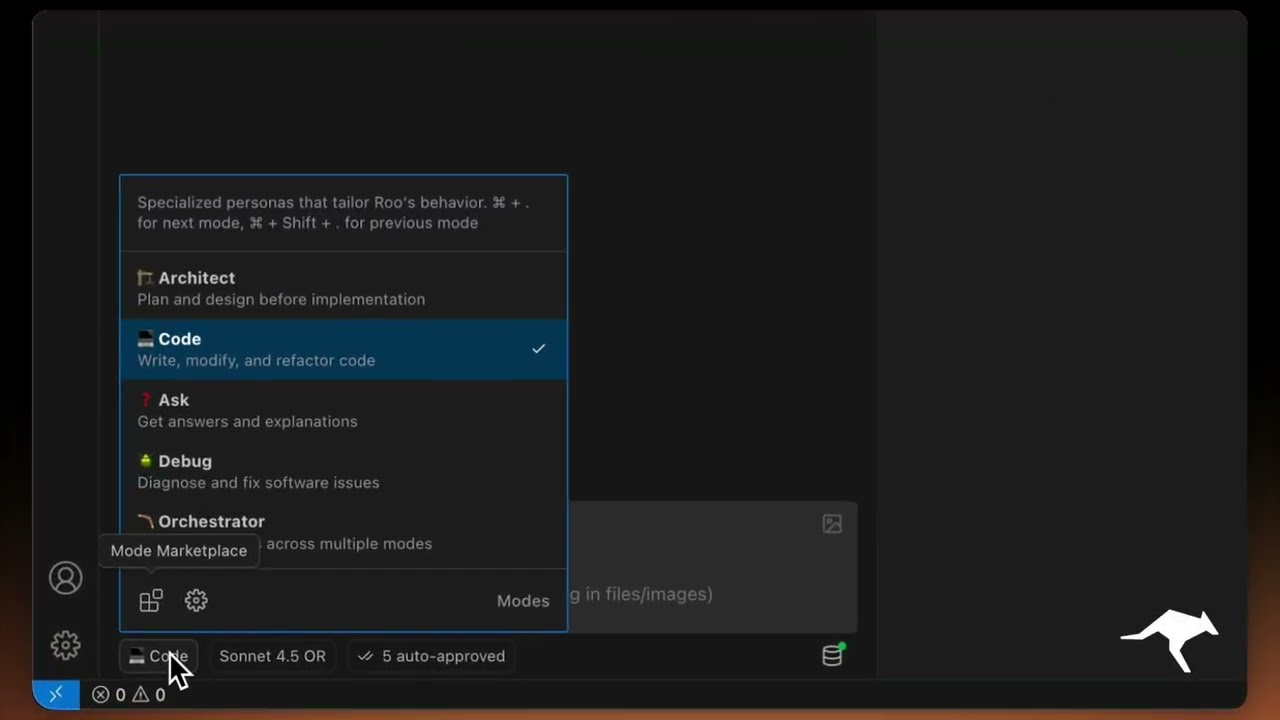

Best for: Security-conscious enterprise teams needing a VS Code agent, specialized agent personas

SOC 2 Type 2 compliance — only VS Code agent with independent auditor verification. Custom Modes (security reviewer, test writer, architect personas with scoped permissions). 5.0/5 VS Code rating on 1.37M installs. 22K stars. Clean security record.

Best for: Git-heavy workflows, multi-provider flexibility, mature battle-tested tool

Category pioneer — original terminal coding agent. 42K stars, 4.1M+ total installations (Morphllm). Best git workflow integration (automatic commits, diff-aware editing). Multi-provider: works with every major LLM provider. 191K PyPI/week.

Best for: JetBrains loyalists wanting BYOK pricing with institutional IDE vendor support

JetBrains distribution: 13M+ user base. BYOK pricing. One-click migration from Claude Code. LLM-agnostic, static analysis integration.

Best for: Background agents enforcing code quality on PRs

2,372,585 VS Code installs (second-highest in IDE segment). 31,935 stars. Last release v1.2.17 (2026-03-13). Pivoted to async CI agents for PR enforcement.

Best for: Teams with large, complex codebases needing deep code intelligence

Most sophisticated sub-agent architecture (Oracle, Librarian, Painter). Sourcegraph code intelligence DNA. 36K npm weekly downloads. Free tier + BYOK.

Best for: Benchmark research, academic reference, issue-level repair evaluation

18,777 stars. Princeton NLP origin. SWE-agent scaffold: 79.2% SWE-bench Verified on Opus 4.5 — original SWE-bench paper.

Best for: Terminal-first developers wanting polished UX, multi-platform support

Best terminal UX in the category. Charmbracelet proven track record (Bubble Tea, 25K+ apps). Multi-model, LSP, MCP, cross-platform. 21K stars, v0.50.1 (2026-03-17). HN: 367 pts.

Best for: Maximum model flexibility, open-source-first teams

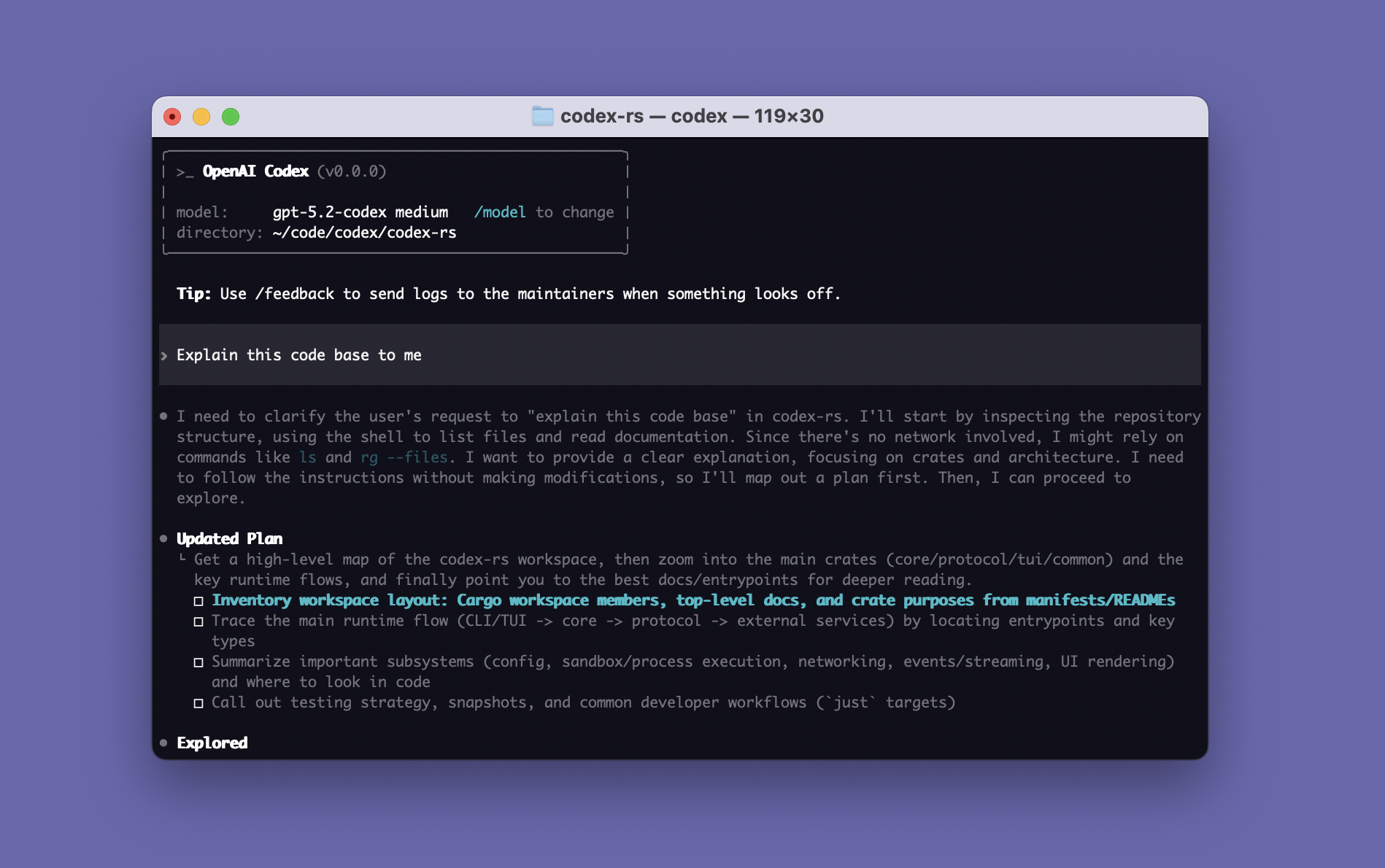

126K+ stars — largest AI coding repo by raw count. 393K npm/week. v1.2.27 active. OpenAI official partnership. 75+ model providers.

Best for: Teams wanting highest raw benchmark number, semantic codebase indexing

51.80% SWE-bench Pro on Augment scaffold — highest raw number in category. Enterprise logos (MongoDB, Spotify). Augment Context Engine.

Best for: Chinese developer ecosystem, teams using Moonshot AI models

7.2K stars, 124K PyPI weekly downloads. K2.5 model (HN: 388 pts). Moonshot AI $1B+ funding.

Best for: Privacy-conscious teams, OpenRouter users

16.8K stars, 131K npm weekly downloads. $8M seed funding. Orchestrator Mode, 500+ models. Growing fast.

Best for: Polished commercial IDE with integrated AI

$29.3B valuation, most adopted commercial AI IDE. Strong UX, agent modes (Jan 2026).

Best for: Terminal-first developers who want an integrated AI environment

26K+ stars, 75.8% SWE-bench Verified, TIME Best Inventions. Full terminal replacement.

Best for: Spec-driven development, AWS integration, GovCloud

Amazon-backed. GovCloud focus. Spec-driven development approach. CLI v1.27 (2026-03-02).

Head to head

Gemini CLI leads Terminal-Bench (78.4% vs no published scores), has SWE-bench Pro 43.30%, and best free tier. Copilot CLI has 15M subscriber distribution and Enterprise Agent Control Plane. Gemini wins on proven capability; Copilot wins on enterprise governance and distribution.

Gemini CLI: free tier (1K req/day), 1M context, 98K stars, Deep Think mode. Codex CLI: Terminal-Bench 77.3% (GPT-5.3-Codex), sandbox-first safety, free with ChatGPT. Gemini wins on cost and context; Codex wins on proven terminal performance and speed.

Both are model-agnostic, but Aider hasn't shipped a release in 7 months while OpenCode ships daily. Aider has verifiable PyPI downloads (183K/week); OpenCode's 5M MAD claim is unverified. Aider's token efficiency (4.2x less than Claude Code) is unmatched.

Gemini CLI now at 43.30% SWE-bench Pro standardized vs Claude Code's 45.89% — gap narrowed to 2.59pp. Gemini wins overwhelmingly on cost (free 1K req/day) and context (1M native). Claude Code wins on adoption (8M vs 647K npm/wk), revenue ($2.5B ARR), and HN mindshare (2,127 vs 1,428 pts). Tool-calling weaknesses keep Gemini at #3.

Amp has the most sophisticated sub-agent architecture (Oracle, Librarian, Painter) from Sourcegraph's code intelligence DNA. Claude Code has 58x more npm downloads (8M vs 139K), published benchmarks (SWE-bench Pro #1), and 24x more HN engagement. Amp is a bet on code intelligence depth; Claude Code is the proven all-rounder.

Public signals

Weekly Gemini releases (v0.35.0) plus doc-confirmed 1M context / 1K free requests elevated it above Codex. Copilot CLI’s v1.0.11 release, 185-upvote Reddit GA thread, and GitHub demo tweet pushed it to #3 despite <10K stars.

100 commits between Jan 29 and Mar 20 (~14/week, 10+ maintainers) underpin the release that shipped `--bare`, improved worktree resume, and richer MCP OAuth — a key reason Claude keeps the top slot.

181-point thread captured a v2.1.0 startup failure; maintainers patched it immediately (GitHub issue #16673). We track these regression cycles because Claude’s trust premium only holds if fixes stay public and fast.

Attackers can pipe `env curl ... | env sh` straight into Copilot CLI without approval. The 62-point HN thread (47183940) amplified it — enterprise rollouts need network policies or sandboxes ready.

Community reproduced GitHub’s GA repo to demo `/clear`, MCP configs, and Autopilot. Small but real usage signal that balances the security watchlist.

Pre-warmed git worktrees (<1s startup), SSH remotes, and first-class support for Claude, Codex, Gemini, Droid, Amp, etc. make it the leading ADE for juggling ranked CLIs.

Multi-worktree multitasking (Codex/Claude/Gemini) with push notifications when an agent finishes and spotlighted terminals. Teams report 2-3× faster runs — on-prem alternative to Emdash.

Preview→expand memory capture feeds Claude/Gemini/Codex sessions with team knowledge while drift detection intervenes mid-task. Addresses the persistent context gap in CLI workflows.

126K+ stars are real but driven by Anthropic OAuth controversy. CVE-2026-22812 (CVSS 8.8-10.0) is a second security incident after the unauthenticated RCE (fixed v1.1.10+). Two serious security incidents make trust story the weakest in category. Drops from #5 to Watch list.

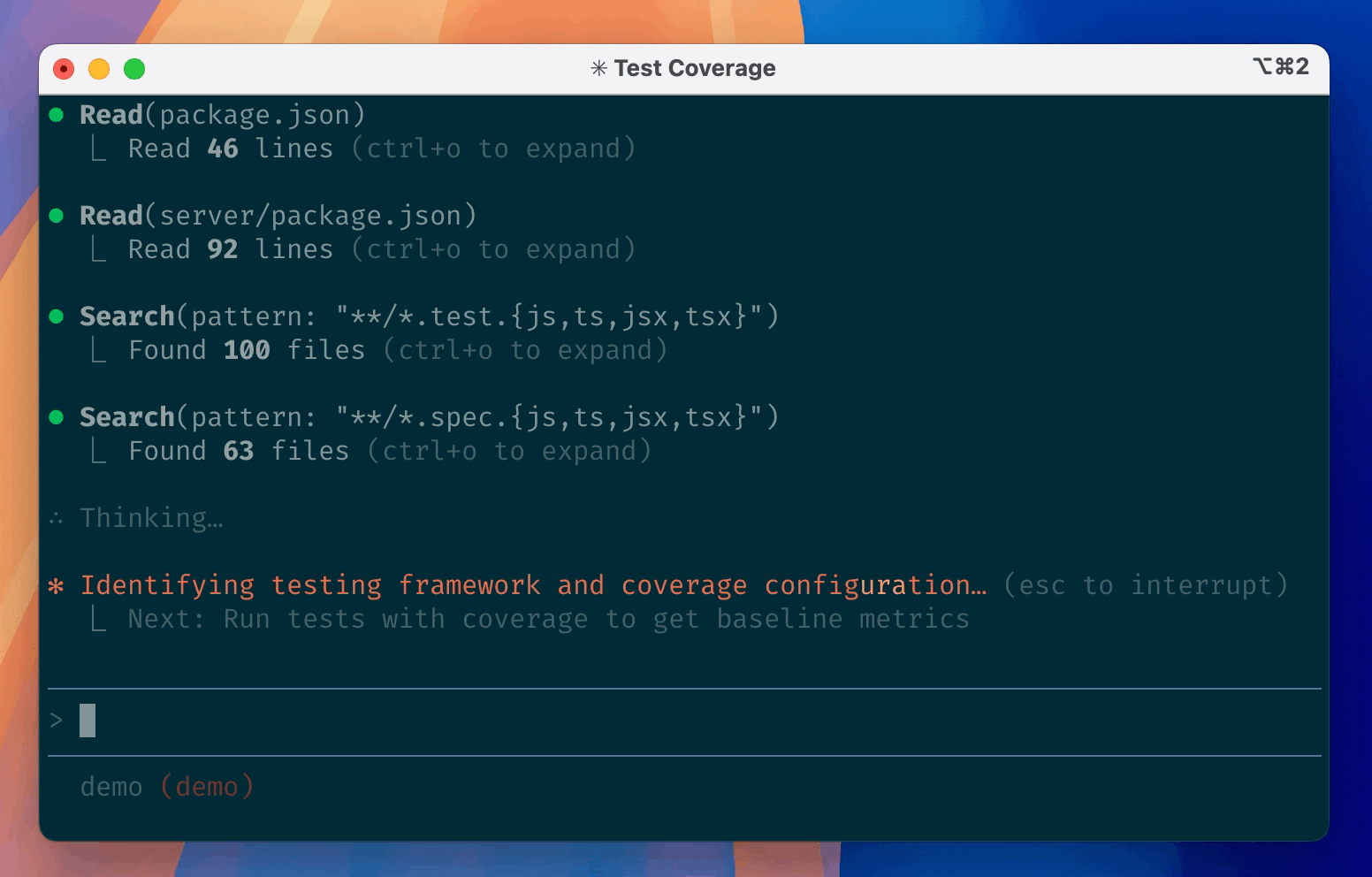

Terminal-Bench 2.0 results: Gemini CLI leads terminal-native tasks at 78.4%, Codex CLI close at 77.3%, Claude Code at 74.7%. All three are competitive — gap between #1 and #3 is only 3.7pp.

Two patched security incidents: hooks injection RCE (fixed v1.0.111) and API token exfiltration (fixed). Codex CLI has the cleanest security record in Tier 1 — zero documented incidents.

Educative.io practical test: Claude Code ~95% first-pass correctness vs Gemini CLI ~50-60%. This 2x quality gap is wider than SWE-bench Pro scores suggest. Practical quality is weighted heavily in this ranking.

69K stars (#2 among agents), Docker-sandboxed Kubernetes environments, model-agnostic with MCP support. CLI release Feb 2026. Planning Agent with Plan/Code mode. Cloud-first adds latency vs terminal-native tools.

METR's March 2026 study: ~50% of SWE-bench-passing PRs would NOT be merged. A 46% SEAL score ≈ 23% 'would actually ship' rate. Practical quality signals (first-pass correctness, switching patterns) carry weight in this ranking.

Morphllm independent test of 15 agents: scaffold maturity gap is 17 problems on same model. Claude Code named 'best AI coding agent for most developers.' Codex CLI's custom scaffold (56.8%) vs SEAL standardized (41.04%) illustrates the gap.

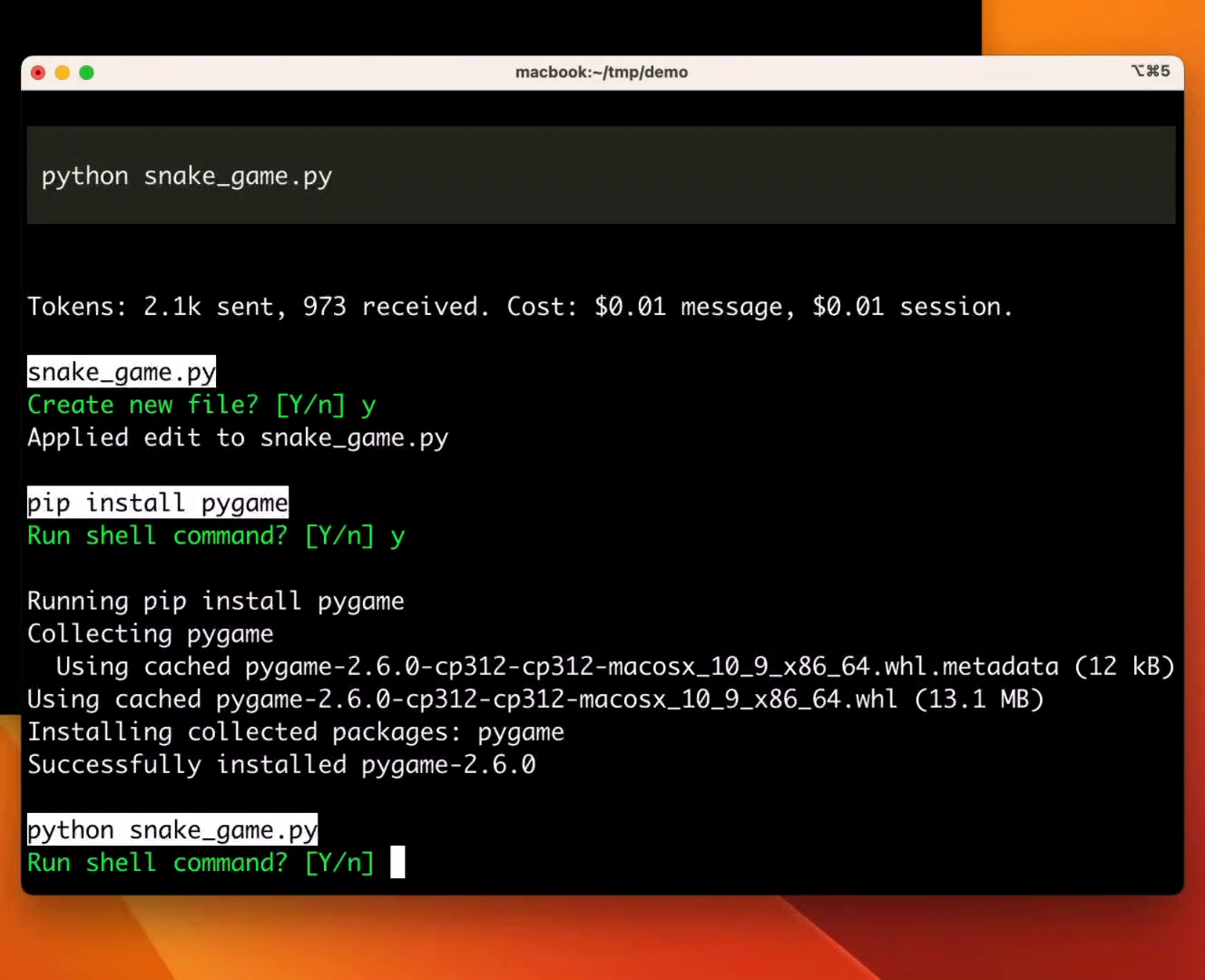

Multiple independent sources converge on using Claude Code for planning/architecture and Codex CLI for implementation. Not a compromise — may be the optimal workflow.

~4% of public GitHub commits, projected 20%+ by EOY 2026. 42,896x growth in 13 months. $2.5B annualized revenue. 8M+ npm weekly downloads — 3x Codex, 12x Gemini.

Independent daily monitoring with 56% baseline pass rate and no statistically significant degradation. Quality regression perception (1,085 HN pts) is community sentiment, not measured reality.

What changes this

Auggie CLI public GA release + independent SWE-bench Pro reproduction → could move to Tier 1 if the 51.80% scaffold advantage holds outside Augment's own benchmark setup.

Gemini CLI publishing a credible SEAL SWE-bench Pro number → could move to #1 or #2 depending on result; currently ranked on traction alone.

Junie CLI post-beta community evidence → JetBrains' 11M+ installed base is large enough that strong first 60 days of public reception would immediately justify a Tier 2 slot.

Cline publishing a credible third-party security audit → would restore trust score and move it back into active Tier 2 consideration.

Aider publishing a SWE-bench Pro standardized number → would likely lock in #2 slot; currently its install verifiability is the strongest non-Anthropic signal in the category.

OpenCode resuming active development and patching the RCE → minimum bar to re-enter the ranking.

Claude Code quality regression persisting (the 'dumbed down' thread had 1,085 pts / 702 comments) → if perception hardens into documented capability regression, Tier 1 position is at risk.

If Gemini CLI fixes the file deletion pattern and files a clean safety record for 3+ months, its free tier + 1M context makes it a serious #2 contender.

If Codex CLI closes the SWE-bench standardized gap while maintaining cost/speed advantages, the #3/#4 ordering could shift.