Latest release notes confirm the new `--bare` flag, worktree resumption improvements, MCP OAuth fixes, and multi-shell channel support — proof that Anthropic continues to ship practical workflow upgrades every week.

Claude Code

Active. Anthropic's official agentic coding CLI. v2.1.81 (Mar 20) shipped `--bare`, smarter worktree resume, and improved MCP OAuth, while the repo crossed 82,204 stars and logged ~14 commits/week across 10+ maintainers. Terminal-native and tool-use-driven, with deep file system and shell access. #1 on SWE-bench Pro standardized (45.89%), ~4% of GitHub public commits (SemiAnalysis), $2.5B annualized revenue, 8M+ npm weekly downloads. Opus 4.6 with 1M context.

Where it wins

#1 SWE-bench Pro standardized (45.89%) — the authoritative benchmark now that Verified is saturated

~4% of GitHub public commits — strongest real-usage signal (SemiAnalysis), $2.5B annualized revenue (fastest enterprise SaaS to $1B ARR — Constellation Research)

8M+ npm weekly downloads — 3x Codex, 12x Gemini. Installs are the hardest metric to game

Opus 4.6 with 1M context — highest-capability model available in a CLI

HN peak engagement 2,127 pts — unmatched community mindshare

~95% first-pass correctness (Educative.io), roughly 1.6-1.9x Gemini CLI's 50-60%

Wins blind code quality tests 67% of the time across independent evaluations

Independent daily quality monitoring with no degradation detected (MarginLab, p<0.05)

Real-world daily cost ~$6 avg, <$12 at p90
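The daily cost figures above can be turned into a rough monthly budget. The sketch below is illustrative only: the 22-working-day and 30-calendar-day months are assumptions rather than figures from the source, and the function name is hypothetical.

```python
def monthly_cost(daily_usd: float, days_per_month: int) -> float:
    """Project a monthly spend from a daily spend (simple multiplication)."""
    return daily_usd * days_per_month


AVG_DAILY_USD = 6.0   # "~$6 avg" from the text
P90_DAILY_USD = 12.0  # "<$12 at p90" from the text, used as an upper bound

# Assumed usage patterns (not from the source): weekdays only vs. every day.
for label, days in (("22 working days", 22), ("30 calendar days", 30)):
    avg = monthly_cost(AVG_DAILY_USD, days)
    p90 = monthly_cost(P90_DAILY_USD, days)
    print(f"{label}: ~${avg:.0f} average, under ${p90:.0f} at p90")
```

Under these assumptions an average user lands somewhere between roughly $132 and $180 per month, with the p90 ceiling between $264 and $360.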

Where to be skeptical

Rate limits are the #1 complaint (1,085 pts HN thread dedicated to this)

Costs $200+/month at heavy usage — 2-3x more expensive per task than Codex CLI due to 3-4x higher token consumption

CVE-2025-59536 (CVSS 8.7) — hooks injection RCE, fixed v1.0.111. CVE-2026-21852 — API token exfiltration, fixed. Two patched security incidents

Terminal-Bench 2.0: 74.7% (#3) — improved but still trails Gemini CLI (78.4%) and Codex CLI (77.3%)

Tied to Anthropic models only

Anthropic blocked third-party subscription use, angering users (625 pts HN)

Quality regression perception: 'Claude Code is being dumbed down?' HN thread (1,085 pts, 702 comments, Feb 2026) — MarginLab monitoring shows no statistical regression but community trust is a live issue

Editorial verdict

The #1 coding CLI agent. It leads SWE-bench Pro standardized (45.89%), wins independent head-to-heads on reasoning depth, and accounts for ~4% of GitHub public commits. Rate limits are the #1 complaint, and it costs 2-3x more per task than Codex CLI due to higher token consumption.

How to get started

Install with the recommended script, curl -fsSL https://claude.ai/install.sh | bash on macOS/Linux (npm install -g @anthropic-ai/claude-code still appears in the README but is marked deprecated). Then navigate to the repo you want to work on and run claude. For each task, ask it to draft a plan first and review that plan before you approve any edits.

Videos

Reviews, tutorials, and comparisons from the community.

Claude Code Tutorial #1 - Introduction & Setup

Claude Code Tutorial for Beginners

Claude Code - Full Tutorial for Beginners

Related

Coding CLIs / Code Agents

Complex multi-file refactors, framework migrations, architecture — any task where first-pass quality matters most

Teams of Agents / Multi-Agent Orchestration

Related but not ranked

Software Factories

Developers who want the most capable coding agent — complex multi-file refactors, greenfield projects, long-running autonomous execution

LangGraph

95 · #1 Python agent framework by production evidence: 40.2M PyPI downloads/month, Fortune 500 deployments (LinkedIn, Uber, Replit, Elastic, Klarna, Cloudflare, Coinbase), ~400 LangGraph Platform companies, LangSmith rated best-in-class observability. Stable v1.x API, model-agnostic, MCP support.

Pydantic AI

95 · #3 Python agent framework by downloads: 15.6M PyPI/month. Built by the Pydantic team. Runtime type enforcement is a genuine differentiator no other framework offers. V1 shipped with Temporal integration for durable execution and Logfire observability. Emerging pattern: 'Pydantic AI for agent logic, LangGraph for orchestration' (ZenML).

AutoGen (Microsoft)

95 · ⚠️ MAINTENANCE MODE: Microsoft officially confirmed bug fixes and security patches only, no new features (VentureBeat 2026-02-19). 55.9K stars but only 1.57M PyPI downloads/month, a DL/star ratio of 28, the most inflated among active frameworks. Being replaced by Microsoft Agent Framework (AutoGen + Semantic Kernel merge, GA targeted ~Q2 2026). Teams on AutoGen should plan migration.

CrewAI

93 · #2 Python agent framework: 5.7M PyPI downloads/month (3× growth in 6 months), Fortune 500 customers (PwC, IBM, Capgemini, NVIDIA, DocuSign), YAML-driven role-based orchestration rated 'fastest to prototype' in 2026 independent reviews. CVE-responsive: gitpython path traversal fixed in v1.11.0.
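The "DL/star ratio of 28" quoted in the AutoGen entry can be reproduced from the two figures given there. This is a minimal sketch, assuming the ratio means monthly PyPI downloads divided by GitHub stars; the source does not spell out the formula, and the function name is hypothetical.

```python
def downloads_per_star(monthly_downloads: float, stars: float) -> float:
    """Monthly downloads divided by GitHub stars.

    A low value suggests star count has outpaced real installs.
    """
    if stars <= 0:
        raise ValueError("stars must be positive")
    return monthly_downloads / stars


# Figures quoted in the AutoGen entry: 1.57M PyPI/month, 55.9K stars.
autogen_ratio = downloads_per_star(1.57e6, 55.9e3)
print(f"AutoGen: {autogen_ratio:.1f} downloads per star")
```

The other entries in this list do not quote star counts, so the same comparison cannot be run for them from this page alone.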

Public evidence

Users documented a v2.1.0 startup failure ('update to claude 2.1.0 then run claude. see the error.'). Maintainers acknowledged it and patched it quickly (https://github.com/anthropics/claude-code/issues/16673), reinforcing the transparent reliability story.

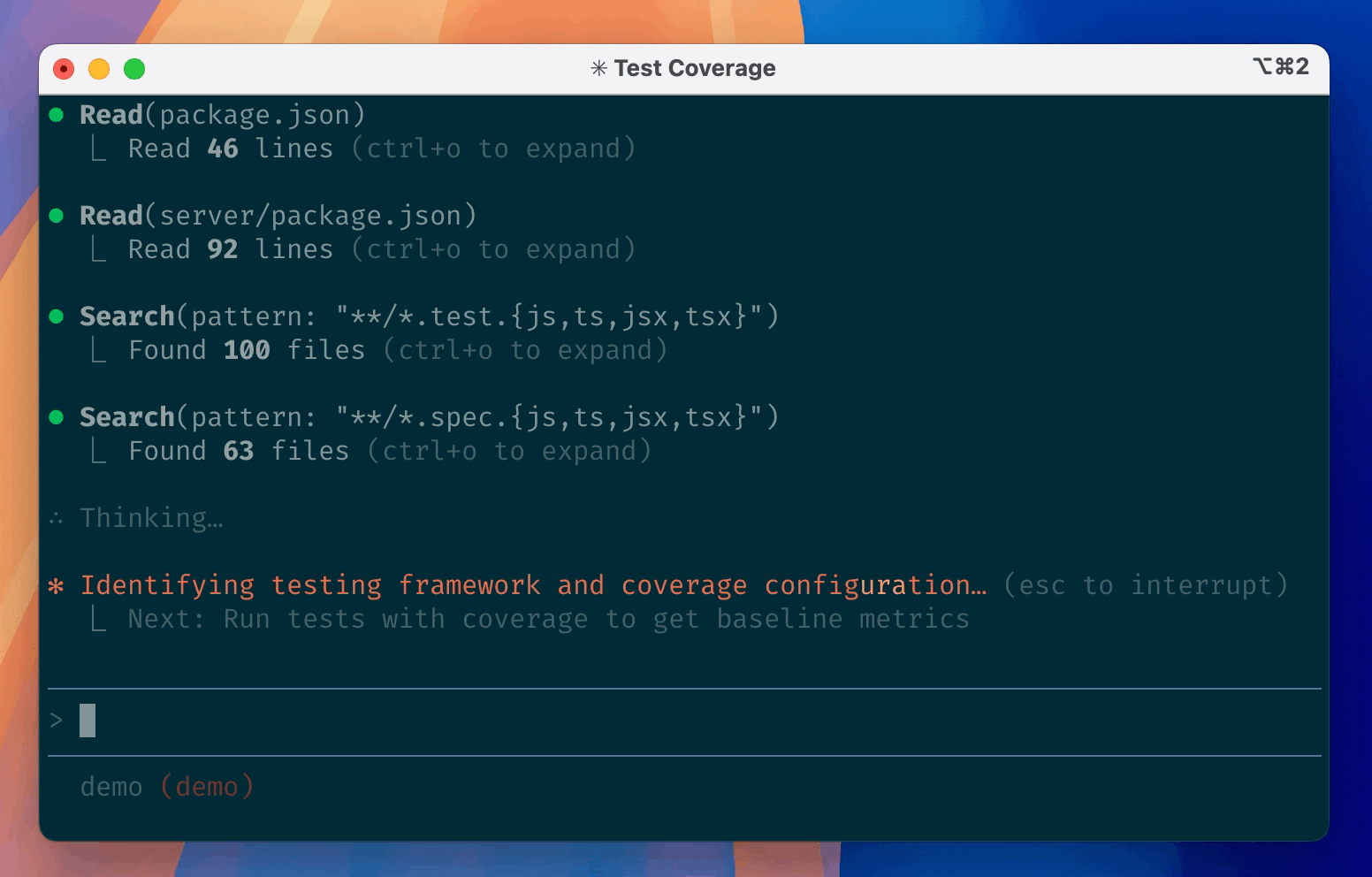

Step-by-step walkthrough of read/edit/run primitives sparked comparisons with Copilot CLI and Gemini CLI in replies, proving the CLI workflow is still the reference onboarding story for newcomers.

Claims Claude Code is best AI coding agent. Reference for feature claims only — Faros promoting Faros.

Ranks Claude Code highest for complex reasoning. Useful comparison structure but company blog, not independent review.

Major front-page HN thread on the Claude Code 2.0 release. Community consensus positioned it as the standard agentic coding CLI all others are measured against.

In-depth practitioner report from a real product team on daily Claude Code usage over 6 weeks. One of the highest-comment HN threads on any AI coding tool.

Tracks Claude Code VS Code extension installs surging from 17.7M to 29M since Jan 2026. Cites UC San Diego/Cornell survey where Claude Code (58 users) edged out GitHub Copilot (53 users) among 99 professional devs.

Swapping models changed scores ~1%. Swapping the harness changed them 22%. Claude Code's agent engineering (Agent Teams, parallel sub-agents) is the category's deepest scaffolding advantage.

Claude Code leads SWE-bench Pro standardized at 45.89% vs Codex CLI's 41.0%. SWE-bench Verified is saturated (top 5 within 1 point). Pro standardized is the fair comparison — custom scaffold scores (Codex 56.8%) are not comparable.

~4% of public GitHub commits, projected 20%+ by EOY 2026. 42,896x growth in 13 months. $2.5B annualized revenue. The hardest real-usage metric in the category.
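To put "42,896x growth in 13 months" in perspective, the sketch below converts it into an implied average monthly multiplier. It assumes smooth compound growth across the whole period, which is a simplification; the source gives only the endpoints, and the function name is hypothetical.

```python
TOTAL_GROWTH = 42_896  # "42,896x growth" from the text
MONTHS = 13            # "in 13 months" from the text


def monthly_multiplier(total_growth: float, months: int) -> float:
    """Average per-month growth factor under constant compound growth."""
    if total_growth <= 0 or months <= 0:
        raise ValueError("total_growth and months must be positive")
    return total_growth ** (1 / months)


m = monthly_multiplier(TOTAL_GROWTH, MONTHS)
print(f"Implied average growth: about {m:.2f}x per month")
```

In other words, the commit share would have had to more than double every month on average to reach that total.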

56% baseline pass rate, no statistically significant degradation detected. No other tool in the category has this level of ongoing independent quality assurance.

One of the highest-engagement personal testimonials in the coding CLI category. Signals cultural mindshare beyond pure developer tooling.

$2.5B annualized revenue, 500+ customers at $1M+/year. Fastest enterprise SaaS to $1B ARR in history. 8M+ npm weekly downloads — 3x Codex CLI, 12x Gemini CLI.

8M+ weekly downloads is the hardest-to-game traction metric in the category. 3x Codex CLI (2.6M), 12x Gemini CLI (647K). Installs correlate with actual developer usage, not social media hype.
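The "3x" and "12x" multiples follow directly from the weekly download counts quoted above. A small sketch of the arithmetic, treating "8M+" as exactly 8,000,000 (an assumption, since the source gives only a lower bound); the dictionary and function names are hypothetical.

```python
WEEKLY_DOWNLOADS = {
    "Claude Code": 8_000_000,  # "8M+" in the text, treated as a lower bound
    "Codex CLI": 2_600_000,    # "2.6M" in the text
    "Gemini CLI": 647_000,     # "647K" in the text
}


def multiple_of(baseline: str, other: str) -> float:
    """How many times larger the baseline's weekly downloads are."""
    return WEEKLY_DOWNLOADS[baseline] / WEEKLY_DOWNLOADS[other]


for rival in ("Codex CLI", "Gemini CLI"):
    ratio = multiple_of("Claude Code", rival)
    print(f"Claude Code vs {rival}: {ratio:.1f}x")
```

Because the Claude Code figure is a floor, the true multiples would be at least these values.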

Maintainer merge rates are ~24pp lower than automated grading. Claude Code's 'deepest reasoning' positioning suggests it would benefit from a maintainer-merge-rate benchmark. Validates quality-over-speed thesis.

Claude Code completed a React project in 1h17m single-shot. Gemini CLI took 2h02m with multiple retries. Demonstrates Claude Code's reasoning advantage on practical tasks beyond synthetic benchmarks.

Morph's independent Tier 1 classification. Called Claude Code 'deepest reasoning on hard problems — best for complex refactors, unfamiliar codebases, architectural decisions.' Only Claude Code and Codex CLI made Tier 1.

High-engagement thread questioning whether Claude 4.5 has regressed in quality. MarginLab's independent monitoring found no statistically significant degradation (p<0.05), but community perception of quality regression is real and ongoing. The trust cost of this thread is itself a factor.

Morphllm's independent comparison of 15 coding agents: Claude Code classified as 'best AI coding agent for most developers.' Same model can score 17 problems apart in different agents — scaffold maturity is the key differentiator.

"4% of GitHub public commits are being authored by Claude Code right now... 42,896x growth in 13 months." (Dylan Patel, SemiAnalysis, Feb 2026). The single most cited real-usage metric in the category.

First native IDE integration by a major platform vendor. Developers get full Claude Code in Xcode — including subagents, background tasks, and plugins. Gives distribution to all macOS/iOS developers.

Developer preference strongly favors Claude Code at 46% 'most loved' rating. Cursor at 19%, Copilot at 9%. Strongest developer sentiment signal in the category.

Raw GitHub source

GitHub README peek

Constrained peek so you can sanity-check the source material without leaving the site.

Claude Code

Claude Code is an agentic coding tool that lives in your terminal, understands your codebase, and helps you code faster by executing routine tasks, explaining complex code, and handling git workflows -- all through natural language commands. Use it in your terminal, IDE, or tag @claude on GitHub.

Learn more in the official documentation.

Get started

Note: Installation via npm is deprecated. Use one of the recommended methods below.

For more installation options, uninstall steps, and troubleshooting, see the setup documentation.

- Install Claude Code:

MacOS/Linux (Recommended):

curl -fsSL https://claude.ai/install.sh | bash

Homebrew (MacOS/Linux):

brew install --cask claude-code

Windows (Recommended):

irm https://claude.ai/install.ps1 | iex

WinGet (Windows):

winget install Anthropic.ClaudeCode

NPM (Deprecated):

npm install -g @anthropic-ai/claude-code

- Navigate to your project directory and run `claude`.

Plugins

This repository includes several Claude Code plugins that extend functionality with custom commands and agents. See the plugins directory for detailed documentation on available plugins.

Reporting Bugs

We welcome your feedback. Use the /bug command to report issues directly within Claude Code, or file a GitHub issue.

Connect on Discord

Join the Claude Developers Discord to connect with other developers using Claude Code. Get help, share feedback, and discuss your projects with the community.

Data collection, usage, and retention

When you use Claude Code, we collect feedback, which includes usage data (such as code acceptance or rejections), associated conversation data, and user feedback submitted via the /bug command.

How we use your data

See our data usage policies.

Privacy safeguards

We have implemented several safeguards to protect your data, including limited retention periods for sensitive information, restricted access to user session data, and clear policies against using feedback for model training.

For full details, please review our Commercial Terms of Service and Privacy Policy.