All Solutions

200 solutions tracked across 23 problem spaces. Each has an editorial verdict, source links, and evidence.

Figma MCP Server Guide

active · Official · 74

The trust leader with unmatched long-term durability: triple partnership with OpenAI Codex, GitHub Copilot, and Claude Code. Bidirectional since Mar 6, 2026. Wins specifically when your team has Code Connect configured. Without it, Framelink produces cleaner context. Pricing is the primary barrier: free tier (6 calls/month) is non-functional.

Framelink / Figma-Context-MCP

active · 86

Best read-only design-to-code MCP. Use this unless you have Code Connect configured: 25% smaller output than Official, and it avoids prescriptive code that poisons LLM context. Works on free Figma plans with the broadest editor support.

Figma-use

active · 63

Conditional pick. Strongest HN validation in the write-access lane, but a solo maintainer and a potential OpenPencil pivot raise durability concerns. Use if you want a CLI-first workflow without a Figma plugin.

Vibma

watch · 63

Emerging. Only tool publishing model-specific design quality benchmarks. Harness engineering angle is genuinely differentiated, but its Show HN post got only 2 points and no independent reviews were found.

Cursor Talk to Figma

active · 83

Best general-purpose write-access Figma MCP for individual developers and small teams. More accessible than Console MCP, works on free Figma plans, lower setup complexity. Dropped to #4 because Console MCP's Uber validation is stronger enterprise evidence.

Figma Console MCP

active · 85

The enterprise write-access leader. Uber's production uSpec validates this at scale: automated component specs across 7 stacks, accessibility in under 2 minutes. Hockey-stick npm trajectory is the fastest-growing in the category. Local WebSocket means no rate limits and no data leaving your network.

Penpot MCP

watch · Official · 54

The open-source design MCP lane exists but isn't production-ready yet. MCP is now integrated into the main Penpot repo (standalone repo archived). Watch item: if development resumes and a beta launches, it moves up.

Claude Talk to Figma

active · 59

Best Claude-specific write-access option. DXT installer reduces setup to one click for Claude Desktop. If you're all-in on Claude Code or Claude Desktop and need write access to Figma without paying for Dev Mode, this is the natural pick.

Onlook

active · 84

Different lane from Figma MCP tools: not a Figma bridge, but a direct design-in-code editor. 24,918 stars and two HN hits above 200 points make this the most-starred tool in the UX/UI category. Best for frontend teams where designers work directly in the Next.js + Tailwind codebase and want to eliminate the Figma-to-code translation step.

Excalidraw MCP

active · Official · 71

Clear diagramming lane leader. 3,371 stars and official Excalidraw org backing make it the default for AI-assisted diagramming. Different workflow from design-to-code: listed in UX/UI for visibility but doesn't compete with Figma MCPs.

Kombai

active · 40

Wins on fidelity in every controlled comparison found. FreeCodeCamp tested 75–80% accuracy vs 65–70% for Figma MCP+LLM. Not an MCP server but a specialized Figma plugin plus a proprietary AI engine. Best for teams where pixel-perfect output matters more than transparency or cost.

Google Stitch

watch · Official · 40

Provisional #1 in AI-native design creation. Google-backed, free, and the Mar 18 overhaul caused an 8.8% Figma stock drop; the market treats this as a serious threat to Figma's moat. MCP server confirmed. But the update is 2 days old with zero usage evidence. Watch status until adoption data appears.

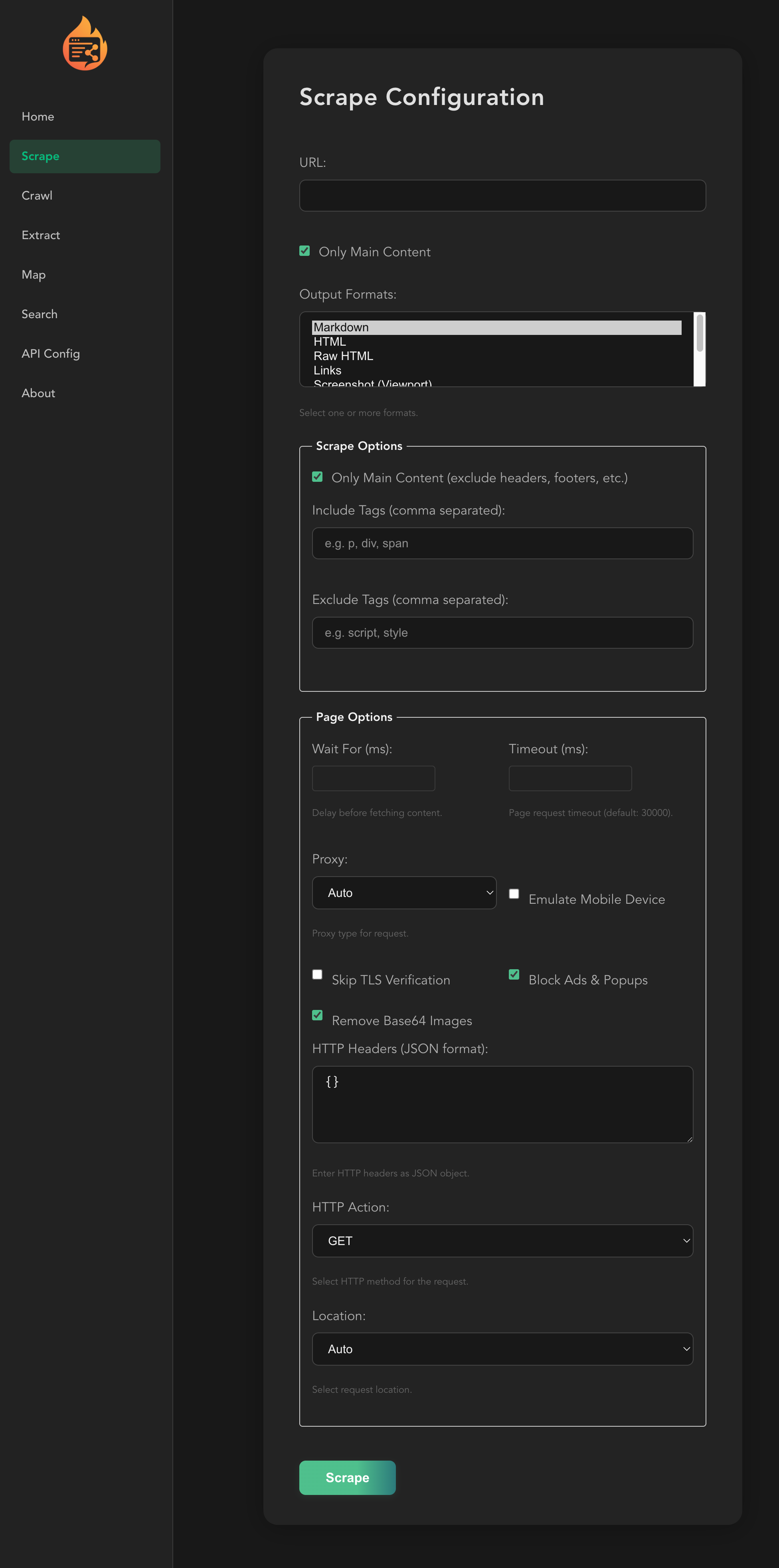

Firecrawl MCP Server

active · Official · 72

#1 in product-business-development by volume and evidence. 5,809 stars, 50.6K npm/wk (highest in category), 83% benchmark accuracy (AIMultiple), F1 0.638 (SearchMCP). Morph confirms 89% recall, 95.3% success rate. Four independent sources confirm extraction superiority.

Exa MCP Server

active · Official · 87

#8 in product-business-development, #2 research tool. 4,050 stars, 9.3K npm/wk, 19.7K PulseMCP/wk (#56 global). Best for semantic discovery ('find companies like X'). exa-code for coding agents is a unique differentiator. AIMultiple benchmark tested extraction, not discovery, so its 23% score undersells Exa's actual strength.

MCP Atlassian

active · 85

#2 overall, #1 enterprise operating surface for teams on the Atlassian stack. 4,666 stars, deepest tool set (72 tools), 139K PyPI weekly downloads, 140K PulseMCP/wk (#14 global). On-prem/Data Center support is the key moat vs official Rovo MCP.

Notion MCP Server

active · Official · 86

#3 overall, #1 startup operating surface: 47K+ npm weekly downloads (17x Atlassian official MCP). Token-optimized Markdown responses show MCP-specific engineering. Notion 3.3 Custom Agents ecosystem positions MCP as a multi-tool hub.

Slack MCP Server

active · 72

#10 in product-business-development. Communication lane: real but early. korotovsky community server at 1,461 stars shows genuine developer adoption. Official GA with 50+ partners (Anthropic, Google, OpenAI, Perplexity). PulseMCP 1.6K/wk (#602 weekly), 23.7K all-time. Multiple independent publications covered the GA launch (KMWorld, SmallBizTrends, Salesforce Ben). The 25x growth claim is self-reported with no disclosed baseline.

Google Workspace MCP

active · 80

#6 overall. Best broad operating skill for teams living in Google Workspace. Breadth is real (100+ tools, 12+ services), but low npm adoption (604/week), community-only status, and existential threat from Google's own CLI weigh against it.

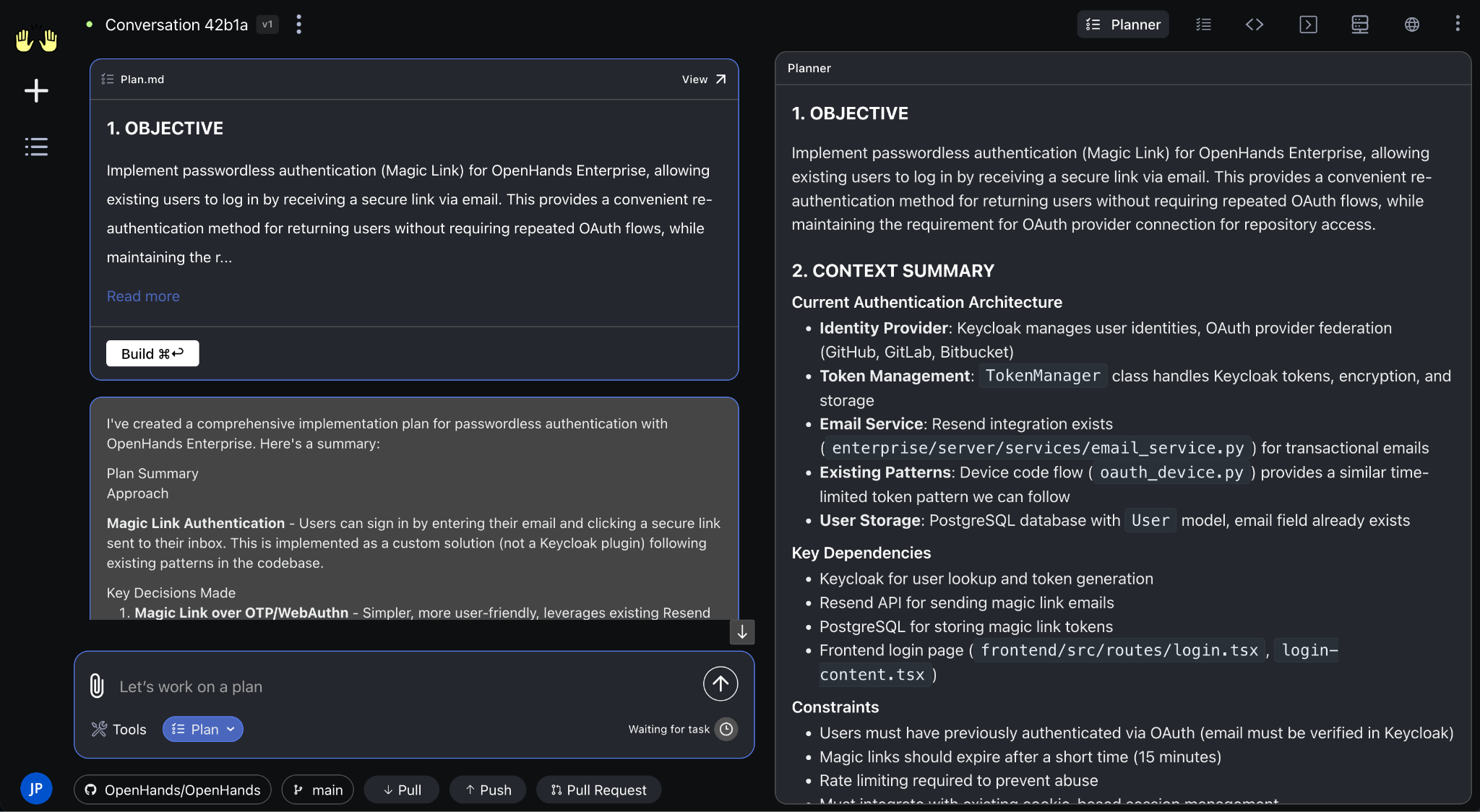

OpenHands

active · 88

Category leader. No other tool has the combination of open-source community (69K stars, 455 contributors), real download volume (1M/month), venture backing ($18.8M), hardware partnerships (AMD), and benchmark leadership (SWE-bench Verified 72%). Gap to #2 is enormous.

Ralph Loop Agent

active · Official · 60

Best reference when the team wants a crisp loop pattern instead of a huge agent platform. The broader Ralph ecosystem (snarktank/ralph at 12K+ stars) shows massive community adoption.

SWE-agent

stale · 79

Research/academic reference only. Princeton pedigree and 79.2% SWE-bench Verified on Opus 4.5 scaffold give it strong benchmark credibility. But no release in 10 months (last: v1.1.0, 2025-05-22) puts it outside the production cadence of all active tools. Use as a benchmark scaffold reference, not as a production coding CLI.

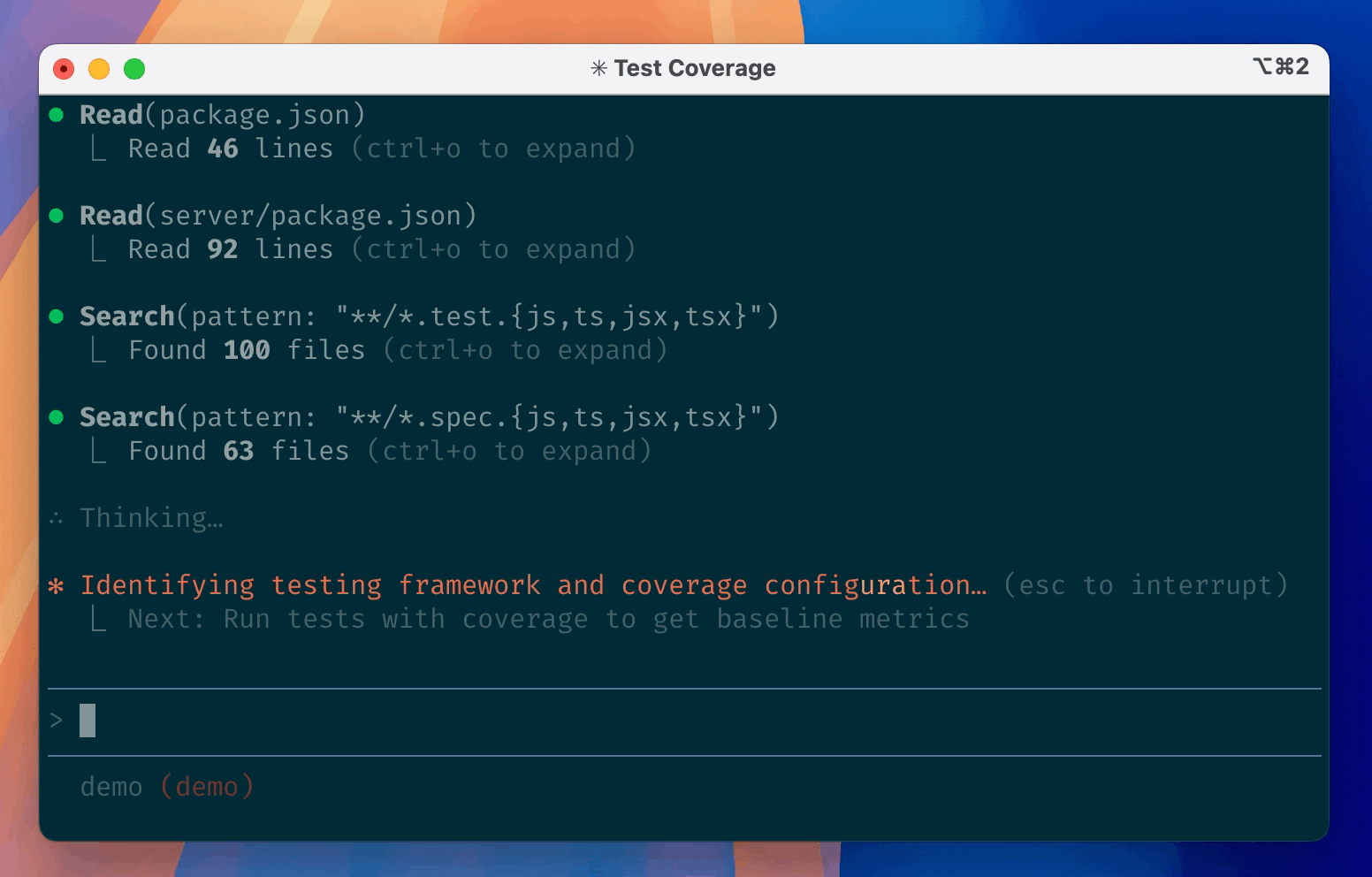

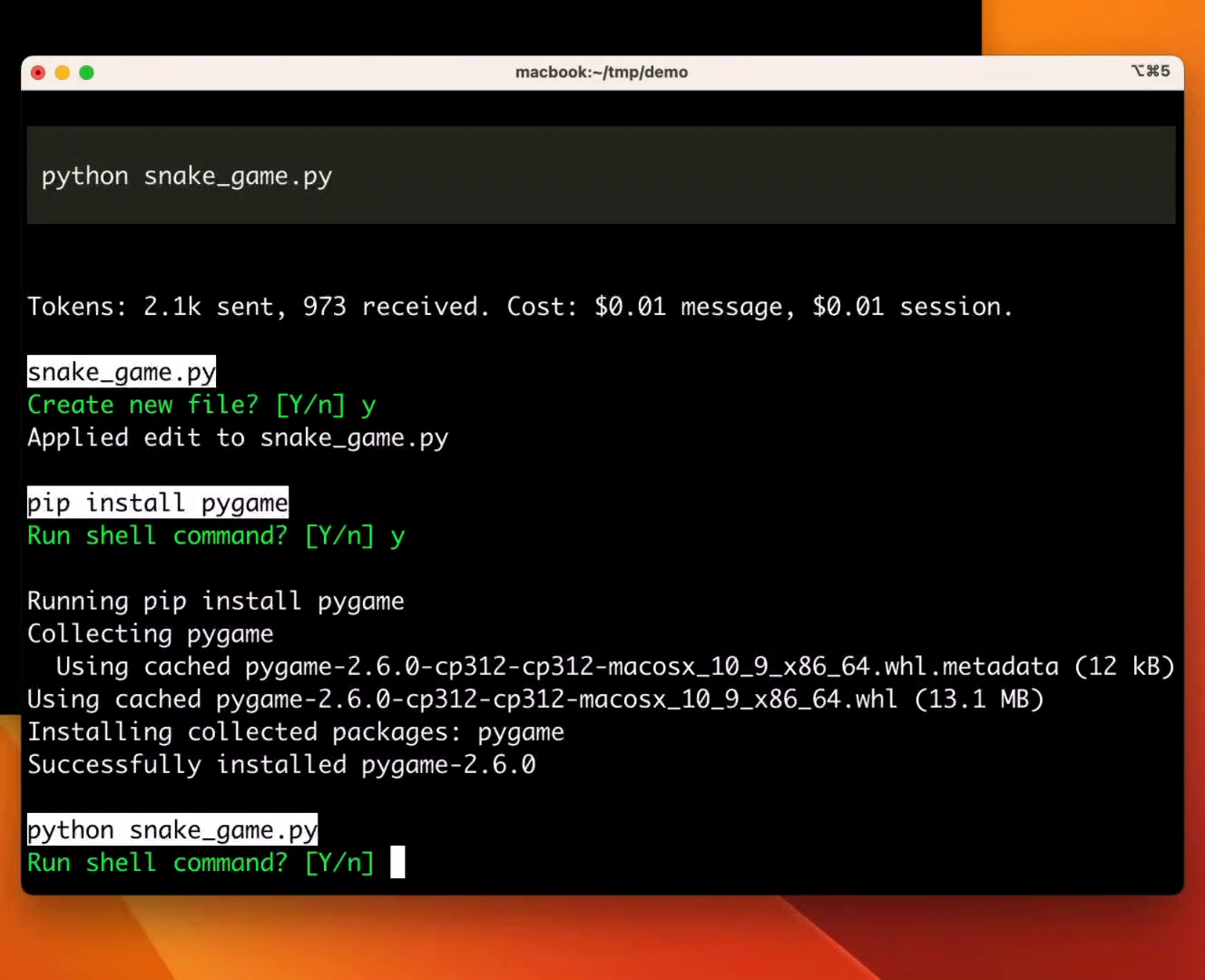

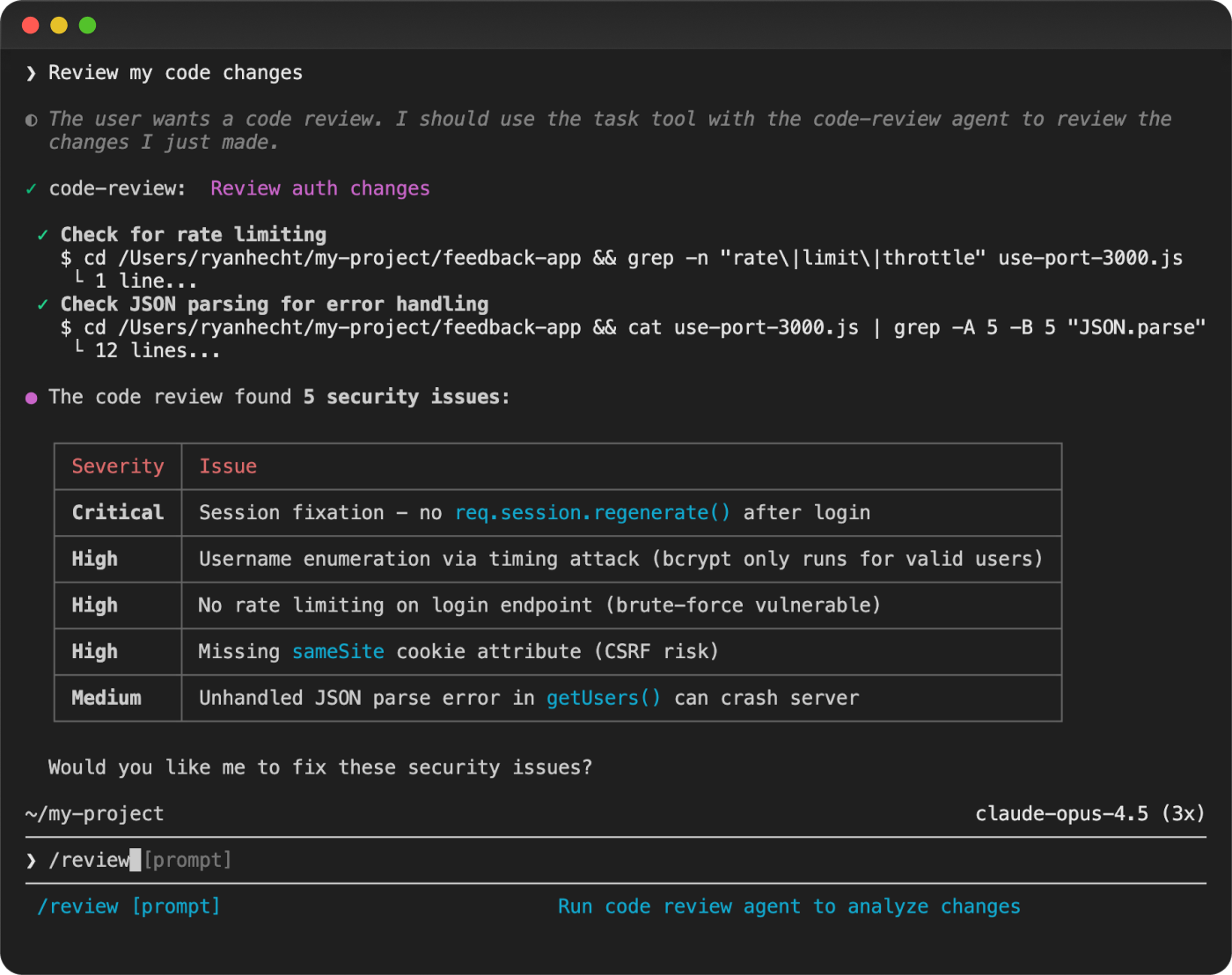

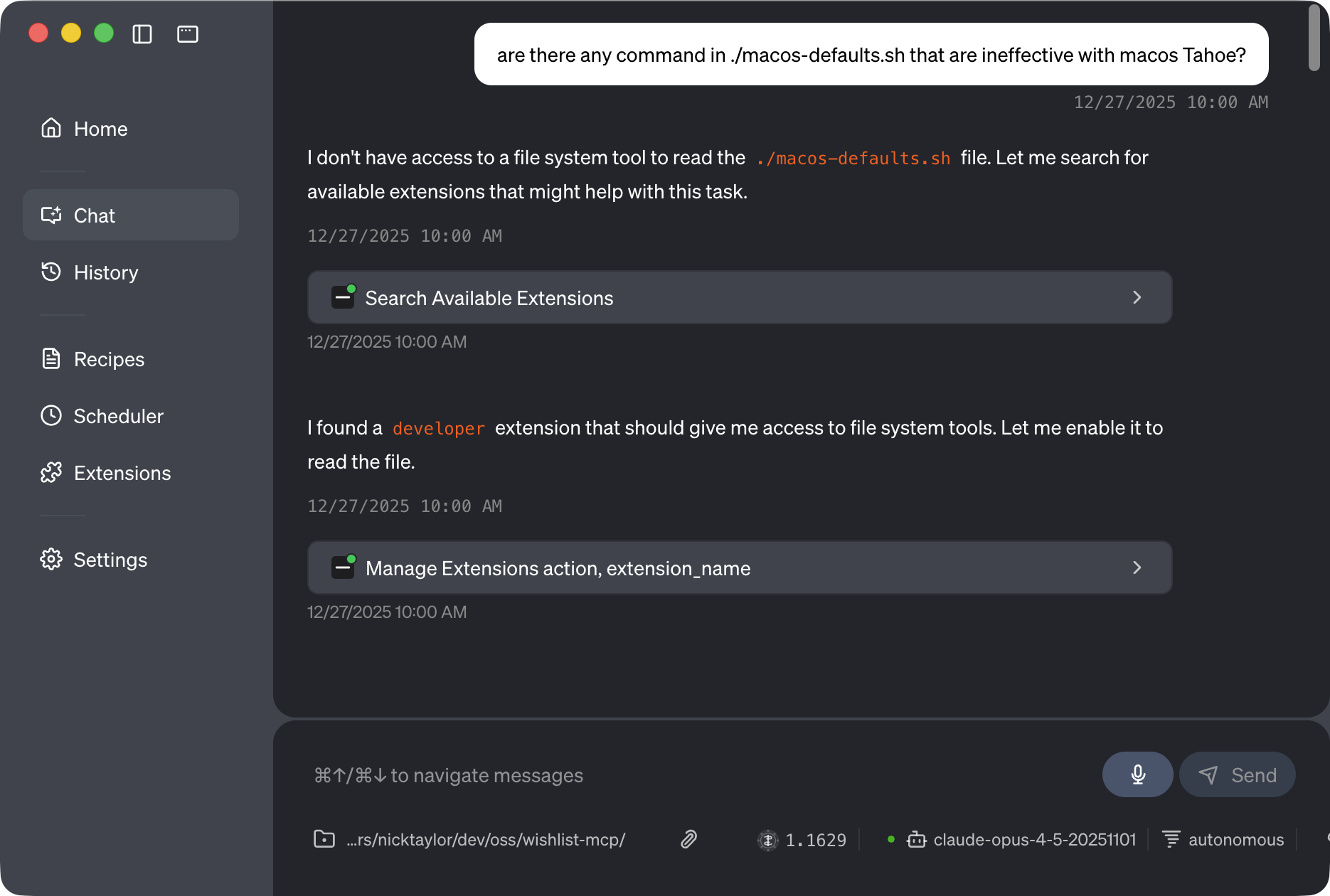

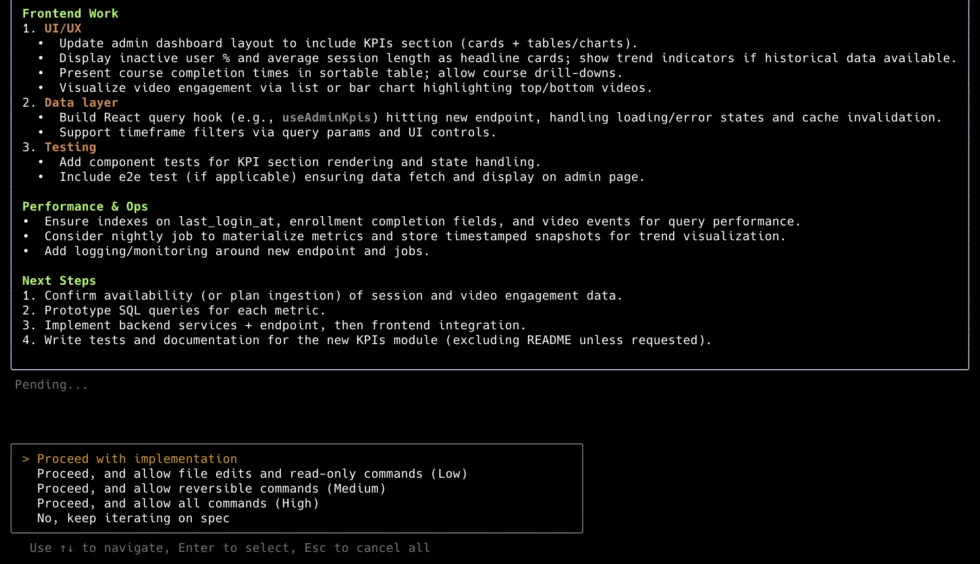

Claude Code

active · Official · 98

The #1 coding CLI agent. Leads SWE-bench Pro standardized (45.89%), wins independent head-to-heads on reasoning depth, and accounts for ~4% of GitHub commits. Rate limits are the #1 complaint. Costs 2-3x more per task than Codex CLI due to higher token consumption.

Aider

active · 86

#7 coding CLI. Strong verification story (191K PyPI/week, 5.7M lifetime), but Codex CLI at 2.49M npm/week and Gemini CLI at 678K/week have overtaken Aider's download rank. Category pressure is real: HN thread 'Claude Code with Sonnet 4 is so good I have stopped using Aider' (#44154020). Best for Python devs who want fine-grained model control and git-native workflow. v0.86.2 (2026-02-12) is 5 weeks behind competitors shipping daily.

Continue (Continuous AI)

active · 82

Best pick for teams that want background AI agents enforcing code quality on PRs, not real-time autocomplete. The pivot repositioned it away from individual devs toward team CI workflows.

OpenCode

active · 88

Watch. Two serious security incidents (unauthenticated RCE fixed in v1.1.10+, CVE-2026-22812 CVSS 8.8-10.0) make its trust story the weakest in the category. 126K+ stars are real, but the star surge was driven by the Anthropic OAuth controversy: brand-driven, not organic product traction. OpenAI partnership and 393K npm/week are real signals, but the security history removes it from the main ranking pending security posture improvement.

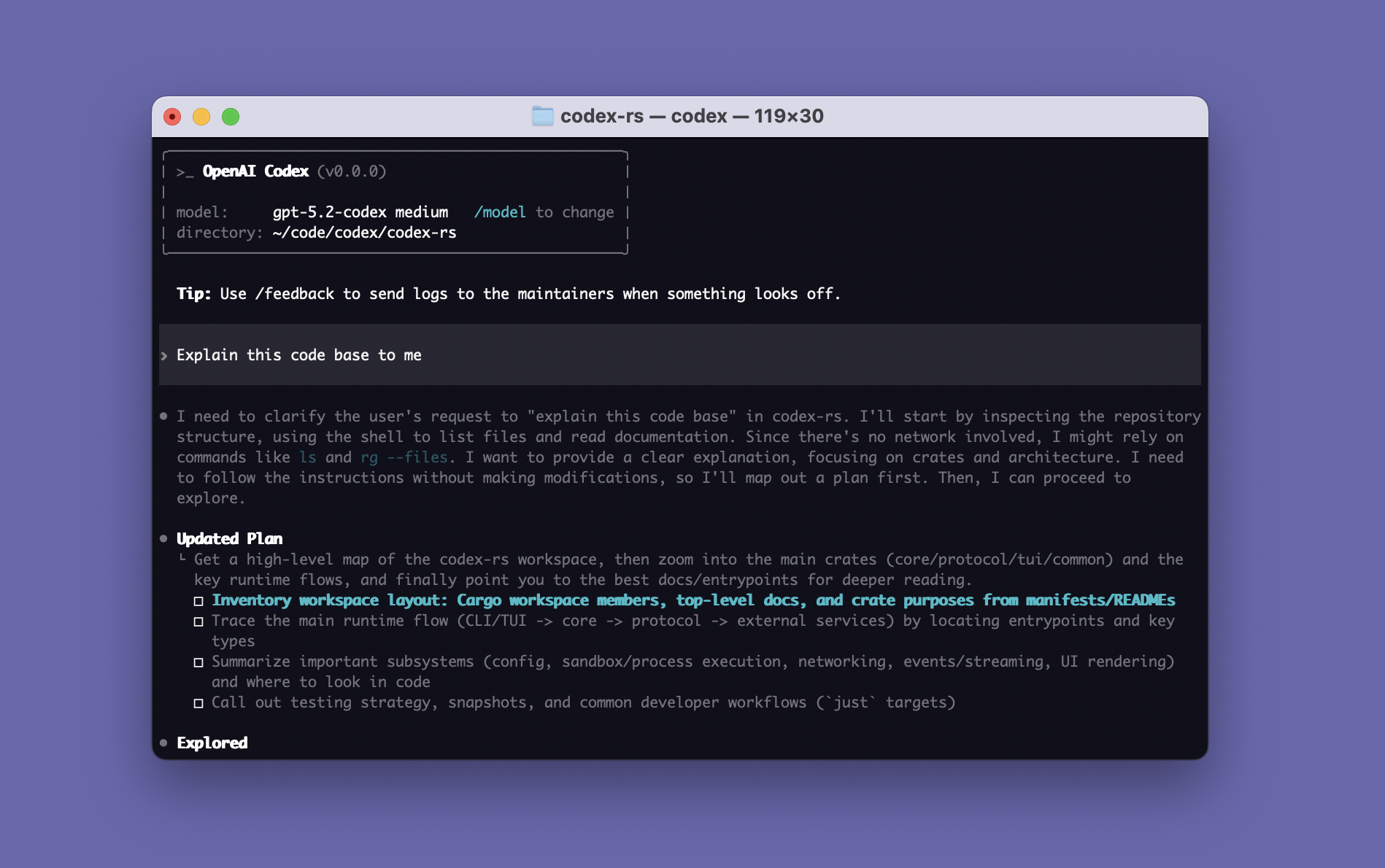

Codex CLI

active · Official · 87

#2 coding CLI. Rust rewrite eliminates the Node.js dependency, which is unique in the category. Terminal-Bench 77.3% (#2) and 3-4x more token-efficient. Cleanest security record among Tier 1 tools. GPT-5.4 shipped March 2026. Trails Claude Code by ~5pp on SWE-bench Pro standardized (41.04% vs 45.89%) and on first-pass quality (67% vs 95%).

Gemini CLI

active · Official · 88

#3 coding CLI: best free entry point and Terminal-Bench leader (78.4%, #1). 1K req/day free tier is unmatched, 1M context is the largest. SWE-bench Pro standardized 43.30% is competitive. Plan Mode (March 2026) closes the last major feature gap. File deletion incident (AI Incident Database #1178) and 50-60% first-pass correctness (roughly half of Claude Code) are real concerns.

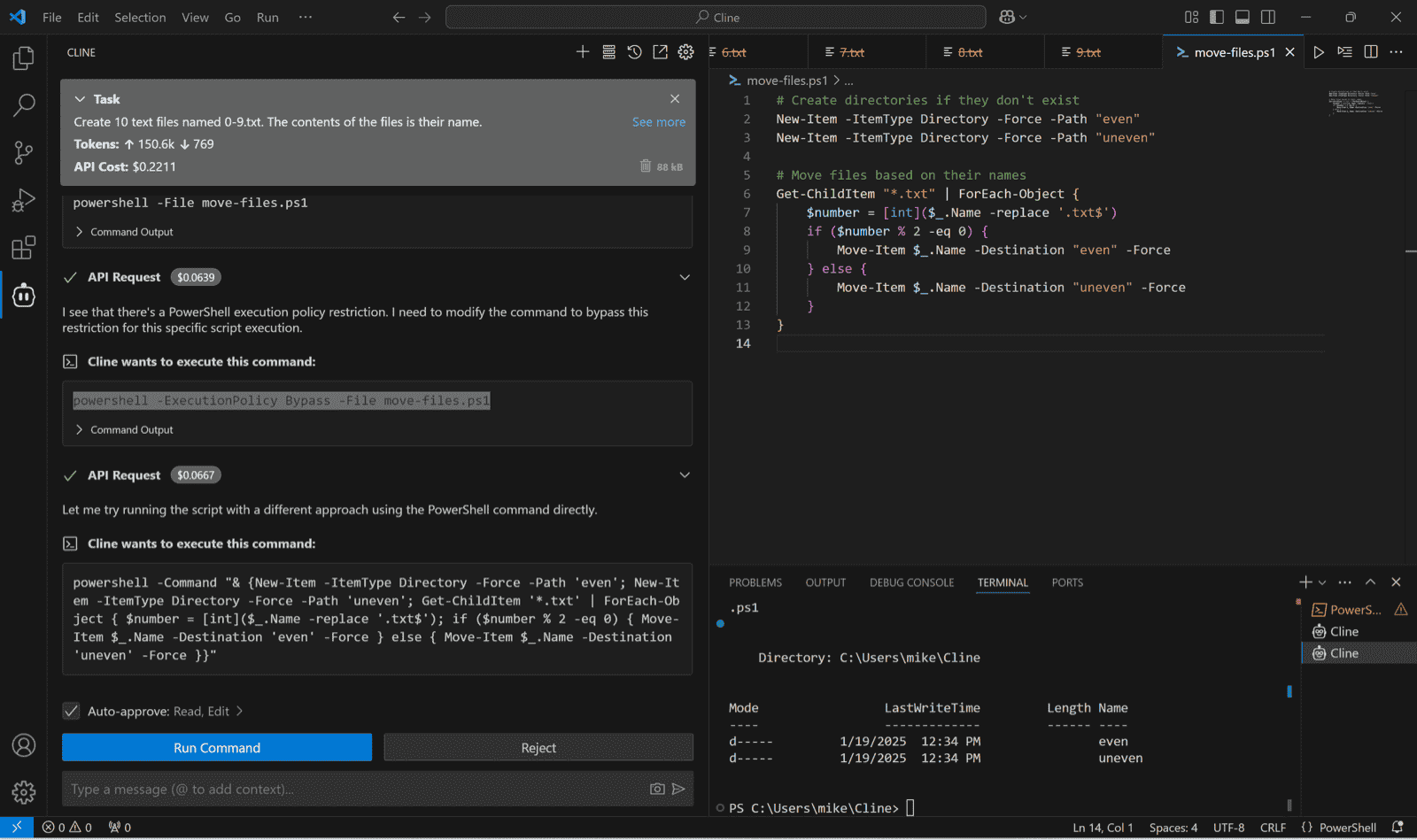

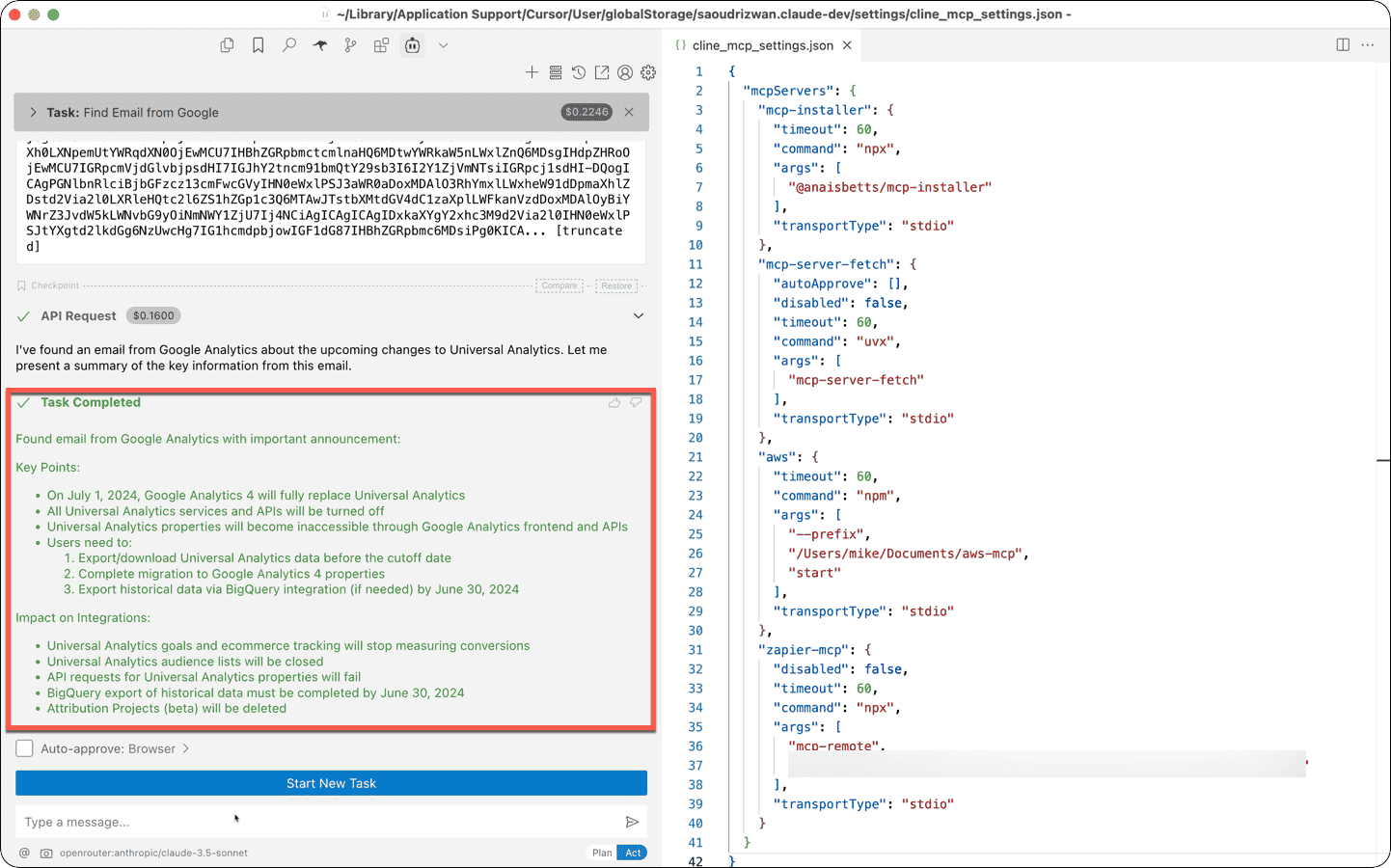

Cline (cline.bot)

watch · 73

#4 coding CLI: strongest in the IDE-embedded-agent segment with 3.35M VS Code installs, 5M total across editors, $32M funding (Emergence Capital), and named enterprise customers (Salesforce, Samsung, SAP). The supply chain incident (v2.3.0 'OpenClaw') is a documented trust flag that still applies. A credible third-party security audit would remove the primary concern. Best for developers who live in VS Code and want agentic assistance without leaving the editor.

GitHub Copilot CLI

active · Official · 10

#3 coding CLI: distribution moat (15M Copilot subscribers) and the Enterprise Agent Control Plane are unique advantages, and the v1.0.11 release shows GitHub is iterating quickly. But PromptArmor’s remote-code advisory plus the lack of public benchmarks keep it below Claude/Gemini until security policies catch up.

Amp (Amp Inc.)

active · 52

Watch: corporate spin-out from Sourcegraph to independent Amp Inc. (March 2026) is a material change. The original sourcegraph/amp GitHub link returns 404. Tool still ships (36K npm downloads/week) under ampcode.com. Sub-agent architecture (Oracle, Librarian, Painter) remains the most sophisticated in the category. Update or verify all links before recommending.

Goose (Block)

active · 82

#7 coding CLI: strongest free, open-source terminal agent. MCP-native, Apache 2.0, Linux Foundation governance. ACP integration (March 19, 2026) lets developers use existing Copilot/Claude/Gemini subscriptions. 60% of Block's 12K employees use it weekly (self-reported). The lack of published benchmarks keeps it in Tier 2.

Crush (Charmbracelet)

active · 82

Best choice for developers who live in the terminal and want a polished, non-VS-Code-dependent experience. Charmbracelet's proven track record (Bubble Tea, 25K+ apps) is a strong prior. No published benchmark scores, so community quality signal is the main trust anchor.

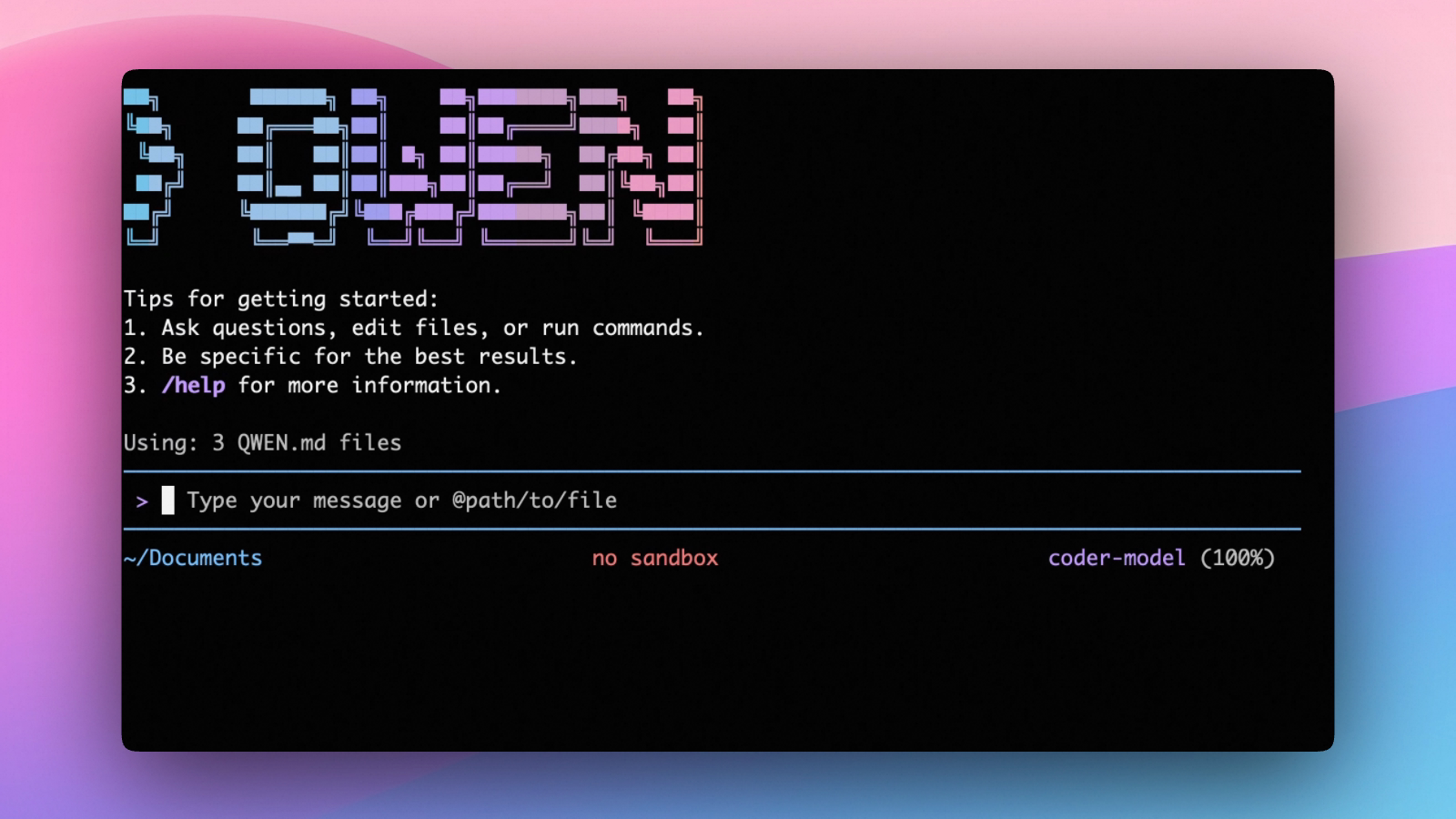

Qwen Code (Alibaba / QwenLM)

active · Official · 82

Best zero-cost option for developers who want a serious open-weight model without paying per token. The 70.6% SWE-bench Verified figure (Qwen3-Coder-Next) is the strongest open-weight score in the category and anchors the strongest local/on-prem story. Alibaba/Chinese cloud provenance is a consideration for enterprise and GovCloud use cases.

Junie CLI (JetBrains)

watch · Official · 35

Too early to rank: no public artifact (no repo, no benchmark, no independent review). JetBrains has 11M+ paid IDE seats; if the JetBrains installed base converts, this becomes a serious Tier 2 contender within 60 days. Check back after first independent comparisons.

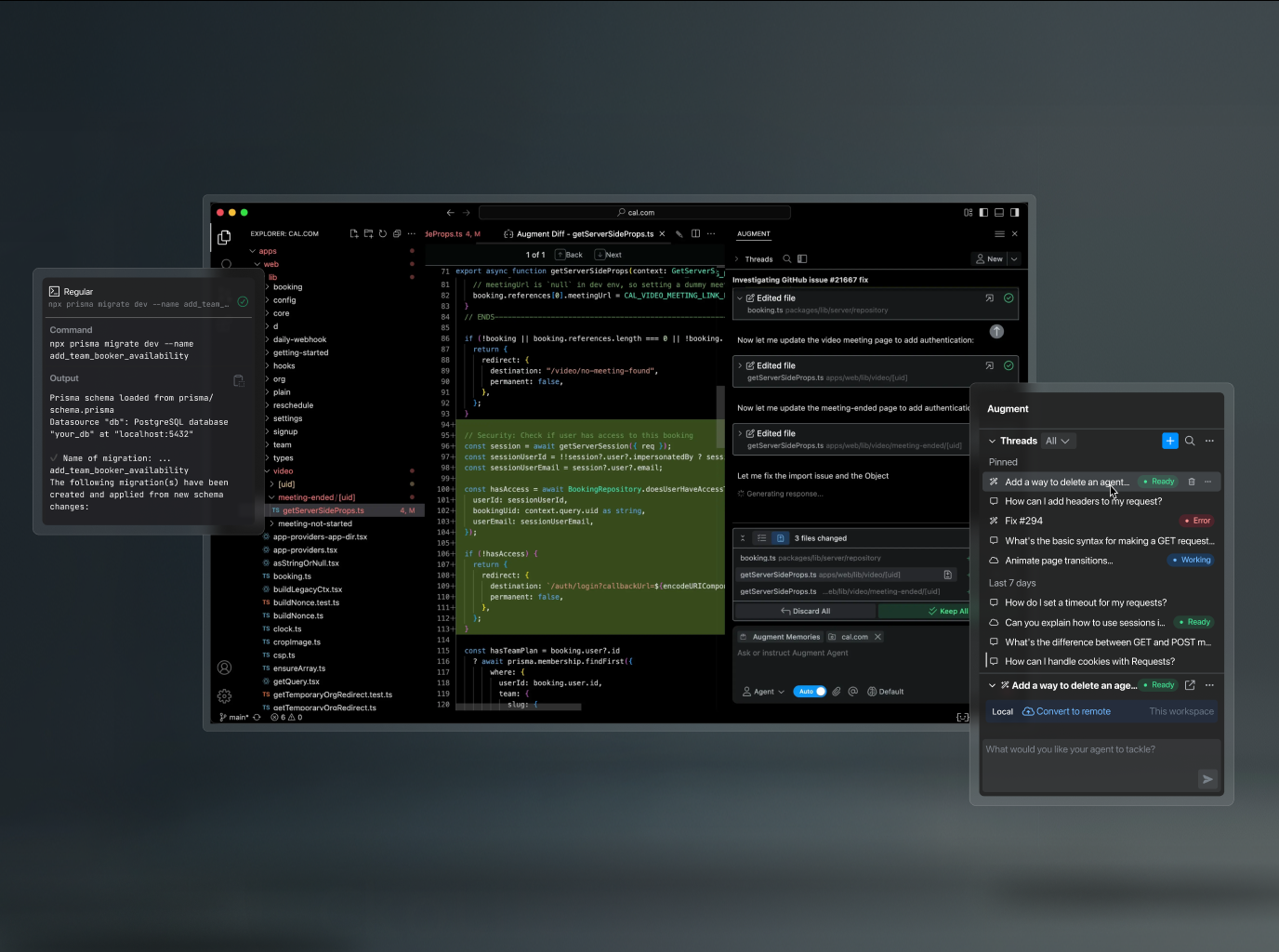

Auggie CLI (Augment Code)

watch · Official · 53

Highest SWE-bench Pro number in the category (51.80% on Augment scaffold), but the scaffold is not standardized: they used the same Opus 4.5 model that scores 45.89% on SEAL's standardized setup. The architecture/scaffolding advantage is credible and meaningful. Cannot rank above tools with millions of verified installs on a single blog-post benchmark. Watch for: public GA release and independent SWE-bench reproduction.

Browser Use

active · 76

Unchallenged category leader: 81K stars, 1M+ weekly PyPI downloads, 89.1% WebVoyager. The gap to #2 is enormous: 4x stars, 6x downloads vs the next autonomous agent.

Playwright MCP

active · Official · 93

Highest raw downloads in category (1.38M npm/wk). Cross-browser (Chromium + Firefox + WebKit). CLI mode is the token-efficient choice: 4x reduction vs MCP confirmed by 13+ independent sources. Microsoft officially recommends CLI for coding agents.

Stagehand

active · Official · 90

Best SDK for building browser automation into products. Three clean primitives (act, extract, observe), v3 dropped Playwright dependency, Cloudflare official integration. New browse-cli shows the team reads the CLI-over-MCP signal correctly.

Chrome DevTools MCP

active · Official · 92

Lane 2 leader for Chrome debugging workflows. Fastest star growth in category. 599 HN pts (Mar 15) is the highest single thread. Google Chrome team official. Deep debugging (heap snapshots, Lighthouse, performance profiling) that no competitor matches.

Vercel Agent Browser

active · 88

Token-efficiency leader but immature. Anomalous star-to-HN ratio (23.6K stars, ~6 HN pts) warrants caution: the community hasn't organically validated this tool yet. 303 open issues in 10 weeks. Best only when token budget is the binding constraint.

Skyvern

active · 90

Best pick for enterprise workflow automation on websites without APIs: form filling, data entry, procurement. Overkill for developer/coding agent browser tasks.

Lightpanda

active · 84

Best infrastructure pick for teams running browser automation at scale who need to reduce costs. Drop-in CDP replacement for Chrome headless in pipelines. Still beta, and not a user-facing agent tool.

Vibium

watch · 74

Below cut line: only 2.7K stars, not production-ready by creator's own characterization. But founder pedigree (Selenium, Appium creator), 443-point HN thread, near-daily releases, and W3C standards-first architecture demand close tracking. The only Lane 2 tool with a credible cross-browser (Firefox + Safari) story.

BrowserOS / Nxtscape

active · 82

Lane 4 leader. Just crossed 10K stars. 314 HN pts with 206 comments is strong organic engagement. YC S24 backing. Privacy-first Chromium fork with built-in AI. Different market than Lanes 1-3 but clear leader in consumer agentic browsers.

Salesforce MCP

active · Official · 80

#2 CRM lane, behind HubSpot. 26.6K npm/week is primarily Salesforce DX developer tooling (SFDX developers), not CRM product teams. Agentforce-gated open CRM access keeps PulseMCP low (~2K/wk). Right choice only for existing Salesforce Enterprise customers already committed to Agentforce.

Atlassian Rovo MCP

active · Official · 56

#11 in product-business-development. Official backing, 459 stars, GA since Feb 2026. 12+ AI client partnerships. Cloud-native with OAuth 2.1 and Cloudflare Agents SDK. Cloud-only limitation and 200:1 developer preference gap vs sooperset keep it behind. ~2K PulseMCP/wk.

Airtable MCP Server

active · 75

#15 in product-business-development. Niche but real: 2.5K npm/week shows genuine adoption. Fills the gap between spreadsheets and databases for non-dev business teams using Airtable as their project database.

Linear MCP Server

active · 57

#7 overall, #1 in project/PM lane. Feb 2026 PM upgrade (triage, backlog prioritization, initiative creation, milestone management) elevated it from dev-only to a full PM surface. Developer-first API returns clean typed objects, with less context token waste than Jira. ~2,743 combined npm/wk, 12.9K PulseMCP/wk.

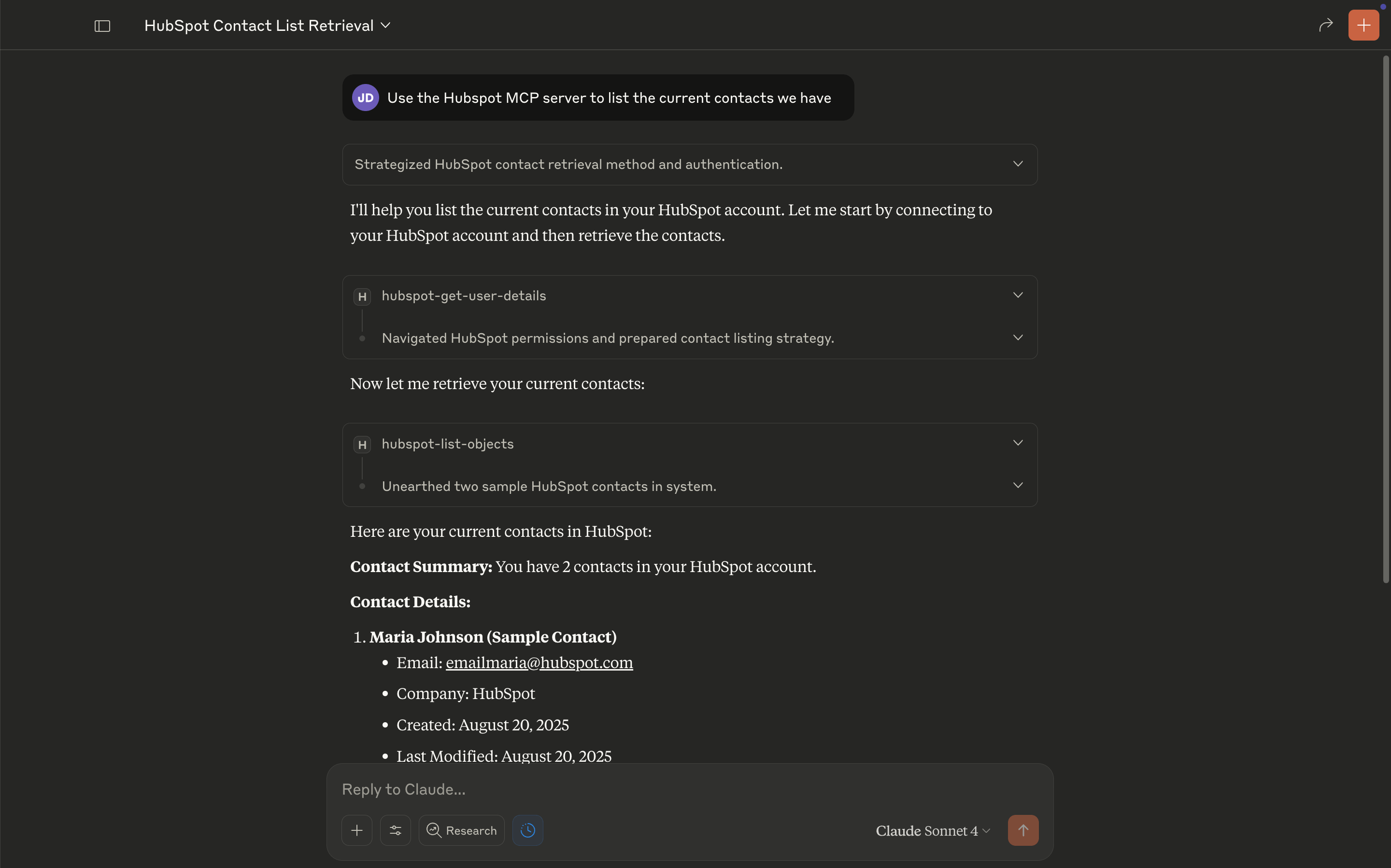

HubSpot MCP

active · Official · 47

#1 CRM lane for startup/SMB teams. 10.7K npm/wk (ahead of Exa), 12K PulseMCP/wk (6x Salesforce), 335K all-time (#93 global). Open ecosystem approach is closing the CRM gap vs Salesforce's Agentforce gate. Key caveat: currently read-only.

Zapier MCP

active · Official · 44

#1 business automation/connector lane. 35.7K PulseMCP/wk (#46 global), 966K all-time. Unique breadth: 7,000+ apps in a single hosted MCP. No-install surface makes it the only MCP accessible to non-technical business users.

PostHog MCP

active · Official · 84

#4 overall, #1 product analytics lane. 5.7M all-time PulseMCP visits (#5 globally), 20.6K/wk. 27 tools across 7 categories. Unique: LLM analytics tracking and error tracking for AI pipelines. Open-source, self-hostable, MIT. Amplitude (#2) and Mixpanel (watch) now catalogued but gap is 100x+ on PulseMCP.

Dynamics 365 MCP

active · Official · 40

#12 in product-business-development. The most fully documented enterprise MCP server found: GA on production infrastructure with clear licensing, Entra ID auth, and role-based access. Enterprise-only audience (requires D365 license + Tier 2+ environment). Community traction is thin (25-27 stars). No PulseMCP or npm presence.

Amplitude MCP

active · Official · 35

#13 in product-business-development, #2 in product analytics lane. Enterprise positioning with OAuth 2.0 and warehouse-native analytics is a genuine differentiator vs PostHog's API key model. Adoption signals are thin: 34 PyPI downloads/month, fragmented repos (16 + 44 stars), 6.2K PulseMCP/wk.

Monday.com MCP

watch · Official · 35

Watch: official MCP, free on all plans, 380 stars, but no independent adoption evidence. No PulseMCP listing, no npm package, no independent usage reports for the MCP server specifically. Monday.com has larger market share than Linear for non-dev teams, but the MCP itself is too new to rank confidently.

Asana MCP

watch · Official · 35

Watch: official with 42 tools and V2 Streamable HTTP migration showing serious investment. Read + write access. However, no public GitHub repo (remote-hosted only), zero independent adoption metrics. V1 deprecation deadline (May 2026) is forcing migration.

Gong MCP

watch · 42

Watch: not catalog-ready. Official MCP announced but Gong Collective page says 'Coming Soon.' Community repos ≤28 stars, stale (last push Dec 2025). No independent users found. Third-party platforms (Zapier, n8n) list Gong via generic API bridges, not native MCP. Revisit Q2 2026 when GA ships.

Google Workspace CLI

active · Official · 45

Below cut line. Proves Google is shipping its own surface instead of blessing MCP. CLI approach avoids the tool-count flooding problem. Intentionally non-MCP and narrower scope.

Mixpanel MCP

watch · Official · 35

Watch: weakest signals in the product analytics lane. Hosted-only model limits community engagement. SSE-only transport is deprecated. US-only, though expanding. Broadest AI client support (ChatGPT, Gemini, Cursor) is the unique differentiator for non-developer PMs. Not catalog-ready until stronger adoption signals emerge.

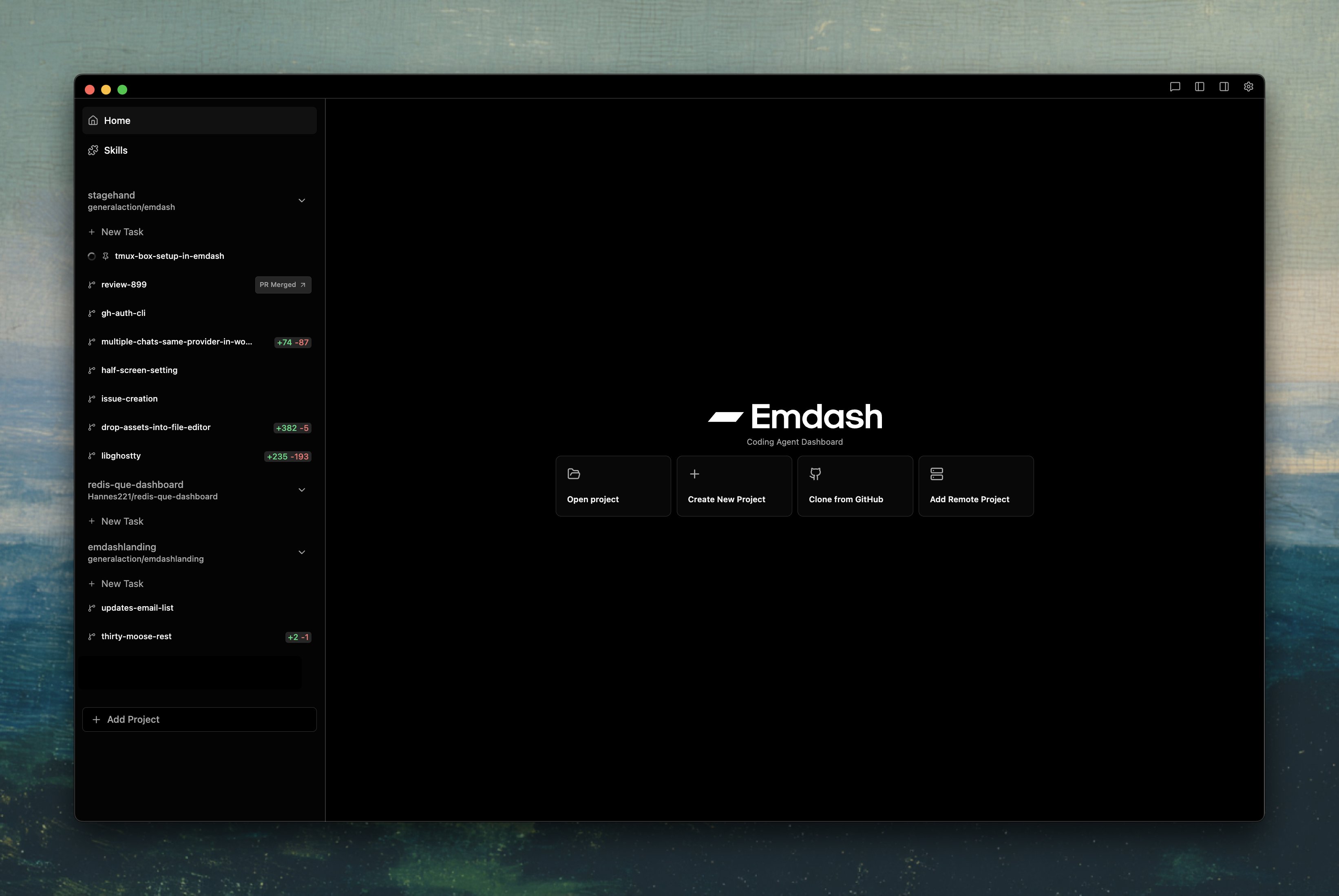

Emdash

active · 66

Best orchestration layer for teams juggling multiple top CLIs. Show HN hit 206 points, YC backing is public, and Ry Walker’s independent comparison put it in Tier 1. Still smaller than Superset by stars, but the feature depth (parallel repos, remote servers, notifications) is unmatched.

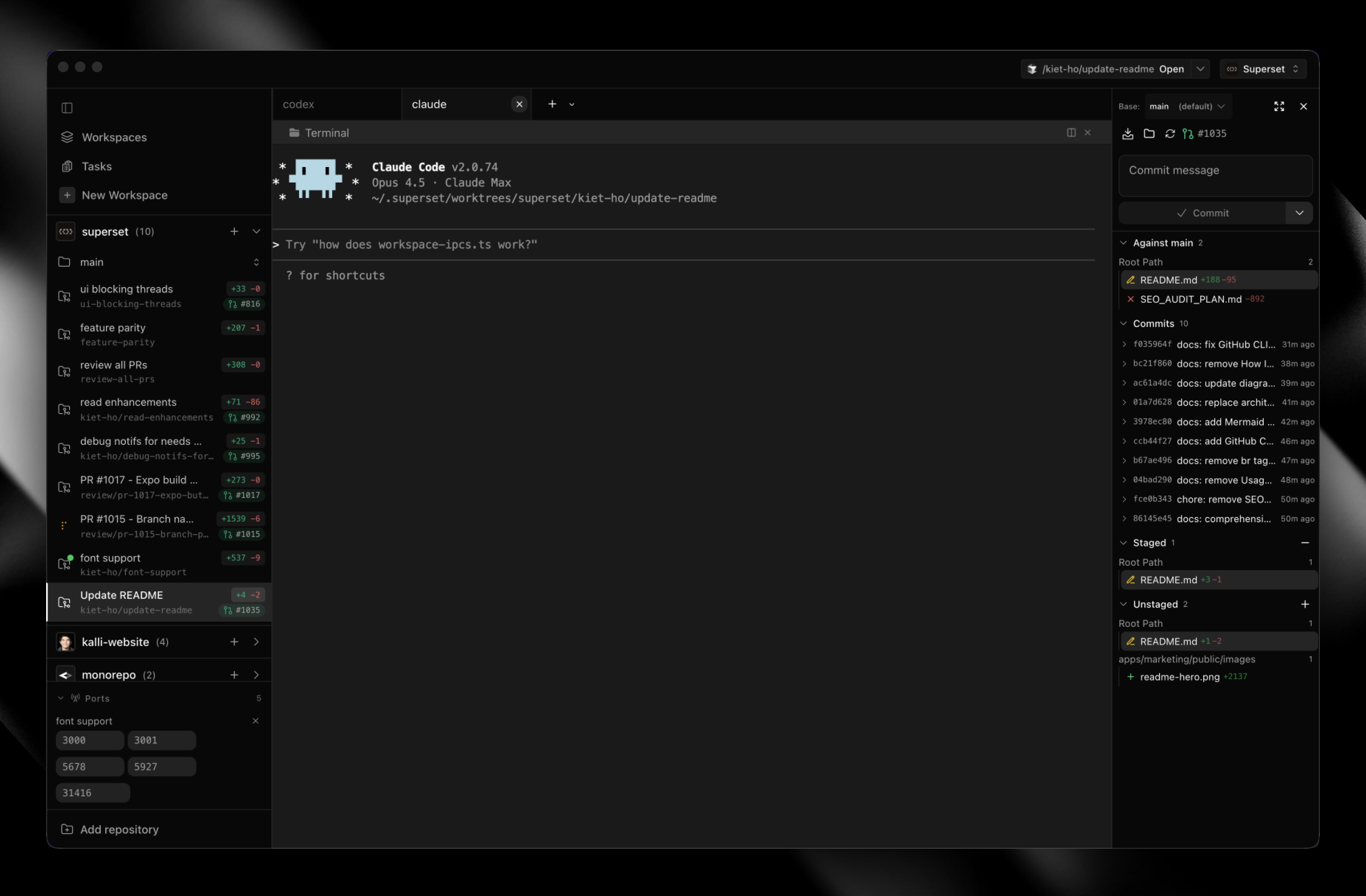

Superset

active · 80

Best pure multiplexer. Highest raw community traction among orchestrators (7.2K stars, 512 PH). Privacy-first (Apache 2.0, zero telemetry, BYOK). Simple and focused.

Grov

active · 55

Best option today for teams who love CLI agents but hate starting every session from a blank slate. Grov keeps a persistent memory DB, injects the right snippets when a new task starts, and intervenes when Claude/Codex/Gemini wander off-plan. Still early (hundreds of stars, limited enterprise proof), but the architecture fills the 'agent memory layer' gap that keeps popping up in rank packets.

Factory AI (Droids)

active · 62

Enterprise-only with legitimate backing, but zero grassroots signal and an unverified benchmark claim. Large enterprises with white-glove support needs may benefit; not for individual developers or small teams.

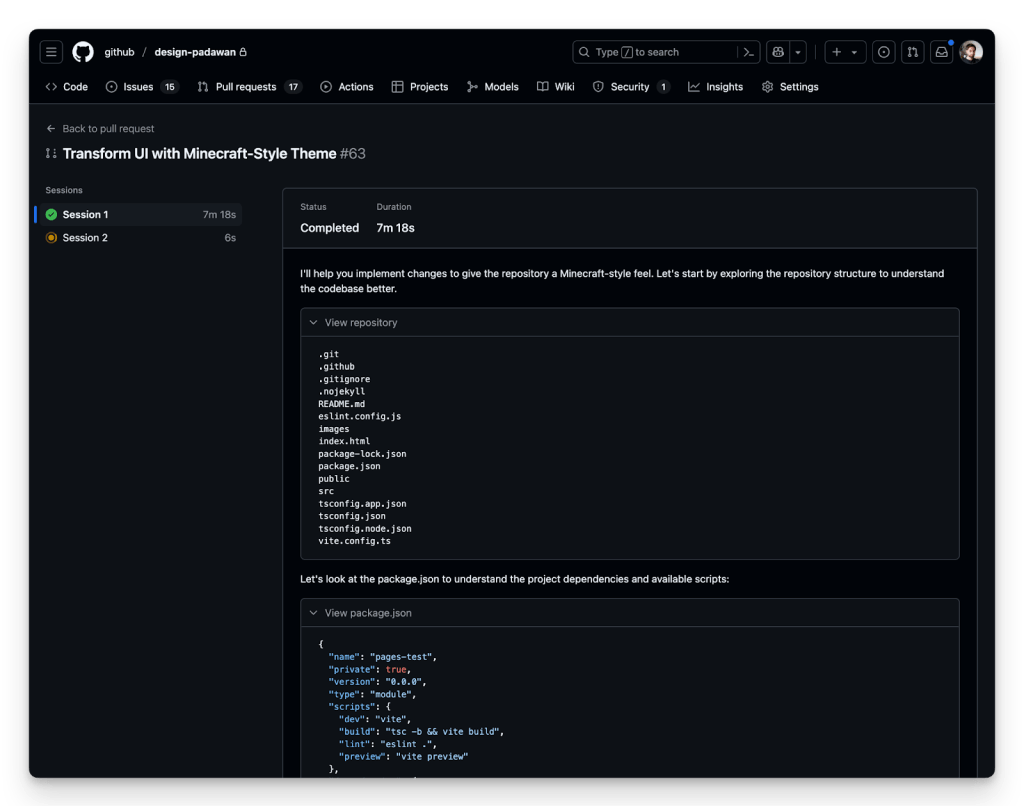

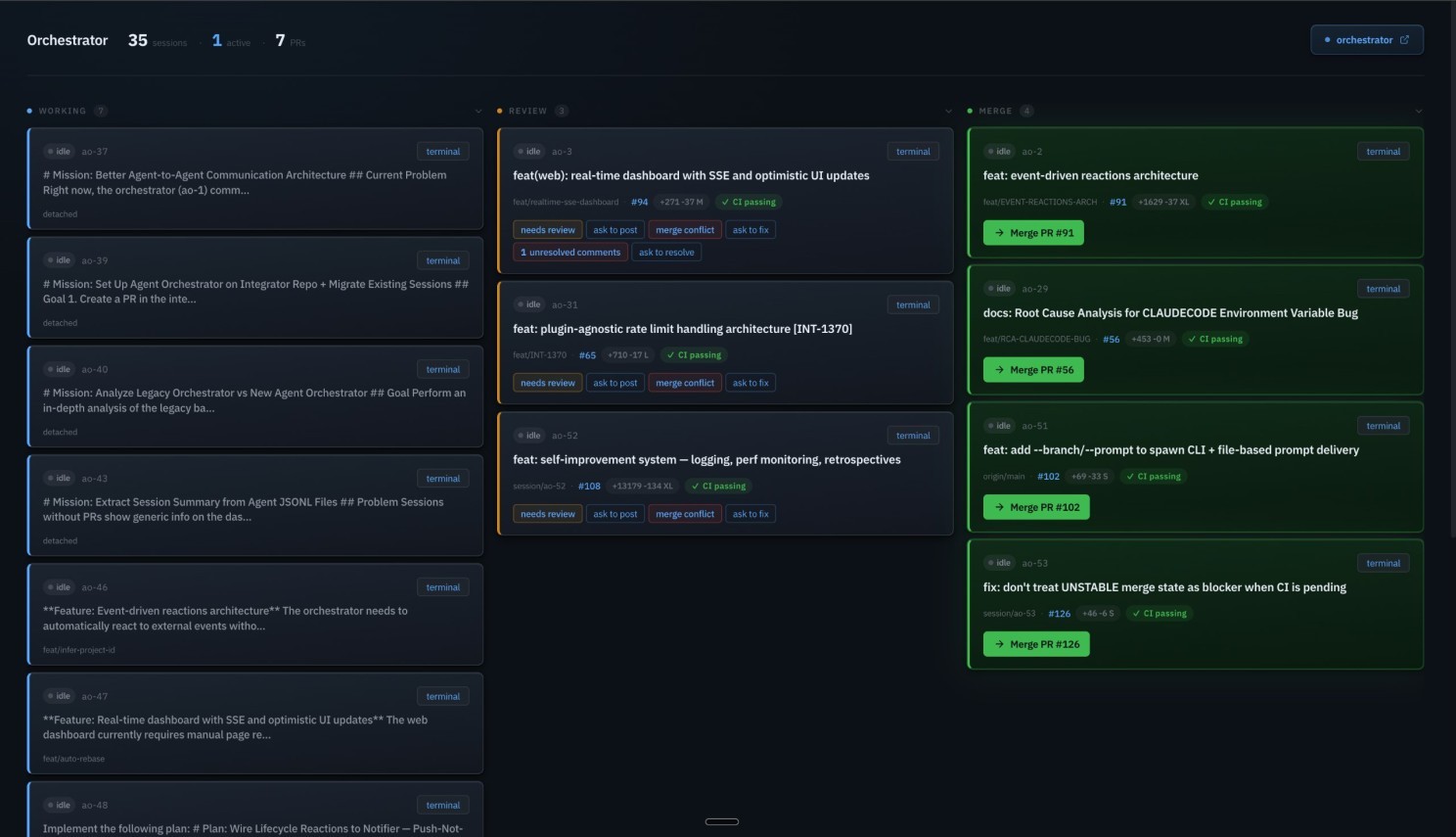

GitHub Copilot Coding Agent

active · Official · 42

The enterprise default for async autonomous coding. Wins on distribution and integration depth (lives inside GitHub where most code already is), not raw capability. SWE-bench 56.0% trails Claude Code (80.8%) by about 25 points: a pragmatic default, not the best tool.

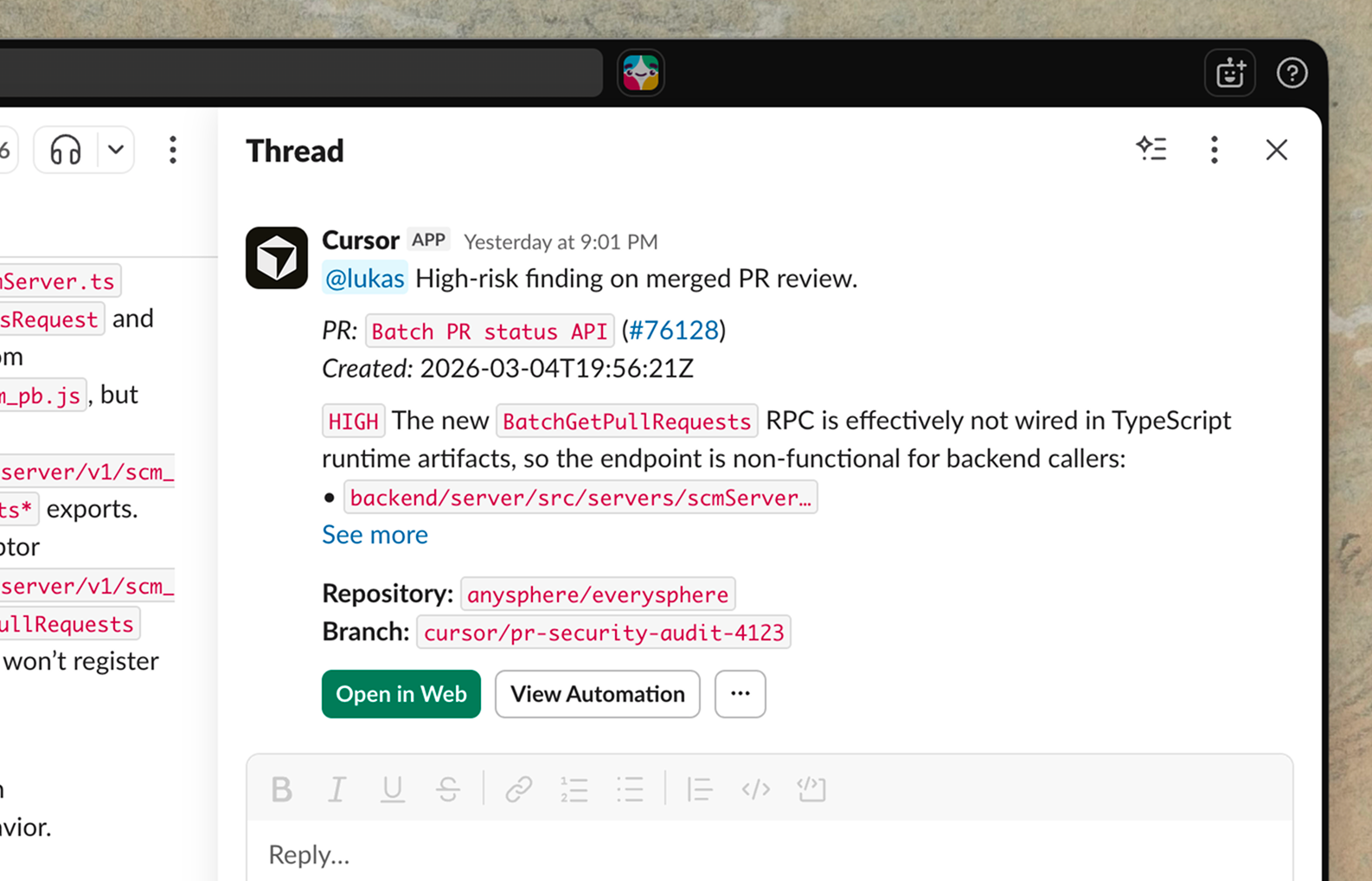

Cursor Automations

active · 39

Most innovative architecture in the category: event-driven triggers are genuinely new. But 12 days old with zero independent validation. Needs 30+ days of production evidence before confidence can increase.

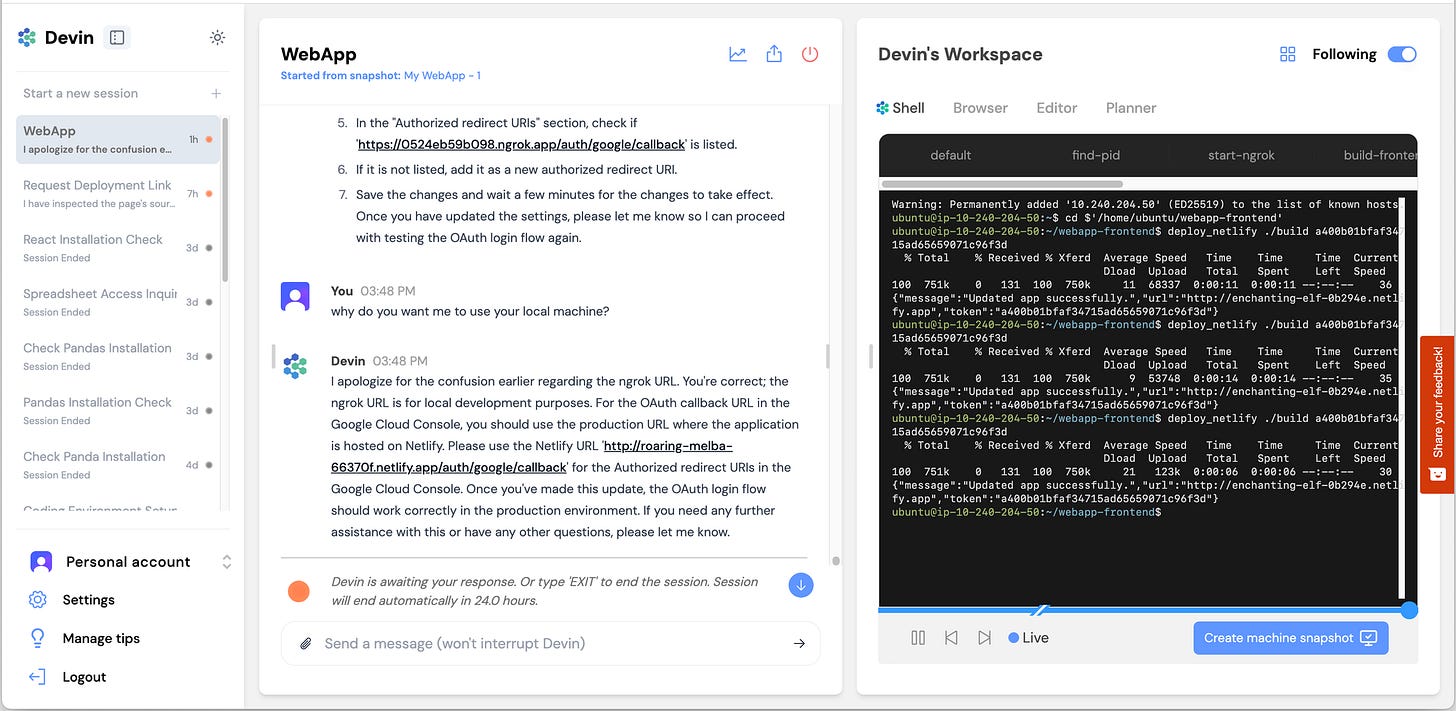

Devin (Cognition)

active · 41

Highest-funded pure-play, but the gap between self-reported (67% merge rate) and independent results (15% success) is the defining data point. Business metrics are strong; product evidence on complex tasks is weak.

Jules (Google)

active · 38

Google-backed with a unique proactive scanning feature, but every independent review says 'not yet a daily driver.' Notable absence of SWE-bench scores. Best for free experimentation.

Augment Code / Intent Agent

active · 40

Strongest enterprise contender outside the top 3 by funding and claimed benchmark. The 70.6% SWE-bench score (if verified) would rank it #2. But public trust signals are weak relative to claims: no open-source repo, no public GitHub activity, near-zero community signal. Would move to #2 with a verified, auditable benchmark submission.

Replit Agent 4

active · 45

Architecturally impressive and the ChatGPT integration could make it the dominant tool for non-developers building apps. For professional software factories (issue → PR, CI integration, repository-scale refactors), it falls short of the top tier. Production deletion incident (CEO apologized) is a trust signal issue.

oh-my-claudecode

watch · 77

Watch list. Extraordinary star growth (10K in 10 weeks) but zero independent validation: no HN posts, no Reddit discussion, no reviews. Cannot rank until independent evidence appears. Potential star inflation flag.

Composio Agent Orchestrator

watch · 80

Provisional #2 in automation: architecturally differentiated as the auth/integration layer FOR AI agents. 27K stars but zero HN traction is an anomalous trust signal. Drops to #4-5 if independent usage evidence doesn't emerge. Use Composio inside n8n or alongside other workflow tools, not as a replacement.

Spine Swarm

watch · 40

Watch list. Benchmark leader in multi-agent research (GAIA, DeepSearchQA) but not a coding tool. Does not belong in coding-specific rankings unless it ships coding features.

LangGraph

active · 95

The production default for Python multi-agent teams. Highest download volume in category (40.2M/month, 7× #2 Python competitor), most independently-verified Fortune 500 deployments, and best-in-class observability via LangSmith. Steeper learning curve than CrewAI; accept the tradeoff consciously.

CrewAI

active · 93

Second-largest Python deployment footprint with real Fortune 500 adoption. Consistently rated 'fastest to prototype', a claim backed by independent consensus rather than marketing copy. The right default for role-based business workflow automation teams prioritizing speed. Trade-off: observability is less mature than LangGraph without AMP Suite.

OpenAI Agents SDK

active · Official · 92

Best for teams that want fast iteration with minimal boilerplate. Minimalist 4-primitive API learnable in an afternoon. Now supports 100+ LLMs, so it is no longer OpenAI-locked. Pre-1.0 API, no state persistence. If your team needs to ship an agent system this week and has never used a framework, start here.

Mastra

active · 85

For JS/TS teams, this is not a comparison decision; it is the default. No other serious TypeScript-native multi-agent framework at scale. 442-pt HN thread is the strongest community validation signal in the entire frameworks category. Custom license (not MIT/Apache): review before production use.

Google Agent Development Kit (ADK)

active · Official · 92

Strong download velocity for its age. GCP-native deployment and multi-language commitment give it a longer runway than single-language frameworks. Best for teams already on GCP/Vertex AI. No independently-verified production case studies outside Google-controlled publications.

AWS Strands Agents SDK

watch · Official · 87

AWS Bedrock teams only. High claimed downloads, but an anomalous download/star ratio (1,038 vs CrewAI 122) and zero organic HN discussion despite 14M cumulative downloads raise CI/CD pipeline inflation concerns. Official AWS tooling is a genuine advantage for Bedrock teams; the lock-in penalty is high for everyone else.

smolagents (HuggingFace)

watch · 91

Research and experimentation only. LocalPythonExecutor must NOT be used in production under any circumstances. Two independent security firms (JFrog + NCC Group) confirmed this. Docker or E2B sandboxing is an architectural requirement. Best for: evaluating CodeAgent paradigm, HuggingFace model experimentation, academic research.

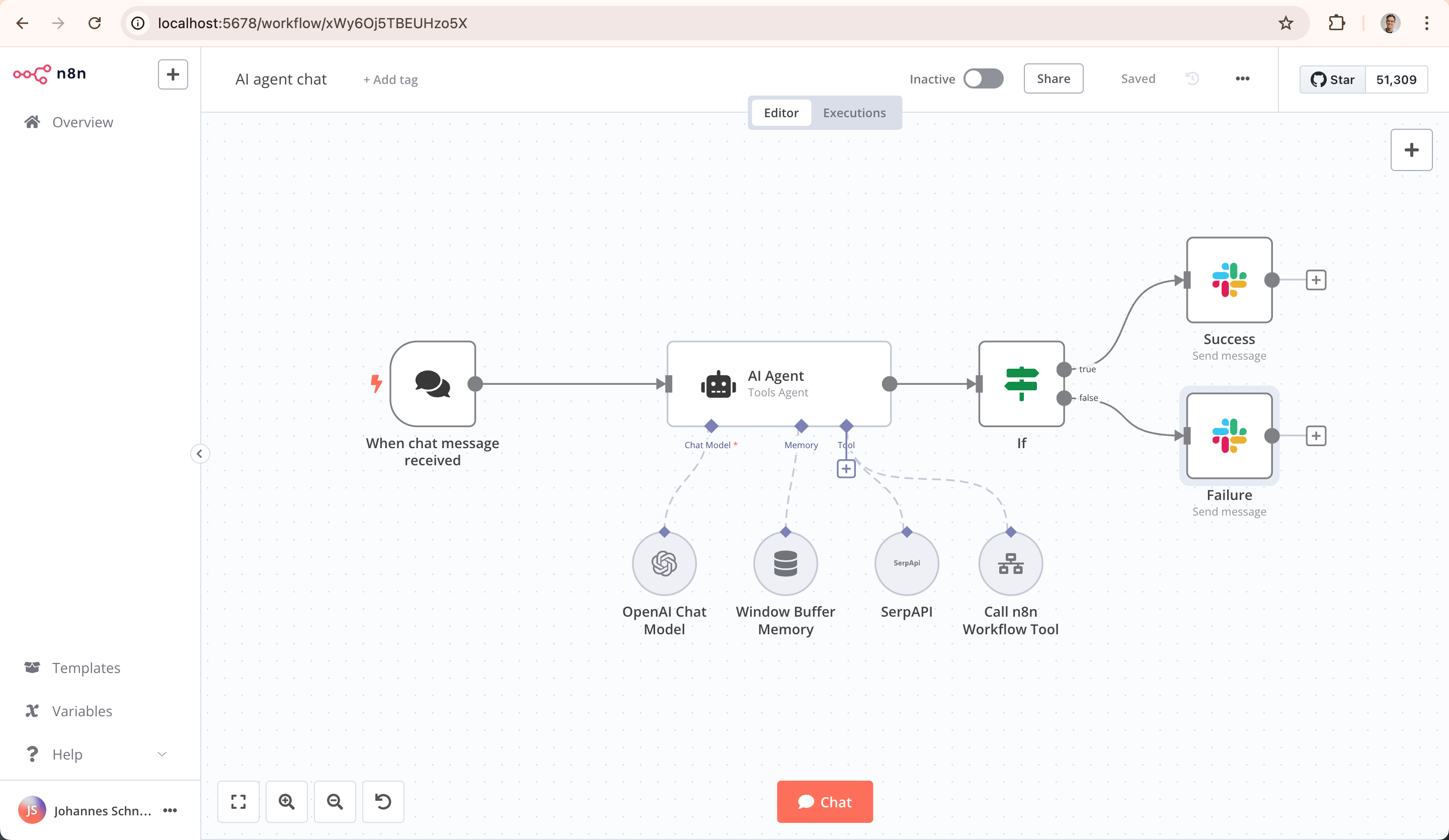

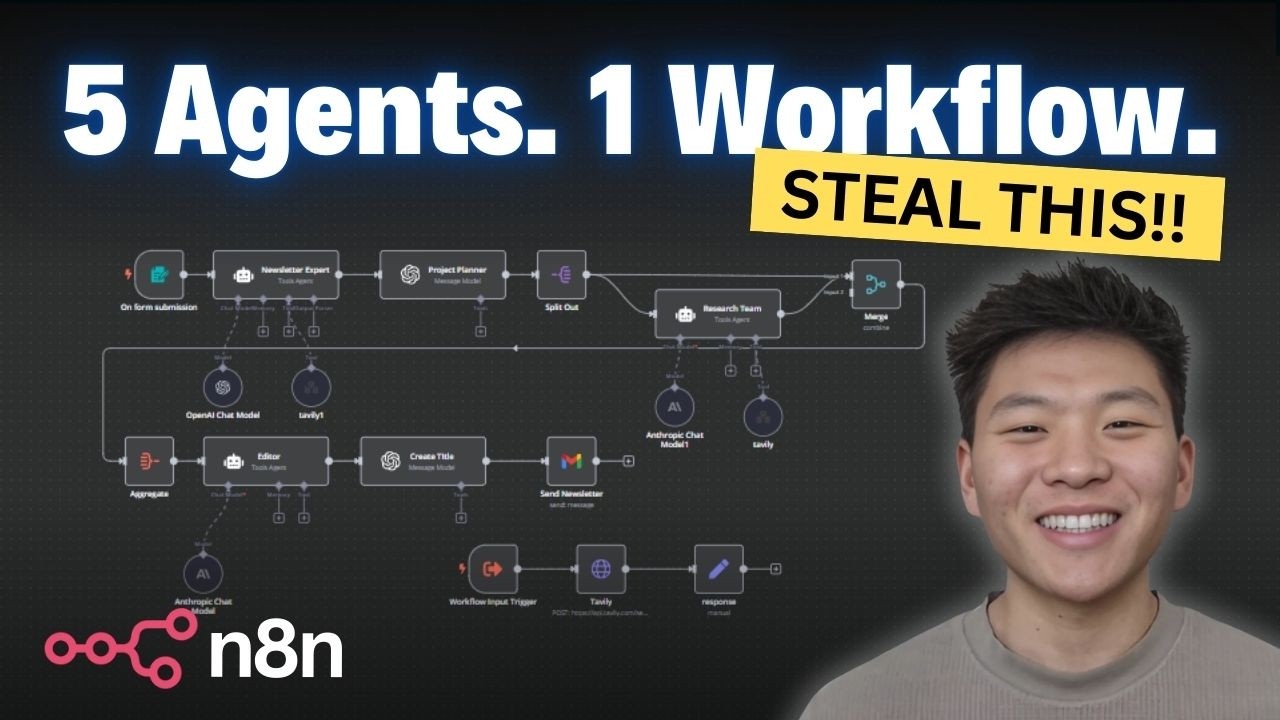

n8n

active · 88

Clear #1 for workflow automation with AI nodes. If your goal is to wire together SaaS tools with AI orchestration, n8n is the answer. If you're building an agent system from code, use LangGraph or CrewAI instead. Do not mix these use cases.

Pydantic AI

active · 95

The type-safe agent logic layer for Python teams. 15.6M downloads/month makes it #3 by volume. Not a competitor to LangGraph but a complement. The Pydantic team's reputation is an unmatched trust signal in the Python ecosystem. Best paired with LangGraph for orchestration.

Semantic Kernel (Microsoft)

active · Official · 92

The enterprise choice for .NET/C# + Azure shops. Strongest named customer list in the entire category (KPMG, BMW, Fujitsu, all independently corroborated). Multi-language (Python, C#, Java) is unique. Python teams should prefer LangGraph or Pydantic AI; Semantic Kernel's Python SDK is secondary.

Agno (formerly Phidata)

watch · 90

Include with caveats. High star count but inflated DL/star ratio and self-promotional HN pattern. No independently confirmed enterprise customers. Full-stack offering is ambitious but evidence is thin. Re-evaluate upward if independent enterprise evidence surfaces.

AutoGen (Microsoft)

stale · Official · 95

Sunset in progress. Microsoft confirmed maintenance mode: no new features. Being replaced by Microsoft Agent Framework, which combines AutoGen + Semantic Kernel. Do not start new projects on AutoGen. Existing teams should plan migration to Microsoft Agent Framework (GA ~Q2 2026).

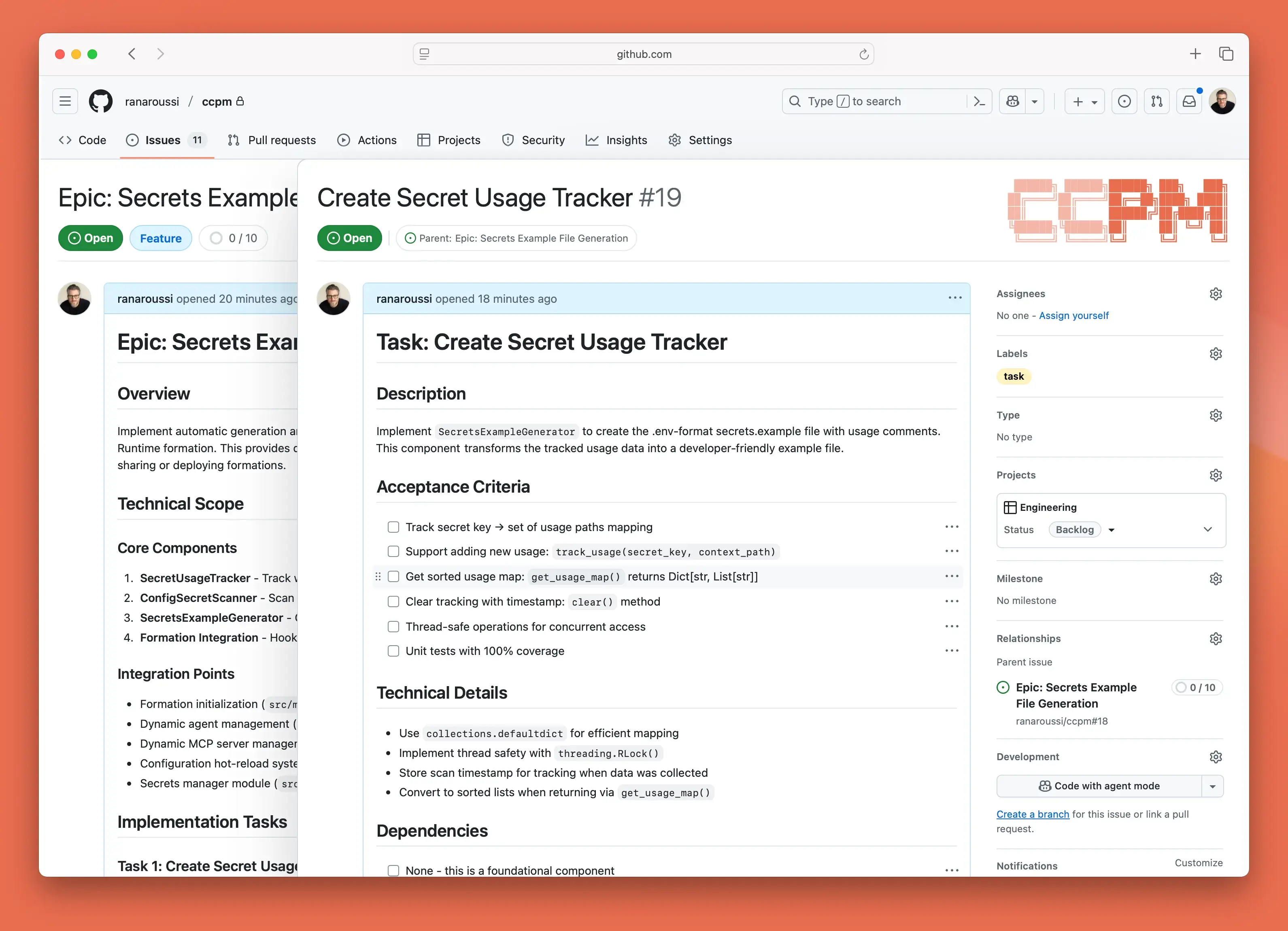

ccpm

active · 71

Pragmatic parallel agent IDE for developers who prefer shell-based workflows over GUI tools. 112 HN comments is the deepest community discussion in the segment. Uses GitHub Issues + git worktrees rather than inventing new orchestration, which is appealingly simple.

Sim Studio

active · 82

The only credible open-source alternative to n8n for workflow automation. Apache-2.0 license is a genuine differentiator for teams that need true open-source. Revenue and enterprise evidence is thin: too early to challenge n8n's 3,000+ enterprise customers, but the trajectory is promising.

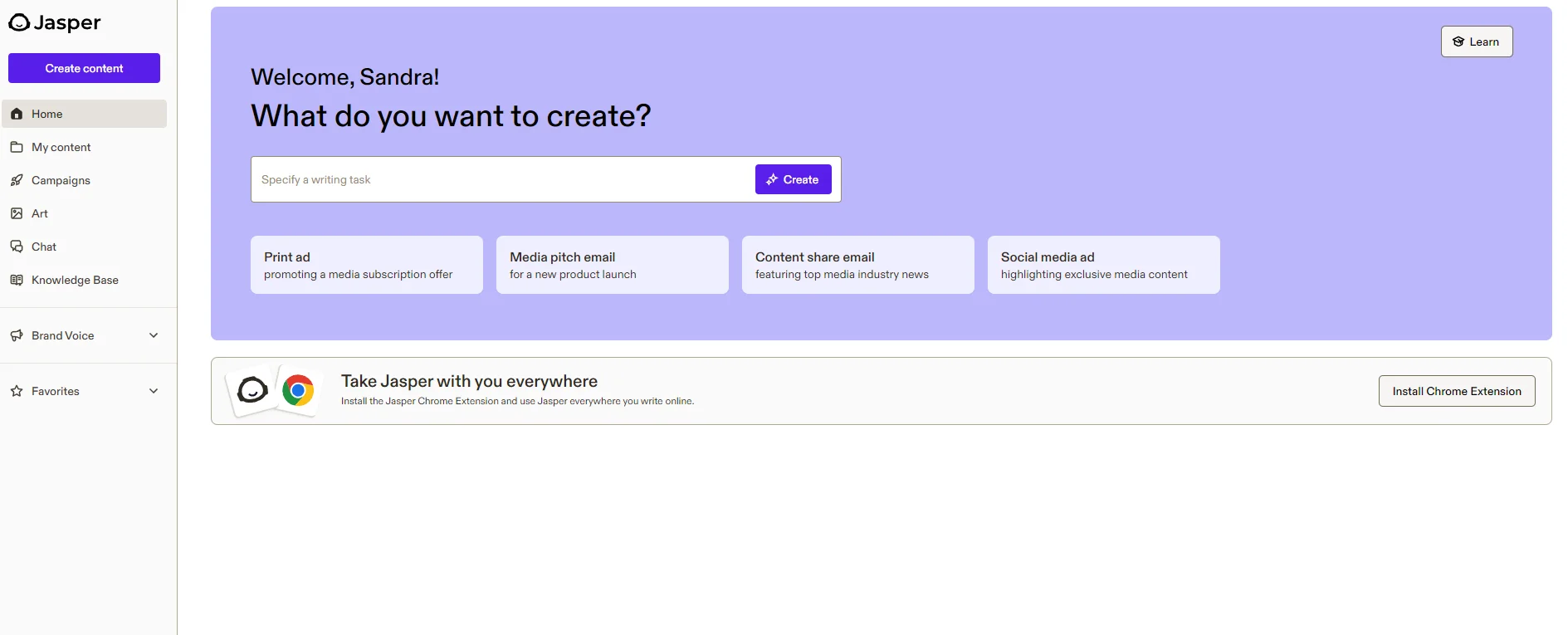

Jasper AI

active · 43

Best for brand-governed marketing teams of 3-10 people. Strongest independent review coverage (8/10 avg), verified enterprise adoption (~20% Fortune 500 claimed). Moat is orchestration + brand rails, not generation quality: ChatGPT/Claude match raw copy at lower cost. No open-source, no MCP, no CLI.

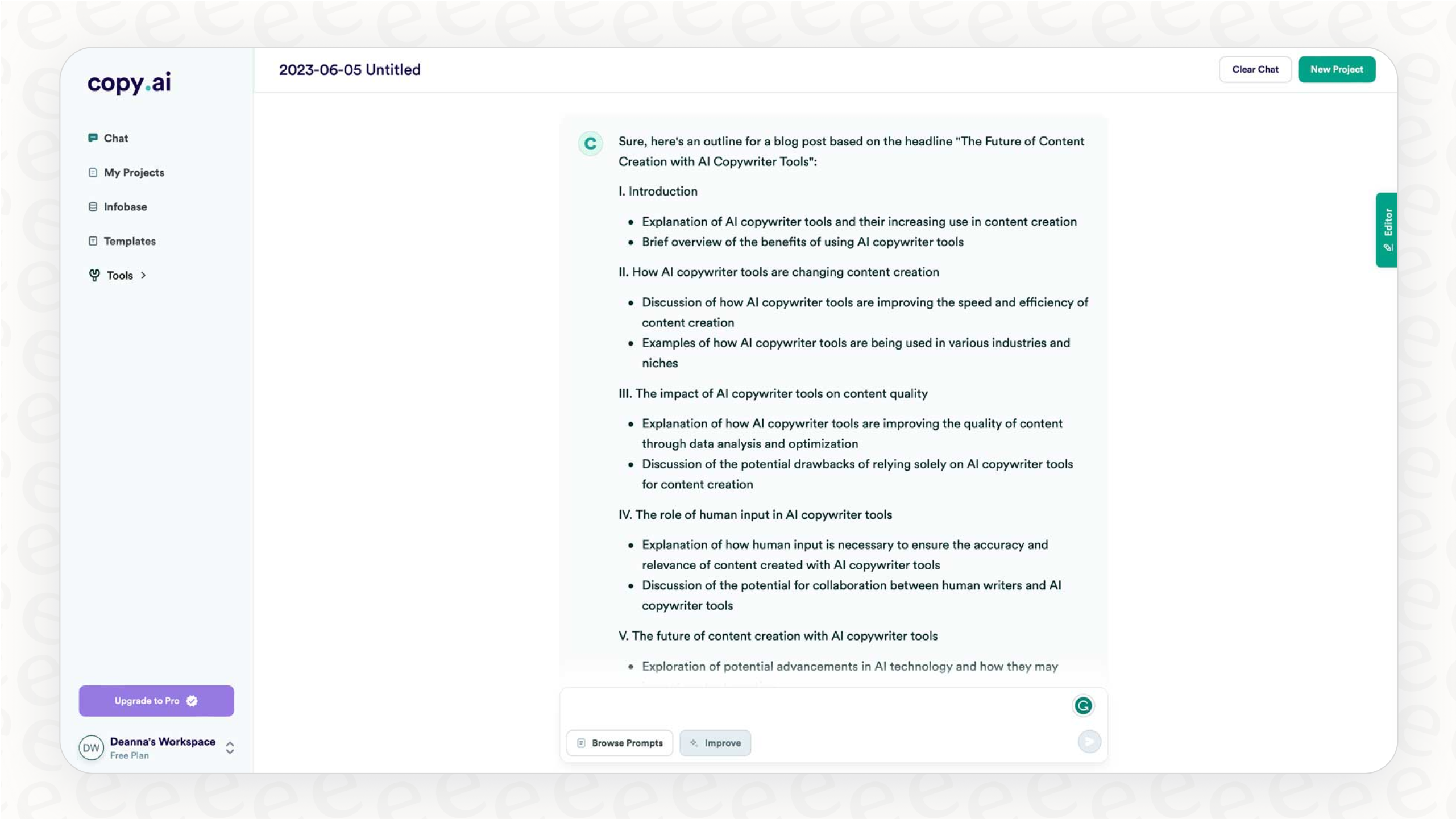

Copy.ai

active · 45

Best for marketing workflow automation. Strongest workflow builder in the category: chains research → draft → edit → publish. $29/mo entry is accessible. Named enterprise evidence (Banzai VP reduced campaign creation from 5-6 hours to under an hour). No open-source, no MCP.

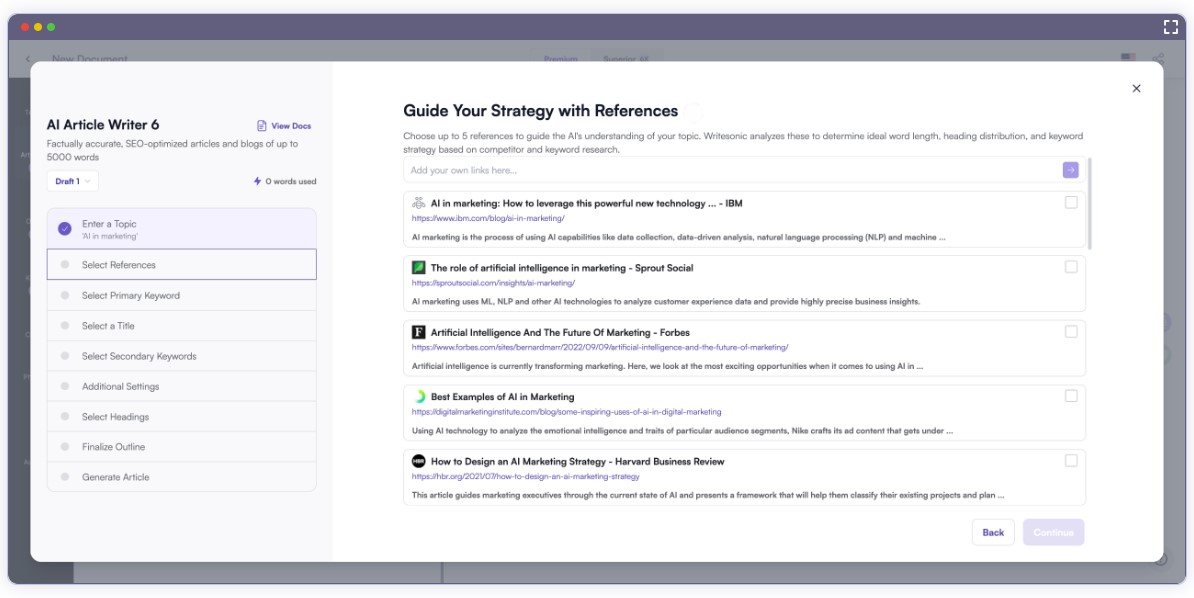

Writesonic / Chatsonic

active · 42

Best pure SEO play at budget pricing ($19/mo entry). 4.7/5 Trustpilot from 5K+ reviews is the highest verified user satisfaction in the category. But the GEO feature appears broken per an independent practitioner test, and content quality has been questioned. Mixed evidence overall.

Frase

active · 41

Best value SEO content tool with AI visibility tracking. GEO tracking is a genuine 2026 differentiator: it monitors how content appears in AI-generated answers. $38-45/mo is price leader vs Surfer ($89) and Clearscope ($189). Strong independent comparison coverage.

Surfer SEO

active · 40

The benchmark for data-driven on-page SEO. Consistently ranked as SERP analysis leader across independent sources. $89/mo is premium but justified by depth. No AI agent capabilities, no MCP. Consider Frase instead if GEO tracking or budget matters more.

AirOps

active · 42

Best for content operations at scale, but only if your content strategy is already solid. Enterprise client list provides credibility. Steep learning curve confirmed independently. TechCrunch's 'SEO slop' framing is a reputational risk. $99/mo Scale plan.

Postiz

active · 85

The SkillBench play for social media. Strongest open-source signal in marketing (27.3K stars), HN traction (42pts Show HN), viable business ($17K MRR, ~472 subscribers, 80% margins). Agent CLI makes it skills-ecosystem-native. Narrow scope (social scheduling only) but dominates that lane.

Klue

active · 42

Enterprise CI leader: different lane from other marketing tools but marketing-adjacent. 4.7/5 G2 (2,257 reviews) is the strongest social proof in the entire marketing category. Forrester Strong Performer. ~$16K/year pricing limits it to enterprise. Compete Agent is first-mover in autonomous CI.

SE Ranking MCP Server

active · Official · 53

Only MCP-native SEO tool with a working implementation. Compelling time savings (40+ min/project per independent test). But 8 GitHub stars is negligible; most users access via SE Ranking's paid API. The SkillBench play for SEO data if adoption grows.

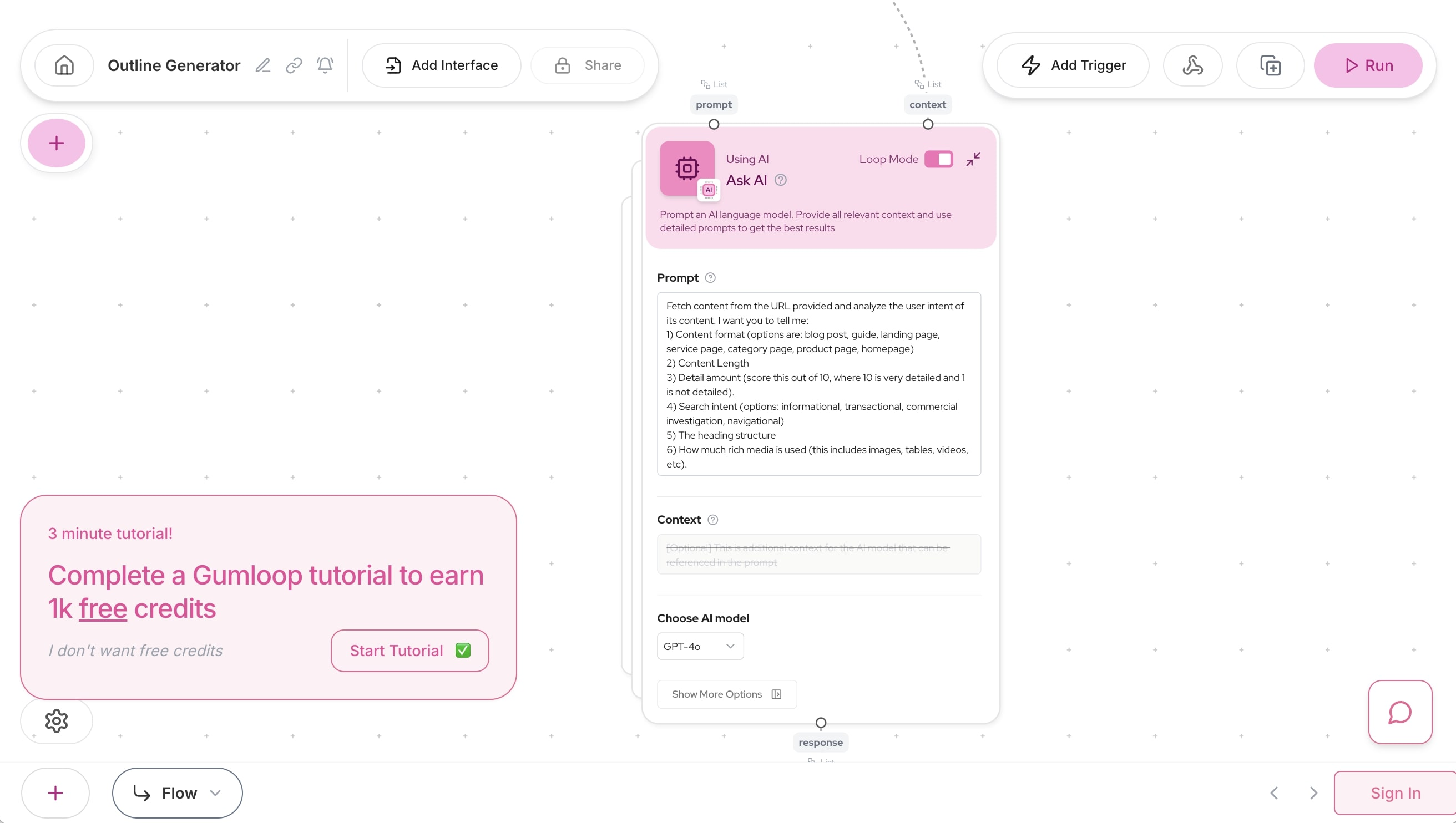

Gumloop

active · 40

Strongest funding signal in category ($50M Series B from Benchmark, $70M total). Visual pipeline builder appeals to non-technical marketers. But broader than marketing (general automation), credit-based pricing is opaque, and product maturity is unclear despite funding.

Competely

watch · 35

Lightweight competitor analysis for startups: the affordable alternative to Klue's $16K/year. Thin independent evidence. No HN signal, no major review coverage. Listed in roundups but not deeply reviewed. Too small for strong ranking but fills a gap.

Clearscope

active · 35

Premium content grading for teams writing 15+ articles/month. Declining relevance: Frase and Surfer offer comparable functionality at lower prices ($38-89 vs $189). No AI agent capabilities, no workflow automation. Coasting on 2023-era reputation.

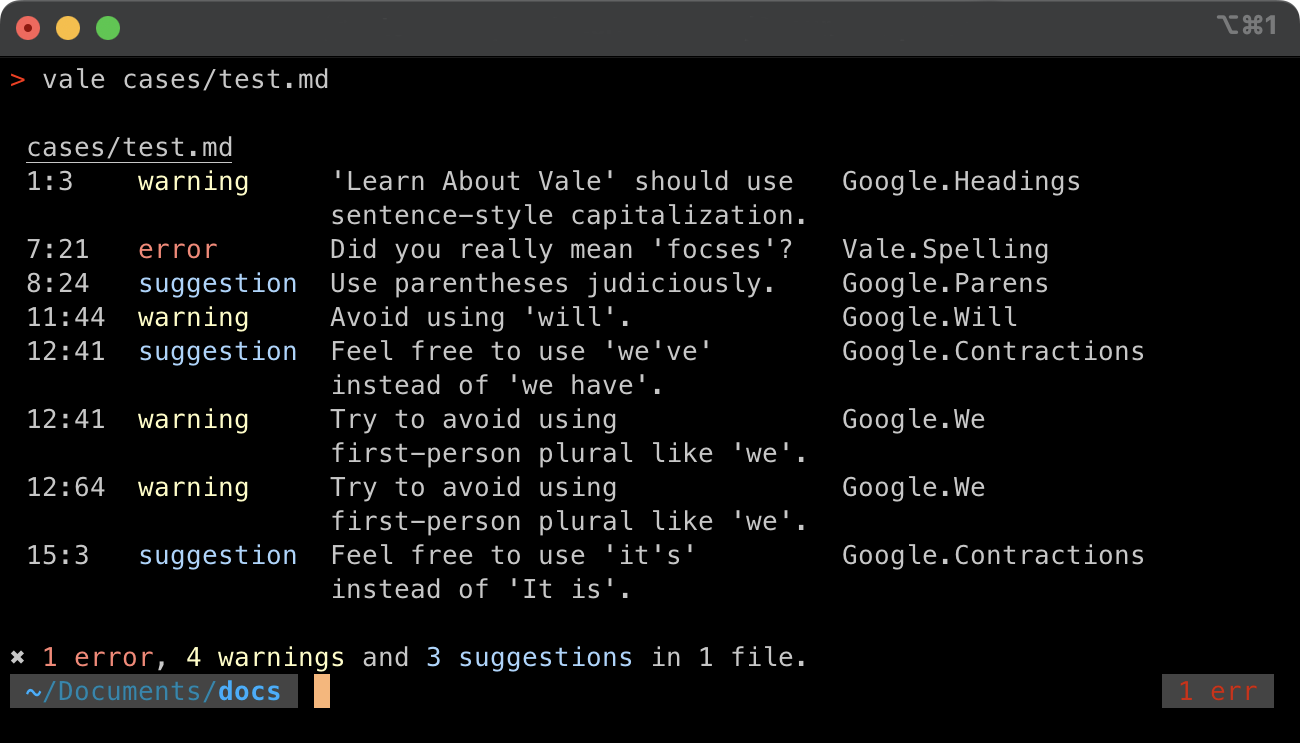

Vale

active · 80

The standard for docs-as-code style enforcement. Adopted by Grafana, Datadog, Meilisearch. Pragmatic Bookshelf published a dedicated book. HN 230pts, the strongest writing-tool HN signal. No real competitor for CI/CD prose linting. 5,299 stars.

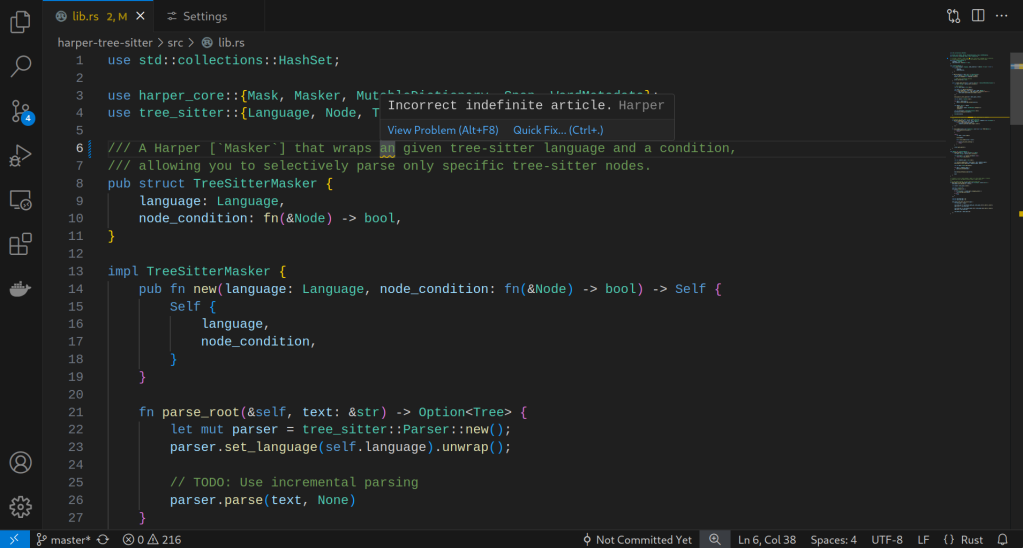

Harper

active · 85

Best privacy-first grammar checker for developers. 10,111 stars (2x Vale), HN 645pts (highest in entire content-writing category). Backed by Automattic. English-only, grammar-only: no style guides, no AI rewriting. Different tool than Vale: grammar vs style enforcement.

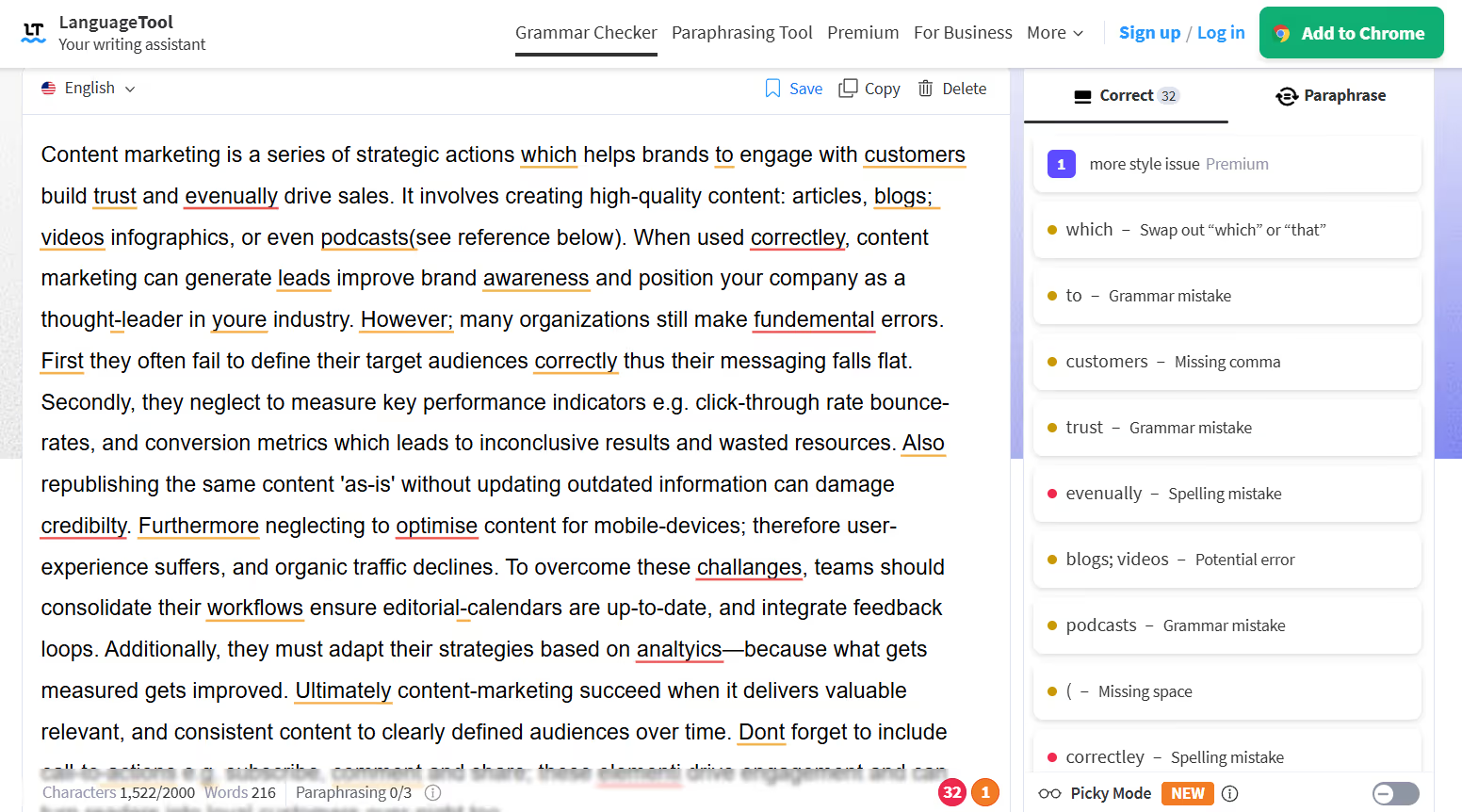

LanguageTool

active · 84

Best for multilingual teams and self-hosted deployments. 14,184 stars (highest in category), HN 370pts. 30+ languages, unmatched. Self-hostable with SOC 2 and GDPR compliance. The go-to for non-English support or on-premise deployment.

Sudowrite

active · 40

Category winner for AI fiction writing, with no real competitor. Custom Muse model, Story Bible for lore consistency, fiction-specific UX. HN 51pts. Endorsed by Kindlepreneur and NerdyNav. Users report 4x faster first drafts. $19-59/mo.

Writer.com

active · 38

Best for deep enterprise AI governance. Proprietary Palmyra LLMs, Knowledge Graph, AI Guardrails are genuinely differentiated features. VentureBeat coverage. But it has enterprise pricing, mixed reception for Palmyra quality vs frontier models, and thin independent reviews.

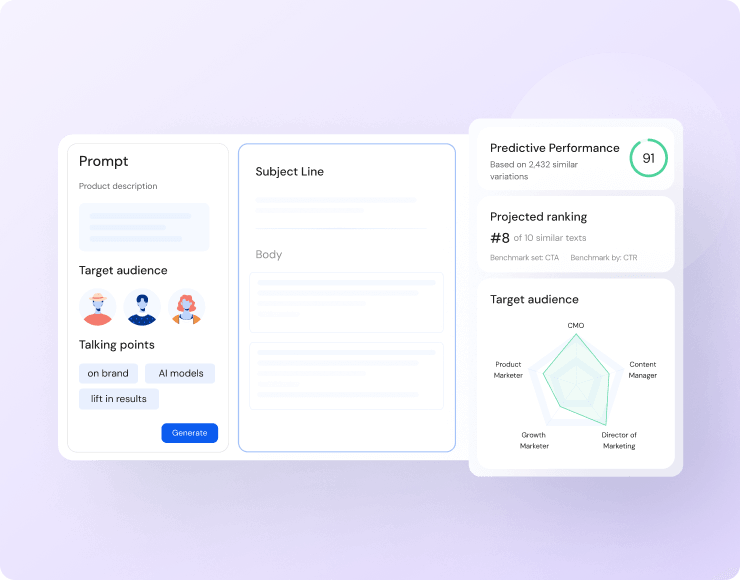

Anyword

active · 40

Best for performance marketers (narrow subcase). Unique Predictive Performance Score is the only quantified predictive scoring in the category. G2-verified reviews confirm adoption. $49-499/mo limits it to agencies/enterprises. Not a general-purpose content tool.

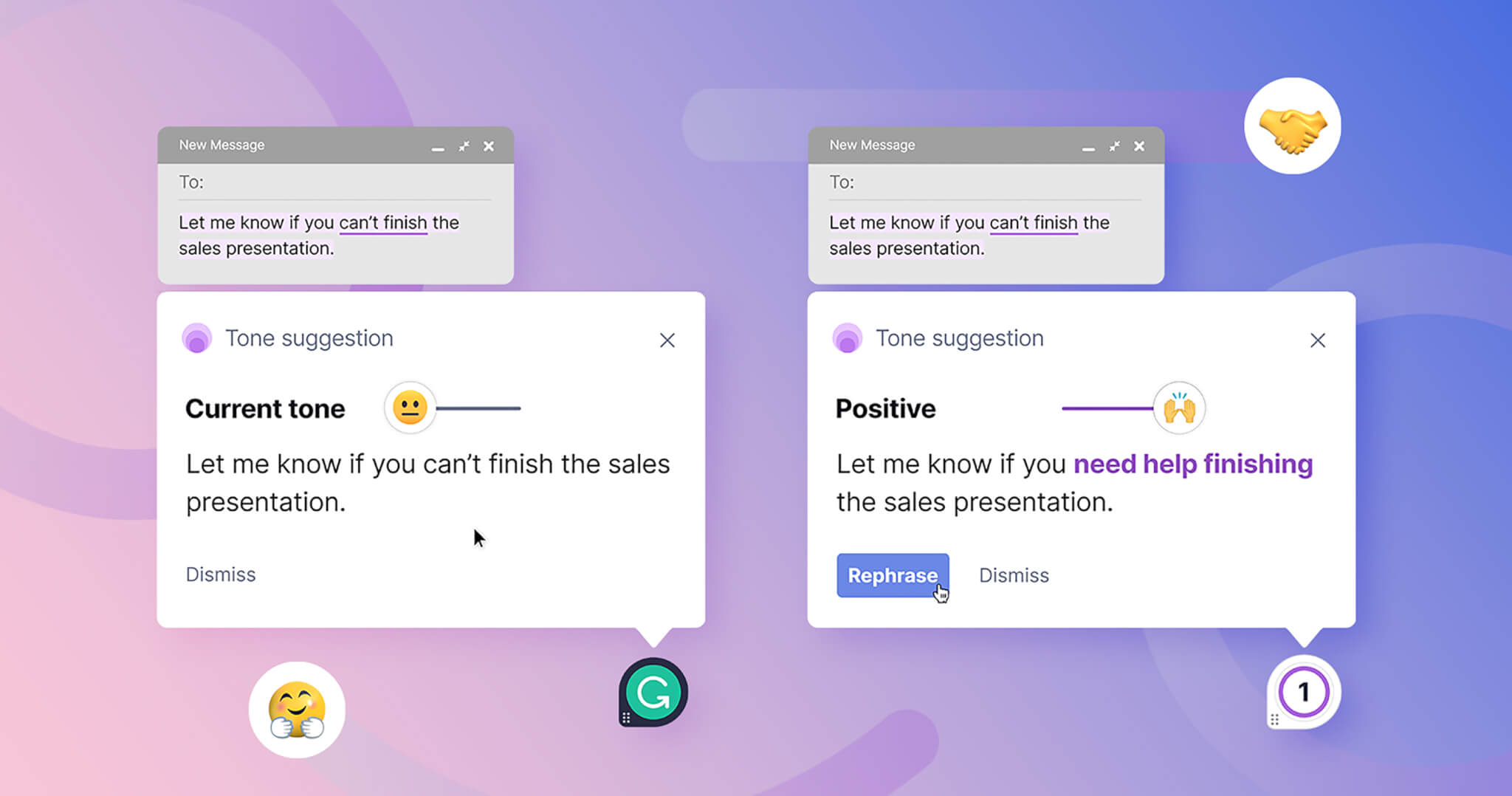

Grammarly / Superhuman

active · 45 · Best for broad writing assistance at enterprise scale. Near-universal adoption. Rebrand to Superhuman signals pivot away from pure writing toward AI productivity suite. HN community deeply skeptical (privacy/keylogger concerns, 464pts negative). Lighter governance than Writer.com.

Acrolinx

active · 35 · Best for regulated industries (narrow subcase). Only contender purpose-built for compliance-heavy content ops. Fortune 2000 focus. But almost entirely self-reported evidence: no HN signal, no GitHub presence, no independent reviews with substance. If you're in a regulated industry, evaluate. Otherwise, Writer.com covers governance better.

KoalaWriter

active · 35 · Best for high-volume SEO blog drafts (narrow subcase). One-keyword-to-article pipeline is fast for affiliate/niche site operators. $9-49/mo. But output requires heavy editing, rarely ready to publish. A draft factory, not a content tool.

alex

active · 30 · Best for inclusive language enforcement (narrow subcase). Unique niche: no competitor does this. Works well in CI alongside Vale. 5,091 stars. Narrow scope but valuable as a CI/CD complement.

write-good

active · 30 · Lightweight naive linter. Good starter tool, but Vale subsumes its functionality via the write-good style package. 5,067 stars. Use Vale instead for serious docs-as-code workflows.

proselint

stale · 72 · Stale: high issue count (247 open), and Vale covers its functionality with better maintenance. 4,519 stars. Use Vale with the proselint style package instead.

Ocoya

watch · 35 · Budget social media AI at $15/mo. 30+ integrations but limited differentiation vs Postiz (open-source, self-hostable, agent CLI) or native platform tools. AI agent capabilities are 'coming soon', which lags the trend. Not strong enough for top ranking.

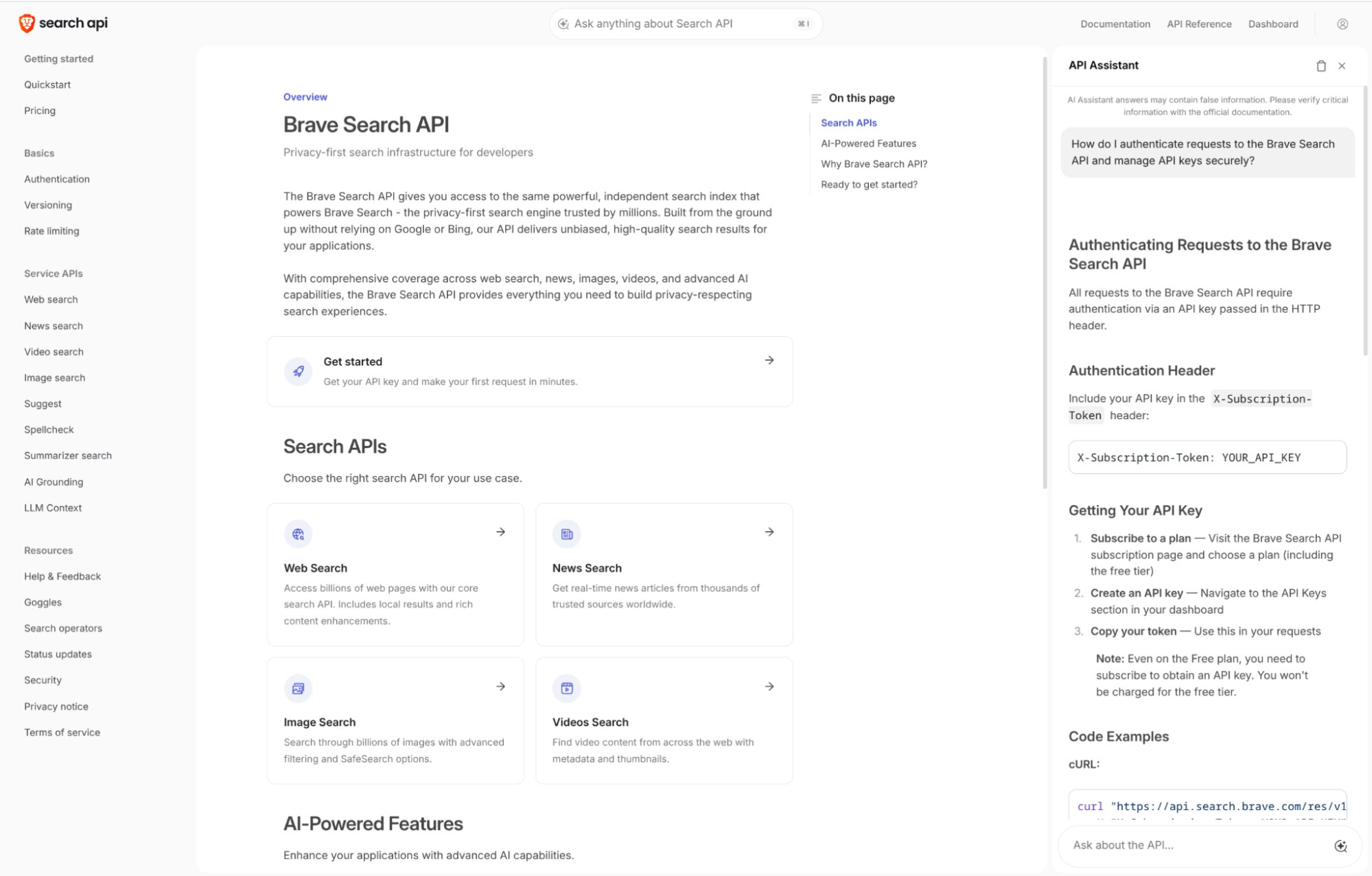

Brave Search API

active · Official · 72 · #1 search API for AI agents: fastest latency (669ms), highest independent benchmark score (14.89), SOC 2 Type II attested. Default recommendation for general-purpose agent search.

SearXNG

active · 88 · #4 in search-news: the only option where no query ever leaves your infrastructure. 26,644 stars, active development. Not independently benchmarked on quality, but unmatched on privacy and cost.

Tavily

active · Official · 40 · #5 in search-news: biggest distribution advantage via LangChain default integration. AIMultiple 13.67 (#5) shows meaningful gap below top-4. /research endpoint GA adds deep research lane. Nebius acquisition ($275-400M) adds strategic uncertainty but strongest financial runway.

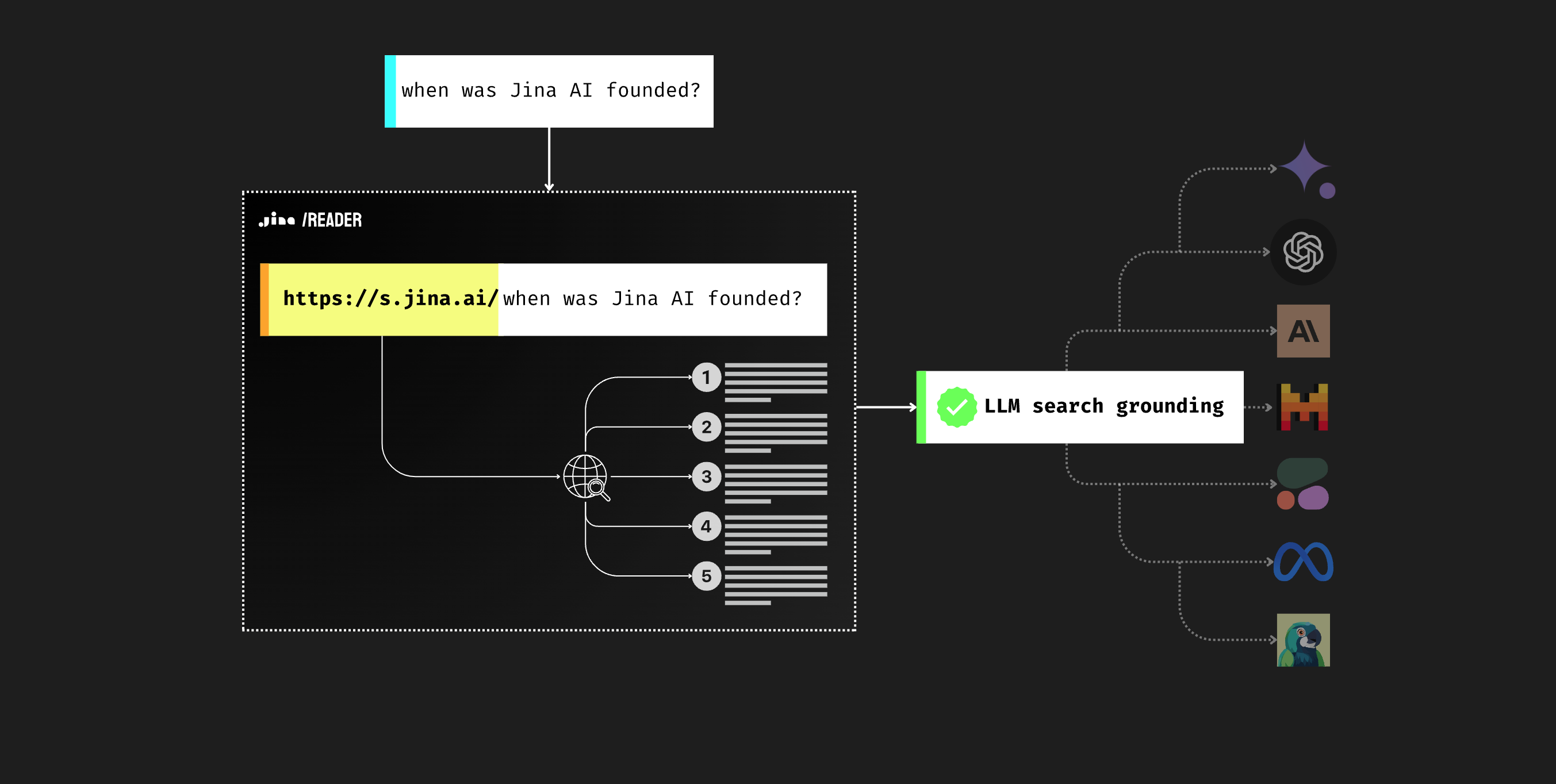

Jina Reader

stale · Official · 60 · #6 in search-news: effectively dead. No commits since May 2025 (10+ months). ReaderLM-v2 is strong for edge/on-device but hosted API only. Firecrawl is a strict superset. Do not recommend for new projects.

Crawl4AI

active · 93 · #7 in search-news: the open-source self-hosted choice. 62K stars, Apache-2.0, actively maintained (v0.8.5 released 2026-03-18). ScrapeOps rates 'best open source' (7/10). Fork count nearly matches Firecrawl (6,353 vs 6,516) showing heavy dev usage. Wins on license, cost, and developer control.

Parallel AI Search

active · Official · 40 · Below cut line in search-news. Top-4 AIMultiple quality (14.21) but 20x Brave's latency makes it async-only. Extreme pricing ($300-$1,200 CPM) limits to high-value enterprise. Closed source, no MCP server. Best deep research API if latency and cost don't matter.

You.com Search API

active · Official · 35 · Below cut line in search-news. Research API launched Feb 26, 2026 with strong self-reported benchmark claims (#1 DeepSearchQA). Too new for independent verification: no HN traction, no AIMultiple placement. If claims verified, jumps to ranked list immediately.

Linkup

watch · Official · 35 · Below cut line in search-news. 86K weekly downloads suggest real adoption despite 43 GitHub stars. Strong angel backing (Datadog CEO, Mistral CEO). All benchmark claims self-reported, with no independent verification yet.

Perplexity Sonar API

active · Official · 40 · Below cut line in search-news. Consumer brand doesn't translate to API performance. AIMultiple #7 (12.96), 11K+ ms latency, BrowseComp 8%. HumAI shows highest accuracy (87%) but at cost of speed.

Valyu DeepSearch

watch · Official · 35 · Below cut line in search-news. Unique proprietary data angle (50+ sources: SEC, clinical trials) but almost all evidence is self-reported. Minimal traction. Needs independent verification.

Serper

active · Official · 35 · Below cut line in search-news. Budget Google SERP wrapper. No semantic understanding, no independent index. Useful only when cost is the primary constraint.

Spider Cloud

active · 58 · Below cut line in search-news. Niche high-volume crawling tool. Benchmark claims entirely self-reported. Tiny community (2.3K stars).

Google Grounding with Search

active · Official · 35 · Below cut line in search-news. Platform lock-in (Gemini API only). Not a standalone search API. If Gemini Deep Research exits preview with MCP support, deep research lane shifts dramatically.

Bright Data MCP

active · Official · 65 · Below cut line in search-news. #1 in Browser MCP Benchmark (100% extraction success, 90% automation). 2,214 stars, 60+ MCP tools. Not a search API but web access infrastructure. The only option for scraping sites with aggressive anti-bot defenses.

Hyperbrowser MCP

watch · Official · 50 · Below cut line in search-news. Strong 90% browser automation (tied Bright Data #1) but 63% web extraction (worst among ranked). GitHub stale 4+ months (last push Nov 2025). Watchlist: strong HN traction and stealth capabilities, but maintenance risk.

ScrapeGraphAI

active · Official · 82 · Below cut line in search-news. 23K stars, 194 HN pts (strongest HN score in category), unique LLM graph pipeline approach. But only 14.6K weekly PyPI downloads vs Firecrawl's 752K, so the star count is likely inflated by viral novelty. Roughly 1,580 stars per 1,000 weekly downloads vs Firecrawl's 81.
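
The stars-to-downloads comparison in the ScrapeGraphAI entry can be reproduced with a few lines of arithmetic. This is a minimal sketch that assumes the quoted ratio means GitHub stars per 1,000 weekly package downloads, which is the reading consistent with the 23K stars and 14.6K weekly downloads cited there. The helper name `stars_per_thousand_downloads` is hypothetical, and the inputs are the rounded figures from the entry, so the result lands slightly below the quoted 1,580.

```python
def stars_per_thousand_downloads(stars: int, weekly_downloads: int) -> float:
    """Return GitHub stars per 1,000 weekly package downloads."""
    if weekly_downloads <= 0:
        raise ValueError("weekly_downloads must be positive")
    return stars / weekly_downloads * 1000


# Rounded figures quoted in the ScrapeGraphAI entry (assumed inputs).
scrapegraph_ratio = stars_per_thousand_downloads(23_000, 14_600)
print(round(scrapegraph_ratio))  # about 1,575 with these rounded inputs
```

A high value means many stars relative to installs, which is the pattern the entry reads as novelty-driven attention rather than sustained usage.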

Kimi Code (Moonshot AI)

watch · Official · 60 · Watch: real download signal (124K PyPI/week) and strong model capability (K2.5, HN 388 pts). Limited Western ecosystem integration and no SWE-bench Pro or Terminal-Bench scores. Best for Chinese developer ecosystem or teams using Moonshot AI models.

Kilo Code (Kilo-Org)

watch · 65 · Watch: real download signal (131K npm/week) and meaningful seed funding ($8M). OpenRouter-native architecture is a genuine differentiator for teams wanting model flexibility without managing API keys. Needs stronger differentiation beyond OpenRouter integration.

Cursor (Anysphere)

active · Official · 35 · Reference only in coding-clis: Cursor is primarily an IDE, not a CLI agent. $29.3B valuation and strong adoption are real, but closed-source, paid, and IDE-first puts it outside the terminal-native category. Best for developers who want a polished commercial IDE with integrated AI.

Warp (Warp Technologies)

active · Official · 65 · Reference only in coding-clis: Warp is an AI terminal, not a coding CLI agent. Strong UX and 75.8% SWE-bench Verified are real signals, but 4,350 open issues and closed-source licensing are concerns. Best for developers who want an AI-first terminal experience rather than a code agent.

Kiro (AWS)

watch · Official · 42 · Watch: spec-driven approach is architecturally sound and GovCloud presence is meaningful for regulated industries. But too early to rank higher without a verified benchmark, user count, or independent case study. Outage controversy unresolved.

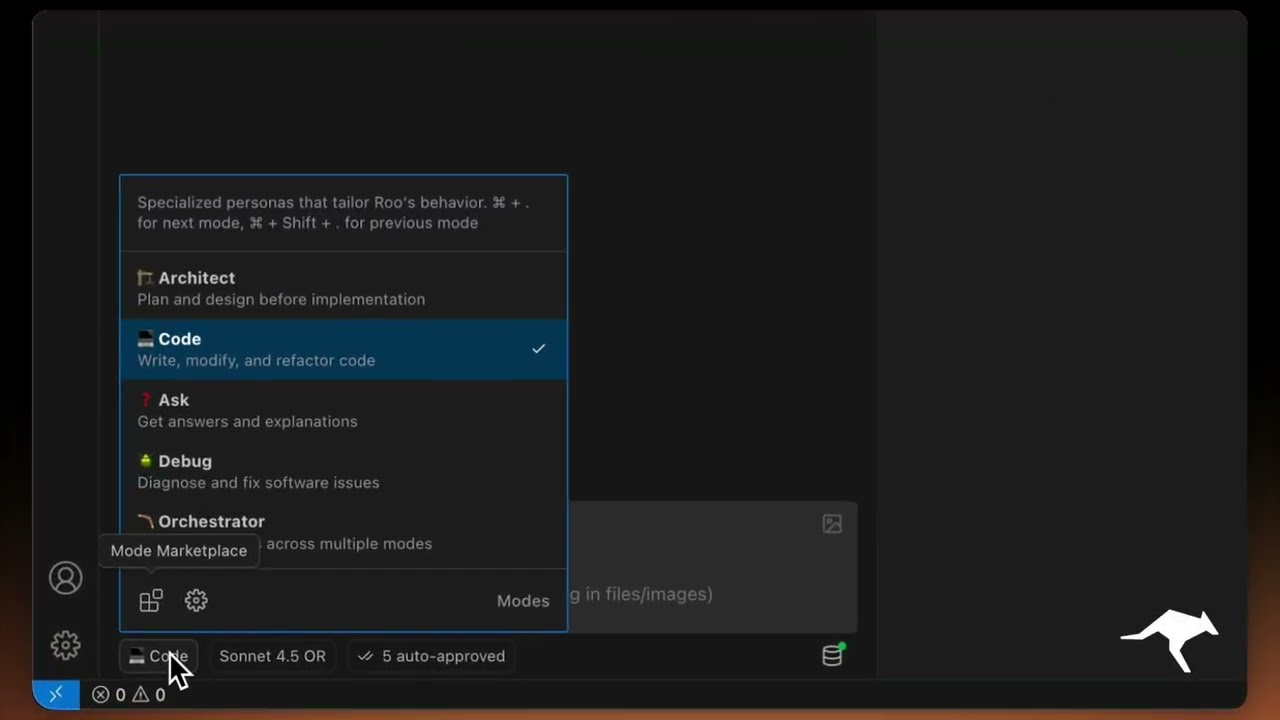

RooCode (RooVeterinaryInc)

active · 68 · #9 coding CLI: best security posture among VS Code agents (SOC 2 Type 2 compliance). 5.0/5 VS Code rating on 1.37M installs is the strongest quality signal in IDE-embedded segment. Custom Modes (security reviewer, test writer, architect personas) are practical differentiation beyond Cline fork origins. Clean security record.

Gamma

active · 40 · Default choice for AI pitch decks. Market leader by every quantitative measure: $102M ARR, 70M users, TechCrunch-verified. Design quality is good but can feel 'generic' at investor scale; Slidebean wins for active fundraising workflows. Lowest-friction entry at $8/mo.

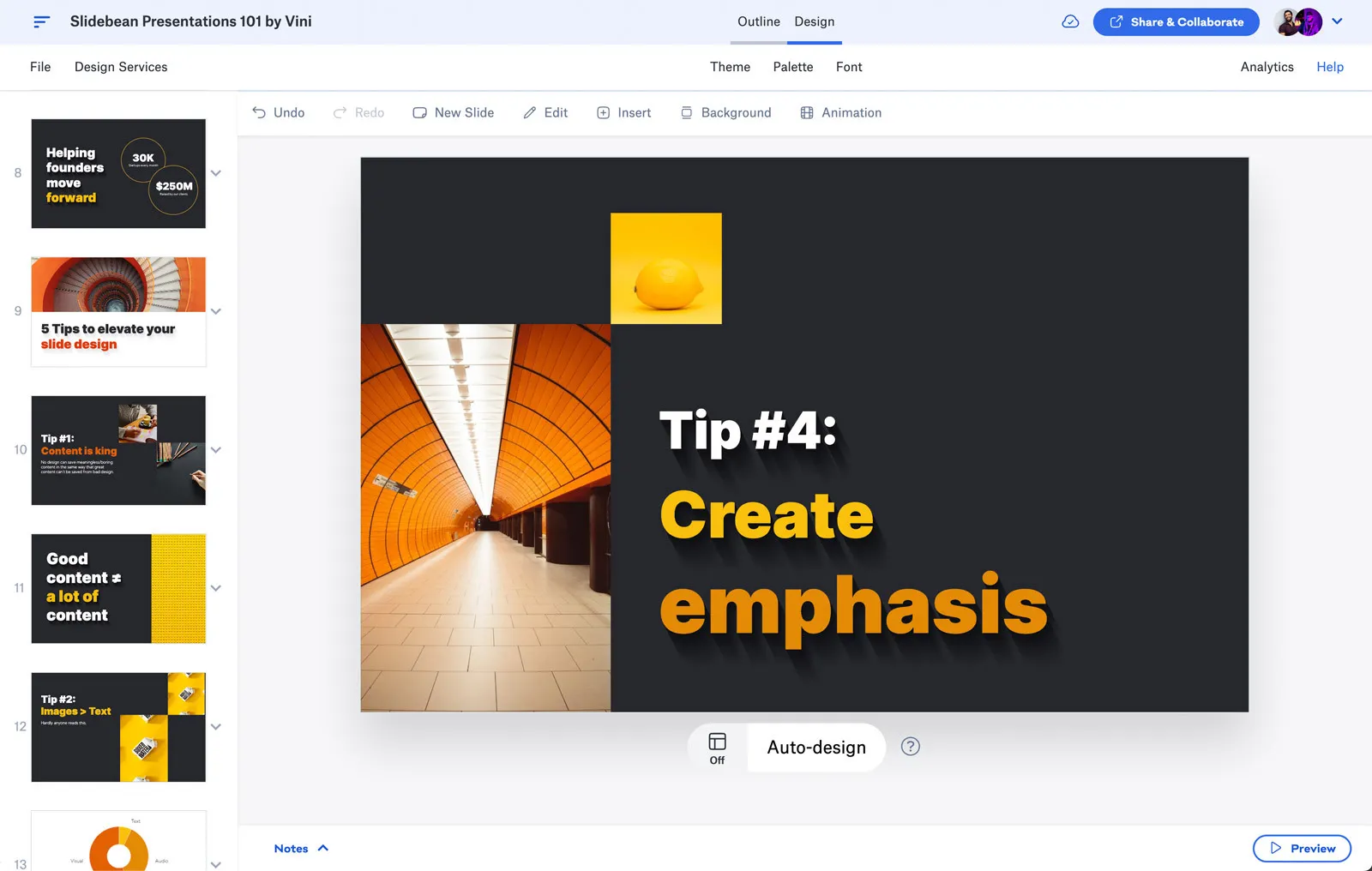

Slidebean

active · 40 · Best for active fundraising. Wins over Gamma when you need investor CRM + financial slides in one tool. 30K+ startups served, 500K YouTube subscribers give social proof. If you just need a great-looking deck fast, Gamma wins.

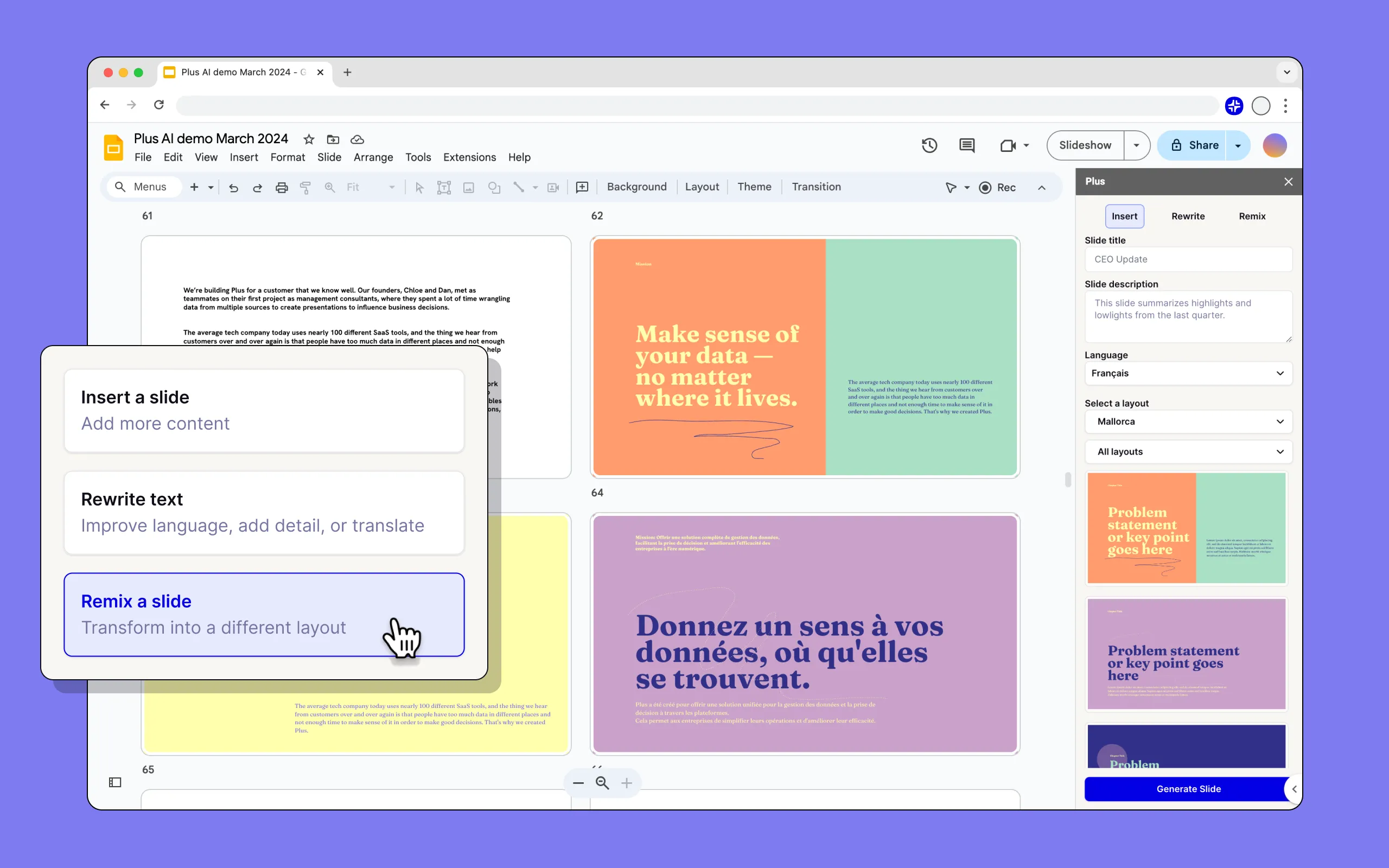

Plus AI

active · 35 · Best if you live in Google Slides or PowerPoint. 1M+ users, top-rated on Google Workspace Marketplace and Microsoft AppSource. SOC 2 Type II. Wins on zero learning curve but lacks standalone design capabilities of Gamma.

Alai

watch · 35 · Best design quality in AI decks per independent comparison (Reprezent test across 11 tools). Worth considering when investor-facing aesthetics matter more than speed. Lacks Gamma's scale and Slidebean's fundraising workflow.

o11

watch · 35 · Wall Street Prep #1 for AI financial modeling. Creates real IB-grade Excel output (linked formulas, not summaries). But dangerously underfunded ($500K seed, ranks 818th on Tracxn). High survival risk despite strong product signal.

Tracelight

active · 40 · Best Excel-native AI assistant for financial modeling. IB/PE practitioners report 90% time savings. FP&A Guy podcast endorsement adds credibility. $3.6M seed is modest but growing. Wins for Excel power users who need formula generation, not PDF extraction.

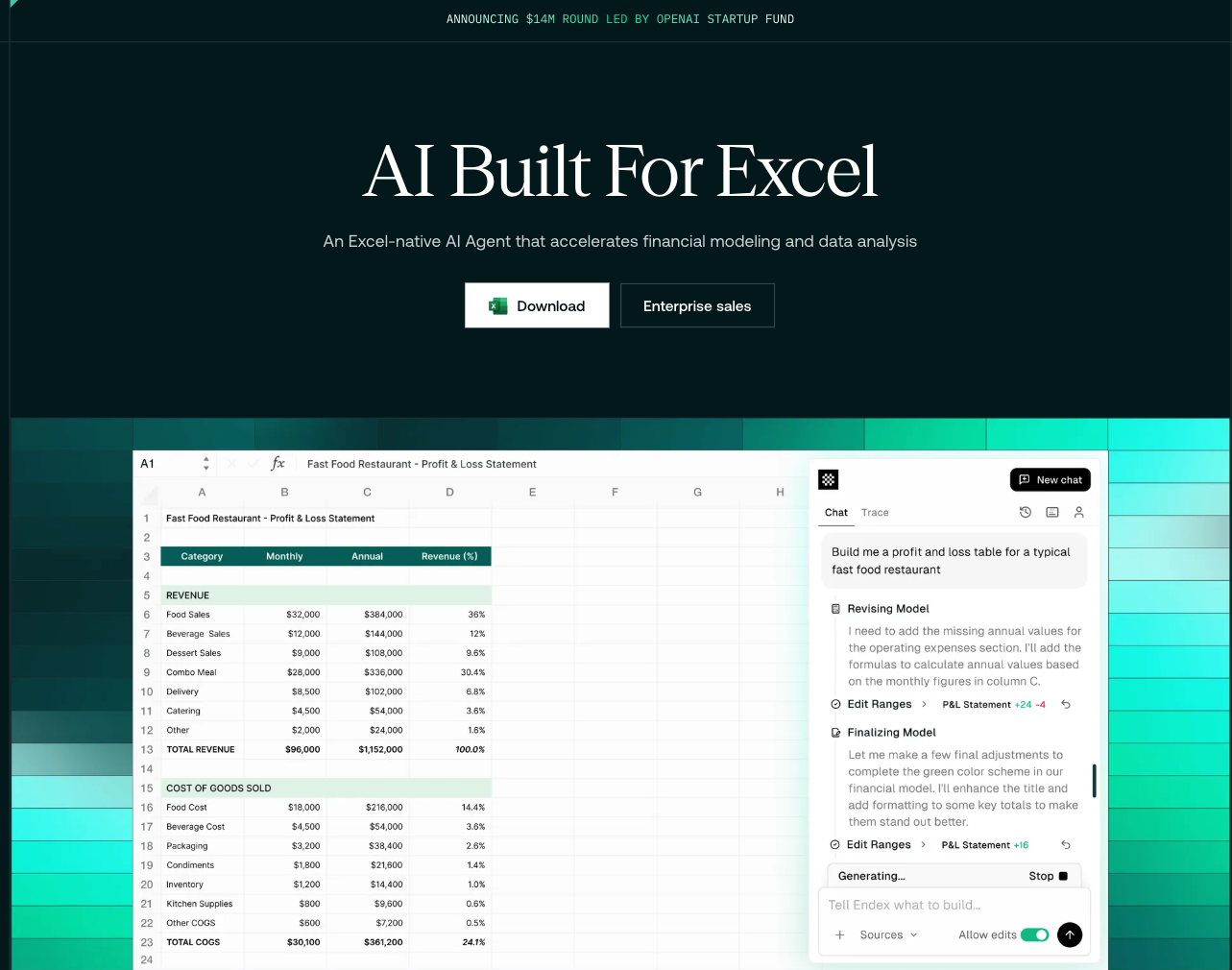

Endex

active · 45 · Best-funded AI financial modeling tool ($14M, OpenAI-led). Unique PDF-to-Excel pipeline using vision models. Featured by OpenAI as showcase customer. Wins for 'Research Associate' niche: extracting data from PDFs into structured Excel. Not a full modeling tool like o11 or Tracelight.

Rows

active · 45 · Best AI-native spreadsheet for analysis. 89% first-try accuracy in public benchmark (self-run but reproducible methodology). $8/user/mo. Wins for general analysis; not suited for IB-grade three-statement models. 74% dynamic outputs is a unique capability.

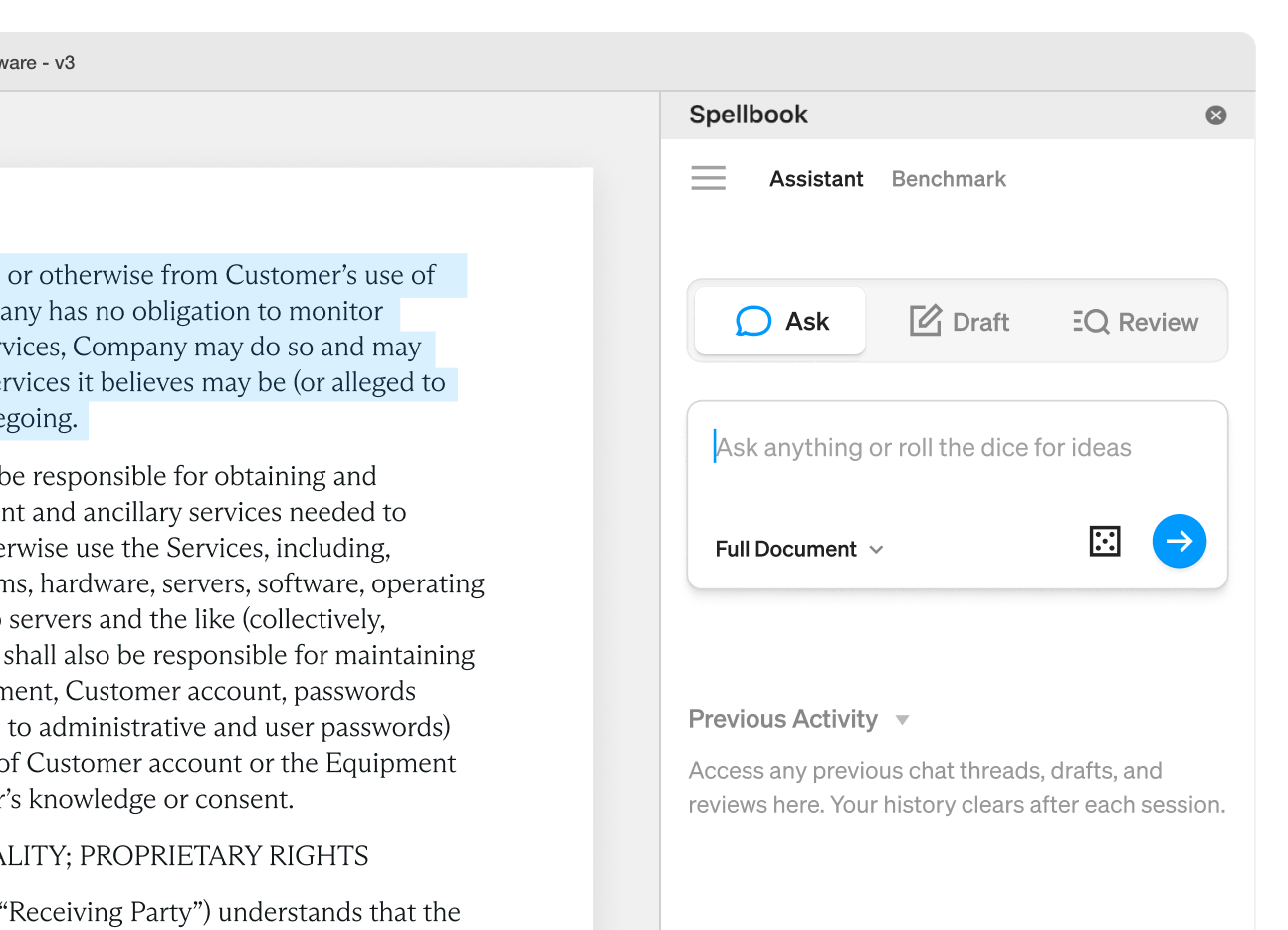

Spellbook

active · 42 · Market leader in AI contract review. $90M+ raised, on track for $100M ARR, 4,000 law firms across 80 countries. Exclusive CBA partner (40K members). Now securing $40M debt for acquisitions, which signals market consolidation. Best for law firms needing Word-native drafting assistance.

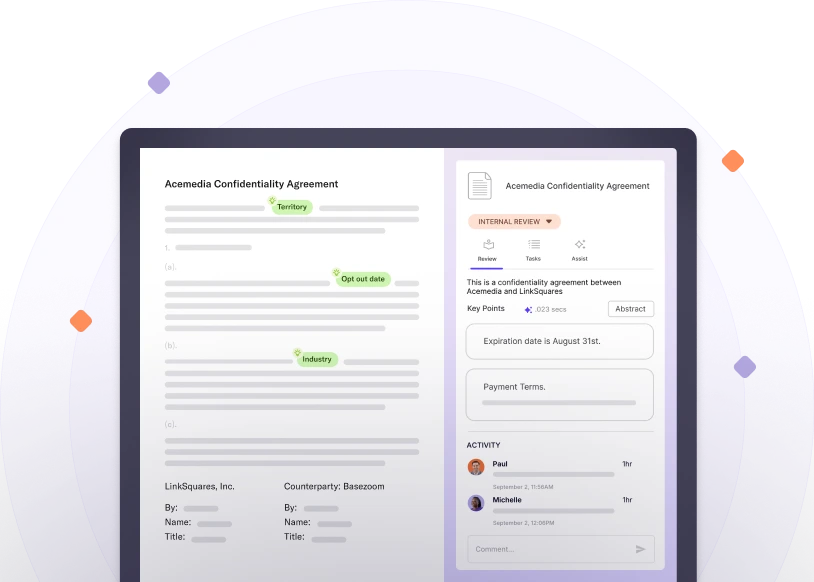

LinkSquares

active · 45 · Best for enterprise legal operations. $164M raised, $800M valuation, G2 leader 5 consecutive years. Wins for bulk contract analysis, M&A due diligence, and legal team management at scale. Not a drafting co-pilot like Spellbook; it's a CLM platform with AI built in.

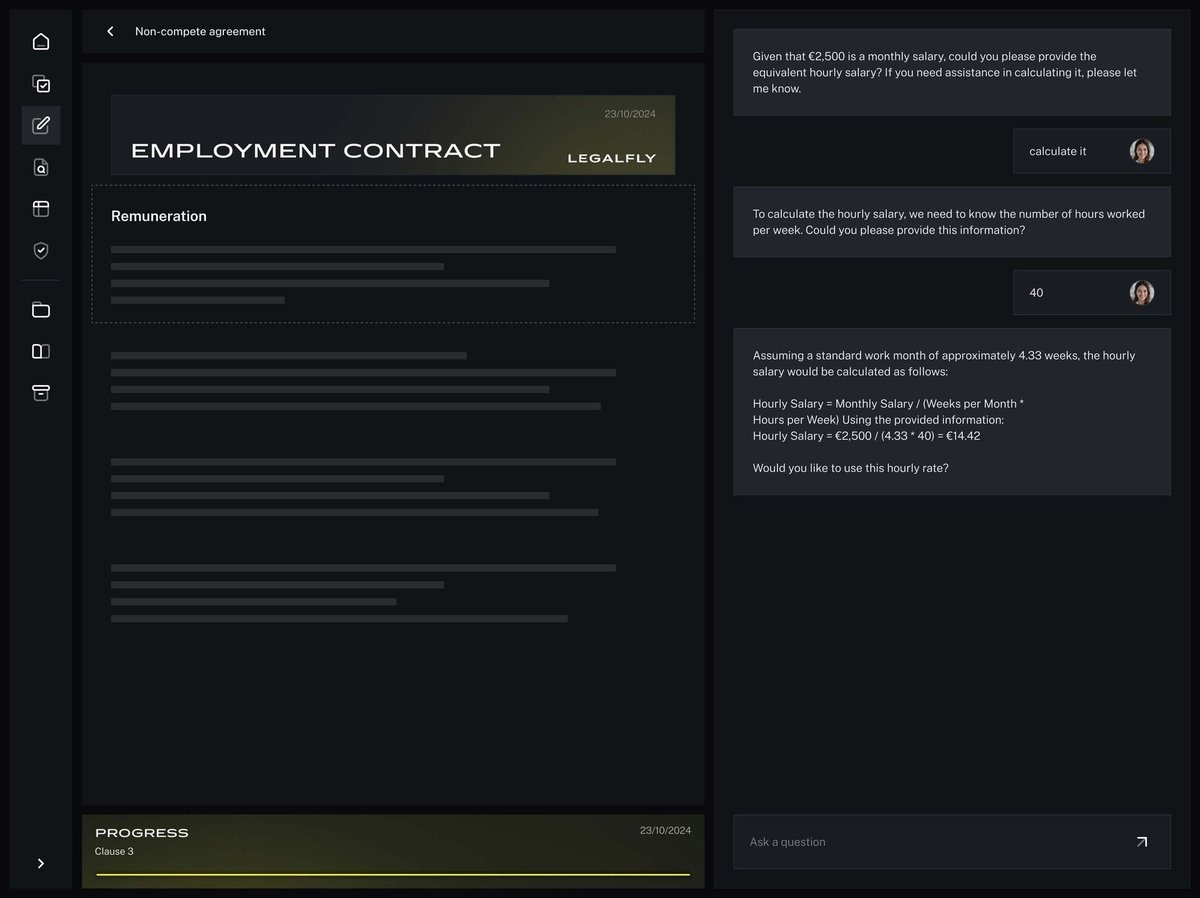

LEGALFLY

active · 45 · Fastest-growing legal AI tool (800% YoY ARR growth). Unique privacy differentiator: anonymizes every document before AI processing. Best for regulated industries (healthcare, EU government, financial services). SAP and Lufthansa validate enterprise adoption.

Tability

watch · 35 · Only dedicated AI-first OKR tool with public signal. Wins UI, OKR management, and reporting in independent comparisons. But evidence base is thin: no public funding, no benchmark, limited independent verification. Most teams should use Notion/Linear/Asana instead unless they want a dedicated OKR tool.

Midday

active · 88 · Best open-source business tool for freelancers and small teams. 14K+ GitHub stars (3.1x nearest OSS competitor). Strong Show HN (119pts). Self-hostable, bank connections, AI-powered receipt matching. Wins for developers/freelancers who want control over their business stack.

Frihet MCP

watch · 50 · First MCP-native ERP server, directly relevant to Claude-native business workflows. 31 tools for invoicing, expenses, and business ops via natural language. But only 3 GitHub stars, so too early to recommend for production. Worth watching for the MCP ecosystem angle.

invoice-mcp

watch · 33 · Minimal but functional MCP tool for PDF invoice generation via Claude. Too early to rank (5 stars) but interesting for the MCP ecosystem. Active development is a positive signal.

Proposify

active · 40 · Market leader in proposal management. G2 4.6/5 with ~1,000 reviews, Gartner Peer Insights recognition. Not AI-first but has AI-assisted content generation. Most teams can use Google Docs + Claude instead; Proposify wins only at volume with analytics, e-signatures, and template libraries.

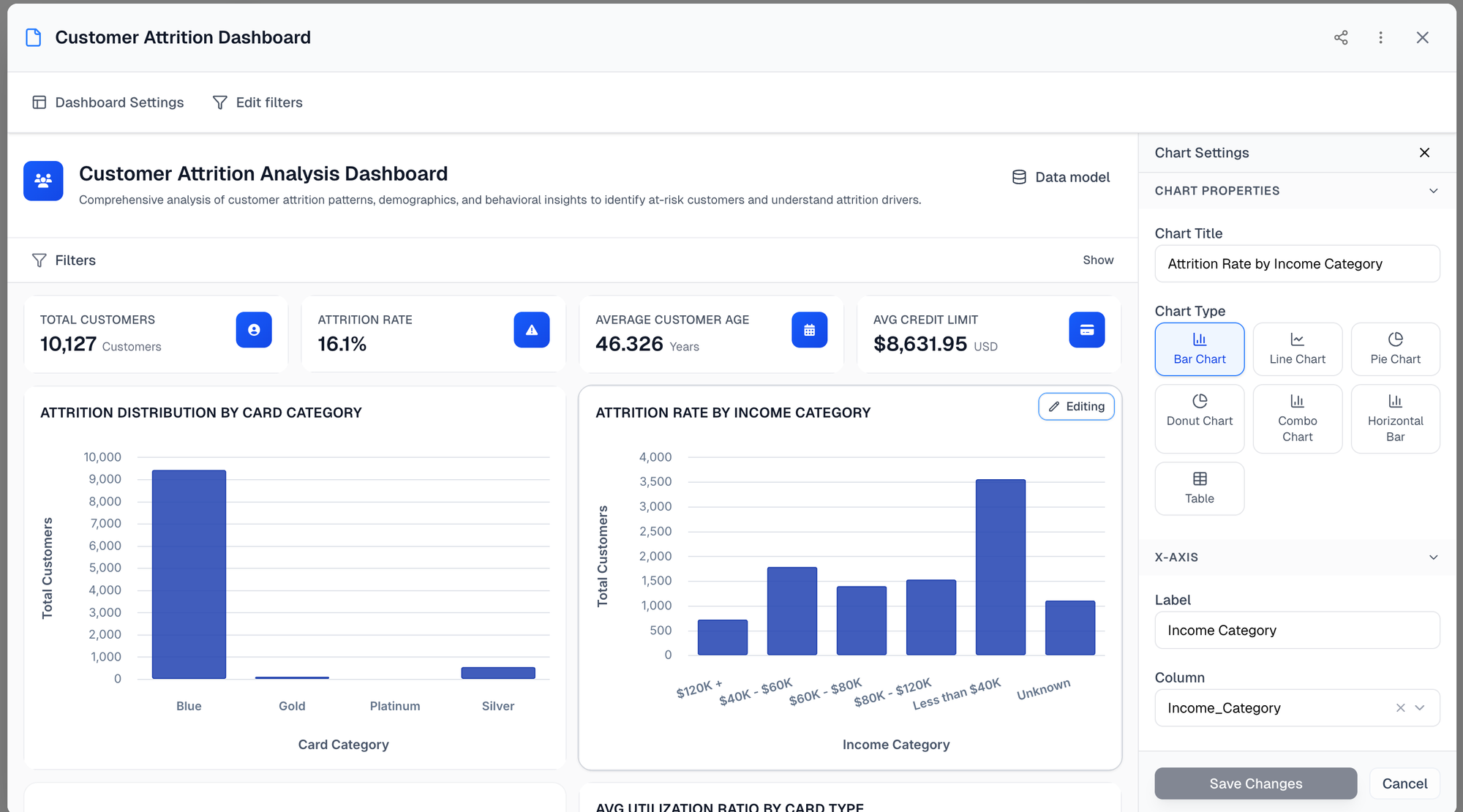

Bin (bi.new)

watch · 45 · Most promising AI-native BI experiment. 59pts Show HN is a solid signal for a new BI tool. But extremely early stage: file upload only, no database connectors. For serious BI use Metabase (OSS), Looker, or Tableau. bi.new is for quick ad-hoc analysis where a full BI stack is overkill.

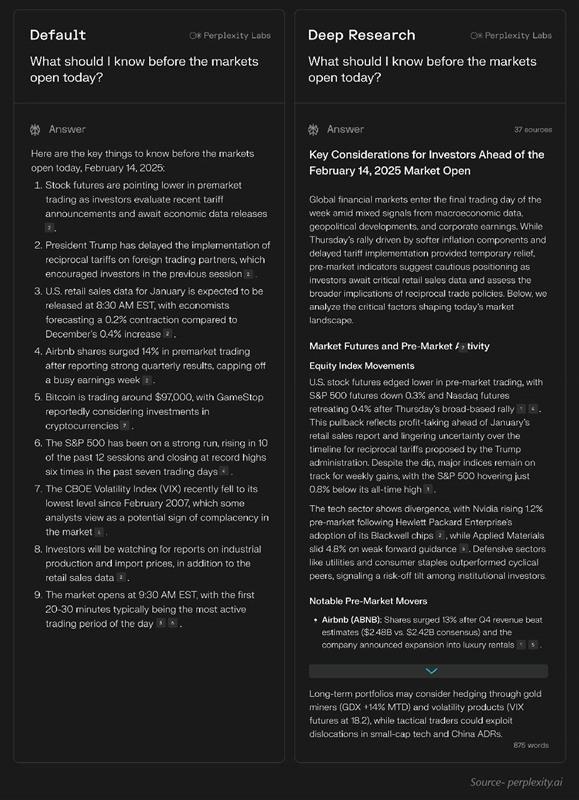

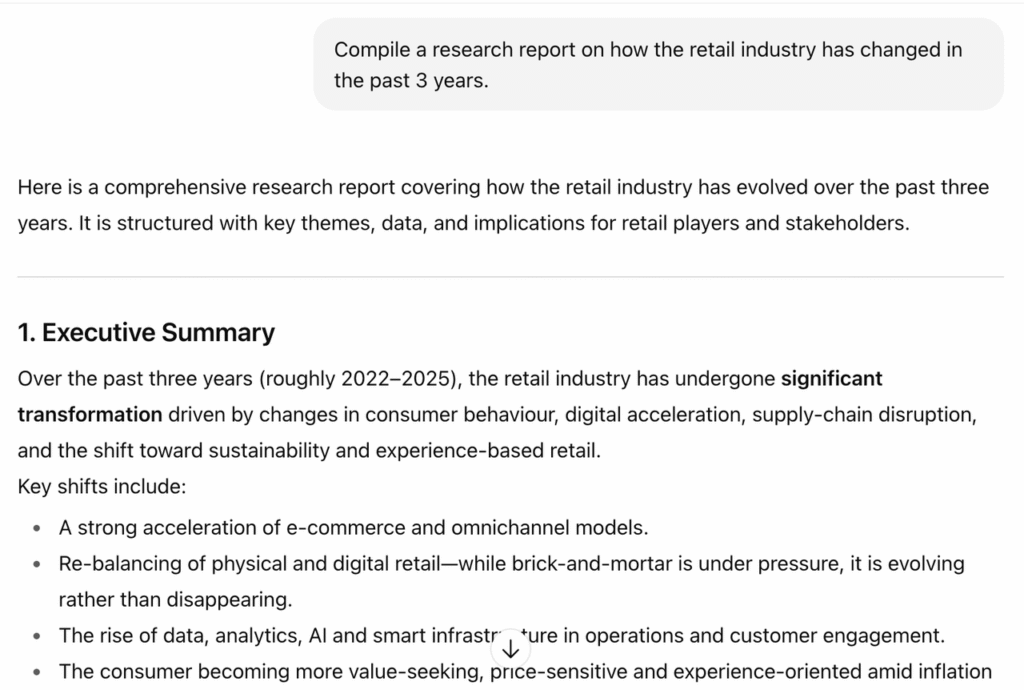

Perplexity Deep Research

active · Official · 43 · #1 in research. Speed king (15-30s vs minutes for competitors), highest citation reliability in independent tests, 93.9% SimpleQA accuracy (Helicone). Reddit academic communities endorse as go-to. Best value at $20/mo.

OpenAI Deep Research

active · Official · 42 · #2 in research. Reasoning king: 26.6% HLE and 72.57% GAIA are best reported results from any system. MCP support (Feb 2026) enables enterprise toolchain integration. Slower and pricier than Perplexity but unmatched for PhD-level questions.

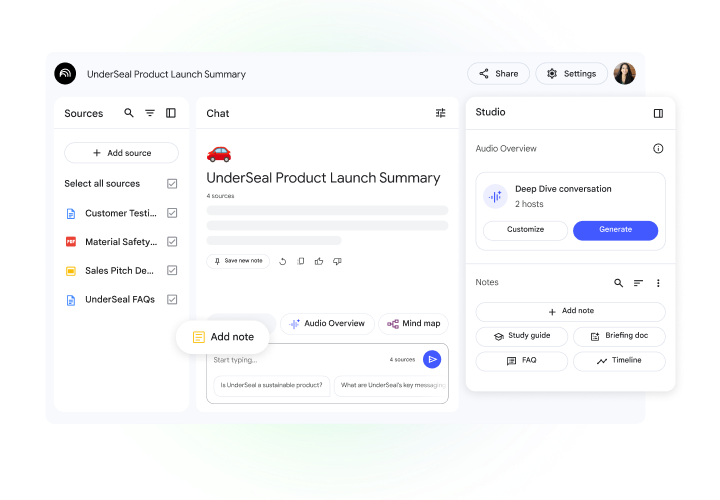

Google NotebookLM + Deep Research

active · Official · 42 · #3 in research. Best multimodal research tool: video, audio, PDFs, data tables. 100+ sources per query (highest coverage). 907 HN pts is the highest engagement of any tool in this category. More a research workbench than a search tool.

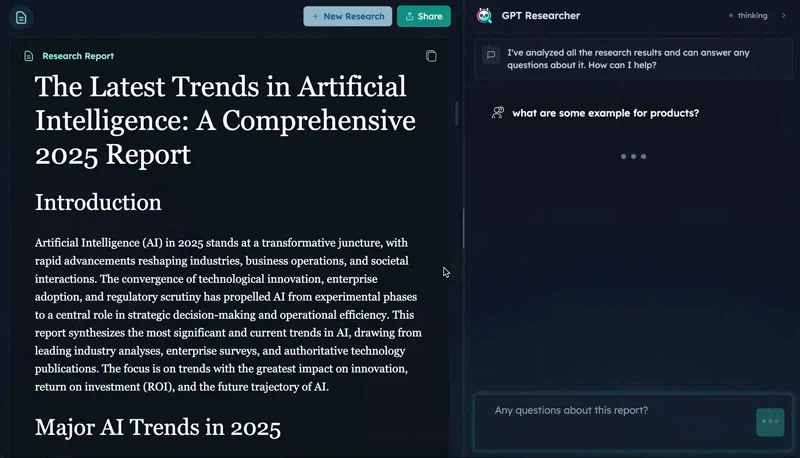

GPT Researcher

active · 89 · #4 in research. Open-source leader with the strongest third-party validation: CMU benchmark winner beating Perplexity and OpenAI on citation and report quality. Real PyPI adoption (15.9K/wk) and MCP integration make it the pick for self-hosted research pipelines.

Tongyi DeepResearch

active · 82 · #5 in research. Open-source disruptor: HLE 32.9 exceeds OpenAI's 26.6 on the same benchmark, runs on consumer hardware via MoE. Called 'the DeepSeek moment for AI agents' by VentureBeat. Ranked below GPT Researcher due to less proven adoption data.

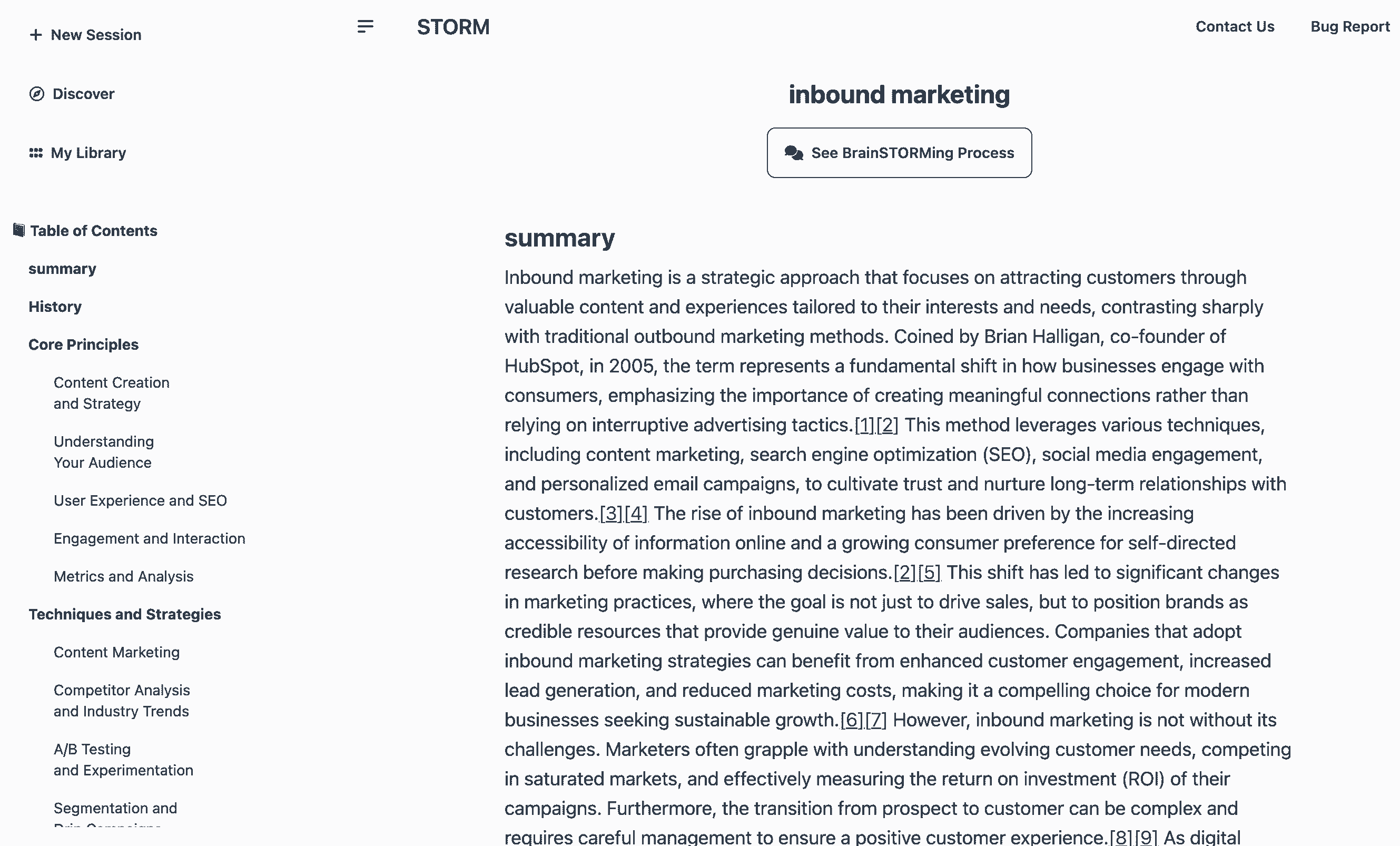

STORM (Stanford)

active · 66 · #6 in research. Best for structured knowledge curation: Wikipedia-style article generation with peer-reviewed citation quality (84.8% recall, 85.2% precision). Stanford pedigree, Co-STORM collaborative mode (EMNLP 2024). Less practical for agentic workflows than GPT Researcher.

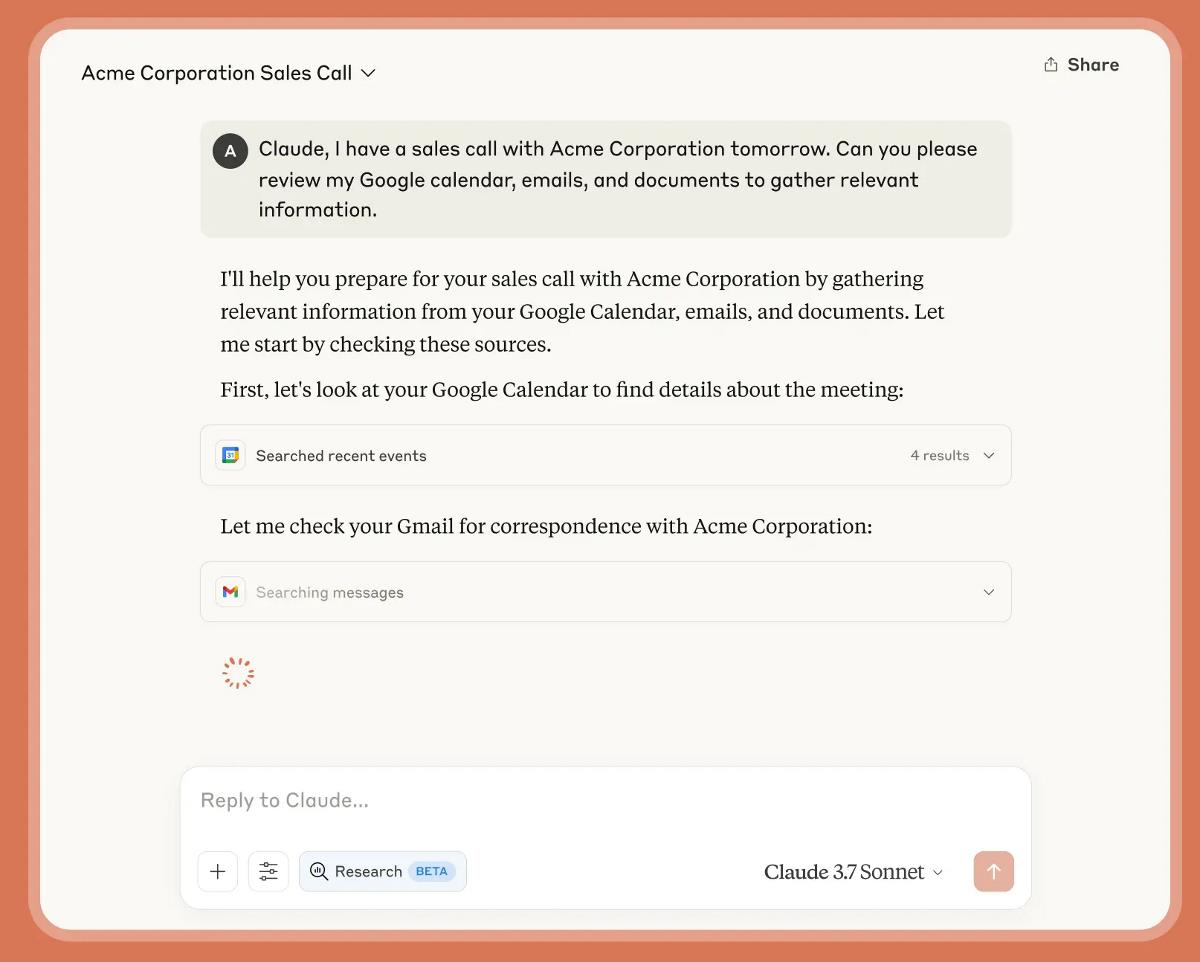

Claude Research

active · Official · 38 · #7 in research. Architecturally distinct multi-agent approach is theoretically superior, but 90.2% improvement claim is self-reported with no independent validation. No dedicated HN thread for Claude research. Promising but needs third-party benchmarks to justify a higher ranking.

Grok DeepSearch / DeeperSearch

active · Official · 35 · #8 in research. Uniquely positioned for social media and breaking news research: only tool with native X/Twitter integration. But no hard benchmark numbers from independent sources. Qualitative reviews only. Niche use case limits general ranking.

Elicit

active · Official · 40 · #1 in academic/scientific research subcategory. Purpose-built for systematic reviews and meta-analyses; no other tool does structured data extraction across dozens of papers. API launch (Mar 2026) enables programmatic workflows. Reddit r/GradSchool endorsement is strong organic signal.

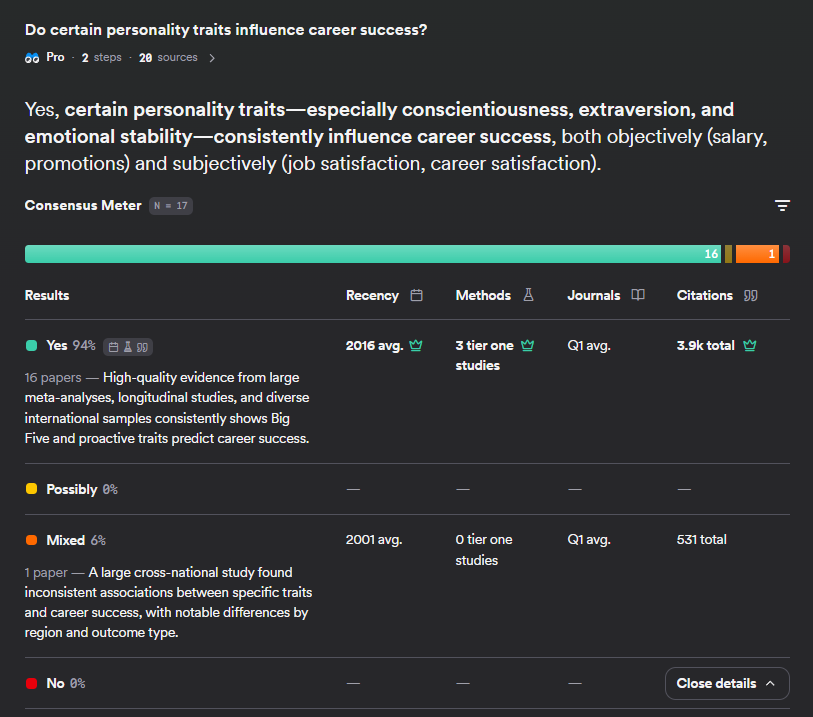

Consensus

active · Official · 38 · #2 in academic/scientific research subcategory. Unique consensus meter for claim-level evidence checking; no competitor has this. 200M+ papers via Semantic Scholar. Deep Search (1,000+ papers/query) launched but low HN signal (11 pts) raises adoption concerns.

Semantic Scholar

active · Official · 35 · Infrastructure layer for academic research, not a ranked research agent. 214M papers, citation graphs, free API. Consensus and other tools build on it. Essential for paper discovery and citation mapping but not a standalone research agent.

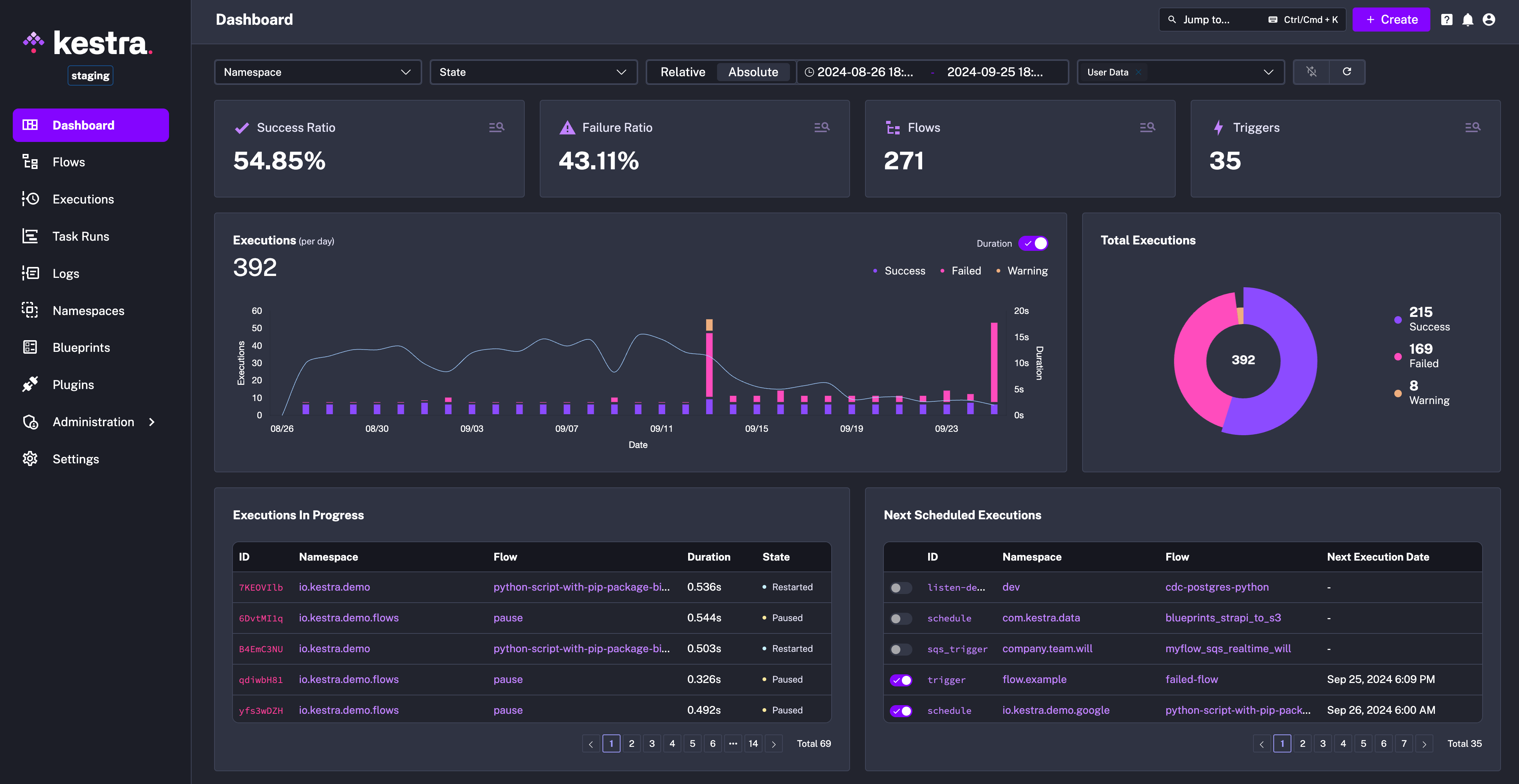

Kestra

active · 80 · Best pick for data engineering and DevOps teams migrating from Airflow, or anyone who wants declarative (YAML) workflow definitions with Git version control. If your automation is data pipelines or scheduled ETL, Kestra beats n8n. No MCP bridge limits relevance for Claude Code users.

Activepieces

active · 80 · Best for startups and non-developer teams who want open-source + simplicity. If your team isn't technical enough for n8n but wants open-source, choose Activepieces. Fewer integrations (200+ vs n8n's 1,000+) and no MCP bridge limit power-user appeal.

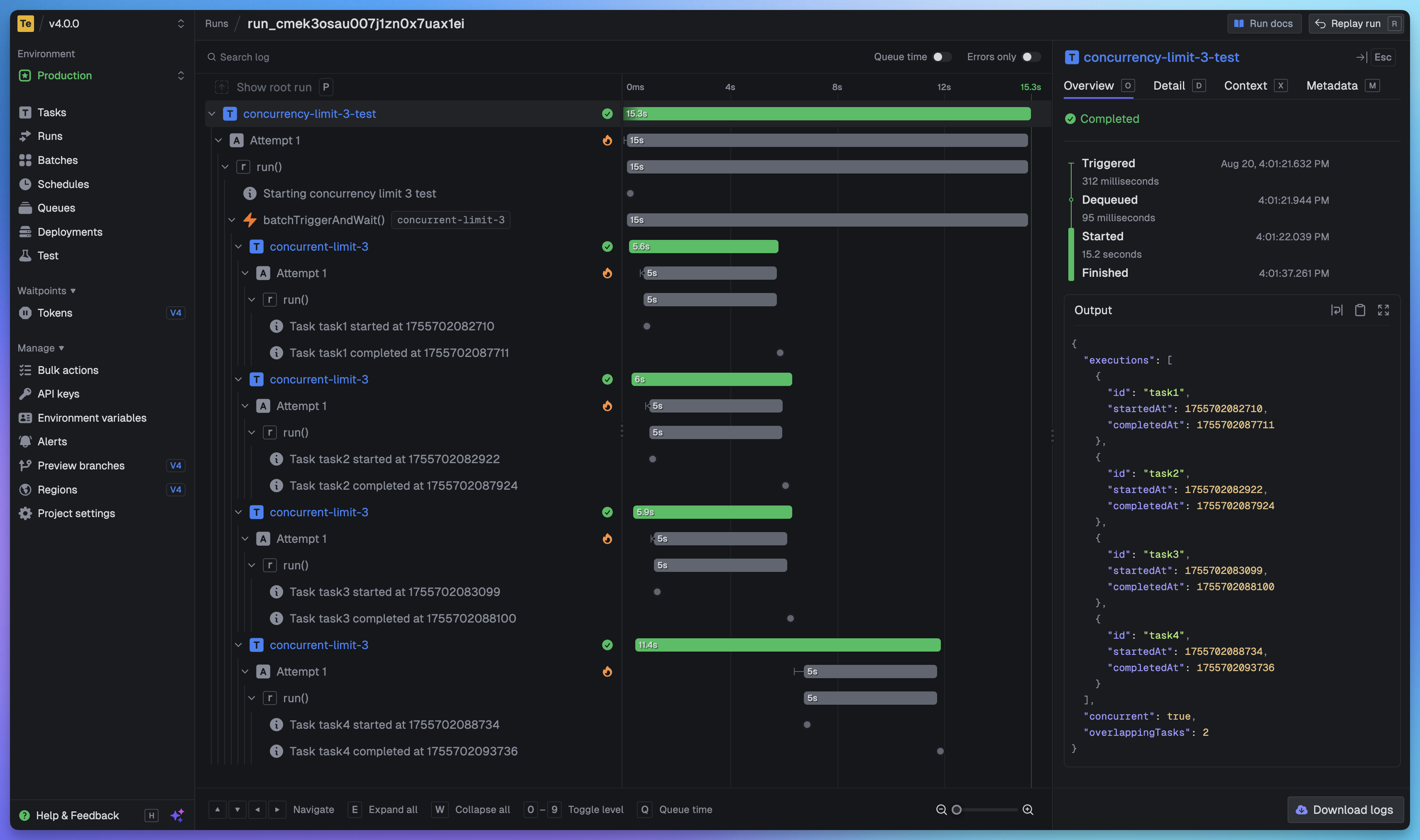

Trigger.dev

active · 92 · Best for TypeScript developers who need background jobs with long-running compute or AI agent workflows. For heavy compute tasks that exceed serverless limits, Trigger.dev beats Inngest. Transparent security incident post-mortem (262 HN pts) builds trust.

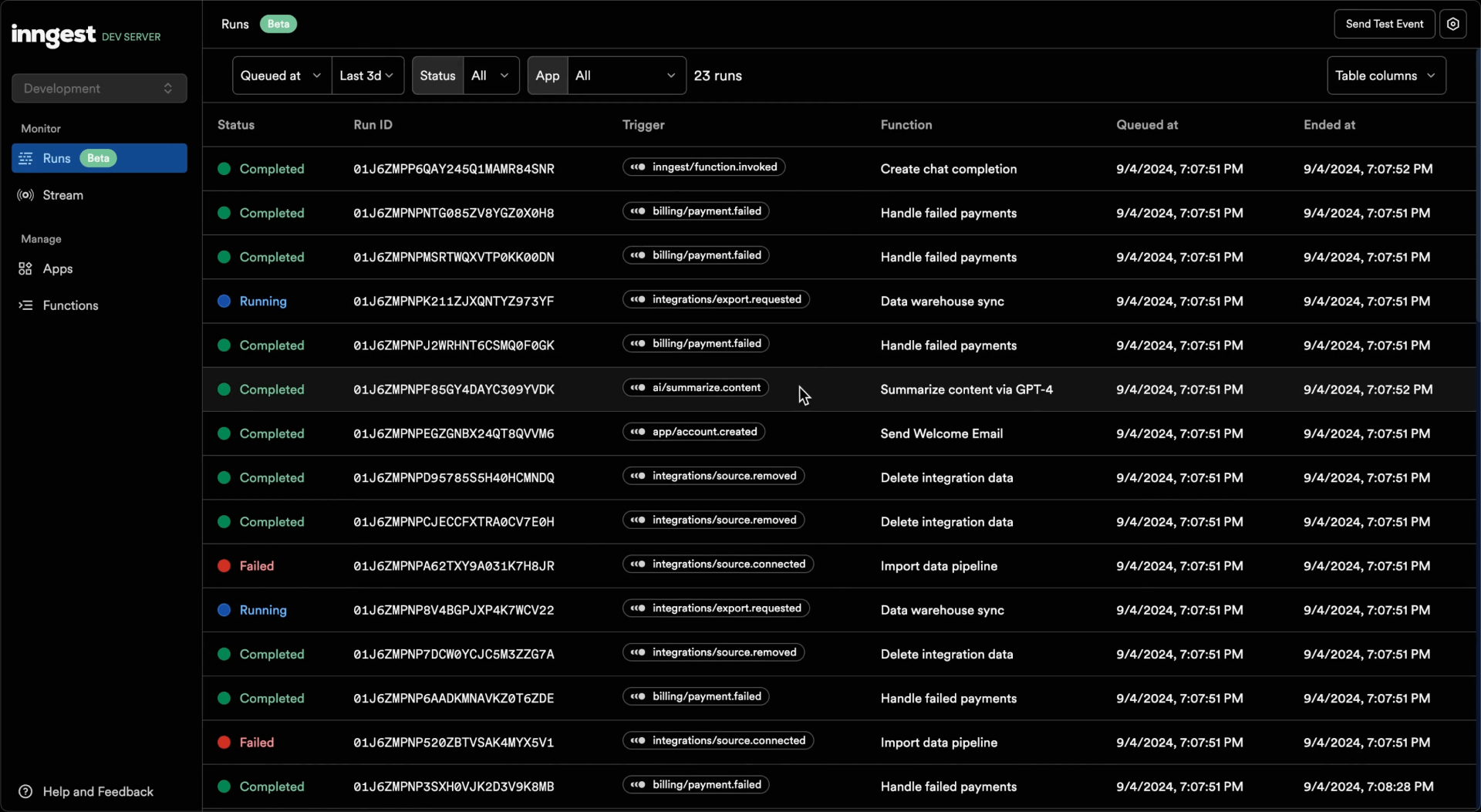

Inngest

active · 90 · Default choice for Next.js/Vercel teams needing serverless workflow orchestration with AI inference built in. 499K npm downloads/wk (highest in automation by 2x) proves deep practical adoption despite modest star count. Cannot self-host (ELv2 license), which limits appeal for on-prem teams.

Windmill

active · 92 · Best for developers who want workflows-as-code rather than visual canvases. Purpose-built for internal tooling, DevOps automation, and script orchestration. If your automation is 'run these scripts in sequence with a UI,' Windmill is the answer. No MCP bridge limits Claude Code integration.

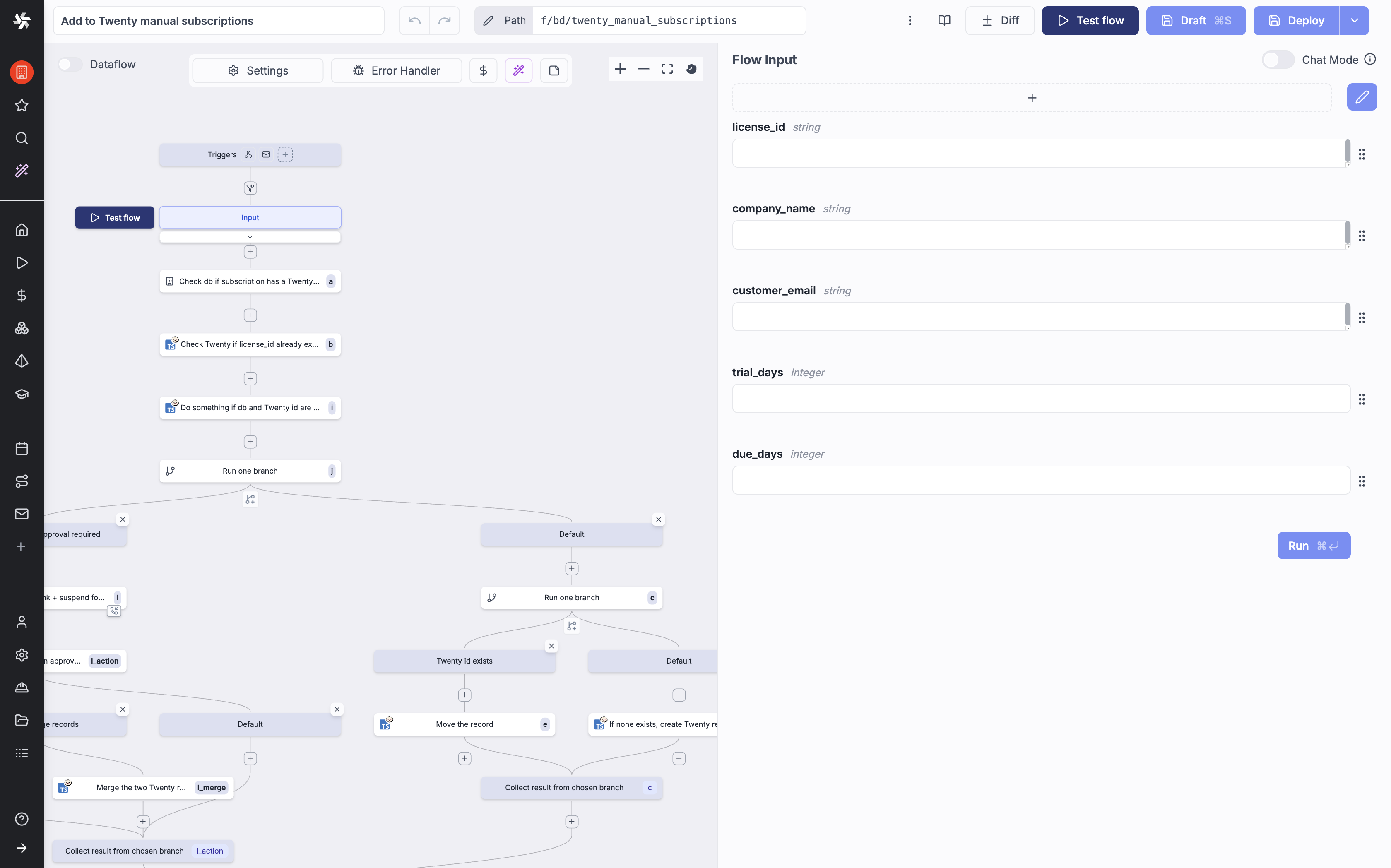

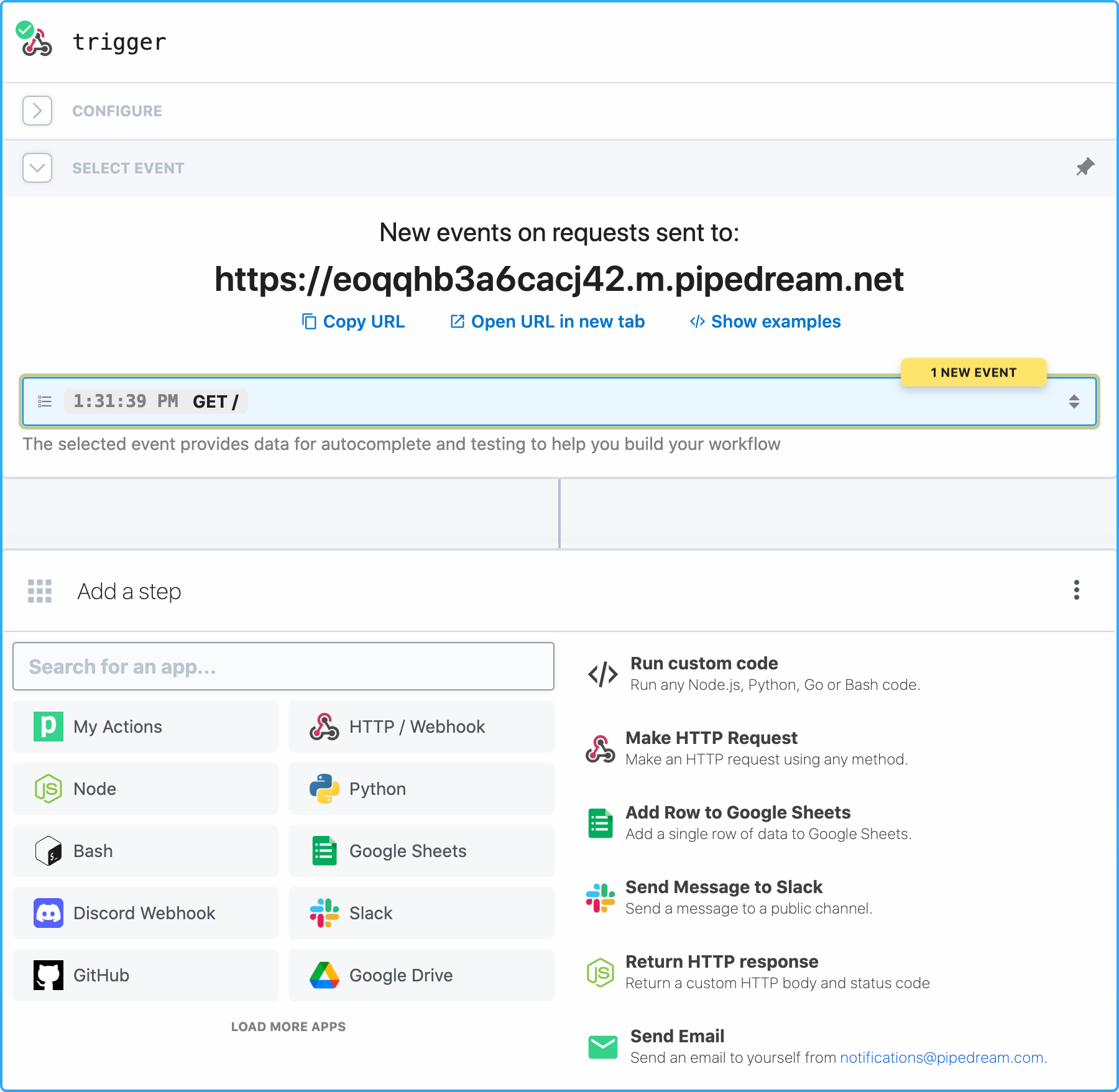

Pipedream

active · 90 · Best for developers who need quick MCP access to a massive API surface without building their own auth. For one-off API integrations via MCP (no workflow needed), Pipedream's free tier is the fastest path. 4,362 open issues raise maintenance capacity concerns.

Marimo

active · 93 · Best-in-class reactive notebook, the Jupyter replacement that actually works. Reactive execution guarantees reproducibility. Pure .py format is natively agent-readable. The natural choice for agent-assisted data workflows.

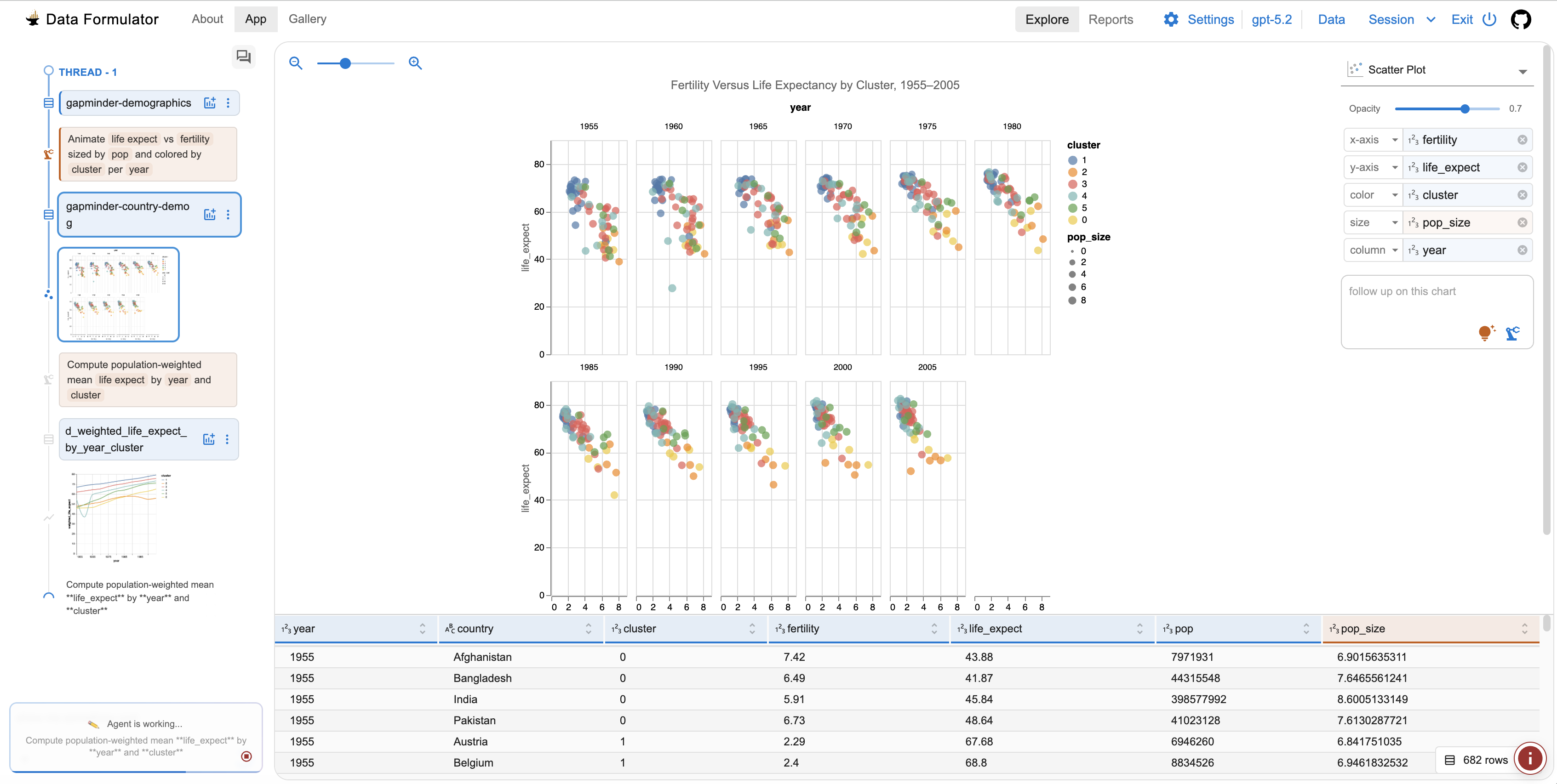

Data Formulator

active · 82 · Best AI-powered data visualization tool: Microsoft Research quality, fully open source. Fills a niche no other tool covers: conversational, iterative chart building from raw data.

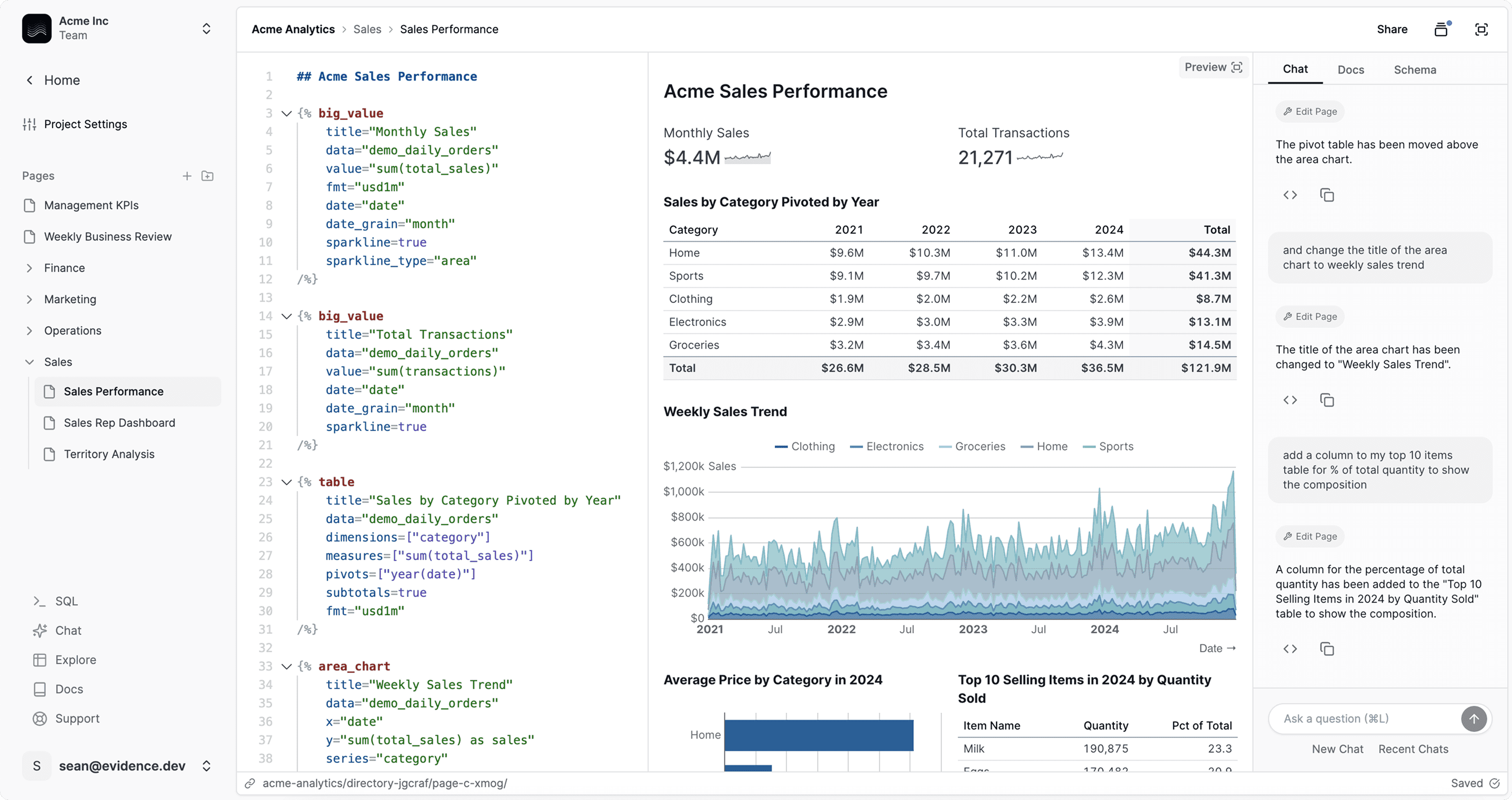

Evidence

active · 78 · Best BI-as-code platform for SQL-first analysts. If your team thinks in SQL and wants version-controlled, code-authored reports, Evidence beats every other tool. The only tool in the category that doesn't require a general-purpose programming language.

Observable Framework

active · 84 · Best for developer-built data dashboards with D3-quality visualization. If you need custom, interactive, D3-quality visualizations in a static site, Observable Framework is unmatched.

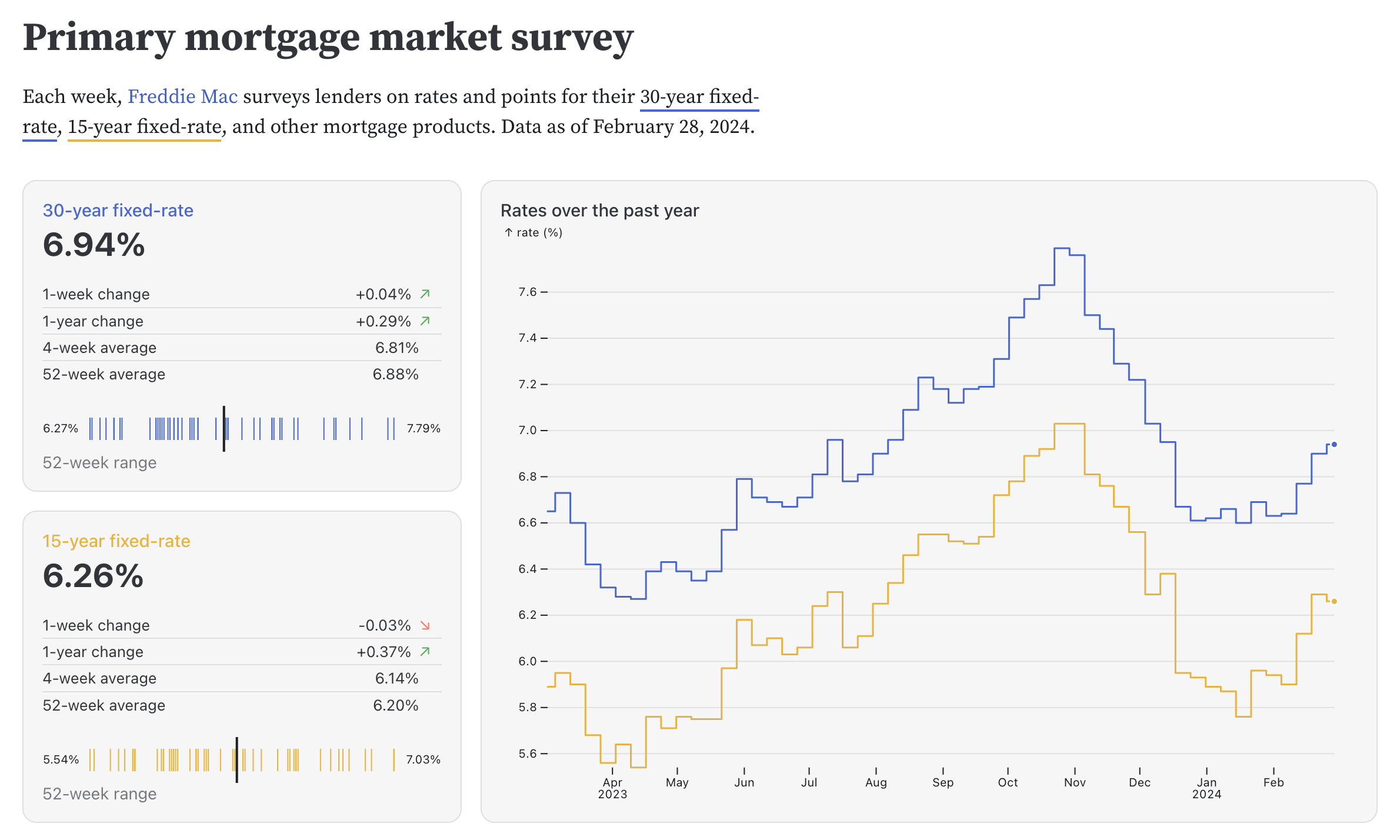

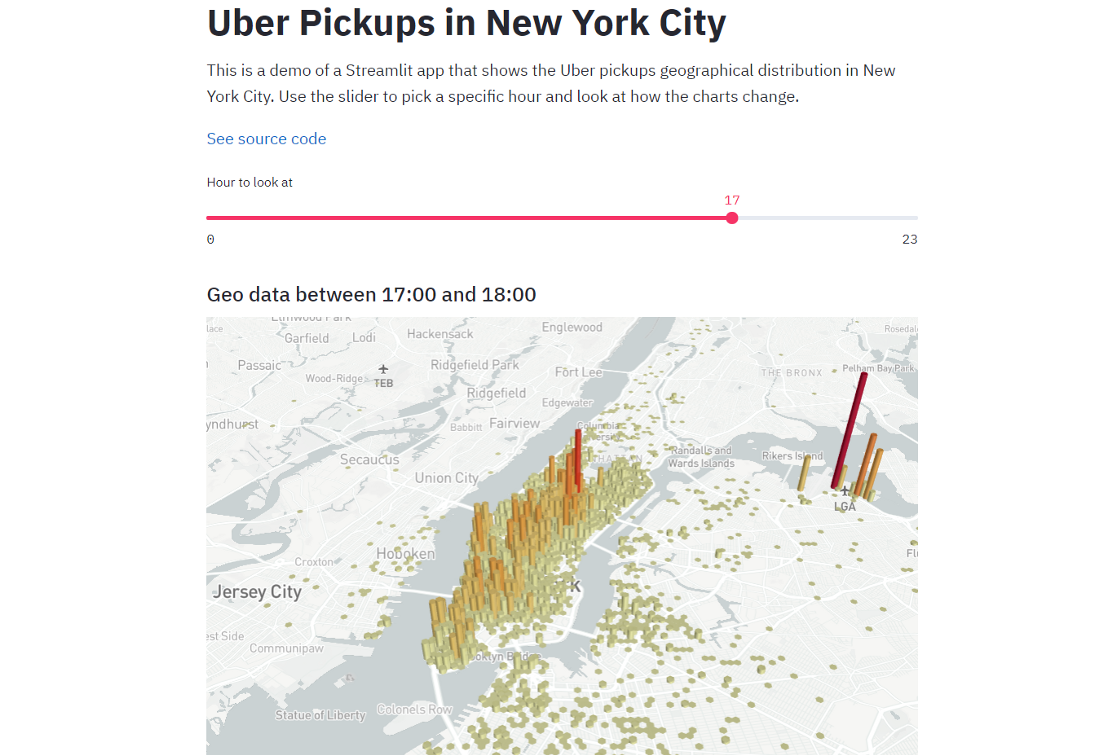

Streamlit

active · 90 · Ecosystem giant: essential infrastructure for deploying data apps, but not an analysis engine. Reruns entire script on every interaction. Include as foundational infrastructure, not a direct AI analysis tool.

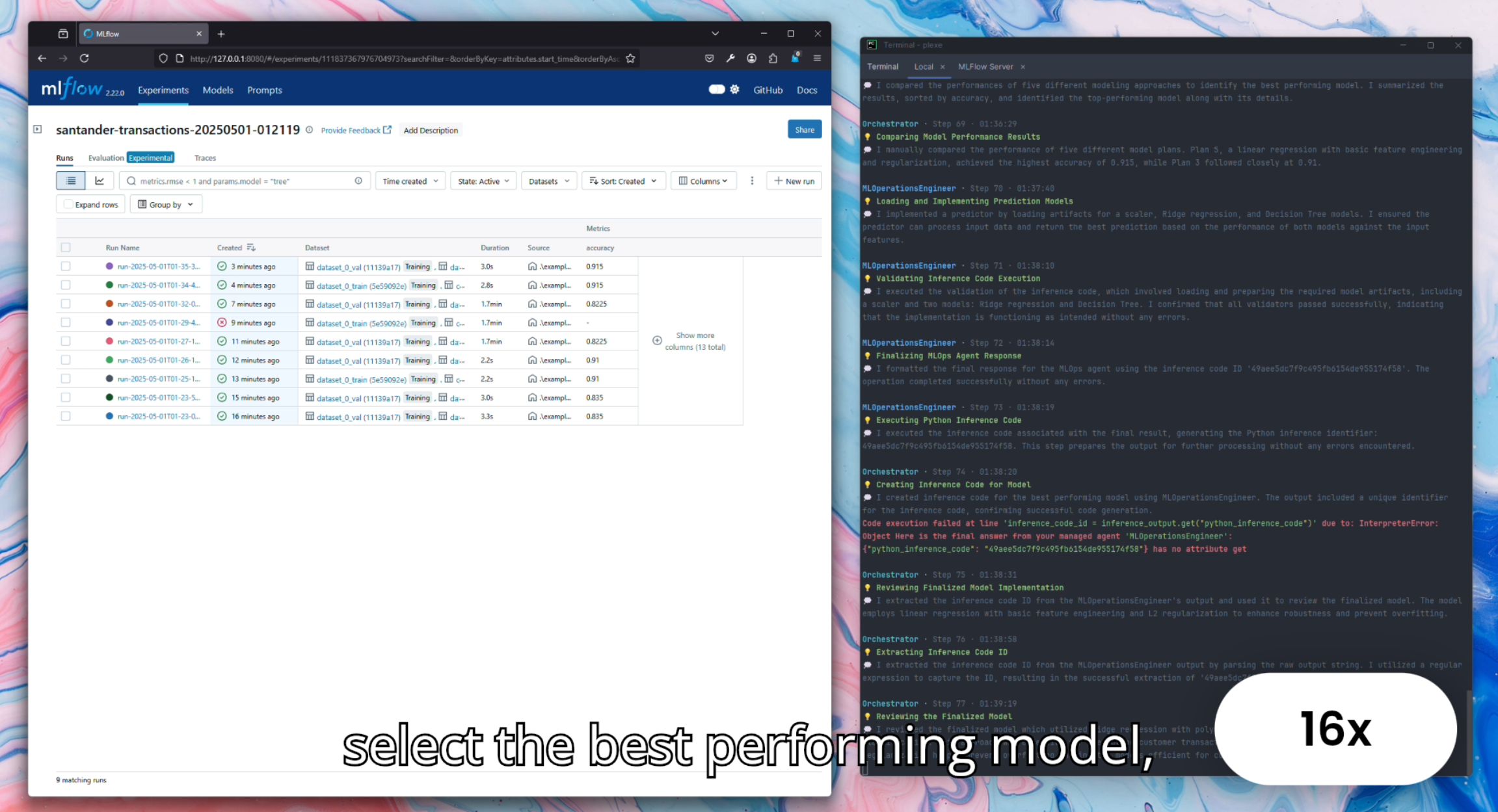

Plexe

active · 75 · Most promising prompt-to-ML-model tool: early but uniquely differentiated. Natural language → trained ML model. Needs more real-world validation before it can rank higher.

PandasAI

stale · 72 · High name recognition but stale and problematic. 5 months without commits, documented hallucination risk, custom license. Good for quick throwaway exploration only, not suitable for anything where correctness matters.

Fumadocs

active · 90 · The breakout docs framework of 2026. 11.2K stars with only 5 open issues, exceptional maintenance. 309K npm/week surpasses Starlight. 3x YoY growth is the fastest in the category. For Next.js teams, Fumadocs is the clear pick over Nextra.

Astro Starlight

active · 91 · The developer experience leader. Zero client-side JS by default, Go-compiled dev server starts in half the time of Docusaurus. 8.1K stars, 200K npm/week (doubled YoY), 283 contributors. Real migration trend from Docusaurus (Distr, W3C). Critical gap: no built-in versioned docs.

Docusaurus

active · 92 · The incumbent with the biggest numbers but coasting. 64.2K stars, 765K npm/week, 457 contributors. v4.0 roadmap at 20% with no target date. Developers actively migrating to Starlight/Fumadocs for DX. Still the right choice when versioned docs and plugin ecosystem are requirements.

Fern

active · 89 · The most strategically significant move in API docs in 2026. Only tool generating both docs AND production SDKs from a single spec. Postman acquisition (Jan 2026) gives access to 500K+ companies. 3.6K stars, 89K npm/week. Daily pushes post-acquisition confirm continued development.

Mintlify

active · 64 · Market leader for developer-facing documentation SaaS. Helicone acquisition (Mar 2026) signals pivot to AI-agent knowledge infrastructure. 40%+ of doc traffic from AI agents (CEO claim, directionally confirmed by Context7 data). Security incident (GitHub token leak, Dec 2025) is a real trust concern. Premium pricing ($300/mo Pro).

Redocly / Redoc

active · 78 · The enterprise governance pick for API documentation. 25.6K stars, 1.15M npm/week, the most downloaded API docs renderer. Only platform supporting SOAP. Strongest OpenAPI linting. Development velocity has slowed (last push 6 weeks ago, last release Sep 2025). Fern+Postman is a growing competitive threat.

MkDocs Material

watch · 92 · Most-downloaded docs framework globally by raw numbers (4.2M PyPI/week). But now in maintenance mode. MkDocs upstream has been unmaintained since Aug 2024, a supply chain risk. Zensical successor promises 4-5x faster builds but isn't feature-complete. New projects should choose Starlight or Fumadocs instead.

Swagger UI

active · 95 · The universal baseline for API documentation. 28.7K stars, 603K npm/week combined. Actively maintained with weekly releases. Every API docs tool benchmarks against Swagger UI. Reliable and free but hasn't innovated in years. If you need more than basic OpenAPI rendering, look at Fern or Redocly.

Promptless

active · 38 · The only tool focused on keeping docs in sync with code changes automatically. YC W25 backed. 107pts Launch HN. Named customers (Vellum, Vitess, Amplitude) but all traction is self-reported. Premium pricing ($500-1,000/mo). Team of 5. Early-stage risk is high but positioning is unique.

GitBook

active · 35 · The 'easy button' for teams with non-developer contributors. WYSIWYG editor lowers the barrier. AI Agent connects docs to support channels. But closed-source SaaS with expensive pricing ($65-249/mo + $12/user/mo) competing against free OSS tools with better developer experience.

DocsGPT

active · 82 · Best open-source 'chat with your docs' solution. 17.8K stars, 256pts Show HN (strongest HN reception in documentation category). Self-hostable, multi-model. Complements docs generators (Docusaurus, Mintlify) rather than competing. Release cadence slowing (last release Dec 2025).

Nextra

active · 81 · Still works, still maintained, but Fumadocs has overtaken it on every momentum metric (309K vs 113K npm/week, 3x YoY growth vs modest). No reason to choose Nextra over Fumadocs for a new Next.js project. Existing Nextra users don't need to rush to migrate, but the writing is on the wall.

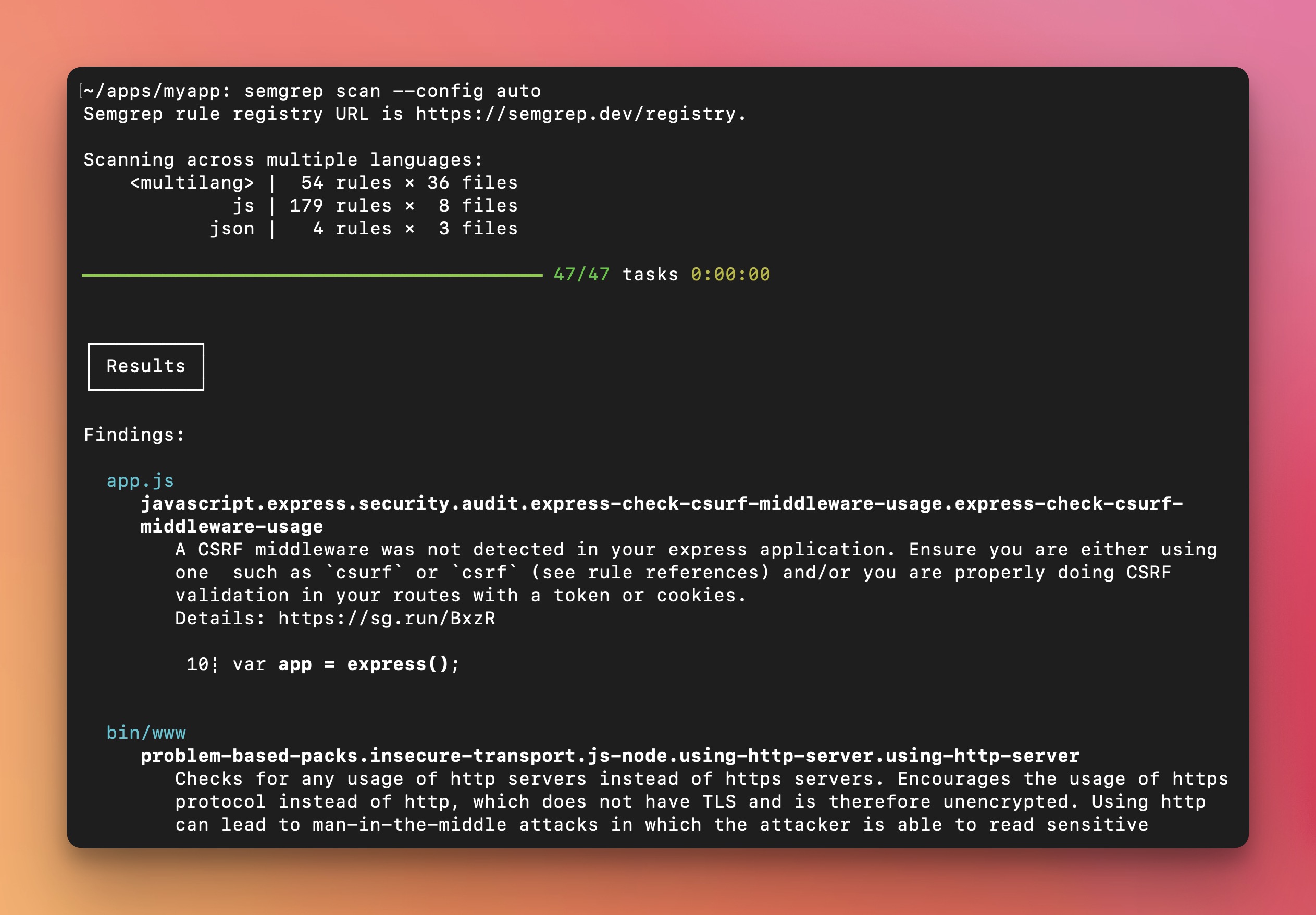

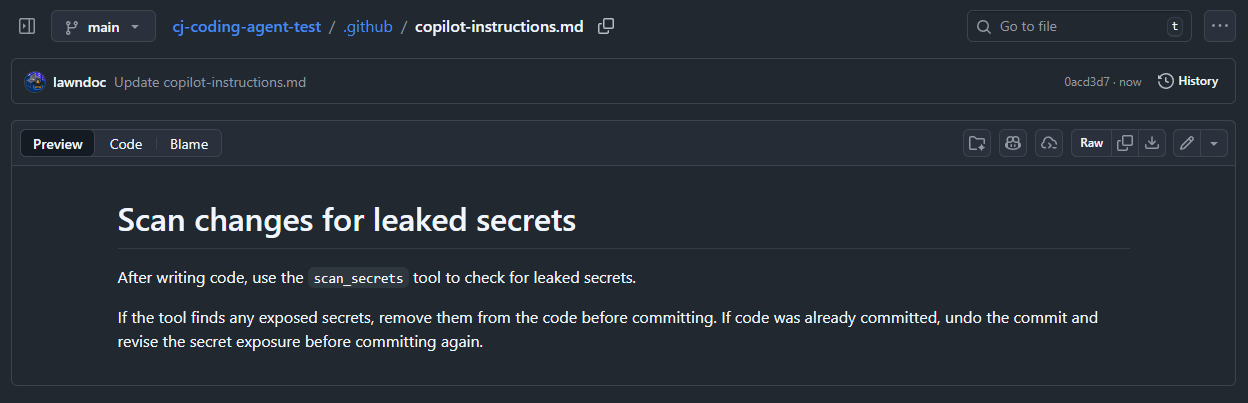

Semgrep MCP

active · Official · 10 · #1 SAST skill. Best-in-class OSS SAST with official MCP server. 46% vuln detection in DryRun benchmark (vs SonarQube 19%). AST-based rules are transparent and auditable. Rising mindshare (1.6% → 2.6%). LinkedIn rebuilt SAST pipeline around it. The default recommendation for code scanning via AI agents.

DryRun Security (Code Insights MCP)

active · Official · 35 · Highest reported SAST detection rate (88%) but self-reported benchmark. AI-native with natural language code policies. Official MCP server. $8.7M raised. The dark horse: if an independent third party confirms the 88% detection rate, moves to #1 above Semgrep.

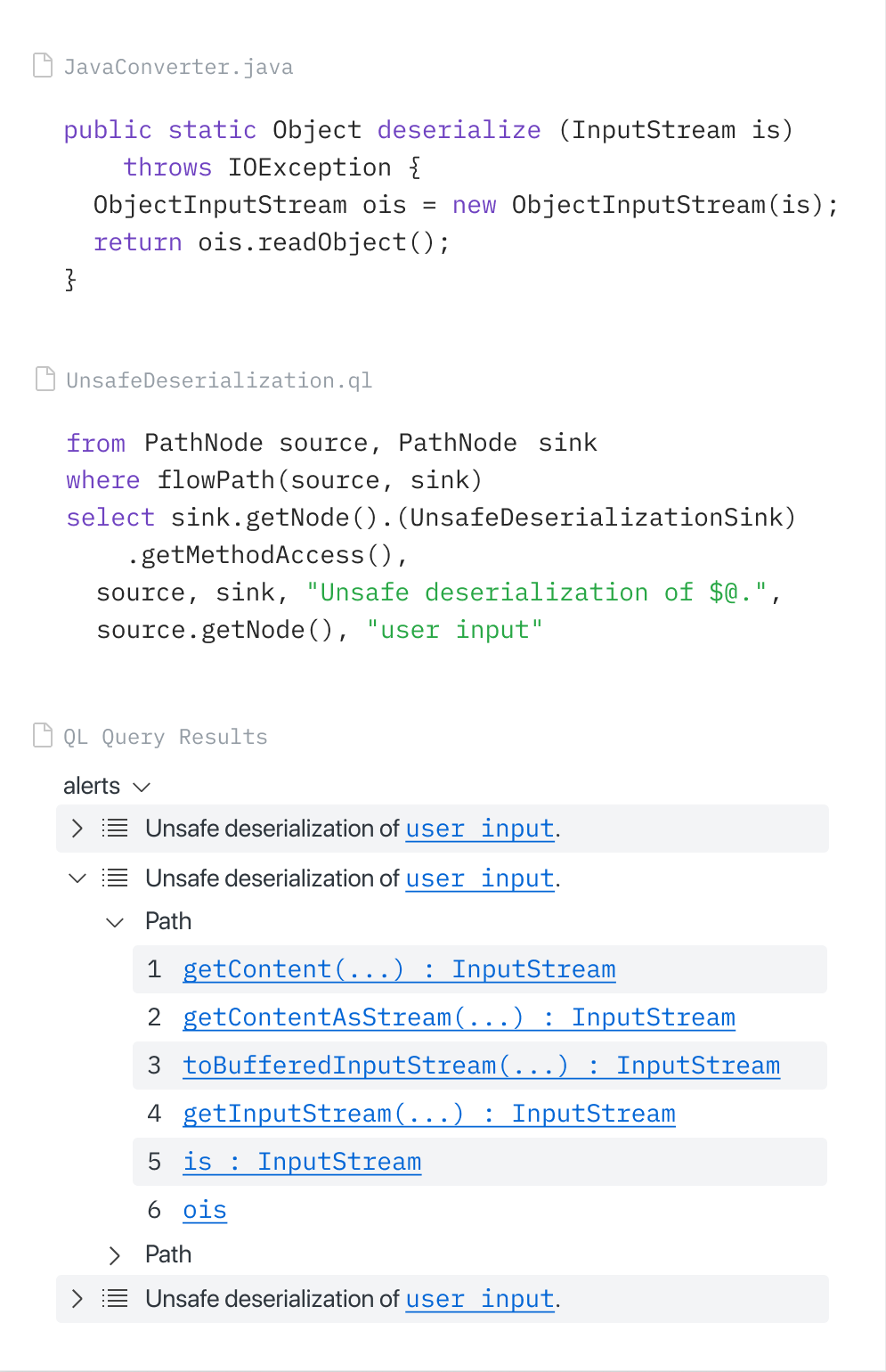

CodeQL (via GitHub MCP Server)

active · Official · 88 · #3 SAST. Best for GitHub-native shops: zero extra setup via the official GitHub MCP Server. Copilot Autofix auto-generates fixes from CodeQL alerts. GitHub Security Lab Taskflow Agent found ~30 real CVEs. If you're all-in on GitHub, this jumps to #1.

Snyk Code (via Snyk MCP Server)

active · Official · 35 · #4 SAST. Best commercial all-in-one security platform (SAST + SCA + IaC + containers). DeepCode AI engine with Agent Fix auto-remediation. Strongest for teams already on Snyk: adding Agent Scan is the only incremental tool needed.

Datadog Code Security MCP

active · Official · 35 · Best for Datadog-native shops. SAST + secrets + SCA + IaC within your existing observability stack. Don't add 4 separate tools if you already have Datadog. GA March 2026.

GitGuardian MCP (ggmcp)

active · Official · 60 · #1 secret detection. Purpose-built secret scanning MCP with 500+ detectors and hard merge gates for AI-generated code. State of Secrets Sprawl 2026 report (81% surge in AI-service key leaks, 24,008 secrets in MCP configs) is the definitive source on the problem. The default recommendation for secret scanning in agent workflows.

TruffleHog

active · 88 · Best for credential verification in CI/CD pipelines. 18K+ stars, 800+ secret types, and unique active credential verification (tells you which leaks are still dangerous). Scans beyond git (S3, Docker, Slack). No official MCP server is the gap; use in CI/CD rather than agent workflows.

Gitleaks

active · 88 · Best pre-commit secret scanner. 24.4K stars, the most-starred in the category. 150+ patterns, fastest scanner. The community default. No official MCP server, so use as a pre-commit hook, not agent integration.

Snyk Agent Scan

active · Official · 40 · #1 agent/MCP security scanner. Scans AI agents, MCP servers, and skills for prompt injection, tool poisoning, and toxic flows. Auto-discovers Claude, Cursor, Gemini CLI, Windsurf configs. Skill Inspector (Feb 2026) + Vercel supply chain partnership. Enterprise trust.

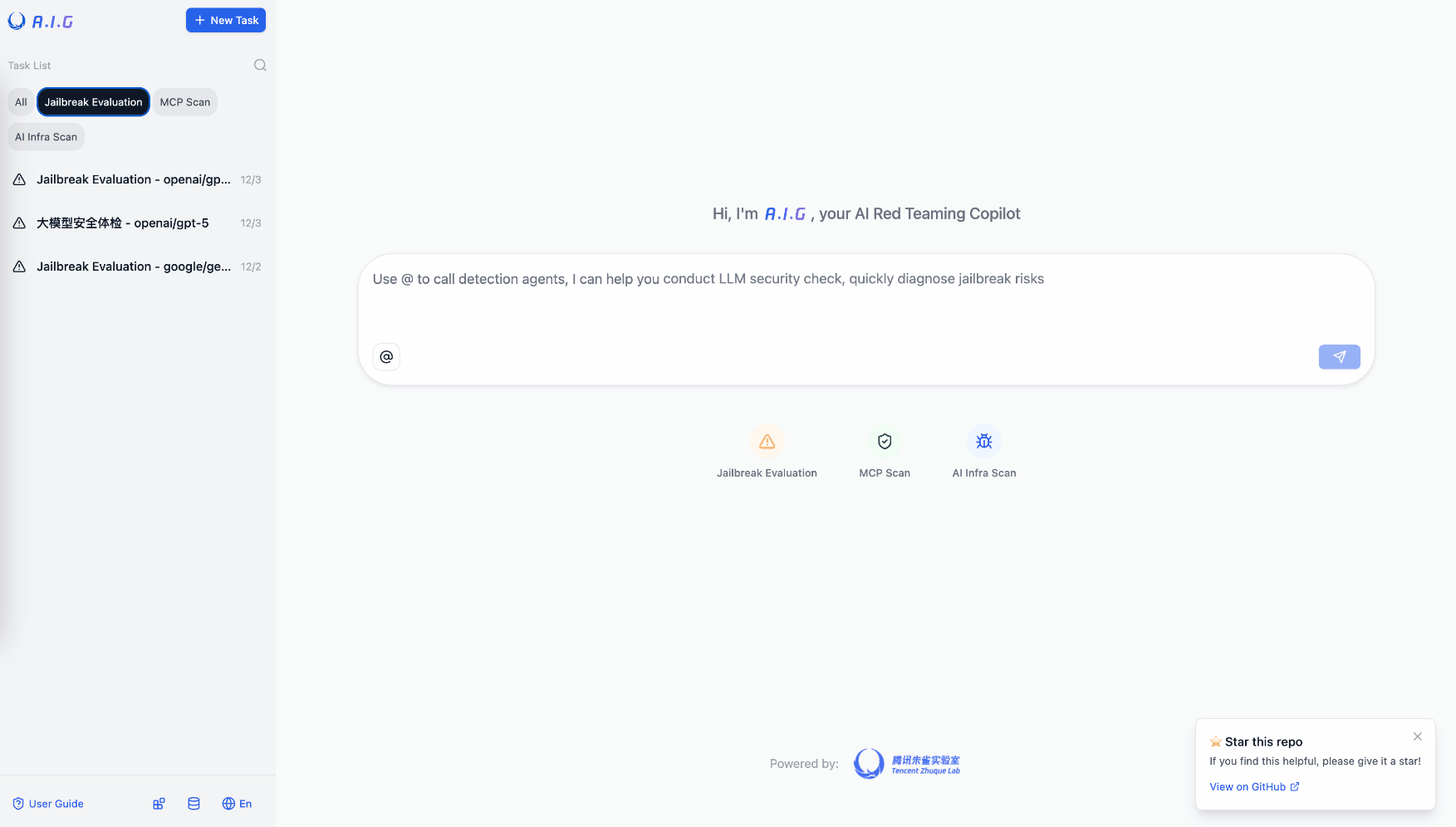

Tencent AI-Infra-Guard

active · Official · 81 · #2 agent/MCP security scanner. Most comprehensive OSS red teaming tool: ClawScan, Agent Scan, Skills Scan, MCP scan, jailbreak eval. 3,264 stars (highest in agent security). 43 AI framework components, 589 CVEs cataloged. Best for OSS-first teams wanting breadth without commercial dependencies.

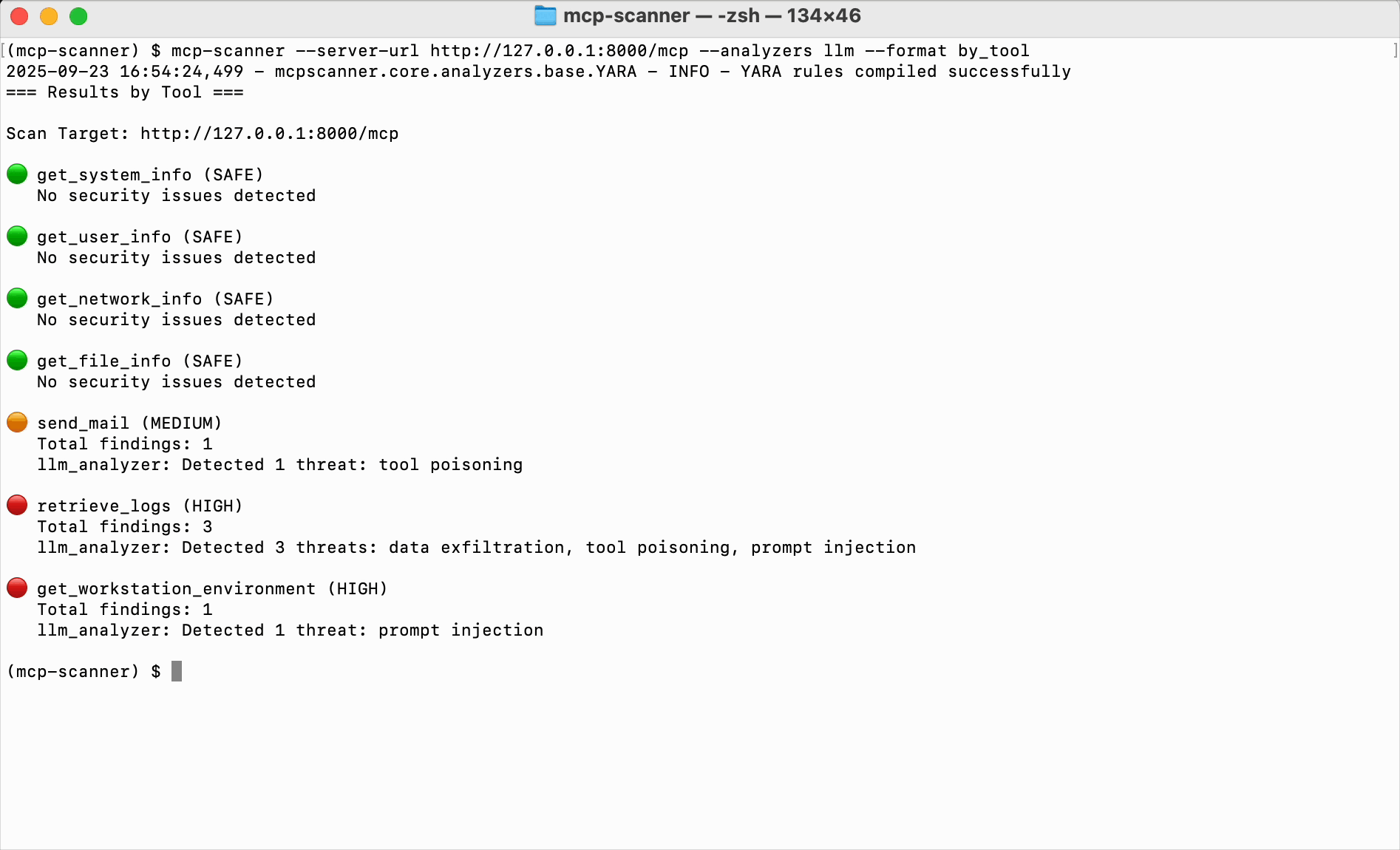

Cisco MCP Scanner

active · Official · 64 · #3 agent/MCP security scanner. Best behavioral analysis: 3 scanning engines (Yara, LLM-as-judge, Cisco AI Defense) detect semantic threats that pattern matching misses. 852 stars. Enterprise-backed (Cisco) and open source.

MCP-Shield

active · 38 · First-mover MCP security scanner. 548 stars, 134 pts HN. Simpler than Snyk Agent Scan or Tencent AI-Infra-Guard but battle-tested. Good for teams wanting a lightweight, proven scanner without enterprise overhead.

HexStrike AI

active · 80 · #1 offensive security skill. 7,561 stars, the largest security MCP repo. 150+ cybersecurity tools. For authorized pentesting, CTF, and bug bounty only. The clear leader in agent-assisted offensive security.

MCP for Security (cyproxio)

active · 53 · #2 offensive security. Curated collection of pentesting MCP servers (SQLMap, FFUF, NMAP, Masscan). 569 stars. Better organized than HexStrike but narrower scope. For authorized pentesting only.

FuzzingLabs Security Hub

active · 59 · #3 offensive security. MCP servers for Nmap, Ghidra, Nuclei, SQLMap, Hashcat. 481 stars. Differentiates on reverse engineering / binary analysis. Best for security researchers working with binaries and protocols.