Outperformed Perplexity, OpenAI, OpenDeepSearch, and HuggingFace. Strongest third-party validation for any open-source research tool.

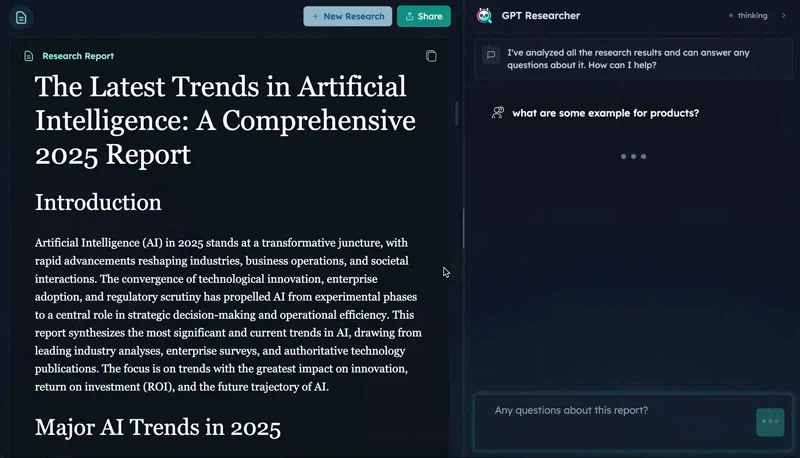

GPT Researcher

Active. Open-source autonomous deep research agent. CMU DeepResearchGym #1 on citation quality, report quality, and info coverage. 25.8K stars, 15.9K weekly PyPI downloads. Apache 2.0.

Where it wins

CMU DeepResearchGym #1 — beat Perplexity, OpenAI on citation quality, report quality, info coverage

25,845 GitHub stars, 3,435 forks — most-starred dedicated open-source deep research agent

15,876 weekly PyPI downloads — real adoption signal

MCP server available for integration with Claude, Cursor, etc.

Apache 2.0 license — commercial use allowed

Multi-agent architecture: planner + crawler agents + publisher

Where to be skeptical

Requires an LLM API key (unlike Tongyi, it is not self-contained)

No dedicated HN thread with high engagement

CMU benchmark is from May 2025 — needs fresh validation

Orchestrator, not a model — quality depends on underlying LLM

Editorial verdict

#4 in research. Open-source leader with the strongest third-party validation — CMU benchmark winner beating Perplexity and OpenAI on citation and report quality. Real PyPI adoption (15.9K/wk) and MCP integration make it the pick for self-hosted research pipelines.


Related

Tongyi DeepResearch

82 · First fully open-source deep research agent matching closed-source leaders on benchmarks. HLE 32.9 (exceeds OpenAI's 26.6), 30.5B params / 3.3B active (MoE), runs locally. 18.5K stars. Apache 2.0.

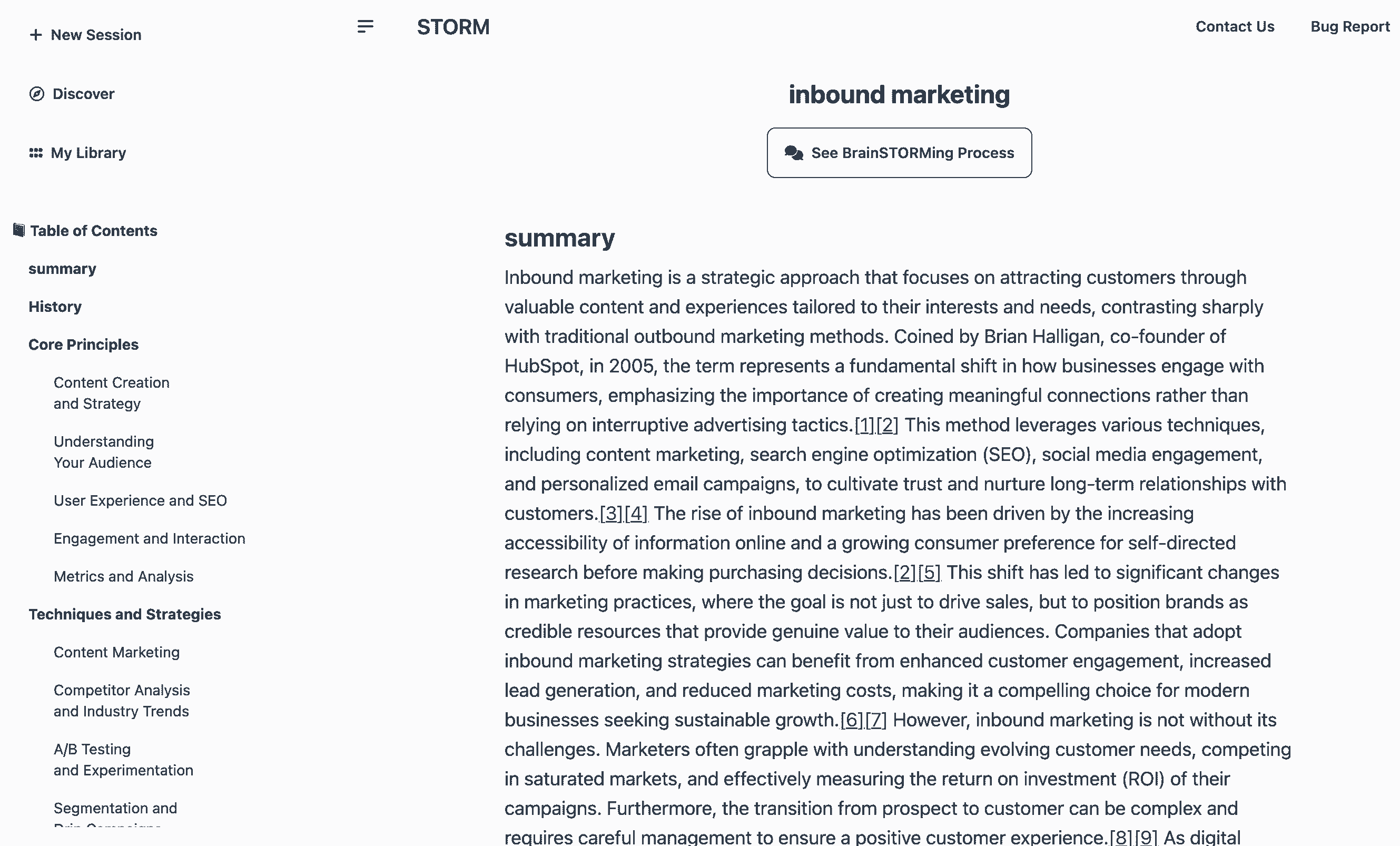

STORM (Stanford)

66 · Stanford's LLM-powered knowledge curation system. Generates Wikipedia-style articles with citations in ~3 min. 28K stars, 84.8% citation recall / 85.2% precision (peer-reviewed). MIT license.

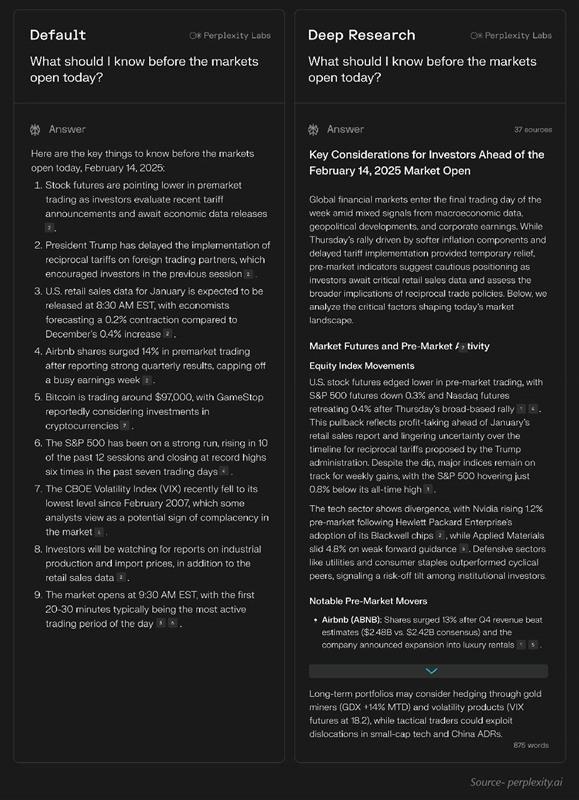

Perplexity Deep Research

43 · Research-first search engine with inline citations. Fastest deep research (15-30s), 93.9% SimpleQA accuracy, 50+ sources per report. $20/mo Pro.

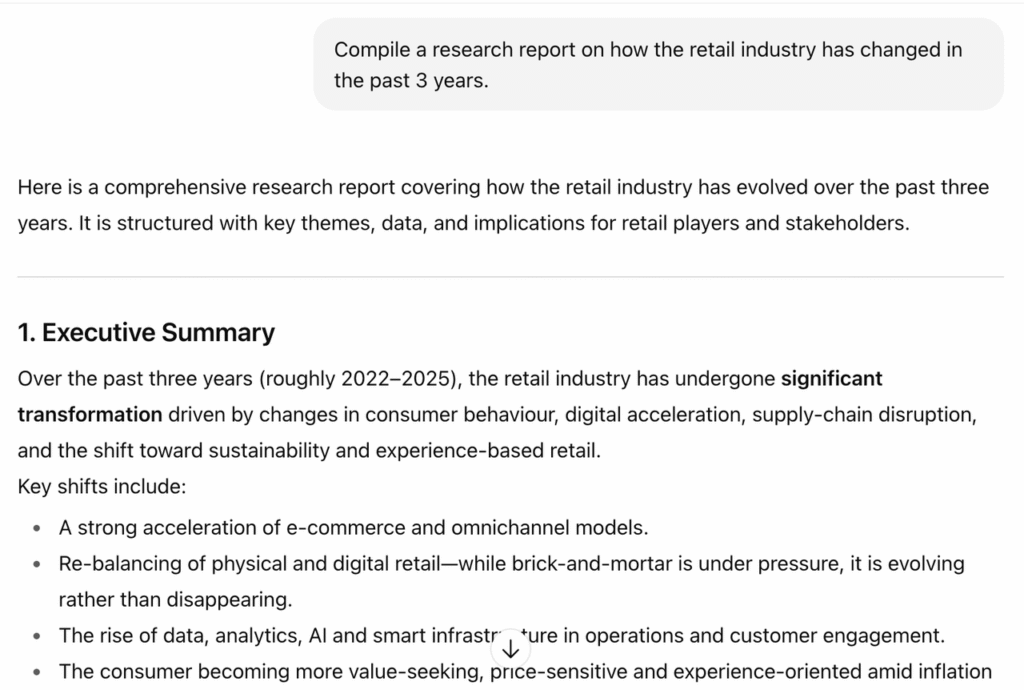

OpenAI Deep Research

42 · Agentic research mode powered by o3/o4-mini. 26.6% HLE (below Tongyi DeepResearch's 32.9), 72.57% GAIA, MCP support (Feb 2026). Slower (3-15 min) but deepest reasoning.

Public evidence

Most-starred dedicated open-source deep research agent. 174 open issues indicate active development.

Real adoption signal: 15,876 weekly PyPI downloads suggest people are actually installing it and using it in practice.

MCP integration makes it pluggable into Claude, Cursor, and other agent workflows.

Raw GitHub source

GitHub README peek

Constrained peek so you can sanity-check the source material without leaving the site.


🔎 GPT Researcher

GPT Researcher is the first open deep research agent designed for both web and local research on any given task.

The agent produces detailed, factual, and unbiased research reports with citations. GPT Researcher provides a full suite of customization options to create tailor made and domain specific research agents. Inspired by the recent Plan-and-Solve and RAG papers, GPT Researcher addresses misinformation, speed, determinism, and reliability by offering stable performance and increased speed through parallelized agent work.

Our mission is to empower individuals and organizations with accurate, unbiased, and factual information through AI.

Why GPT Researcher?

- Objective conclusions for manual research can take weeks, requiring vast resources and time.

- LLMs trained on outdated information can hallucinate, becoming irrelevant for current research tasks.

- Current LLMs have token limitations, insufficient for generating long research reports.

- Limited web sources in existing services lead to misinformation and shallow results.

- Selective web sources can introduce bias into research tasks.

Demo

<a href="https://www.youtube.com/watch?v=f60rlc_QCxE" target="_blank" rel="noopener"> <img src="https://github.com/user-attachments/assets/ac2ec55f-b487-4b3f-ae6f-b8743ad296e4" alt="Demo video" width="800" /> </a>

Install as Claude Skill

Extend Claude's deep research capabilities by installing GPT Researcher as a Claude Skill:

npx skills add assafelovic/gpt-researcher

Once installed, Claude can leverage GPT Researcher's deep research capabilities directly within your conversations.

Architecture

The core idea is to utilize 'planner' and 'execution' agents. The planner generates research questions, while the execution agents gather relevant information. The publisher then aggregates all findings into a comprehensive report.

<div align="center"> <img align="center" height="600" src="https://github.com/assafelovic/gpt-researcher/assets/13554167/4ac896fd-63ab-4b77-9688-ff62aafcc527"> </div>

Steps:

- Create a task-specific agent based on a research query.

- Generate questions that collectively form an objective opinion on the task.

- Use a crawler agent for gathering information for each question.

- Summarize and source-track each resource.

- Filter and aggregate summaries into a final research report.
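The planner, execution, and publisher flow in the steps above can be sketched in a few lines. This is a toy illustration under stated assumptions, not GPT Researcher's actual code: `plan`, `execute`, `publish`, `research`, and the `Finding` type are hypothetical names, and the LLM and crawler calls are replaced by stubs that return placeholder text and a placeholder URL.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Finding:
    question: str
    summary: str
    source: str  # tracked so the report can cite it


def plan(query: str) -> list[str]:
    """Planner stub: turn the query into sub-questions (an LLM call in a real system)."""
    return [f"{query}: background", f"{query}: recent developments"]


async def execute(question: str) -> Finding:
    """Execution-agent stub: gather and summarize one resource for a question."""
    await asyncio.sleep(0)  # stands in for network-bound crawling
    return Finding(question, f"summary of '{question}'", "https://example.com/source")


def publish(query: str, findings: list[Finding]) -> str:
    """Publisher: drop empty summaries, then aggregate into a cited report."""
    kept = [f for f in findings if f.summary]
    lines = [f"Report: {query}"]
    for i, f in enumerate(kept, start=1):
        lines.append(f"- {f.summary} [{i}]")
    lines.append("Sources:")
    for i, f in enumerate(kept, start=1):
        lines.append(f"[{i}] {f.source}")
    return "\n".join(lines)


async def research(query: str) -> str:
    questions = plan(query)
    # One execution agent per question, run concurrently.
    findings = await asyncio.gather(*(execute(q) for q in questions))
    return publish(query, list(findings))


if __name__ == "__main__":
    print(asyncio.run(research("solid-state batteries")))
```

Running the execution agents through `asyncio.gather` mirrors the "parallelized agent work" the README describes; the sequential planner and publisher bracket that concurrent middle stage.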

Tutorials

- How it Works

- How to Install

- Live Demo

Features

- 📝 Generate detailed research reports using web and local documents.

- 🖼️ Smart image scraping and filtering for reports.

- 🍌 AI-generated inline images using Google Gemini (Nano Banana) for visual illustrations.

- 📜 Generate detailed reports exceeding 2,000 words.

- 🌐 Aggregate over 20 sources for objective conclusions.

- 🖥️ Frontend available in lightweight (HTML/CSS/JS) and production-ready (NextJS + Tailwind) versions.

- 🔍 JavaScript-enabled web scraping.

- 📂 Maintains memory and context throughout research.

- 📄 Export reports to PDF, Word, and other formats.

📖 Documentation

See the Documentation for:

- Installation and setup guides

- Configuration and customization options

- How-To examples

- Full API references

⚙️ Getting Started

Installation

- Install Python 3.11 or later. Guide.

- Clone the project and navigate to the directory:

    git clone https://github.com/assafelovic/gpt-researcher.git
    cd gpt-researcher

- Set up API keys by exporting them or storing them in a .env file.

    export OPENAI_API_KEY={Your OpenAI API Key here}
    export TAVILY_API_KEY={Your Tavily API Key here}

  (Optional) For enhanced tracing and observability, you can also set:

    # export LANGCHAIN_TRACING_V2=true
    # export LANGCHAIN_API_KEY={Your LangChain API Key here}

  For custom OpenAI-compatible APIs (e.g., local models, other providers), you can also set:

    export OPENAI_BASE_URL={Your custom API base URL here}

- Install dependencies and start the server:

    pip install -r requirements.txt
    python -m uvicorn main:app --reload

Visit http://localhost:8000 to start.

For other setups (e.g., Poetry or virtual environments), check the Getting Started page.
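Before starting the server, it can help to confirm that the two keys named in the setup steps are actually set. The following is a minimal pre-flight sketch using only the standard library. It is not part of GPT Researcher, and `parse_env_text` and `missing_keys` are hypothetical helper names; how the project itself loads a .env file is not covered here.

```python
import os

# The two keys named in the installation steps.
REQUIRED_KEYS = ("OPENAI_API_KEY", "TAVILY_API_KEY")


def parse_env_text(text: str) -> dict[str, str]:
    """Parse KEY=VALUE lines; skip blanks and '#' comments; tolerate 'export '."""
    values: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        if line.startswith("export "):
            line = line[len("export "):]
        key, sep, value = line.partition("=")
        if sep:
            values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def missing_keys(env: dict[str, str]) -> list[str]:
    """Return the required keys that are absent or empty in env."""
    return [k for k in REQUIRED_KEYS if not env.get(k)]


if __name__ == "__main__":
    env = dict(os.environ)
    if os.path.exists(".env"):
        with open(".env", encoding="utf-8") as fh:
            # Exported variables take precedence over .env entries.
            env = {**parse_env_text(fh.read()), **env}
    print("missing:", missing_keys(env) or "none")
```

Letting exported variables override .env entries is a design choice made for this sketch, not documented project behavior.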

Run as PIP package

pip install gpt-researcher

Example Usage:

...

from gpt_researcher import GPTResearcher

query = "why is Nvidia stock going up?"

researcher = GPTResearcher(query=query)

# Conduct research on the given query