More stars than GPT Researcher. Stanford pedigree adds credibility.

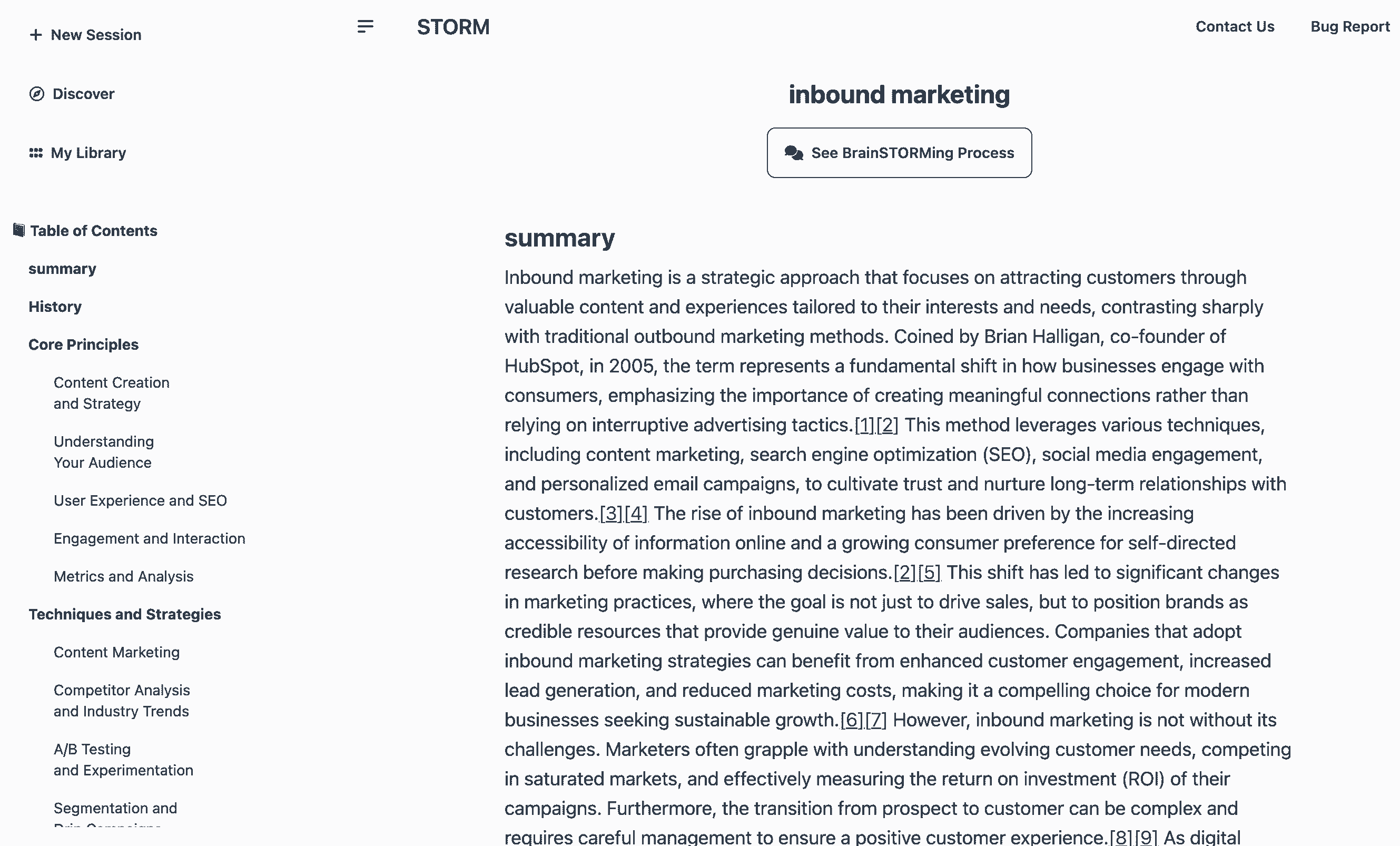

STORM (Stanford)

Status: active. Stanford's LLM-powered knowledge curation system. Generates Wikipedia-style articles with citations in about 3 minutes. 28K stars; 84.8% citation recall and 85.2% citation precision (peer-reviewed). MIT license.

Where it wins

28,016 stars, 2,556 forks — more stars than GPT Researcher

84.83% citation recall, 85.18% citation precision, peer-reviewed (NAACL 2024; preprint on arXiv)

Wikipedia-style article generation — unique approach

Co-STORM collaborative research mode (EMNLP 2024)

Stanford OVAL pedigree — strong academic credibility

MIT license

Live demo at storm.genie.stanford.edu
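The citation recall and precision figures in this list are citation-quality metrics. The sketch below shows one simplified way such metrics can be computed once a judge (a human or an entailment model) has labeled each generated sentence. The `Sentence` schema and the exact definitions are assumptions made for illustration; STORM's actual evaluation procedure may differ.

```python
from dataclasses import dataclass, field


@dataclass
class Sentence:
    """A generated sentence plus a judge's verdicts on its citations (hypothetical schema)."""
    text: str
    citations: list[str]
    supported: bool  # True if the cited passages, taken together, back the sentence
    irrelevant: set[str] = field(default_factory=set)  # citations judged irrelevant to it


def citation_recall(sentences: list[Sentence]) -> float:
    """Share of sentences that carry at least one citation and are supported by their citations."""
    if not sentences:
        return 0.0
    hits = sum(1 for s in sentences if s.citations and s.supported)
    return hits / len(sentences)


def citation_precision(sentences: list[Sentence]) -> float:
    """Share of all citations that the judge did not mark as irrelevant."""
    total = sum(len(s.citations) for s in sentences)
    if total == 0:
        return 0.0
    relevant = sum(1 for s in sentences for c in s.citations if c not in s.irrelevant)
    return relevant / total


article = [
    Sentence("STORM was presented at NAACL 2024.", ["[1]"], supported=True),
    Sentence("Co-STORM was accepted to EMNLP 2024.", ["[2]", "[3]"], supported=True, irrelevant={"[3]"}),
    Sentence("An uncited claim.", [], supported=False),
    Sentence("A claim its source only partly backs.", ["[4]"], supported=False),
]
print(citation_recall(article))     # 0.5
print(citation_precision(article))  # 0.75
```

The two metrics pull in different directions: recall penalizes unsupported or uncited sentences, while precision penalizes padding sentences with citations that do not help.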

Where to be skeptical

No MCP integration — harder to plug into agent workflows

Article-oriented output — less flexible than report-style tools

Academic origin — less polished UX than commercial tools

No hosted API; programmatic access is limited to the knowledge-storm Python package from the repo

Editorial verdict

#6 in research. Best for structured knowledge curation — Wikipedia-style article generation with peer-reviewed citation quality (84.8% recall, 85.2% precision). Stanford pedigree, Co-STORM collaborative mode (EMNLP 2024). Less practical for agentic workflows than GPT Researcher.

Related

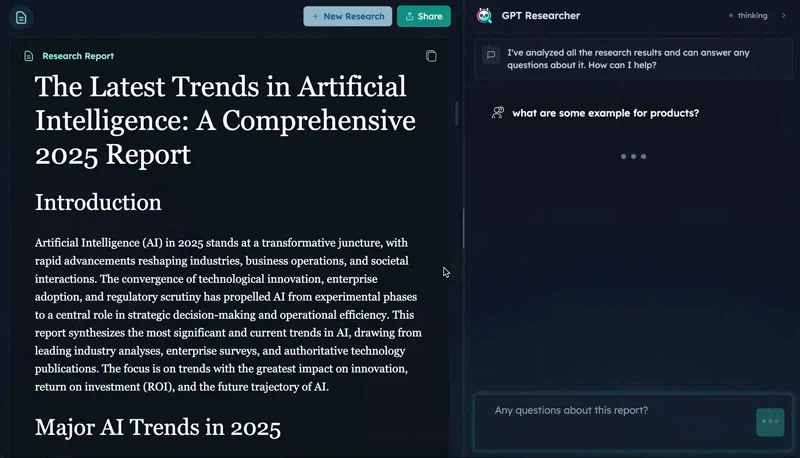

GPT Researcher

Score: 89. Open-source autonomous deep research agent. CMU DeepResearchGym #1 on citation quality, report quality, and info coverage. 25.8K stars, 15.9K weekly PyPI downloads. Apache 2.0.

Tongyi DeepResearch

Score: 82. First fully open-source deep research agent matching closed-source leaders on benchmarks. HLE 32.9 (exceeds OpenAI's 26.6), 30.5B params / 3.3B active (MoE), runs locally. 18.5K stars. Apache 2.0.

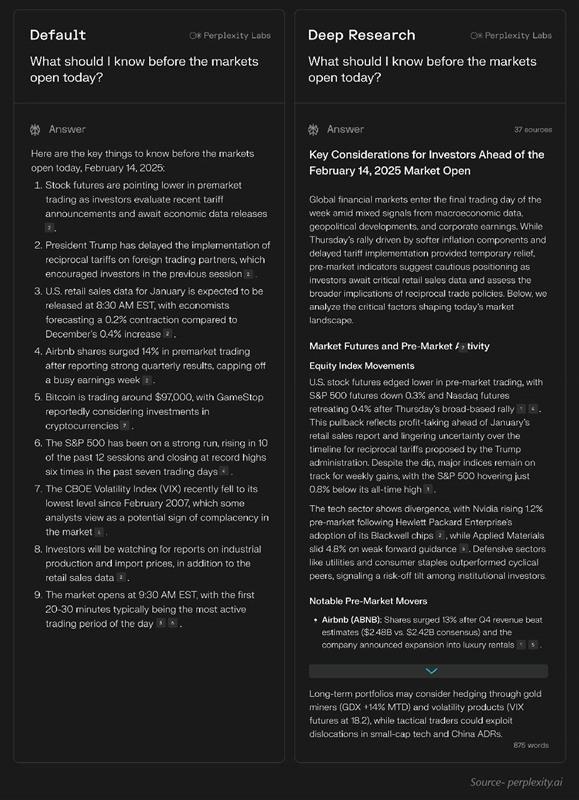

Perplexity Deep Research

Score: 43. Research-first search engine with inline citations. Fastest deep research (15-30 s), 93.9% SimpleQA accuracy, 50+ sources per report. $20/mo Pro.

OpenAI Deep Research

Score: 42. Agentic research mode powered by o3/o4-mini. 26.6% on HLE, 72.57% on GAIA, MCP support (Feb 2026). Slower (3-15 min) but with the deepest reasoning.

Public evidence

Strong community interest for an academic research tool.

Published in a peer-reviewed venue with specific metrics. High citation precision means claims are backed by the cited sources.

Wikipedia editors validated quality — real expert evaluation.

Academic conference acceptance validates the collaborative research approach.

Raw GitHub source

GitHub README peek

Constrained peek so you can sanity-check the source material without leaving the site.

STORM: Synthesis of Topic Outlines through Retrieval and Multi-perspective Question Asking

[Research preview](http://storm.genie.stanford.edu) | [STORM Paper](https://arxiv.org/abs/2402.14207) | [Co-STORM Paper](https://www.arxiv.org/abs/2408.15232) | [Website](https://storm-project.stanford.edu/)

Latest News 🔥

- [2025/01] We added litellm integration for language models and embedding models in knowledge-storm v1.1.0.

- [2024/09] The Co-STORM codebase is now released and integrated into the knowledge-storm Python package v1.0.0. Run pip install knowledge-storm --upgrade to check it out.

- [2024/09] We introduced collaborative STORM (Co-STORM) to support human-AI collaborative knowledge curation! The Co-STORM paper has been accepted to the EMNLP 2024 main conference.

- [2024/07] You can now install our package with pip install knowledge-storm!

- [2024/07] We added VectorRM to support grounding on user-provided documents, complementing the existing support for search engines (YouRM, BingSearch). (Check out #58.)

- [2024/07] We released demo light for developers, a minimal user interface built with the Streamlit framework in Python, handy for local development and demo hosting (check out #54).

- [2024/06] We will present STORM at NAACL 2024! Find us at Poster Session 2 on June 17 or check our presentation material.

- [2024/05] We added Bing Search support in rm.py. Test STORM with GPT-4o: the article generation part of our demo is now configured to use the GPT-4o model.

- [2024/04] We released a refactored version of the STORM codebase! We define an interface for the STORM pipeline and reimplement STORM-wiki (check out src/storm_wiki) to demonstrate how to instantiate the pipeline. We provide an API to support customization of different language models and retrieval/search integration.

Overview (Try STORM now!)

![STORM overview](https://raw.githubusercontent.com/stanford-oval/storm/main/assets/overview.svg)

STORM is an LLM system that writes Wikipedia-like articles from scratch based on Internet search. Co-STORM further extends it by enabling humans to collaborate with the LLM system, supporting more aligned and preferred information seeking and knowledge curation. While the system cannot produce publication-ready articles, which often require a significant number of edits, experienced Wikipedia editors have found it helpful in their pre-writing stage.

More than 70,000 people have tried our live research preview. Try it out to see how STORM can help your knowledge exploration journey and please provide feedback to help us improve the system 🙏!

How STORM & Co-STORM works

STORM

STORM breaks down generating long articles with citations into two steps:

- Pre-writing stage: The system conducts Internet-based research to collect references and generates an outline.

- Writing stage: The system uses the outline and references to generate the full-length article with citations.
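The two stages above can be sketched as two functions: one gathers references and drafts an outline, the other writes each outline section and appends a numbered source list. This is a minimal, hypothetical sketch: the `Search` and `LM` callables, the prompts, and the toy stand-ins are assumptions so the example runs offline, not STORM's API (the real pipeline is driven through `STORMWikiRunner`).

```python
from typing import Callable

# Hypothetical interfaces, not STORM's API:
# a search function returning (url, snippet) pairs, and an LM mapping a prompt to text.
Search = Callable[[str], list[tuple[str, str]]]
LM = Callable[[str], str]


def pre_writing(topic: str, search: Search, lm: LM) -> tuple[list[str], dict[str, str]]:
    """Stage 1: collect references from search, then ask the LM for an outline."""
    references = dict(search(topic))  # url -> snippet
    prompt = f"Outline for '{topic}' based on: " + " | ".join(references.values())
    outline = [line.strip("# ").strip() for line in lm(prompt).splitlines() if line.strip()]
    return outline, references


def writing(topic: str, outline: list[str], references: dict[str, str], lm: LM) -> str:
    """Stage 2: write each outline section, citing references by number, then list sources."""
    numbered = " ".join(f"[{i}] {s}" for i, s in enumerate(references.values(), start=1))
    sections = [
        f"## {heading}\n" + lm(f"Write section '{heading}' of '{topic}' citing: {numbered}")
        for heading in outline
    ]
    sources = "\n".join(f"[{i}] {url}" for i, url in enumerate(references, start=1))
    return "\n\n".join(sections + ["## References\n" + sources])


# Toy stand-ins so the sketch runs offline.
def fake_search(query: str) -> list[tuple[str, str]]:
    return [("https://example.org/a", f"{query} fact A"), ("https://example.org/b", f"{query} fact B")]


def fake_lm(prompt: str) -> str:
    return "# History\n# Design" if prompt.startswith("Outline") else "Placeholder text [1]."


outline, refs = pre_writing("STORM", fake_search, fake_lm)
article = writing("STORM", outline, refs, fake_lm)
print(outline)                   # ['History', 'Design']
print(article.splitlines()[-1])  # [2] https://example.org/b
```

Splitting the work this way means the outline is fixed before any prose is written, so every section is generated against the same shared pool of references.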

STORM identifies the core of automating the research process as automatically coming up with good questions to ask. Directly prompting the language model to ask questions does not work well. To improve the depth and breadth of the questions, STORM adopts two strategies:

- Perspective-Guided Question Asking: Given the input topic, STORM discovers different perspectives by surveying existing articles from similar topics and uses them to control the question-asking process.

- Simulated Conversation: STORM simulates a conversation between a Wikipedia writer and a topic expert grounded in Internet sources to enable the language model to update its understanding of the topic and ask follow-up questions.
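The two question-asking strategies can be illustrated with a small sketch: perspectives are proposed from the section headings of related articles, and each perspective then drives a writer/expert conversation in which the expert answers only from retrieved snippets. Every function name, prompt, and callable signature here is hypothetical; STORM's own implementation of these steps is built with dspy and is not reproduced here.

```python
from typing import Callable, Iterator

LM = Callable[[str], str]
Search = Callable[[str], list[str]]


def discover_perspectives(topic: str, related_tocs: list[list[str]], lm: LM) -> list[str]:
    """Survey section headings of related articles and ask the LM for distinct editor perspectives."""
    headings = sorted({h for toc in related_tocs for h in toc})
    reply = lm(f"List perspectives for researching '{topic}'; related sections: {', '.join(headings)}")
    return [p.strip() for p in reply.split(";") if p.strip()]


def simulate_conversation(topic: str, perspective: str, writer: LM, expert: LM,
                          search: Search, max_turns: int = 3) -> list[tuple[str, str]]:
    """Writer asks from one perspective; expert answers grounded only in retrieved snippets."""
    history: list[tuple[str, str]] = []
    for _ in range(max_turns):
        question = writer(f"As a {perspective}, ask about {topic}. History: {history}")
        if not question:  # writer has nothing left to ask
            break
        snippets = search(question)
        answer = expert(f"Answer '{question}' using only: {snippets}")
        history.append((question, answer))
    return history


def scripted_writer(questions: list[str]) -> LM:
    """Toy writer that replays fixed questions, then returns '' to end the conversation."""
    remaining: Iterator[str] = iter(questions)
    return lambda prompt: next(remaining, "")


perspectives = discover_perspectives(
    "STORM", [["History", "Architecture"], ["Architecture", "Evaluation"]],
    lambda prompt: "historian; engineer",
)
conversations = {
    p: simulate_conversation(
        "STORM", p, scripted_writer([f"{p} q1", f"{p} q2"]),
        expert=lambda prompt: "grounded answer", search=lambda q: [f"snippet for {q}"],
    )
    for p in perspectives
}
print(perspectives)                    # ['historian', 'engineer']
print(len(conversations["engineer"]))  # 2
```

Passing the conversation history back to the writer on every turn is what lets it ask follow-up questions rather than repeating the same opening question.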

Co-STORM

Co-STORM proposes a collaborative discourse protocol that implements a turn management policy to support smooth collaboration among:

- Co-STORM LLM experts: This type of agent generates answers grounded in external knowledge sources and/or raises follow-up questions based on the discourse history.

- Moderator: This agent generates thought-provoking questions inspired by information discovered by the retriever but not directly used in previous turns. Question generation can also be grounded!

- Human user: The human user will take the initiative to either (1) observe the discourse to gain deeper understanding of the topic, or (2) actively engage in the conversation by injecting utterances to steer the discussion focus.
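The README does not spell out the turn management policy, so the following is only a hypothetical rule-based illustration of how the three roles could share the floor: a human injection always wins; after a run of consecutive expert turns the moderator steps in if some retrieved information has not been used yet; otherwise an expert speaks. The `Discourse` record, the `moderator_every` threshold, and the question template are all assumptions.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Discourse:
    turns: list[tuple[str, str]] = field(default_factory=list)  # (role, utterance)
    retrieved: list[str] = field(default_factory=list)          # everything the retriever found
    used: set[str] = field(default_factory=set)                 # info already surfaced in a turn


def unused_info(d: Discourse) -> list[str]:
    return [x for x in d.retrieved if x not in d.used]


def next_speaker(d: Discourse, human_utterance: Optional[str], moderator_every: int = 3) -> str:
    """Hypothetical policy: a human injection wins; after `moderator_every` consecutive expert
    turns, the moderator steps in if unused retrieved info exists; otherwise an expert speaks."""
    if human_utterance:
        return "human"
    expert_streak = 0
    for role, _ in reversed(d.turns):
        if role != "expert":
            break
        expert_streak += 1
    if expert_streak >= moderator_every and unused_info(d):
        return "moderator"
    return "expert"


def moderator_question(d: Discourse) -> str:
    """Ground a new question in retrieved info that no earlier turn has used."""
    pending = unused_info(d)
    if not pending:
        return "Which aspect of the topic should we explore next?"
    d.used.add(pending[0])
    return f"How does this finding change the picture: {pending[0]}?"


d = Discourse(
    turns=[("expert", "a"), ("expert", "b"), ("expert", "c")],
    retrieved=["fact A", "fact B"],
    used={"fact A"},
)
print(next_speaker(d, "Focus on costs"))  # human
speaker = next_speaker(d, None)
print(speaker)  # moderator
question = moderator_question(d)
d.turns.append(("moderator", question))
print(question)  # How does this finding change the picture: fact B?
print(next_speaker(d, None))  # expert
```

Tracking which retrieved items have already been used is what lets the moderator raise questions "inspired by information discovered by the retriever but not directly used in previous turns."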

Co-STORM also maintains a dynamically updated mind map, which organizes collected information into a hierarchical concept structure, aiming to build a shared conceptual space between the human user and the system. The mind map has been shown to help reduce the mental load when the discourse becomes long and in-depth.
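A hierarchical concept structure like the mind map can be sketched as a simple tree whose nodes hold collected snippets. In this hypothetical sketch the caller supplies the concept path for each snippet; in Co-STORM that placement decision would be made by the system, and the `ConceptNode` class and its methods are illustrative names, not part of knowledge-storm.

```python
from dataclasses import dataclass, field


@dataclass
class ConceptNode:
    name: str
    info: list[str] = field(default_factory=list)
    children: dict[str, "ConceptNode"] = field(default_factory=dict)

    def insert(self, path: list[str], snippet: str) -> None:
        """Walk (creating as needed) the concept path and attach the snippet at its end."""
        node = self
        for concept in path:
            node = node.children.setdefault(concept, ConceptNode(concept))
        node.info.append(snippet)

    def render(self, depth: int = 0) -> list[str]:
        """Indented outline view, one line per concept with its snippet count."""
        lines = [f"{'  ' * depth}{self.name} ({len(self.info)})"]
        for child in self.children.values():
            lines.extend(child.render(depth + 1))
        return lines


root = ConceptNode("STORM")
root.insert(["Method", "Pre-writing"], "Collects references and drafts an outline.")
root.insert(["Method", "Writing"], "Generates the full article with citations.")
root.insert(["Method", "Pre-writing"], "Asks perspective-guided questions.")
print("\n".join(root.render()))
# STORM (0)
#   Method (0)
#     Pre-writing (2)
#     Writing (1)
```

Rendering the tree as an indented outline gives the human a compact summary of what has been collected so far, which is the kind of view that keeps long discourses manageable.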

Both STORM and Co-STORM are implemented in a highly modular way using dspy.

Installation

To install the knowledge storm library, use pip install knowledge-storm.

You can also install from source, which allows you to modify the behavior of the STORM engine directly.

Clone the git repository:

```shell
git clone https://github.com/stanford-oval/storm.git
cd storm
```

Install the required packages:

```shell
conda create -n storm python=3.11
conda activate storm
pip install -r requirements.txt
```

API

Currently, our package supports:

- Language model components: all language models supported by litellm (see the litellm documentation for the full list)

- Embedding model components: all embedding models supported by litellm (see the litellm documentation for the full list)

- Retrieval module components: YouRM, BingSearch, VectorRM, SerperRM, BraveRM, SearXNG, DuckDuckGoSearchRM, TavilySearchRM, GoogleSearch, and AzureAISearch

🌟 PRs for integrating more search engines/retrievers into knowledge_storm/rm.py are highly appreciated!
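To give a rough idea of what a retrieval module does, here is a hypothetical in-memory retriever that ranks documents by query-term overlap, skips excluded URLs, and returns the top `k` results. The `KeywordRM` and `Doc` names and the result dictionary shape are assumptions for illustration; a real contribution should follow the base class and method signature used by the existing modules in knowledge_storm/rm.py.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Doc:
    url: str
    title: str
    text: str


class KeywordRM:
    """Hypothetical retriever over an in-memory corpus, ranked by query-term overlap."""

    def __init__(self, docs: list[Doc], k: int = 3):
        self.docs = docs
        self.k = k  # maximum number of results to return

    def search(self, query: str, exclude_urls: Optional[list[str]] = None) -> list[dict]:
        excluded = set(exclude_urls or [])
        terms = set(query.lower().split())
        scored = []
        for doc in self.docs:
            if doc.url in excluded:
                continue
            overlap = len(terms & set(doc.text.lower().split()))
            if overlap:
                scored.append((overlap, doc))
        scored.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep corpus order
        # Result shape is an assumption for illustration, not knowledge-storm's contract.
        return [{"url": d.url, "title": d.title, "snippets": [d.text]} for _, d in scored[: self.k]]


rm = KeywordRM([
    Doc("https://example.org/storm", "STORM", "storm writes wikipedia style articles with citations"),
    Doc("https://example.org/costorm", "Co-STORM", "co-storm adds human ai collaboration"),
    Doc("https://example.org/other", "Other", "unrelated page about weather"),
], k=2)
results = rm.search("wikipedia articles with citations")
print([r["url"] for r in results])  # ['https://example.org/storm']
```

Swapping this toy scorer for a call to a real search engine or vector store is the part a new integration would actually change.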

Both STORM and Co-STORM work in the information curation layer; you need to set up the information retrieval module and the language model module to create their respective Runner classes.

STORM

The STORM knowledge curation engine is defined as a simple Python class, STORMWikiRunner. Here is an example using the You.com search engine and OpenAI models.

```python
import os

from knowledge_storm import STORMWikiRunnerArguments, STORMWikiRunner, STORMWikiLMConfigs
from knowledge_storm.lm import LitellmModel
from knowledge_storm.rm import YouRM

lm_configs = STORMWikiLMConfigs()
openai_kwargs = {
    'api_key': os.getenv("OPENAI_API_KEY"),
    'temperature': 1.0,
    'top_p': 0.9,
}
# STORM is an LM system, so different components can be powered by different models to balance cost and quality.
# As a good practice, choose a cheaper/faster model for `conv_simulator_lm`, which is used to split queries and synthesize answers in the conversation.
# Choose a more powerful model for `article_gen_lm` to generate verifiable text with citations.
gpt_35 = LitellmModel(model='gpt-3.5-turbo', max_tokens=500, **openai_kwargs)
gpt_4 = LitellmModel(model='gpt-4o', max_tokens=3000, **openai_kwargs)
lm_configs.set_conv_simulator_lm(gpt_35)
lm_configs.set_question_asker_lm(gpt_35)
lm_configs.set_outline_gen_lm(gpt_4)
lm_configs.set_article_gen_lm(gpt_4)
lm_configs.set_article_polish_lm(gpt_4)
# Check out the STORMWikiRunnerArguments class for more configurations.
engine_args = STORMWikiRunnerArguments(...)
rm = YouRM(ydc_api_key=os.getenv('YDC_API_KEY'), k=engine_args.search_top_k)
runner = STORMWikiRunner(engine_args, lm_configs, rm)
```

The STORMWikiRunner instance can be invoked with the simple run method:

topic = input('Topic: ')

runner.run(

topic=topic,

do_research=True,