Near-viral reception. 'DeepSeek moment' comparison signals major disruption potential.

Tongyi DeepResearch

activeFirst fully open-source deep research agent matching closed-source leaders on benchmarks. HLE 32.9 (exceeds OpenAI's 26.6), 30.5B params / 3.3B active (MoE), runs locally. 18.5K stars. Apache 2.0.

Where it wins

HLE 32.9 — exceeds OpenAI's 26.6 on same benchmark

GAIA 70.9, BrowseComp 43.4, FRAMES 90.6 — competitive across all benchmarks

30.5B params, only 3.3B active per token (MoE) — runs on consumer hardware

18,483 stars in ~6 months — rapid growth

365 HN pts / 153 comments — near-viral reception

Apache 2.0 — full commercial use allowed

Deployed in Alibaba's Gaode Maps — production-validated

Where to be skeptical

No PyPI/npm download stats — adoption beyond GitHub stars unclear

Newer project (~6 months) — less battle-tested than GPT Researcher

Alibaba corporate backing may concern some users

No MCP server available

Editorial verdict

#5 in research. Open-source disruptor — HLE 32.9 exceeds OpenAI's 26.6 on the same benchmark, runs on consumer hardware via MoE. Called 'the DeepSeek moment for AI agents' by VentureBeat. Ranked below GPT Researcher due to less proven adoption data.

Related

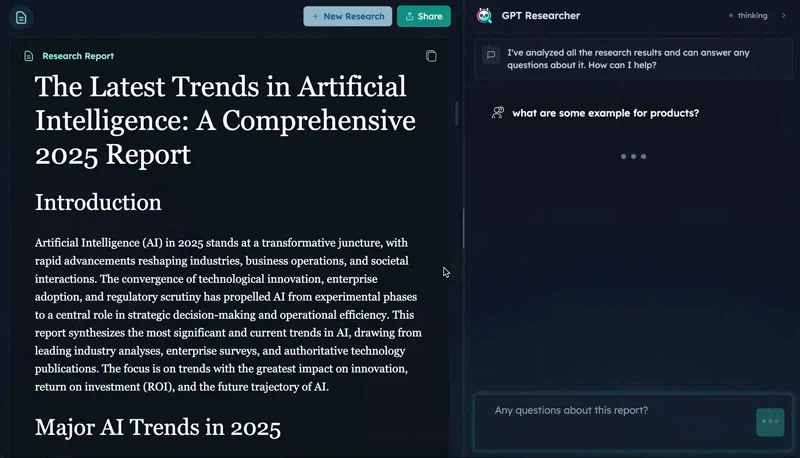

GPT Researcher

89Open-source autonomous deep research agent. CMU DeepResearchGym #1 on citation quality, report quality, info coverage. 25.8K stars, 15.9K weekly PyPI downloads. Apache 2.0.

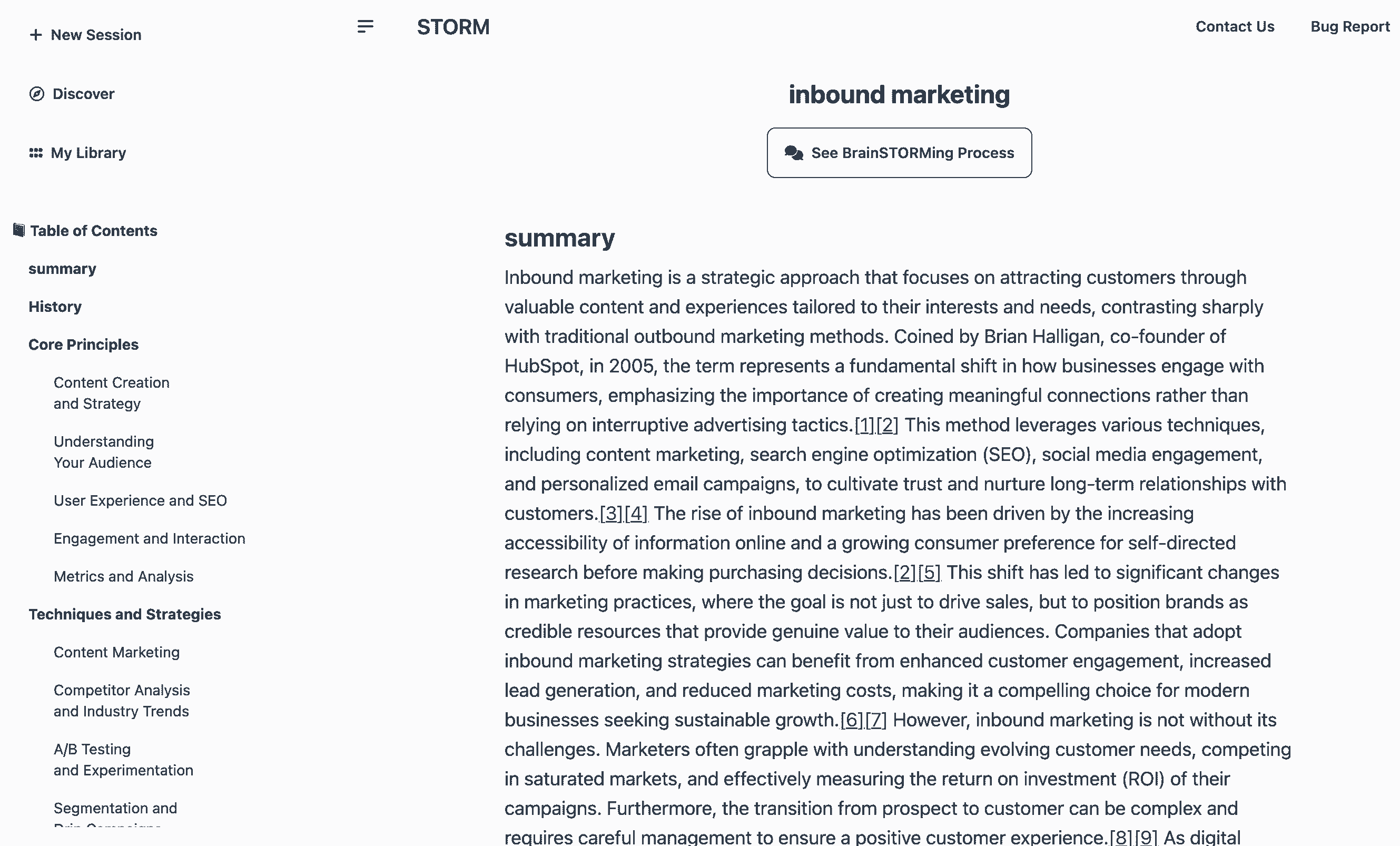

STORM (Stanford)

66Stanford's LLM-powered knowledge curation system. Generates Wikipedia-style articles with citations in ~3 min. 28K stars, 84.8% citation recall / 85.2% precision (peer-reviewed). MIT license.

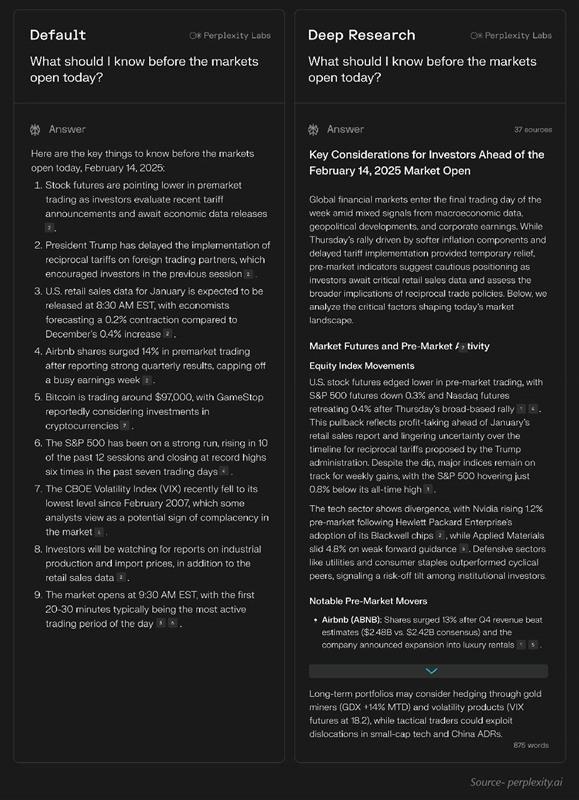

Perplexity Deep Research

43Research-first search engine with inline citations. Fastest deep research (15-30s), 93.9% SimpleQA accuracy, 50+ sources per report. $20/mo Pro.

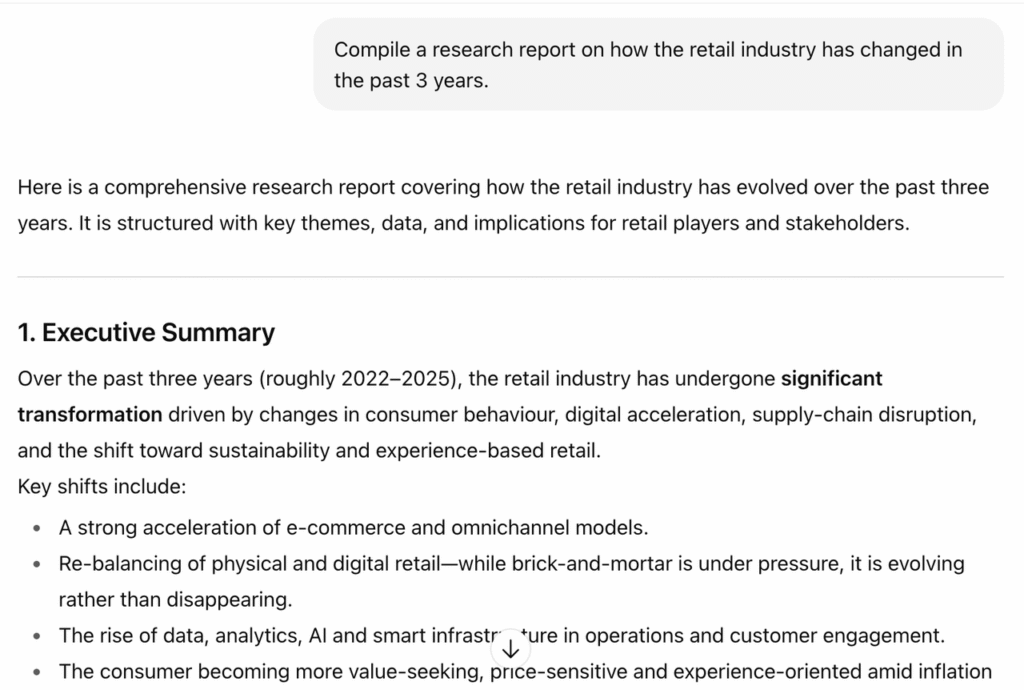

OpenAI Deep Research

42Agentic research mode powered by o3/o4-mini. 26.6% HLE (highest of any system), 72.57% GAIA, MCP support (Feb 2026). Slower (3-15 min) but deepest reasoning.

Public evidence

18K stars in ~6 months since Sep 2025 launch. Strong organic growth.

Matches or exceeds OpenAI o3 on multiple benchmarks while being fully open-source.

Efficient MoE architecture enables local deployment without enterprise GPU infrastructure.

Production deployment at scale validates beyond benchmarks.

Raw GitHub source

GitHub README peek

Constrained peek so you can sanity-check the source material without leaving the site.

👏 Welcome to try Tongyi DeepResearch via our <img src="https://raw.githubusercontent.com/Alibaba-NLP/DeepResearch/main/assets/tongyi.png" width="14px" style="display:inline;"> Modelscope online demo or 🤗 Huggingface online demo or <img src="https://raw.githubusercontent.com/Alibaba-NLP/DeepResearch/main/WebAgent/assets/aliyun.png" width="14px" style="display:inline;"> bailian service!

[!NOTE] This demo is for quick exploration only. Response times may vary or fail intermittently due to model latency and tool QPS limits. For a stable experience we recommend local deployment; for a production-ready service, visit <img src="https://raw.githubusercontent.com/Alibaba-NLP/DeepResearch/main/WebAgent/assets/aliyun.png" width="14px" style="display:inline;"> bailian and follow the guided setup.

Introduction

We present <img src="https://raw.githubusercontent.com/Alibaba-NLP/DeepResearch/main/assets/tongyi.png" width="14px" style="display:inline;"> Tongyi DeepResearch, an agentic large language model featuring 30.5 billion total parameters, with only 3.3 billion activated per token. Developed by Tongyi Lab, the model is specifically designed for long-horizon, deep information-seeking tasks. Tongyi DeepResearch demonstrates state-of-the-art performance across a range of agentic search benchmarks, including Humanity's Last Exam, BrowseComp, BrowseComp-ZH, WebWalkerQA,xbench-DeepSearch, FRAMES and SimpleQA.

Tongyi DeepResearch builds upon our previous work on the <img src="https://raw.githubusercontent.com/Alibaba-NLP/DeepResearch/main/assets/tongyi.png" width="14px" style="display:inline;"> WebAgent project.

More details can be found in our 📰 <a href="https://tongyi-agent.github.io/blog/introducing-tongyi-deep-research/">Tech Blog</a>.

<p align="center"> <img width="100%" src="https://raw.githubusercontent.com/Alibaba-NLP/DeepResearch/main/assets/performance.png"> </p>Features

- ⚙️ Fully automated synthetic data generation pipeline: We design a highly scalable data synthesis pipeline, which is fully automatic and empowers agentic pre-training, supervised fine-tuning, and reinforcement learning.

- 🔄 Large-scale continual pre-training on agentic data: Leveraging diverse, high-quality agentic interaction data to extend model capabilities, maintain freshness, and strengthen reasoning performance.

- 🔁 End-to-end reinforcement learning: We employ a strictly on-policy RL approach based on a customized Group Relative Policy Optimization framework, with token-level policy gradients, leave-one-out advantage estimation, and selective filtering of negative samples to stabilize training in a non‑stationary environment.

- 🤖 Agent Inference Paradigm Compatibility: At inference, Tongyi DeepResearch is compatible with two inference paradigms: ReAct, for rigorously evaluating the model's core intrinsic abilities, and an IterResearch-based 'Heavy' mode, which uses a test-time scaling strategy to unlock the model's maximum performance ceiling.

Model Download

You can directly download the model by following the links below.

| Model | Download Links | Model Size | Context Length |

|---|---|---|---|

| Tongyi-DeepResearch-30B-A3B | 🤗 HuggingFace<br> 🤖 ModelScope | 30B-A3B | 128K |

News

[2025/09/20]🚀 Tongyi-DeepResearch-30B-A3B is now on OpenRouter! Follow the Quick-start guide.

[2025/09/17]🔥 We have released Tongyi-DeepResearch-30B-A3B.

Deep Research Benchmark Results

<p align="center"> <img width="100%" src="https://raw.githubusercontent.com/Alibaba-NLP/DeepResearch/main/assets/benchmark.png"> </p>Quick Start

This guide provides instructions for setting up the environment and running inference scripts located in the inference folder.

1. Environment Setup

- Recommended Python version: 3.10.0 (using other versions may cause dependency issues).

- It is strongly advised to create an isolated environment using

condaorvirtualenv.

# Example with Conda

conda create -n react_infer_env python=3.10.0

conda activate react_infer_env

2. Installation

Install the required dependencies:

pip install -r requirements.txt

3. Environment Configuration and Prepare Evaluation Data

Environment Configuration

Configure your API keys and settings by copying the example environment file:

# Copy the example environment file

cp .env.example .env

Edit the .env file and provide your actual API keys and configuration values:

- SERPER_KEY_ID: Get your key from Serper.dev for web search and Google Scholar

- JINA_API_KEYS: Get your key from Jina.ai for web page reading

- API_KEY/API_BASE: OpenAI-compatible API for page summarization from OpenAI

- DASHSCOPE_API_KEY: Get your key from Dashscope for file parsing

- SANDBOX_FUSION_ENDPOINT: Python interpreter sandbox endpoints (see SandboxFusion)

- MODEL_PATH: Path to your model weights

- DATASET: Name of your evaluation dataset

- OUTPUT_PATH: Directory for saving results

Note: The

.envfile is gitignored, so your secrets will not be committed to the repository.

Prepare Evaluation Data

The system supports two input file formats: JSON and JSONL.

Supported File Formats:

Option 1: JSONL Format (recommended)

- Create your data file with

.jsonlextension (e.g.,my_questions.jsonl) - Each line must be a valid JSON object with

questionandanswerkeys:{"question": "What is the capital of France?", "answer": "Paris"} {"question": "Explain quantum computing", "answer": ""}

Option 2: JSON Format

- Create your data file with

.jsonextension (e.g.,my_questions.json) - File must contain a JSON array of objects, each with

questionandanswerkeys:[ { "question": "What is the capital of France?", "answer": "Paris" }, { "question": "Explain quantum computing", "answer": "" } ]

Important Note: The answer field contains the ground truth/reference answer used for evaluation. The system generates its own responses to the questions, and these reference answers are used to automatically judge the quality of the generated responses during benchmark evaluation.

File References for Document Processing:

- If using the file parser tool, prepend the filename to the

questionfield - Place referenced files in

eval_data/file_corpus/directory - Example:

{"question": "(Uploaded 1 file: ['report.pdf'])\n\nWhat are the key findings?", "answer": "..."}